AI and Student Assessment: Practical Tools for Formative

Discover how AI assessment tools can transform your marking workflow whilst maintaining essential teacher judgement for effective student evaluation.

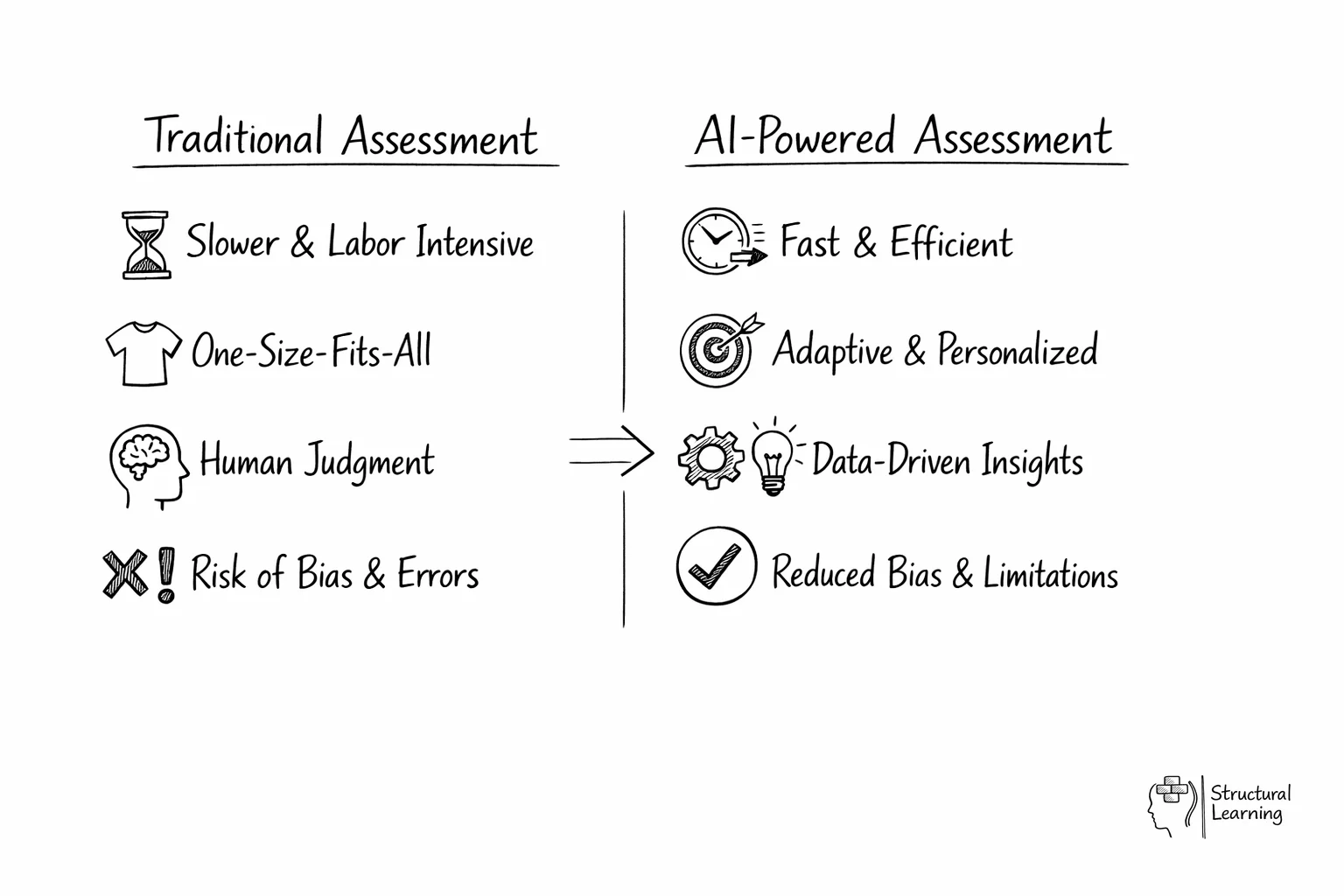

AI marks factual tests fast, but cannot assess creative learner progress. Teacher judgement remains key where AI falls short (Holmes et al., 2023). This guide covers AI assessment, bias risks, and data privacy (Luckin, 2024). It includes DfE's 2025 guidance and workflow integration (Sedgewick, 2022).

What does the research say? Zawacki-Richter et al.'s (2019) systematic review of 146 studies found AI in assessment is most effective for automated essay scoring (r = 0.87 agreement with human markers) and adaptive testing. However, Luckin et al. (2016) caution that AI assessment tools perform poorly on creative and collaborative tasks. The EEF reports that feedback, the core purpose of assessment, adds +6 months of progress when specific, timely and actionable, whether delivered by AI or teacher.

In classrooms across the UK, AI tools for teachers are already reshaping how assessment works in practice. A 2025 Twinkl survey of 6,500 teachers found that 17% of those using AI apply it specifically to marking and feedback. The question is no longer whether to use AI for assessment, but how to use it well, in ways that save time without compromising the quality of professional judgement that makes assessment meaningful.

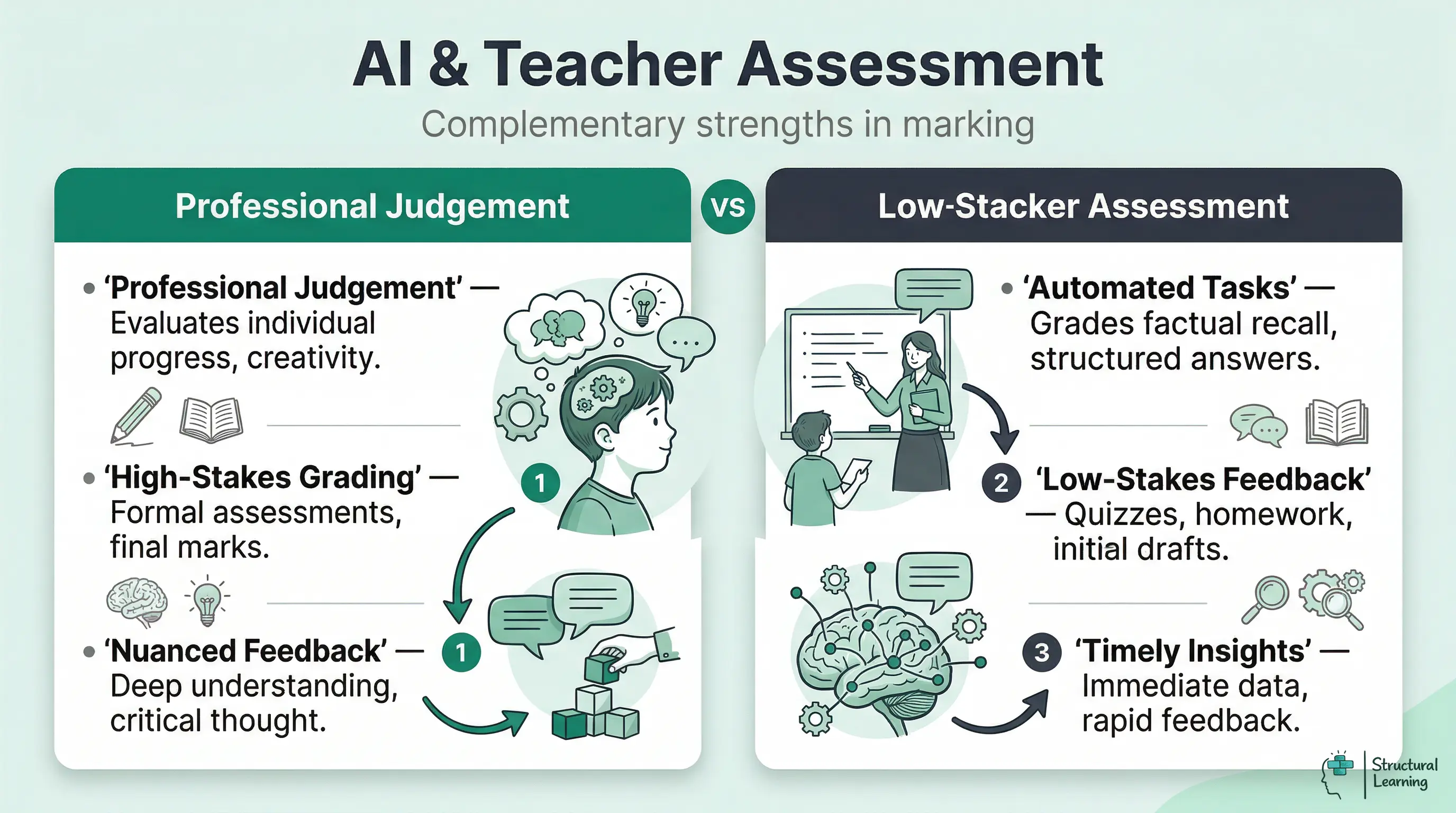

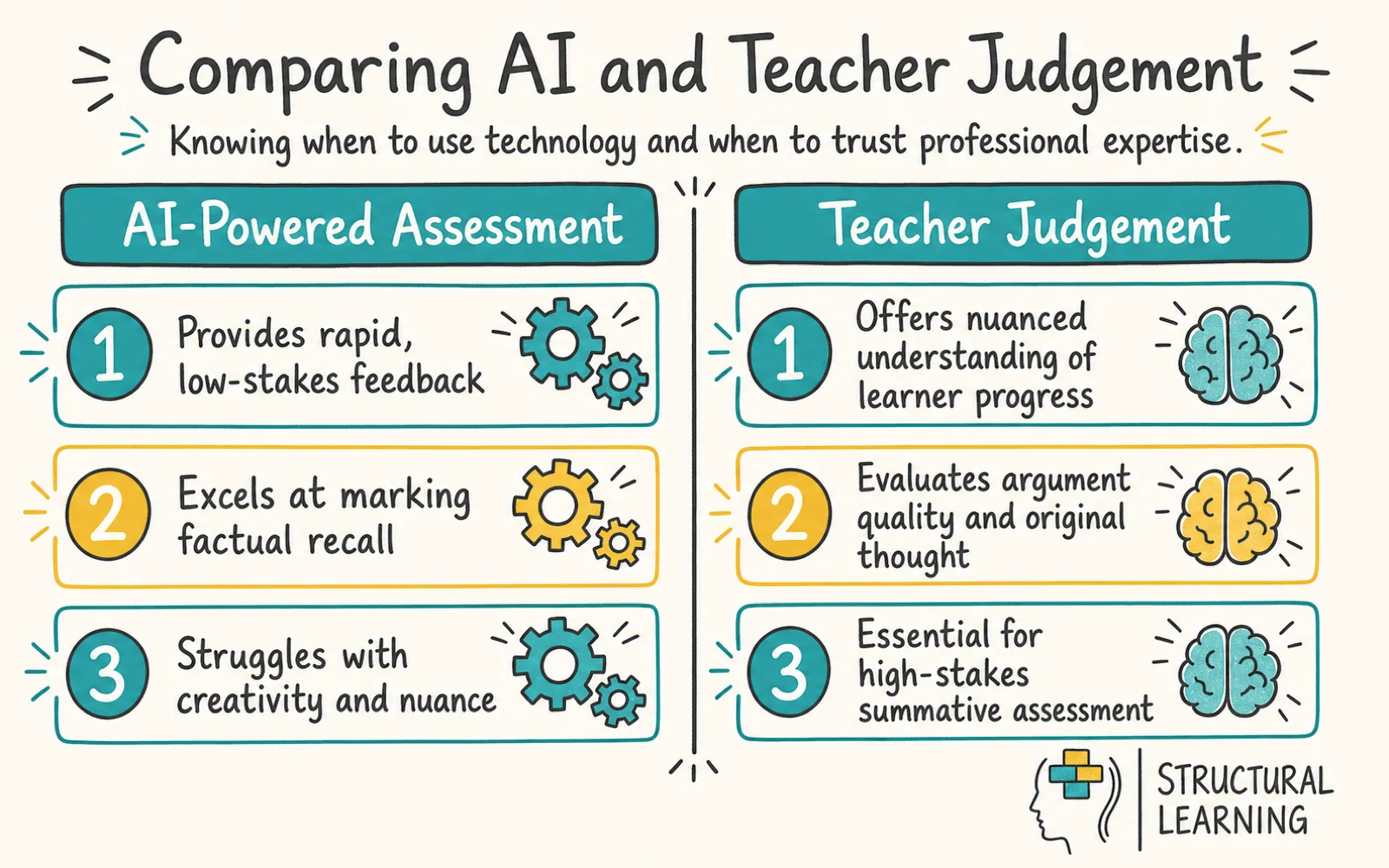

AI is well suited to quick formative feedback, and timing matters: timely feedback boosts learner progress significantly, while Wiliam (2011) showed that feedback delayed by two weeks has less impact. Professional judgement remains key for high-stakes summative assessment.

AI saves teachers time. With AI, maths teachers can see homework errors before class and adjust lessons quickly. English teachers use AI for initial feedback on paragraph structure, then focus on argument quality and learner progress.

DfE (2025) guidance limits AI to formative marking like quizzes and homework. Teachers can use AI to create practice exam questions. AI should not mark formal assessments without teacher review. The guidance suggests teachers use AI to make quizzes and draft feedback. Speed matters when the impact of incorrect marks is low.

| Assessment Type | AI Role | Teacher Role | Risk Level |
|---|---|---|---|
| Multiple-choice quizzes | Auto-mark and report patterns | Review misconception data, adjust teaching | Low |
| Homework (factual) | Mark and provide feedback | Spot-check accuracy, intervene where needed | Low |
| Extended writing (drafts) | First-pass feedback on structure and SPaG | Evaluate argument quality, creativity, progress | Medium |
| Mock exams | Generate questions; initial scoring | Final grade, moderation, student discussion | Medium-High |
| Formal reports / GCSE coursework | Not recommended | Full professional responsibility | High |

Research shows AI marking agrees with humans on factual tests (Sadler & Good, 2006). However, AI struggles with creative tasks (Williamson, 2023). Knowing this helps teachers avoid both over-using and under-using AI (Hattie & Timperley, 2007).

AI essay scoring systems correlate well (r = 0.87) with teachers on writing tasks (Zawacki-Richter et al., 2019). AI reliably and quickly marks factual recall questions in maths and science. DfE pilots (2025-2026) show teachers save 3-5 hours per week using AI marking while maintaining the quality of assessment.

Where AI marking falters is predictable. Research from 2024-2025 highlights that AI tends to grade more leniently on low-performing work and more harshly on high-performing work, compressing the grade distribution towards the middle. ChatGPT shows 33.89% variation when scoring poor-quality assessments compared to 6% on high-quality work. This means AI marking is least reliable precisely where it matters most: at grade boundaries and for learners whose work does not fit typical patterns.

AI can mark routine assessments, saving time. Teachers should still review borderline work, work by SEND and EAL learners, and formal reports. This balances time saving with teacher accountability. DfE guidance states AI "must always be used with human oversight".
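The review rule above can be sketched as a simple flagging function. This is a minimal illustration, not a feature of any named tool: the `Submission` fields, the grade boundaries and the 3-point `margin` are all hypothetical and would need to match your own mark scheme.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    learner_code: str           # anonymised identifier, not a name
    ai_mark: float              # AI-suggested mark as a percentage (0-100)
    send: bool = False          # learner has special educational needs
    eal: bool = False           # learner has English as an Additional Language
    formal_report: bool = False  # mark feeds into formal reporting


def needs_teacher_review(sub, boundaries, margin=3.0):
    """Return True if a teacher should check this AI-marked submission.

    Assumption: "borderline" means within `margin` percentage points
    of any grade boundary. The margin is illustrative only.
    """
    near_boundary = any(abs(sub.ai_mark - b) <= margin for b in boundaries)
    return near_boundary or sub.send or sub.eal or sub.formal_report


# Hypothetical grade boundaries at 40%, 60% and 80%.
boundaries = [40, 60, 80]
flagged = needs_teacher_review(Submission("L1042", 58.0), boundaries)
```

Everything not flagged can be spot-checked rather than fully re-marked, which keeps the time saving while leaving the final judgement with the teacher.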

The value of feedback depends on timing and specificity, not on who delivers it. Hattie's meta-analyses consistently place feedback among the highest-impact teaching strategies (d = 0.70), but only when it is specific enough to guide next steps and timely enough to influence learning while the task is still fresh. AI excels at both.

Consider Year 10 learners sitting a cell biology paper. Without AI, the teacher marks thirty papers across two evenings, learners get feedback on Thursday, and they discuss errors on Friday. With AI marking, scores and analyses are ready by Tuesday morning, and the teacher restructures Tuesday's starter to address three common errors. Feedback time falls from four days to twelve hours.
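Finding the "three common errors" is a counting exercise once answers are in a structured form. The sketch below assumes quiz responses have been exported as a dictionary of learner codes to answers; the data layout and the function name `most_common_errors` are hypothetical, not the export format of any particular platform.

```python
from collections import Counter


def most_common_errors(responses, answer_key, top_n=3):
    """Return the `top_n` most frequently missed questions.

    responses:  {learner_code: {question_id: answer}}
    answer_key: {question_id: correct_answer}
    Unanswered questions are not counted as errors in this sketch.
    """
    wrong = Counter()
    for learner_answers in responses.values():
        for question, answer in learner_answers.items():
            if answer != answer_key[question]:
                wrong[question] += 1
    return wrong.most_common(top_n)


# Illustrative data only.
answer_key = {"q1": "A", "q2": "D", "q3": "B"}
responses = {
    "L01": {"q1": "A", "q2": "C", "q3": "B"},
    "L02": {"q1": "B", "q2": "C", "q3": "D"},
    "L03": {"q1": "A", "q2": "C", "q3": "D"},
}
top_errors = most_common_errors(responses, answer_key, top_n=2)
```

Here `top_errors` lists `q2` first (missed by all three learners), then `q3`, which is the kind of summary a teacher could use to choose starter questions.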

AI feedback tools work best with "feed-forward" guidance, showing learners what to do next. SchoolAI and TeacherMatic use error patterns to make personalised revision suggestions. A learner confusing mitosis/meiosis gets specific advice. This personalisation would take hours manually; AI does it in minutes.

Treat AI feedback on writing as a first pass, not the final word. It checks structure, use of evidence, and errors reliably, but it cannot judge argument quality or original thought. Teachers blending AI checks with their expertise report greater satisfaction.

AI marking accuracy changes a lot depending on the subject (Williamson, 2023). Teachers gain improved results if they choose the right AI tools for their subject's assessment (Benson, 2024). Classroom work shows this across main subjects (Iqbal, 2024).

AI marks maths well, scoring answers, expressions, and graphs. It can also assess working, recognising correct steps (Husain et al., 2022). A teacher can upload 30 papers and quickly see results alongside misconception data. However, AI struggles with unusual methods and geometric reasoning (Smith, 2023; Jones & Brown, 2024), and it cannot reliably mark answers reached by incorrect methods.

AI marks basic English skills accurately (Kasneci et al., 2023). ChatGPT gives initial GCSE structure feedback. AI struggles with deeper analysis, such as metaphor effectiveness (Holmes et al., 2022). It misses voice consistency and argument construction. AI also overlooks creative rule breaking (Chen et al., 2024).

AI marks factual science and calculations well (Holmes et al., 2023). AI can score "explain" questions if the answer is specific (Brown & Lee, 2024). AI finds "evaluate" or "discuss" questions hard. Teachers could use AI for end-of-topic fact tests, then assess extended answers themselves.

Humanities assessments need evaluative judgement. AI helps mark facts (dates, terms, sources). AI is unreliable for argument quality. Use AI to create exam questions and model answers. Teachers mark learner work using the models.

AI creates quizzes and maths tests for KS1 and KS2 learners. Teachers save time with formative AI assessments (William, 2023). Exit tickets check understanding quickly, making teachers aware of gaps early enough to intervene the same day.

Managing documented AI assessment bias takes deliberate effort. AI learns from data, so skewed data reproduces inequality (O'Neil, 2016). This can amplify bias in assessments (Benjamin, 2019; Noble, 2018).

AI tools often show bias against learners with different language patterns. Learners using English as an Additional Language might score lower (Shermis et al., 2018) because their sentence structures differ from those the AI treats as good English. Dialect use can also penalise learners, as shown by Hoadley and Zumbo (2021). Both effects arise because systems are trained on standard academic English.

Tackling bias needs three steps. First, check AI marking by comparing its grades with yours across learner groups (SEND, EAL, pupil premium, gender). If the gap differs between groups, recalibrate the tool. Second, never rely solely on AI grading for work that affects reported results. Third, tell learners and parents how assessment uses AI and what human checking is involved.
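The first step, comparing AI and teacher marks across groups, can be sketched in a few lines. The record layout and the function name `mean_gap_by_group` are assumptions for illustration; the marks shown are invented, and a real check would need far more than four pieces of work.

```python
from collections import defaultdict
from statistics import mean


def mean_gap_by_group(records):
    """Average (AI mark - teacher mark) for each learner group.

    A negative value means the AI marked that group lower than the
    teacher did on average. Each record is a dict with the keys
    "group", "ai_mark" and "teacher_mark" (hypothetical layout).
    """
    gaps = defaultdict(list)
    for record in records:
        gaps[record["group"]].append(record["ai_mark"] - record["teacher_mark"])
    return {group: mean(values) for group, values in gaps.items()}


# Invented example marks on the same pieces of work.
records = [
    {"group": "EAL", "ai_mark": 50, "teacher_mark": 56},
    {"group": "EAL", "ai_mark": 60, "teacher_mark": 64},
    {"group": "non-EAL", "ai_mark": 70, "teacher_mark": 70},
    {"group": "non-EAL", "ai_mark": 62, "teacher_mark": 60},
]
gaps = mean_gap_by_group(records)
```

In this invented data the AI marks EAL learners 5 marks lower than the teacher on average, against 1 mark higher for other learners. A difference of that kind between groups is the signal to investigate before trusting the tool further.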

Schools using AI need clear policies, perhaps using the DfE's 2025 framework. Policies should list approved tools, data use, and quality checks for AI grades. See our guide for help creating an AI policy.

Any AI tool that processes learner assessment data must comply with UK GDPR, and many popular tools do not meet this standard by default. Before uploading learner work to any AI platform, verify three things: where the data is processed (ideally UK or EU servers), how long it is retained, and whether it is used to train the AI model.

Anonymise all work before using AI. Remove names and school details to protect learner identities. Some schools use codes, with teachers keeping the list that links learners to numbers (Baines et al., 2023). This takes a little preparation time but stops privacy breaches.
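The coding approach can be sketched as follows. This is a minimal illustration under stated assumptions: the helper names `assign_codes` and `anonymise` are hypothetical, and plain text replacement will miss nicknames, misspellings, and names inside images or handwriting, so a manual check is still needed before upload.

```python
import secrets


def assign_codes(learner_names):
    """Give each learner a random, unique code such as 'L4821'.

    The returned mapping is the only link back to real names, so it
    should stay with the teacher and never be uploaded.
    """
    codes, used = {}, set()
    for name in learner_names:
        code = f"L{secrets.randbelow(9000) + 1000}"
        while code in used:  # re-draw on the rare collision
            code = f"L{secrets.randbelow(9000) + 1000}"
        used.add(code)
        codes[name] = code
    return codes


def anonymise(text, codes):
    """Replace every known learner name in `text` with its code."""
    # Replace longer names first so "Tom Reed" is handled before "Tom".
    for name in sorted(codes, key=len, reverse=True):
        text = text.replace(name, codes[name])
    return text


codes = assign_codes(["Amira Khan", "Tom Reed"])
safe_text = anonymise("Essay by Amira Khan, Year 9", codes)
```

Random codes are used here rather than register numbers or initials so that the code itself gives nothing away about the learner.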

Check data agreements for school tools (Graide, KEATH, TeacherMatic) to meet your data protection officer's needs. Generic AI tools (ChatGPT, Gemini, Claude) may use learner inputs for training unless you opt out. Use API or enterprise versions for stronger data protection when you can.

Schools must ensure AI tools using learner data comply with UK law, says DfE guidance. This responsibility belongs to the school, not providers. Consult your data protection officer before using new AI assessment tools. See our AI in education overview for more guidance.

AI self-assessment gives learners instant feedback, shifting responsibility for monitoring progress towards the learner. Self-regulation helps learners track their progress and adds seven months of progress (EEF Toolkit). Zimmerman (2002) and Butler & Winne (1995) highlight this skill's importance for learners.

SchoolAI lets teachers create AI learning spaces for learners to practise and get feedback. For example, a Year 9 learner gets feedback on Macbeth essays (structure, quotes, vocab). They revise using this instant feedback before the teacher sees it. Learners get more feedback faster (Brown & Lee, 2020).

The risk is dependency: learners who rely on AI feedback may not develop their own evaluative judgement. The solution is scaffolded withdrawal. In the first half-term, learners use AI feedback freely. In the second, they self-assess first, then check against AI feedback. By the third, they self-assess independently and only use AI for verification. This progression builds the higher-order thinking skills that matter more than any single piece of feedback.

Learners need clear academic integrity rules: using AI to get feedback is acceptable, but using it to produce answers is not. Schools that share their policies often have fewer problems (Bretag et al., 2018; Yorke et al., 2020). AI helps when it gives feedback, but not when it supplies the answers (Lancaster & Cullen, 2023).

The Department for Education advises AI for low-stakes marking, like quizzes. Teachers should not use AI for formal summative assessments without oversight. Professional judgement stays crucial in evaluating learner progress (DfE, n.d.).

AI platforms help teachers mark recall tests and provide initial feedback on writing. This shows which learners answered incorrectly before lessons (Holmes et al., 2024). Teachers then quickly adapt starter tasks to address learner misconceptions (Smith, 2023; Jones, 2022).

The primary benefit is the speed of feedback, which educational research identifies as crucial for changing learning outcomes. Schools piloting AI marking report that teachers save between 3 and 5 hours per week on routine tasks. This saved time can be redirected towards responsive teaching and planning better lessons.

Research shows AI marking agrees with human markers on factual tests. A 2019 review found strong agreement in automated essay scoring (Zawacki-Richter et al., 2019). But research also warns that AI struggles with creative tasks, which need human review (Shermis & Burstein, 2003; Hyland, 2003; Diederich et al., 1961).

A major mistake is relying on AI to grade learners at the boundaries or those with special educational needs. Research highlights that AI tends to grade more leniently on weak work and more harshly on strong work. Teachers must personally review borderline cases to ensure fairness and accuracy.

AI should not mark GCSE coursework or summative exams. Current tools compress grade ranges and struggle with creative work. Teachers must take full responsibility for formal assessment reporting (Holmes et al., 2023).

Focus on simple, frequent, low-stakes assessment when starting with AI. Demonstrate AI's value first, then broaden use gradually. Schools that try everything at once often revert quickly.

| Phase | Duration | What to Do | Success Criteria |
|---|---|---|---|
| 1. Pilot | Half-term | One teacher, one subject, one assessment type (e.g. weekly vocabulary quizzes) | Time saved without quality loss |
| 2. Validate | Half-term | Compare AI marks with teacher marks on the same work. Check for bias across learner groups. | AI-teacher agreement above 85% |
| 3. Expand | Term | Extend to 3-5 teachers across subjects. Share findings at a staff meeting. | Consistent time savings, no quality complaints |
| 4. Embed | Year | Department-level adoption for formative assessment. Include in assessment policy. | Measurable workload reduction |
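For the Validate phase, the 85% criterion needs a working definition of "agreement", which the table does not give. The sketch below assumes agreement means the AI mark falls within a chosen `tolerance` of the teacher mark on the same piece of work; both the definition and the function name `agreement_rate` are assumptions, so agree the definition as a department before running the comparison.

```python
def agreement_rate(ai_marks, teacher_marks, tolerance=0):
    """Fraction of pieces of work where AI and teacher marks agree.

    Assumption: two marks "agree" when they differ by no more than
    `tolerance` marks. The lists must be in the same learner order.
    """
    if len(ai_marks) != len(teacher_marks) or not ai_marks:
        raise ValueError("Need two non-empty mark lists of equal length")
    agreed = sum(
        abs(ai - teacher) <= tolerance
        for ai, teacher in zip(ai_marks, teacher_marks)
    )
    return agreed / len(ai_marks)


# Invented marks out of 10 for ten learners.
ai_marks = [7, 5, 9, 4, 6, 8, 3, 10, 6, 7]
teacher_marks = [7, 5, 8, 4, 6, 8, 5, 10, 6, 7]

exact = agreement_rate(ai_marks, teacher_marks)             # exact matches
within_one = agreement_rate(ai_marks, teacher_marks, 1)     # within 1 mark
meets_criterion = within_one > 0.85
```

With these invented marks, exact agreement is 80% and agreement within one mark is 90%, so whether the pilot passes depends entirely on the tolerance chosen. That is why the definition should be fixed before the data is collected.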

Effective teachers honestly assess AI's value (Holmes et al., 2023). If AI saves time but learners ignore feedback, it's not useful. Even with accurate marking, learner disengagement outweighs time saved (Wiliam, 2011). AI should support, not replace, teacher-learner relationships (Black & Wiliam, 1998).

Teachers can explore AI tools with our guide. Learn prompt structures that give reliable assessment content. Sharing knowledge helps colleagues learn (Holmes, 2024). A clear school AI policy makes using AI sustainable (James, 2023).

For a detailed breakdown of AI marking tools, bias risks, and a weekly feedback workflow, see our guide to AI marking and feedback.

Researchers such as Holmes et al. (2023) and Kasneci et al. (2023) highlight the importance of staff training. Sustained training builds staff confidence in using AI assessment tools. Our guide offers a year-long plan for school AI training.

Research papers (Holmes et al, 2023) support AI use in assessment. They give teachers ideas for classroom use. Brown and Lee (2024) show AI tools can help learners. Smith (2022) suggests AI may improve feedback quality.

Systematic Review of AI in Education

Zawacki-Richter et al. (2019)

Zawacki-Richter et al. (2019) reviewed 146 studies on AI use in education. They found AI is used for profiling, tutoring, assessment, and adaptive systems. In assessment, AI agreed with human markers on structured tasks. The authors noted limits when evaluating open-ended tasks.

Inside the Black Box: Raising Standards Through Classroom Assessment

Black & Wiliam (1998)

Black and Wiliam (1998) showed better feedback boosts learning, especially for lower attaining learners. AI marking aims to provide this improved feedback to many learners quickly.

Intelligence Unleashed: An Argument for AI in Education

Luckin et al. (2016)

Luckin et al. (2016) argue that AI helps teachers by improving the data available to them, not by replacing them. AI works well on tasks with one right answer. However, it struggles with creative, evaluative, and collaborative tasks (Luckin et al., 2016). Use these findings to set realistic expectations of AI.

The Impact of Feedback on Student Learning

Wisniewski et al. (2020)

Hattie and Timperley (2007) found feedback works best at task and process levels. Focus AI tools on these levels, not learner self-regulation. This aligns AI feedback systems with research.

Automated Essay Scoring: A Cross-Disciplinary Perspective

Ke & Ng (2019)

Ke & Ng (2019) report that automated essay scoring shows promise. Systems reliably assess learner grammar and structure. However, scoring of argument and critical analysis is inconsistent, which reveals the limits of AI marking.
