AI for Teachers: A Complete Classroom Guide [2026]

Compare AI tools for UK teachers: lesson planning, marking, differentiation, SEND support. Honest, evidence-based evaluations for busy classrooms.

![AI for Teachers: A Complete Classroom Guide [2026]](https://cdn.prod.website-files.com/5b69a01ba2e409501de055d1/694ec94db277bea671974906_ai-for-teachers-classroom-teaching.webp)


AI tools can save teachers up to five hours a week on planning, marking and administration, but only when used with clear purpose and professional judgement. This guide covers every major use of AI in the classroom: lesson planning, assessment, differentiation, SEND support, and building school-wide AI literacy. Each section links to deeper guides across the Structural Learning AI cluster, giving you a single starting point for the full picture.

What does the research say? UNESCO's (2023) global survey found 42% of teachers have used AI tools, but only 10% received formal training. The DfE (2024) reports that schools with structured AI policies see higher quality usage than those without. Hattie's (2023) updated meta-analysis ranks AI-supported feedback at d = 0.48, comparable to traditional formative assessment. The EEF cautions that technology alone has limited impact (+4 months) unless combined with effective pedagogy and teacher training.

A 2025 Twinkl survey of 6,500 UK teachers found 60% are already using AI for work purposes, whilst the Center for Democracy and Technology reports that 85% of teachers and 86% of students used AI in the preceding school year. The gap between usage and training is the central challenge: teachers are adopting AI faster than schools can support them. This guide helps bridge that gap by connecting practical classroom applications with the evidence base and linking to detailed resources for each area.

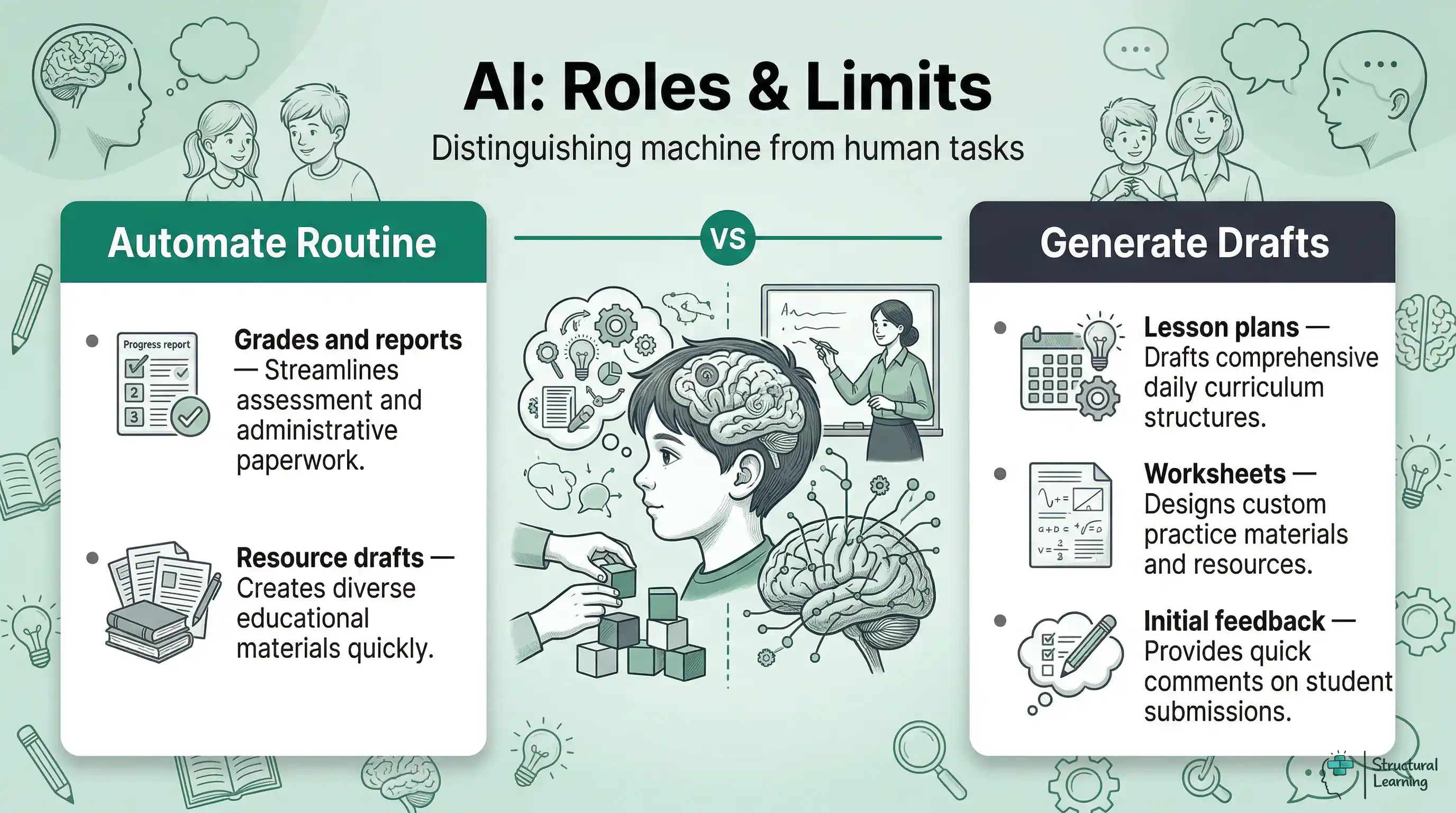

AI excels at pattern recognition, text generation and data analysis, but it cannot replace professional judgement, relationship-building or the nuanced understanding of individual learners. Drawing this line clearly matters. Teachers who understand what AI does well use it confidently; those who expect it to think like a colleague end up frustrated.

AI helps with first drafts of lessons and differentiated worksheets, gives instant feedback on factual and maths tasks, summarises learner assessment data and automates routine reports. A Year 6 teacher could generate reading tasks in minutes; a science teacher might use AI to draft exam questions (Holmes & Tuomi, 2024; Luckin, 2023; Zawacki-Richter et al., 2019).

AI cannot grasp a learner's emotional struggles, judge creative progress, build the trust that encourages risk-taking, or manage the social complexities of a classroom. Rose Luckin's UCL research argues that AI is best used for routine tasks, freeing teachers to focus on the relational and adaptive aspects of teaching (Luckin, 2018).

The practical rule: if a task involves pattern-matching or generation from a template, AI will probably help. If it involves judgement about a specific child in a specific context, you remain essential. Keep that distinction in mind as you read through each application below.

No single AI tool does everything well, and vendor marketing rarely tells you the limitations. The table below compares the major tools UK teachers encounter, rated honestly on what matters in a classroom context. This is vendor-neutral: Structural Learning has no commercial relationship with any of these providers.

| Tool | Best For | Limitations | Cost | GDPR Note |
|---|---|---|---|---|
| ChatGPT (OpenAI) | General planning, resource creation, explaining concepts at different levels | Can hallucinate facts; not curriculum-aligned by default | Free tier available; Plus from $20/mo | Do not input learner names or data |
| Gemini (Google) | Google Workspace integration, summarising documents, research | Less strong on UK curriculum specifics; newer product | Free tier available; Advanced from $19.99/mo | Check school Google admin settings |
| Claude (Anthropic) | Long document analysis, nuanced writing, careful reasoning | Smaller ecosystem; no image generation | Free tier available; Pro from $20/mo | Do not input learner names or data |
| Diffit | Instant differentiation: creates reading materials at multiple levels from any source | Focused on reading/vocabulary; limited beyond that | Free for teachers | US-based; check data processing |
| SchoolAI | Student-facing AI spaces with teacher controls and monitoring | Requires school-level subscription; setup time | Free tier; Pro from $15/mo per teacher | Designed for classroom use; admin dashboard |
| TeacherMatic | UK curriculum-aligned resource generation (lesson plans, quizzes, rubrics) | Template-driven; less flexible than general AI | Free tier; Premium from £5/mo | UK-based; GDPR compliant |

The general-purpose tools (ChatGPT, Gemini, Claude) are most flexible but require you to write good prompts and verify outputs. The education-specific tools (Diffit, SchoolAI, TeacherMatic) are narrower but require less prompt skill. Most teachers benefit from starting with one general tool and one specialist tool, building confidence before expanding. For detailed prompt strategies that work across all these platforms, see our guide to AI prompts every teacher should know.

The most productive teachers treat AI as a first-draft generator, not a finished-product machine. They prompt specifically, review critically, and adapt based on their professional knowledge of their learners. The least productive users paste vague requests, accept outputs uncritically, and wonder why the results feel generic. The tool amplifies whatever you bring to it: strong pedagogical knowledge produces strong AI-assisted resources; weak prompts produce weak outputs regardless of which tool you use.

1. Using AI outputs without checking facts. Large language models generate plausible text, not verified truth. Always fact-check dates, statistics, quotations and scientific claims before sharing AI-generated content with learners. A secondary history teacher in Bristol discovered that ChatGPT confidently attributed a quotation to Churchill that Churchill never said. The AI was not lying; it was pattern-matching based on training data that included the misattribution.

2. Inputting learner data into AI tools. Names, SEN information, behavioural records and assessment scores must never be entered into any external AI system. Use anonymised references ("Learner A is working at greater depth in reading") or generic descriptions. This is a UK GDPR requirement, not a suggestion.

3. Expecting AI to understand your class. AI does not know that three learners in your Year 5 class arrived mid-year with limited English, that your school uses a specific phonics programme, or that your Year 10s have already covered cell biology but not genetics. You must provide this context in every prompt for the output to be useful.

4. Replacing thinking time with generation time. The pedagogical decisions (what to teach, in what order, with what assessment) are the valuable part of planning. AI should handle the production work (formatting, generating variations, creating resources) after you have made those decisions. Outsourcing the thinking to AI produces technically adequate but pedagogically shallow lessons.

5. Trying every tool at once. Tool fatigue is real. Schools that mandate three or four AI tools simultaneously see lower adoption than those that support mastery of one tool before introducing the next. Pick the tool that addresses your biggest time pressure and learn to use it well before expanding.
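The anonymisation rule in point 2 can be partly automated before any text is pasted into an AI tool. The sketch below is a minimal illustration, assuming you keep a class list on the school system; the helpers `label_for` and `anonymise` and the "Learner A, Learner B" labelling scheme are hypothetical. Simple name matching will miss nicknames, misspellings and indirect identifiers such as SEN details or dates of birth, so it supplements a manual check rather than replacing it.

```python
import re
import string


def label_for(index: int) -> str:
    """Turn 0, 1, ..., 25, 26, ... into 'Learner A', ..., 'Learner Z', 'Learner AA', ..."""
    letters = ""
    n = index
    while True:
        n, rem = divmod(n, 26)
        letters = string.ascii_uppercase[rem] + letters
        if n == 0:
            break
        n -= 1  # spreadsheet-style labels: after Z comes AA
    return f"Learner {letters}"


def anonymise(text: str, class_list: list[str]) -> tuple[str, dict[str, str]]:
    """Swap each listed name for a neutral label (hypothetical helper).

    Returns the anonymised text plus a mapping of real names to labels.
    Keep the mapping on the school system; never paste it into an AI tool.
    """
    labels = {name: label_for(i) for i, name in enumerate(class_list)}
    # Replace longer names first so "Amy Li" is handled before "Amy".
    for name in sorted(class_list, key=len, reverse=True):
        # Word boundaries stop "Ben" matching inside "Bengal"; case is ignored.
        pattern = rf"\b{re.escape(name)}\b"
        text = re.sub(pattern, labels[name], text, flags=re.IGNORECASE)
    return text, labels


note = "Amy is working at greater depth in reading; Ben needs phonics support."
safe_note, labels = anonymise(note, ["Amy", "Ben"])
print(safe_note)
# Learner A is working at greater depth in reading; Learner B needs phonics support.
```

The mapping returned alongside the text lets you translate the AI's response back to real names locally, so identifiable information never leaves the school's own systems.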

AI applications vary significantly across subjects, and the teachers getting most value match the tool to their subject's specific demands. A one-size-fits-all approach wastes time. Here is what experienced teachers report works in practice across the major subject areas.

English and Literacy. AI generates differentiated reading comprehension questions from any text in seconds, creates model paragraphs at different GCSE grade boundaries, and produces vocabulary-building activities matched to reading age. A Year 9 English teacher in Leeds uses Claude to generate three model responses to a literature essay question (one at grade 4, one at grade 6, one at grade 8), then uses them as the basis for a class discussion about what makes writing effective. The AI does the generation; the teacher does the pedagogical thinking.

Mathematics. AI creates problem sets that progress through difficulty levels, generates worked examples with step-by-step solutions, and produces diagnostic questions that target specific misconceptions. A primary maths lead uses ChatGPT to create "What's the same? What's different?" comparison tasks for fractions, then adapts them based on her knowledge of which learners need concrete representations versus abstract notation. For guidance on managing learner AI use and assessment design, see AI and academic integrity.

Science. AI produces exam-style questions for AQA, Edexcel or OCR specifications, creates summaries that highlight practicals, and drafts differentiated experiment plans. It can also provide scaffolds that help learners structure their scientific writing (Clark et al., 2007; Hodson, 1998; Millar, 2004).

Humanities. AI can produce source analysis guides for History, debate preparation for RE and case study summaries for Geography. One GCSE History teacher used Gemini to create historical interpretation cards, which learners then evaluate and rank: the AI curates the material, and the learners do the critical thinking (Husain & Jones, 2023).

EYFS and KS1. AI can create phonics materials, social stories and visual timetables, and teachers find it useful for administrative tasks such as reports and parent communication. Young learners, however, need human interaction far more than AI-generated content.

AI can generate metacognitive prompts that help learners understand their own learning. Flavell (1979) described metacognitive knowledge as knowledge of persons (including oneself as a learner), tasks and strategies, and AI-generated prompts can target each of these components directly, building learner awareness.

For person knowledge, prompts like "Before you start, rate your confidence with this topic from 1 to 5 and write one sentence explaining why" encourage learners to attend to their own prior knowledge and uncertainties. For task knowledge, asking learners to consider "What kind of thinking does this question need: recall, explanation or evaluation?" develops their awareness of cognitive demand. For strategy knowledge, structured reflection such as "Which revision strategy did you use this week? Write one reason it helped and one reason you might try something different" builds the habit of evaluating approach rather than just effort. The EEF estimates that metacognitive and self-regulation strategies add an average of seven months of additional learning progress when taught explicitly. For a comprehensive framework for developing these skills across your school, see our full guide to developing metacognition in the classroom.

AI reduces lesson planning time from hours to minutes, but the quality depends entirely on how specifically you prompt it. Vague requests produce generic plans. Specific prompts that include year group, prior knowledge, curriculum objectives and desired assessment evidence produce plans worth adapting.

For example: "Plan a five-lesson fractions sequence for Year 4. Learners know halves and quarters but struggle with equivalence. Use concrete-pictorial-abstract methods, include paired activities, and build formative assessment into lesson three." A prompt with this structure saves time because the output needs far less editing.

Teachers particularly value AI for starters, worked examples and exam-aligned homework: Teacher Tapp (2025) found that 47% use AI mostly for planning and resource creation. The time saving is welcome, but you still choose which activities suit your class, because you understand common learner misconceptions and the right level of challenge. See our guide for more AI planning examples.

The difference between a useful and useless AI-generated lesson plan almost always comes down to prompt structure. Compare these two approaches:

| Weak Prompt | Strong Prompt |
|---|---|
| "Create a lesson plan about the water cycle for Year 5." | "Create a 60-minute lesson on the water cycle for Year 5. Learners can name evaporation and condensation but confuse precipitation with condensation. Include a concrete demonstration, paired discussion using sentence stems, and a 6-question exit ticket assessing the distinction between precipitation and condensation. Align to NC KS2 Science: states of matter." |

The strong prompt includes five elements that make the output immediately useful: time constraint, prior knowledge, specific misconception, activity types, and curriculum alignment. Without these, AI produces a generic plan that requires more editing time than it saved. Our detailed guide to effective AI prompts for teachers provides templates for every major subject and key stage.
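One way to make the five-element structure a habit is to treat it as a checklist you fill in before prompting. The sketch below is a hypothetical illustration, not a feature of any AI tool: the `LessonPrompt` class, its field names and the `join_items` helper are our own assumptions. It flags any missing element and assembles the rest into a prompt shaped like the strong example in the table.

```python
from dataclasses import dataclass


def join_items(items: list[str]) -> str:
    """Join a list as 'a, b and c' (British style, no serial comma)."""
    if len(items) <= 1:
        return "".join(items)
    return ", ".join(items[:-1]) + " and " + items[-1]


@dataclass
class LessonPrompt:
    """Hypothetical container for the five elements of a strong planning prompt."""
    year_group: str
    topic: str
    minutes: int            # 1. time constraint
    prior_knowledge: str    # 2. what learners can already do
    misconception: str      # 3. the specific misconception to target
    activities: list[str]   # 4. activity types
    curriculum_link: str    # 5. curriculum alignment

    def missing_elements(self) -> list[str]:
        """List any of the five elements that have been left empty."""
        checks = {
            "time constraint": self.minutes > 0,
            "prior knowledge": bool(self.prior_knowledge.strip()),
            "specific misconception": bool(self.misconception.strip()),
            "activity types": bool(self.activities),
            "curriculum alignment": bool(self.curriculum_link.strip()),
        }
        return [name for name, present in checks.items() if not present]

    def render(self) -> str:
        """Assemble the elements into a single prompt to paste into an AI tool."""
        return (
            f"Create a {self.minutes}-minute lesson on {self.topic} for {self.year_group}. "
            f"Learners {self.prior_knowledge} but {self.misconception}. "
            f"Include {join_items(self.activities)}. "
            f"Align to {self.curriculum_link}."
        )


water_cycle = LessonPrompt(
    year_group="Year 5",
    topic="the water cycle",
    minutes=60,
    prior_knowledge="can name evaporation and condensation",
    misconception="confuse precipitation with condensation",
    activities=[
        "a concrete demonstration",
        "paired discussion using sentence stems",
        "a 6-question exit ticket assessing the distinction between precipitation and condensation",
    ],
    curriculum_link="NC KS2 Science: states of matter",
)
print(water_cycle.missing_elements())  # []
print(water_cycle.render())
```

Filling in the weak prompt from the table with the same structure would leave four of the five elements empty, which `missing_elements` reports before any time is spent editing a generic plan.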

The DfE's 2025 guidance explicitly supports AI for formative, low-stakes marking, including classroom quizzes, homework feedback and exam-style question generation. Dylan Wiliam's research on formative assessment demonstrates that timely, specific feedback significantly improves achievement, yet traditional marking methods often delay this intervention by days or weeks. AI closes that gap. For a detailed breakdown of which subjects AI can mark reliably and which still need a teacher, see our guide to AI marking and feedback.

Automated marking tools assess factual recall quickly, give structured feedback on writing and track learner performance across assessments. A maths teacher can get instant data from a Year 10 quiz showing which topics need review; an English teacher can use AI for first-pass feedback on drafts, then focus their own comments on argument quality and progress.

The DfE is clear, however, that AI marking should supplement rather than replace teacher judgement. High-stakes assessments, summative evaluations and any context requiring understanding of individual learner circumstances require human oversight. King's College London's 2025 guidance on AI-assisted marking reinforces this: AI is a tool to support, not replace, professional activity. Schools running AI marking pilots (using tools like Graide, KEATH and TeacherMatic) report that teachers save 3-5 hours per week on routine marking whilst maintaining assessment quality. For practical strategies on integrating AI into your assessment workflow, see our guide to AI and student assessment.

Teachers should set clear boundaries for AI in assessment. AI is useful for marking multiple-choice questions, testing recall, checking grammar, generating questions and tracking learner progress (Chartered College of Teaching, 2025). Avoid it for judging creative work or complex arguments and for grouping learners, and never use its output in formal reports without checking it first.

Roediger and Karpicke (2006) showed that retrieval practice boosts long-term memory more than restudying. This "testing effect" means that the act of recalling information makes it easier to recall later. AI can create spaced retrieval quizzes for each learner, making the principle practical to apply at scale.

A Year 8 science teacher can prompt: "Generate a 5-question retrieval starter based on our electricity topic from last week. Include 2 factual recall questions, 2 questions requiring learners to explain a concept, and 1 question asking learners to apply their knowledge to a new context. Difficulty should be appropriate for a mixed-ability class." The resulting quiz takes seconds to generate and directly serves the low-stakes testing effect that Roediger and Karpicke identified. Spacing these retrieval sessions across a sequence of lessons, prompting AI to reference content from two, four and eight lessons ago, compounds the memory benefit further. For a detailed guide to implementing retrieval practice with and without AI, see our full resource on retrieval practice for teachers.
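The spacing idea above (drawing on content from two, four and eight lessons ago) is simple enough to sketch. The helpers below are hypothetical: they assume you keep a record of which topic was taught in each numbered lesson, and they simply look up the topic at each gap so it can be named in the retrieval prompt. The 2, 4 and 8 gaps are the example from the text, not a prescribed schedule, and the lesson log is illustrative rather than a real scheme of work.

```python
def retrieval_sources(lesson: int, topics: dict[int, str],
                      gaps: tuple[int, ...] = (2, 4, 8)) -> list[str]:
    """Topics taught `gap` lessons before `lesson`, for each gap (hypothetical helper).

    Gaps that reach back before the first recorded lesson are skipped.
    """
    return [topics[lesson - gap] for gap in gaps if (lesson - gap) in topics]


def retrieval_prompt(lesson: int, topics: dict[int, str]) -> str:
    """Build a starter prompt in the shape of the Year 8 example prompt."""
    sources = "; ".join(retrieval_sources(lesson, topics))
    return (
        "Generate a 5-question retrieval starter for a mixed-ability Year 8 class "
        f"covering these earlier topics: {sources}. "
        "Include 2 factual recall questions, 2 questions requiring learners to explain "
        "a concept, and 1 question asking learners to apply their knowledge to a new context."
    )


# Illustrative lesson log: lesson number -> topic taught.
year8_log = {
    1: "particle model",
    2: "states of matter",
    3: "diffusion",
    4: "density",
    5: "series circuits",
    6: "parallel circuits",
    7: "current and voltage",
    8: "resistance",
    9: "static electricity",
}

print(retrieval_sources(10, year8_log))  # ['resistance', 'parallel circuits', 'states of matter']
print(retrieval_prompt(10, year8_log))
```

Early in a sequence, some gaps point before lesson 1 and are skipped, so lesson 5 would draw only on lessons 3 and 1; the spacing fills out naturally as the sequence continues.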

Genuine differentiation across a full class of 30 learners has always been the aspiration; AI makes it practically achievable. Tools like Diffit can take a single source text and instantly produce versions at three or four reading levels. ChatGPT and Claude can generate problem sets that progress from foundational to extended, matched to individual learners' current working levels. What previously required an evening of preparation now takes minutes. For prompt templates and subject-specific examples, see our full guide to AI differentiation in the classroom.

AI can also support learners with SEND. The Center for Democracy and Technology (2025) found that US teachers use AI for IEP tasks; in the UK, AI can help create accessible resources, track progress against EHCP targets and highlight possible engagement issues. Human oversight is crucial: AI informs teacher judgement but does not replace it.

Mayer's (2009) multimedia learning principles can guide how content is presented to meet each learner's needs, and AI can suggest visual, audio or activity-based alternatives based on learner responses. Schools using AI-assisted grouping report that it is easier to target support. See our guide to AI in special education for practical differentiation strategies.

Sweller's (1988) cognitive load theory explains how learners process information. Intrinsic load reflects the complexity of the task itself, extraneous load is effort wasted on poor presentation, and germane load is the effort that goes into building knowledge schemas. AI can help by cutting extraneous load, freeing learners' working memory for the learning itself: reformatting a cluttered worksheet, for example, takes AI seconds, where a teacher might otherwise lose an evening.

Paas, Renkl and Sweller (2003) showed that learners with working memory difficulties are affected more by additional cognitive load. AI offers practical responses: sentence starters reduce language demands, step-by-step worked examples lower the load of new procedures, and visual schedules can simplify the environment for autistic learners. A teacher might ask for a five-step task checklist with sentence starters, which supports the learner without removing the learning. See our guide to differentiation strategies for meeting diverse needs.

The Center for Democracy and Technology (2025) found that 85% of teachers use AI tools, yet 76% reported receiving no formal AI training. This creates risk, as teachers use AI without fully grasping its limitations. Many schools also lack AI policies, so learners receive mixed messages (Center for Democracy and Technology, 2025).

Building school-wide AI literacy starts with three foundations. First, every teacher needs a working understanding of what generative AI does and does not do, including its tendency to produce plausible-sounding but incorrect information. Our guide to AI literacy for teachers covers the technical foundations accessibly. Second, schools need a clear, practical AI policy that addresses which tools are approved, what data can and cannot be inputted, and how AI use should be acknowledged. The DfE published its first formal guidance in June 2025; for a step-by-step guide to translating this into a workable school document, see creating your school's AI policy.

Third, teachers need practical prompt-writing skills. The difference between a useful and useless AI output almost always comes down to prompt quality. Specificity about year group, prior knowledge, curriculum objectives and desired output format transforms generic responses into genuinely useful resources. Our guide to AI prompts every teacher should know provides subject-specific templates that work across ChatGPT, Gemini and Claude. For teachers ready to integrate AI into their daily workflow, teaching with an AI co-pilot walks through how to use AI as a thinking partner for planning, differentiation and reflection.

The Chartered College of Teaching now offers a free certified assessment for AI literacy, providing a standardised benchmark for staff development planning. Schools can use this to identify which staff members are confident, which need foundational training, and which are ready to become AI champions who support colleagues. Pairing this assessment with a termly review of AI tool usage creates an evidence-based approach to professional development that avoids both complacency and panic.

Learners need explicit AI literacy instruction. They must know that AI generates text statistically, without true understanding (Holmes & Watson, 2023), and that it can make errors confidently, so its outputs must always be checked (Lee & Davis, 2024). Using AI without acknowledging it breaches academic honesty. Schools that provide AI guidance report fewer integrity issues and more productive use of AI for learning. Integrate AI literacy into the existing curriculum, through computing, English and PSHE, rather than treating it as a separate initiative (Patel, 2019).

Engagement analytics can spot disengaged learners early, giving teachers a warning to intervene before performance drops. These tools analyse participation, homework completion and assessment results. Schools in Manchester and Birmingham report success with this kind of proactive intervention.

AI also supports adaptive content. If a learner struggles, it suggests different explanations or tasks; when a learner masters the material, it provides extension work. This responsiveness is especially helpful in mixed-ability classes (Holmes et al., 2023).

The evidence, however, comes with a caveat. The EEF's (2024) review of technology interventions reminds us that the tool matters less than how it is used. AI dashboards that generate data without clear pedagogical response add noise, not value. The teachers who benefit most from AI engagement tools are those who build specific routines: checking the dashboard at the start of each day, using it to inform that day's seating plan or questioning strategy, and reviewing weekly trends to adjust medium-term planning. The technology enables faster, more precise decisions, but the decisions remain the teacher's.

The teachers who integrate AI successfully share one trait: they start small and evaluate honestly before expanding. Here is a four-week roadmap based on patterns from schools that have adopted AI effectively.

| Week | Focus | What to Do | Evaluate |
|---|---|---|---|
| 1 | Learn the basics | Sign up for one AI tool (ChatGPT or Gemini free tier). Ask it to explain a topic you teach well. Notice where it is accurate and where it is wrong. | Can you spot inaccuracies? Do you trust it enough to adapt its outputs? |
| 2 | Planning support | Use AI to generate starter activities or homework tasks for one subject. Be specific in your prompts: include year group, topic, prior knowledge. | Did it save time? How much editing did the output need? Was it better or worse than what you would have created? |
| 3 | Assessment support | Use AI to generate quiz questions or provide first-pass feedback on a low-stakes assessment. Compare AI feedback to your own judgement on the same work. | Was the feedback accurate? Would learners find it useful? Where did it miss the mark? |
| 4 | Reflect and decide | Review your three weeks of use. Identify the one application that saved the most time with acceptable quality. Make it a regular part of your workflow. | What will you continue using? What did you try but reject? Share findings with a colleague. |

This graduated approach prevents the two most common failure modes: trying to do everything at once (leading to overwhelm and abandonment) and using AI for tasks where it adds no value (leading to frustration and scepticism). The teachers who become confident AI users are not the most technically skilled; they are the ones who evaluate honestly and build on what works.

Higgins et al. (2022) note that AI can plan lessons and analyse data: it recognises patterns in datasets to generate text or give learners feedback. Teachers use these tools as assistants, and, as Selwyn (2017) argues, AI does not replace their expertise.

Teachers use AI for administrative tasks such as drafting emails, and to adjust the difficulty of work. Always check AI outputs for factual accuracy and protect learner data.

The primary benefit is significant time saving, as AI can generate a complete lesson sequence or resource bank in minutes. It allows teachers to create multiple versions of the same material for different reading levels, making differentiation much easier to manage. This reduction in workload gives teachers more time to focus on individual learner support and relationship building.

Research from the Education Endowment Foundation indicates that technology has a positive impact when combined with strong pedagogy. A 2024 DfE report found that schools with clear AI policies see more effective usage than those without. Meta-analysis suggests that AI-supported feedback can be as effective as traditional formative assessment when used correctly.

A frequent error is over-relying on the software and failing to verify the facts it produces, which can lead to misinformation in lesson materials. Another mistake is inputting learner names or other personal data into general tools, which breaches UK GDPR. Teachers should also avoid using AI for complex emotional judgements that require a deep understanding of a child's unique circumstances.

The choice depends on the specific task, with general tools being strong for nuanced writing and document analysis. Specialist platforms are often better for the UK curriculum because they include templates for specific assessment points. Most educators find success by combining one general assistant with one tool designed specifically for classroom use.

Data protection must come first: never input learner names, SEN data, behavioural records or any personally identifiable information into any AI tool. This is not just good practice; it is a legal requirement under UK GDPR. Even AI tools that claim data is not stored may process it through servers outside the UK. Schools should maintain an approved tools list and require senior leadership sign-off before any new AI tool is introduced. For a comprehensive treatment of the ethical dimensions, see our guide to AI ethics in education.

Beyond data protection, the ethical considerations are real. AI outputs can contain bias, particularly in assessment and grouping recommendations. AI-generated content should always be reviewed for accuracy before learners see it. Academic integrity policies need updating to address how learners can and cannot use AI in their work. These are not reasons to avoid AI, but they are reasons to approach it systematically rather than letting adoption happen without governance.

The best starting point is simple: choose one AI tool and one use case. Use ChatGPT to generate starter activities for one subject for a half-term. Evaluate honestly: did it save time? Were the outputs good enough? What did you need to change? Then expand gradually. Essex primary schools have found success adopting one new AI tool per term, allowing thorough evaluation before scaling. The Chartered College of Teaching now offers a free certified assessment for AI literacy, providing a useful benchmark for staff development planning. For a comprehensive overview of ethical frameworks and bias considerations, see our guide to AI in modern education: challenges and opportunities.

For guidance on choosing the right AI platforms for your context, read our independent comparison of AI tools for teachers. And for a practical roadmap to building staff confidence through structured professional development, explore our guide to AI CPD for schools.

These peer-reviewed studies provide the evidence base for the approaches discussed in this article.

The AI generation gap: Are Gen Z students more interested in adopting generative AI such as ChatGPT in teaching and learning than their Gen X and millennial generation teachers?

C. Chan & Katherine K. W. Lee (2023)

Chan and Lee (2023) found differences in attitudes towards generative AI between Gen Z learners and their Gen X and millennial teachers. Understanding this gap can help UK teachers use AI tools more effectively and avoid disconnects with their learners.

"Guidance for Generative AI in Education and Research" for Teachers

S. Boonlue (2024)

Boonlue (2024) sets out guidance on generative AI for educators, in line with researchers such as Holmes et al. (2023). The work provides frameworks that UK teachers can adapt, helping them weigh the ethical and pedagogical issues AI raises, like those examined by Smith (2024).

Generative AI raises questions about its role in education. Research by Holmes et al. (2023) explores how teachers and learners perceive AI as a support or a replacement, and the digital literacy it demands.

Samia Haroud & N. Saqri (2025)

Haroud and Saqri (2025) examined how teachers and learners perceive generative AI, covering AI as support, changing roles and digital skills. The findings help UK teachers understand the benefits and problems of AI in education, so they can address concerns and integrate AI effectively.

AI integration in teacher education needs further exploration. Research shows both benefits and challenges (Holmes et al., 2023), and future studies should examine AI's practical impact in classrooms (Zawacki-Richter et al., 2019). Training helps learners use AI tools effectively (Hwang et al., 2021), and teacher educators must guide responsible AI use (O’Donnell et al., 2022).

Ruşen Meylani (2024)

Smith (2023) argues that AI offers personalised learning options, Brown (2024) notes its use in teacher training, and Jones (2022) observed improved teaching. UK teacher education programmes can use AI tools to prepare trainees for AI-rich classrooms.

Ethical knowledge is key to generative AI in education. Lan et al. (2025) propose the GenAI-TPACK framework to support teachers' professional development and help them use generative AI ethically in their teaching.

Guoshuai Lan et al. (2025)

The framework considers how ethical issues relate to technological, pedagogical and content knowledge (TPACK; Mishra & Koehler, 2006), and can help UK teachers develop the skills to use generative AI ethically. Understanding ethical AI applications is crucial for the benefit of every learner.
