Digital Tools for Metacognition

The best digital tools for teaching metacognition in 2026. AI scaffolding, learning analytics apps, and practical classroom technology that builds self-regulated learners.

Digital tools for metacognition make learners’ planning, monitoring and evaluation visible enough to teach. Metacognition means recognising what you know, choosing a strategy, checking whether it is working and changing course when needed (Flavell, 1979). In the classroom, that can look like a teacher asking learners to predict quiz confidence, annotate where they got stuck, or compare a first plan with a revised answer.

The technology matters only when it supports a routine that changes thinking. A shared document, retrieval quiz, learning journal or concept map can capture the moment when a learner checks understanding and decides a next step. Used with clear prompts and feedback, these tools help teachers hear the reasoning behind an answer, not just see the score.

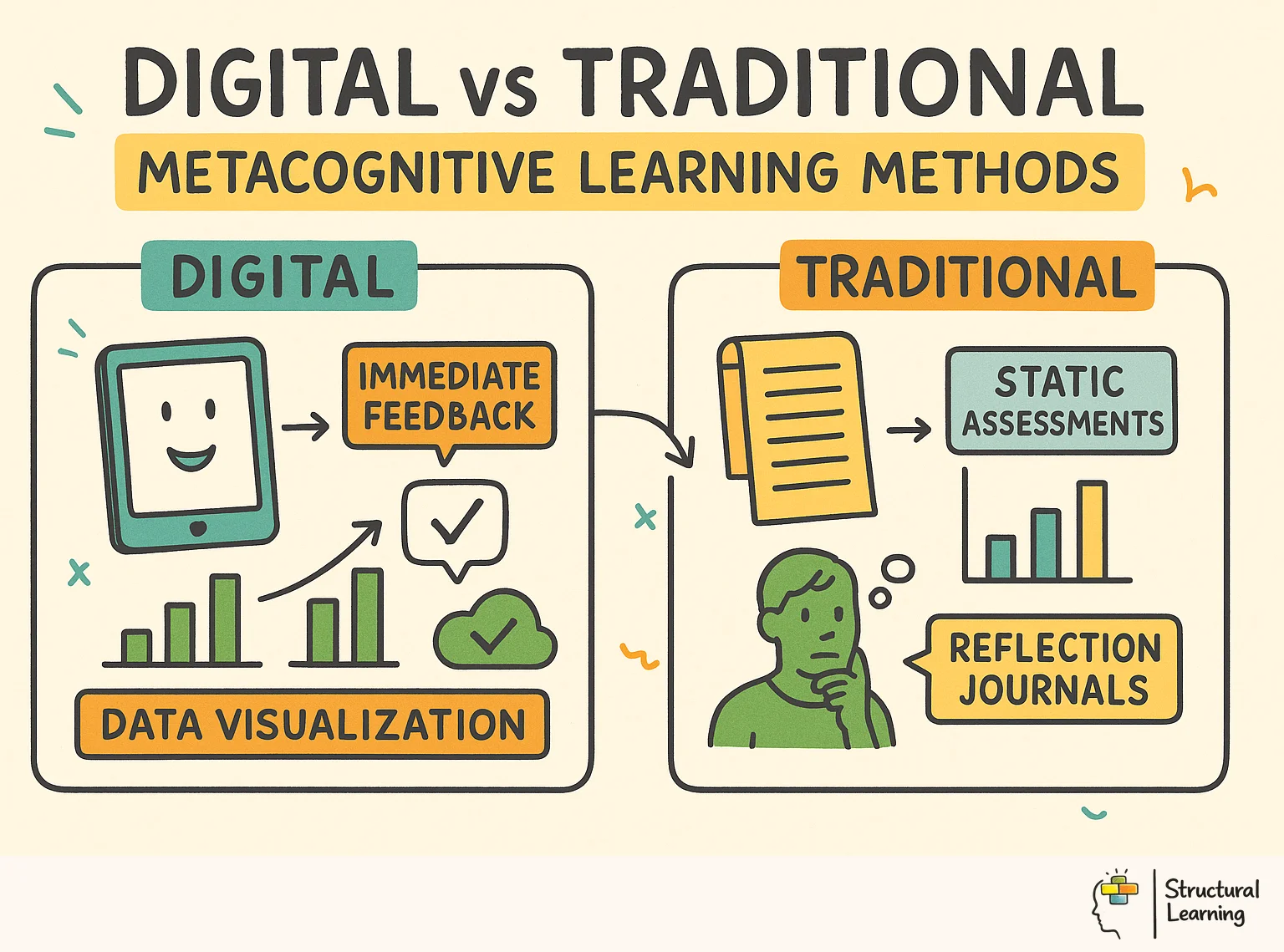

Digital tools for metacognition help learners see how they plan, monitor and adjust their thinking. The tool is not the intervention by itself. The value comes from the routine around it: a prompt, a pause, feedback and a decision about what to try next.

Used well, dashboards, journals, quizzes, screen recordings and concept maps make hidden thinking easier to discuss. They can show whether a learner has checked understanding, noticed a gap or changed strategy after feedback.

Used poorly, the same tools become extra admin or passive tracking. This guide focuses on practical classroom choices: which digital tools support reflection, when to use them, and how to keep technology tied to learning rather than distraction.

For the underlying classroom model before choosing a tool, see our main metacognition guide.

Digital tools can strengthen metacognition by speeding up feedback. A quiz that returns results immediately prompts learners to reflect on the strategy they used while the task is still fresh, and interactive tasks help them examine their own thinking. Azevedo's research suggests that timely feedback helps learners self-assess more accurately (Azevedo et al.).

Technology also helps learners show how they think. Data charts and prompts make learning visible and encourage learners to explain their thinking processes. Concept mapping turns abstract ideas into pictures that learners can use to examine their understanding. Learning analytics dashboards provide objective data about study habits, revealing patterns learners might otherwise miss (Jonassen, 2000; Shute, 2008; Dweck, 2006).

Digital records offer a lasting learning history. Unlike paper notes that get lost, earlier work stays available, so learners can revisit it, observe their growth and spot patterns in the strategies they use (Butler & Winne, 1995; Zimmerman, 2000). This long-term view supports the strategic thinking that self-regulated learners need.

| Tool Type | Purpose | Examples | Metacognitive Benefit |
|---|---|---|---|
| Learning Management | Track progress and goals | Google Classroom, Seesaw | Self-monitoring, goal review |
| Reflection Apps | Capture thinking processes | Flipgrid, Padlet | Making thinking visible |
| Self-Assessment | Evaluate own learning | Forms, Quizzes | Calibration and planning |
| Organisation | Plan and manage learning | Notion, Trello | Executive function support |
| Feedback Tools | Receive and act on feedback | Kaizena, Mote | Strategy adjustment |

Digital tools can provide scaffolding that adapts to each learner's needs, in line with Vygotsky's (1978) account of supported learning. AI-based systems can flag learners who are struggling and offer focused prompts. This guides their thinking without adding stress and builds independence. Support is then reduced as metacognitive skills strengthen (Brown et al., 1983).

Azevedo's research, spanning about a decade of work, suggests that technology can boost learner self-regulation. Learners who use digital tools with prompts tend to learn better. Successful learners plan, monitor their understanding and adapt their strategies, and Azevedo argues that digital tools can aid each of these processes.

Zheng's (2016) meta-analysis covered 44 studies and found that digital scaffolding helps learners' metacognition. Tools for self-evaluation, planning and reflection worked well. According to Zheng, the quality of technology use matters more than the technology itself: tools alone will not boost metacognition without good teaching.

Bannert et al. (2015) found that prompts aid self-regulated learning in digital settings. Planning, monitoring and evaluation prompts improved learner outcomes, and learners also developed stronger strategies. The prompts act as a support that encourages metacognitive skills to become automatic over time.

Winne's research suggests that data feedback improves a learner's self-awareness (Winne, 2011). Learners compare their self-assessments with the data and adjust their learning strategies accordingly, which can support a growth mindset and sharper focus. Visible thinking routines also work well with digital tools: questioning built into platforms prompts reflection, and digital thinking maps help learners organise their thoughts explicitly.

Selecting the right digital tools and implementing them effectively is important for developing metacognition in the classroom. The strategies below go further than basic "use tech" tips. Each targets a specific learner thinking skill and names the digital tool that supports it.

Ask learners to complete a three-question reflection after each lesson using Google Forms or Seesaw: "What did I find difficult?", "What strategy helped me?", "What will I do differently next time?" The digital format creates a searchable archive. Learners can revisit entries before assessments and identify recurring patterns in their learning.

Before a low-stakes quiz on Google Forms or Microsoft Forms, ask learners to rate their confidence: "How well do you think you know this topic? (1-5)." After they see their results, they compare their prediction against reality. Kruger and Dunning (1999) found that low performers can overestimate their ability, which is consistent with the calibration work of de Bruin et al. (2017). Treat the routine as practice in calibration rather than promising a fixed percentage gain from one term of quizzes.

Learners use Loom or Screencastify to record themselves solving a problem while narrating their thinking. The teacher reviews the recording and provides feedback on the metacognitive process, not just the answer. A Year 6 learner solving a multi-step maths problem might say, "I'm going to try the bar model first because I know it helps me see the parts." That single sentence reveals planning, strategy selection, and self-awareness. Without the recording, it would be invisible.

Research by Flavell (1979) and Schneider (2008) offers teachers practical classroom strategies for metacognition. Work by Lucangeli and Cornoldi (1997) links such strategies to improved understanding and performance in mathematics.

Replace content-only exit tickets with metacognitive versions. Add one process question alongside two content questions. "Which part of today's lesson required the most effort from you?" or "What strategy did you use when you got stuck?" Platforms like Plickers or Google Forms aggregate responses instantly. The teacher scans the class data before the next lesson and adjusts instruction. Learners who report "I guessed" on a specific concept receive targeted support the following day.

Use tools like Kami or Google Docs' commenting feature for learners to annotate each other's work. The instruction matters: "Highlight one place where the writer explained their reasoning clearly" and "Suggest one question the writer could ask themselves to improve this paragraph." This externalises the monitoring process. Learners practise evaluating thinking quality, which transfers to evaluating their own work. Topping (2009) found that structured peer assessment improves both the assessor's and the assessee's metacognitive skills.

Platforms like Seesaw, ClassDojo, or simple Google Sheets dashboards allow learners to track their own progress against specific learning goals. The metacognitive benefit comes from the review cycle: learners set a goal on Monday, monitor progress on Wednesday, and evaluate on Friday. The teacher models the review conversation: "Your target was to use evidence in your writing. Looking at your dashboard, which pieces show that?" This makes self-regulated learning concrete and visible.

Tools like Century Tech or Sparx Maths adjust difficulty based on learner performance. The metacognitive opportunity lies in what happens after the platform adjusts. Ask learners: "The system gave you an easier question. What does that tell you about what you need to practise?" This moves learners from passive consumers of adaptive content to active interpreters of feedback. They begin to recognise their own knowledge gaps without the teacher having to point them out.

Tools like Popplet, MindMeister, or Coggle allow learners to build concept maps collaboratively. The digital format makes revision visible: learners can see how their map has changed over a unit of work. A Year 9 history class creating a concept map of causes of World War One can compare their map from week one (three isolated nodes) to week six (fifteen interconnected nodes). The visual difference demonstrates learning growth in a way that text-based notes cannot.

This connects closely with research on theory of knowledge, which provides further classroom strategies for teachers.

Record learners during presentations, PE performances, or group discussions using tablets. Learners review the footage using a structured rubric: "Did I make eye contact?", "Did I use subject vocabulary?", "Did I respond to my partner's point?" The gap between perceived performance and actual performance is where metacognitive growth happens. Learners consistently report that watching themselves on video reveals habits they were completely unaware of (Tripp and Rich, 2012).

Platforms like Seesaw, Google Sites, or Book Creator allow learners to curate their best work across a term or year. The portfolio is more than a collection: it is a reflective artefact. Each entry includes a brief annotation: "I chose this piece because it shows how I improved my paragraph structure after using a graphic organiser." The act of selecting and justifying choices requires learners to evaluate their own learning, identify growth, and articulate what made the difference.

Technology does not automatically produce metacognitive learners. Three common mistakes undermine many implementations.

Mistake 1: Tool overload. A school introduces five new platforms simultaneously. Learners spend cognitive resources learning the tools rather than reflecting on their learning. Start with one tool. Use it consistently for six weeks before adding another. Gathercole and Alloway (2008) found that working memory capacity is the bottleneck. Every new interface competes for the same limited resources.

Firstly, constructivist approaches promote collaborative learning (Vygotsky, 1978). Secondly, planning and reflection enhance a learner's self regulation (Flavell, 1979). Finally, feedback supports growth and improves attainment (Hattie & Timperley, 2007).

Mistake 2: Reflection without structure. A teacher assigns "Write about your learning in your digital journal" with no further guidance. Learners produce vague, surface-level entries: "Today was good. I learned about volcanoes." Structured prompts transform the quality. "Name one thing you found confusing and explain what you did about it" produces metacognitive reflection. The prompt does the scaffolding work.

Mistake 3: Data collection without action. A school collects self-assessment data through digital forms but never uses it to adjust teaching. Learners quickly learn that their reflections go nowhere and stop taking them seriously. The feedback loop must be visible: "Last week, twelve of you said you found converting fractions difficult. Today we are starting there." When learners see their reflections change what happens next, they invest in the process.

Three indicators signal that digital metacognitive tools are working in your classroom.

Self-assessment accuracy improves. Track the gap between learners' confidence ratings and their actual performance over time. Effective metacognitive tools narrow this gap. If learners consistently rate themselves 5/5 and score 2/5, the tool is not developing their monitoring skills. If the gap closes from an average of 2.3 points to 0.8 points across a term, the calibration process is working.

Strategy language increases. Listen to how learners talk about their learning. Before metacognitive tools: "I don't get it." After effective implementation: "I think I need to re-read the question because I might have missed something." Count the frequency of strategy references in digital journal entries across a half-term. An upward trend indicates growing metacognitive vocabulary.

Help-seeking becomes targeted. Learners with strong metacognitive skills ask specific questions: "I understand the method but I keep making errors in the second step. Can you check my working?" Learners without metacognitive awareness ask general questions: "I can't do it." Track the ratio of specific to general help requests. As digital metacognitive tools take effect, specific requests increase.
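For teachers who export form responses or keep a simple log, the three indicators above can be turned into small calculations. The sketch below is illustrative only: the function names, the phrase list and the sample data are all invented for this example, and it assumes confidence and quiz scores are on the same 1-5 scale and that the teacher has tagged each help request as "specific" or "general" by hand. It reports the specific requests as a share of all requests rather than a strict ratio, so an empty log does not cause a division error.

```python
from collections import Counter
from statistics import mean

# Hypothetical phrase list; adapt it to the strategies you actually teach.
STRATEGY_PHRASES = ("re-read", "checked", "tried a different")


def calibration_gap(pairs):
    """Mean absolute gap between confidence and score (same 1-5 scale)."""
    return mean(abs(confidence - score) for confidence, score in pairs)


def strategy_references(entries, phrases=STRATEGY_PHRASES):
    """Count occurrences of the listed strategy phrases across journal entries."""
    return sum(entry.lower().count(phrase) for entry in entries for phrase in phrases)


def specific_share(tags):
    """Fraction of help requests tagged 'specific' (0.0 if nothing was logged)."""
    counts = Counter(tags)
    total = counts["specific"] + counts["general"]
    return counts["specific"] / total if total else 0.0


# Invented sample data: (confidence, score) pairs, journal entries, request tags.
confidence_start = [(5, 2), (4, 2), (5, 3), (3, 1)]
confidence_end = [(4, 3), (3, 3), (4, 4), (2, 1)]

journal_start = ["Today was good. I learned about volcanoes."]
journal_end = [
    "I re-read the question and checked my working.",
    "I tried a different method when I got stuck.",
]

help_start = ["general", "general", "specific", "general"]
help_end = ["specific", "specific", "general", "specific"]

print("Calibration gap:", calibration_gap(confidence_start), "->", calibration_gap(confidence_end))
print("Strategy references:", strategy_references(journal_start), "->", strategy_references(journal_end))
print("Specific help share:", specific_share(help_start), "->", specific_share(help_end))
```

Simple phrase matching will miss strategy talk that uses different wording, so treat the journal count as a rough trend line rather than a precise measure.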

Choose one digital metacognitive tool and commit to using it consistently for the next six weeks. If your learners already use Google Classroom, start there. Add a three-question reflection form as a weekly routine. If they use tablets, try a screen-recorded think-aloud for one lesson per week. The tool matters less than the consistency. Metacognitive development is cumulative: six weeks of weekly reflection is far more likely to produce measurable change than a single lesson on "thinking about thinking".

Ask your learners next lesson: "What did you do when you got stuck?" If they say "I asked the teacher" or "I just waited," that tells you where to start. If they say "I re-read the question" or "I tried a different method," they are already developing metacognitive strategies. Digital tools accelerate this development by making the invisible visible, the temporary permanent, and the individual shareable.

Digital tools for metacognition are interactive technologies that help learners monitor their thinking, the process Flavell (1979) described. They include learning platforms and digital journals for self-assessment. These technologies provide feedback and track learner progress, supporting the self-regulation cycle described by Zimmerman (2000). This makes cognitive skills visible to both teachers and learners (Hattie, 2009).

Teachers should pick tools that match their learning goals, such as digital exit tickets. They should explain how learners are expected to use the software and model the reflection skills involved. Regular, short activities work better than occasional extras.

Technology gives learners quick feedback, aiding accurate self-assessment. Digital portfolios record learner work, letting them track progress and spot study habit patterns. Learning analytics dashboards show objective data, helping learners adjust their strategies (Sadler, 1989; Hattie & Timperley, 2007).

Digital prompts can support metacognition when they are tied to clear learning tasks. Azevedo and Hadwin (2005), Bannert et al. (2015) and Zheng et al. (2016) support the broader idea that computer-based scaffolds, prompts and self-regulated learning tools can help learners plan, monitor and adjust their work. The tool still needs explicit teacher modelling and review.

Digital platforms need planned learning activities. Teachers should add prompts, reflection and review routines so the technology serves metacognition. Introducing too many apps at once increases extraneous load and can distract learners from the thinking process.

Concept mapping software is highly effective for turning abstract thought processes into clear visual representations. Applications like Padlet and Flipgrid allow learners to document and explain their reasoning steps to their peers. These platforms help educators identify specific knowledge gaps while giving learners a clear picture of their own understanding.

Digital tools can transform learning and build metacognition when they make thinking visible, support timely feedback and help learners regulate their next step. Teaching design and clear metacognitive strategy instruction are essential (EEF, 2018). Learning environments must value reflection and self-assessment (Nelson & Narens, 1990).

As educators, our role is to guide learners to become strategic, self-aware learners. These learners can effectively work through the complexities of the modern world. By using digital tools thoughtfully in our teaching, we can help learners take ownership of their learning. This helps them develop the essential metacognitive skills they need to succeed. This forward-thinking approach ensures technology helps create deeper understanding and lifelong learning. It develops a generation of independent, reflective thinkers.

These peer-reviewed sources form the evidence base for digital tools for metacognition and self-regulated learning and their classroom applications. Each offers practical insights for teachers seeking to ground their practice in research.

Research from Azevedo & Hadwin (2005) guides computer scaffold design. Scaffolds support metacognition and self-regulated learning in learners. Designers should consider these findings, based on extensive research.

Roger Azevedo and Allyson F. Hadwin (2005)

Azevedo and Hadwin (2005) argue that adaptive digital scaffolds work best. Fixed prompts lose impact over time, whereas responsive support aids metacognition. Teachers should plan how prompts will change across a term.

A Meta-Analysis on the Effect of Technology on Self-Regulated Learning

Lianghuo Zheng (2016)

In this meta-analysis of 44 studies, technology-enhanced self-regulated learning interventions showed moderate to large effects. Tools that helped learners self-evaluate and plan worked best. The quality of the pedagogical design mattered more than the technology itself, so intentional implementation drives success.

Promoting Self-Regulated Learning Through Prompts

Maria Bannert, Christoph Sonnenberg, Christoph Mengelkamp et al. (2015)

Bannert et al. (2015) found that metacognitive prompts boost learning. In digital environments, these prompts improved both learning and strategy use. Learners who received planning, monitoring and evaluation prompts did better than those who received content alone, and the prompted behaviours became habits over time.

A Theoretical and Empirical Foundation for Self-Regulated Learning

Philip H. Winne (2011)

Winne (2011) argues that learning analytics data helps learners reflect on their thinking. Comparing self-assessments with performance data sharpens awareness, and dashboards that show prediction errors support learner growth.

The Cambridge Handbook of the Learning Sciences

R. Keith Sawyer, ed. (2014)

Sawyer's edited handbook brings together learning-sciences work on design, collaboration, feedback and technology-supported learning. Use it as a broad background source rather than as evidence for any single specific finding.
