Cognitive Load Theory in the IB: Why Inquiry Isn't Unguided Discovery

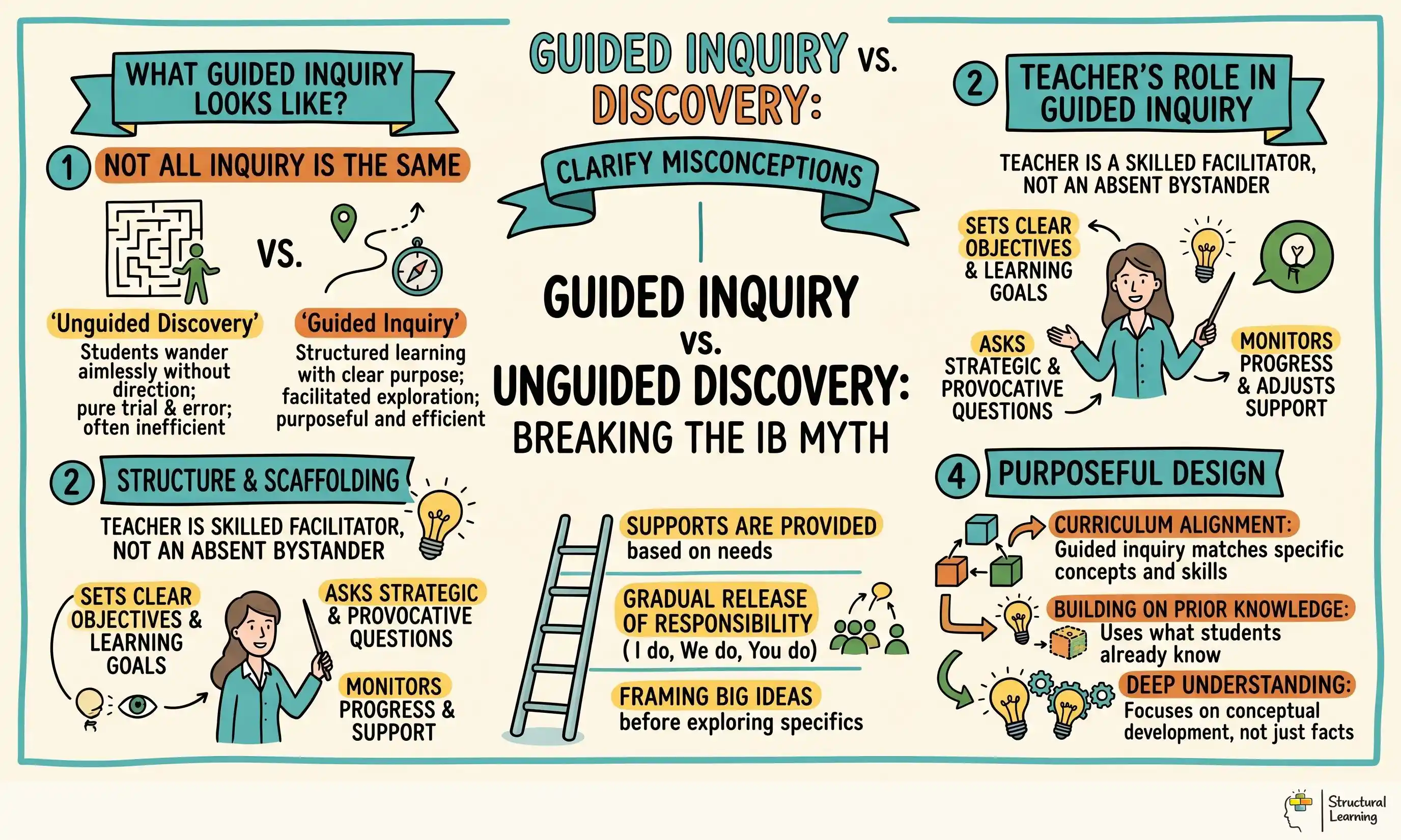

The International Baccalaureate gets blamed for many things. Ofsted inspectors flag it as "inquiry-based and lacking explicit instruction." Cognitive science advocates worry that students will be overwhelmed trying to figure things out themselves. Education traditionalists see it as the worst sort of progressive hand-waving, where teachers step back and children somehow construct knowledge from thin air. None of this is true, but the caricature is so powerful that many UK leadership teams have ruled out IB without understanding what it actually is. The real story is far more interesting: cognitive load theory and the IB are not opponents. Properly taught, they are complementary. This article is for MAT leaders, heads considering IB adoption, and teacher leaders defending rigorous pedagogy against both unguided discovery and rote learning. The myth is that IB means discovery learning. The reality is guided inquiry with explicit scaffolding, the very model that cognitive load theory itself endorses.

An Ofsted inspector recently commented on a UK IB school: "The inquiry-based approach is not supported by sufficient explicit instruction, leaving students to work things out for themselves." A traditional head considering IB remarked, "Isn't it all just discovery learning? Cognitive load theory tells us that doesn't work." A parent asked, "If students figure things out themselves, how do they learn procedures? Won't they get confused?"

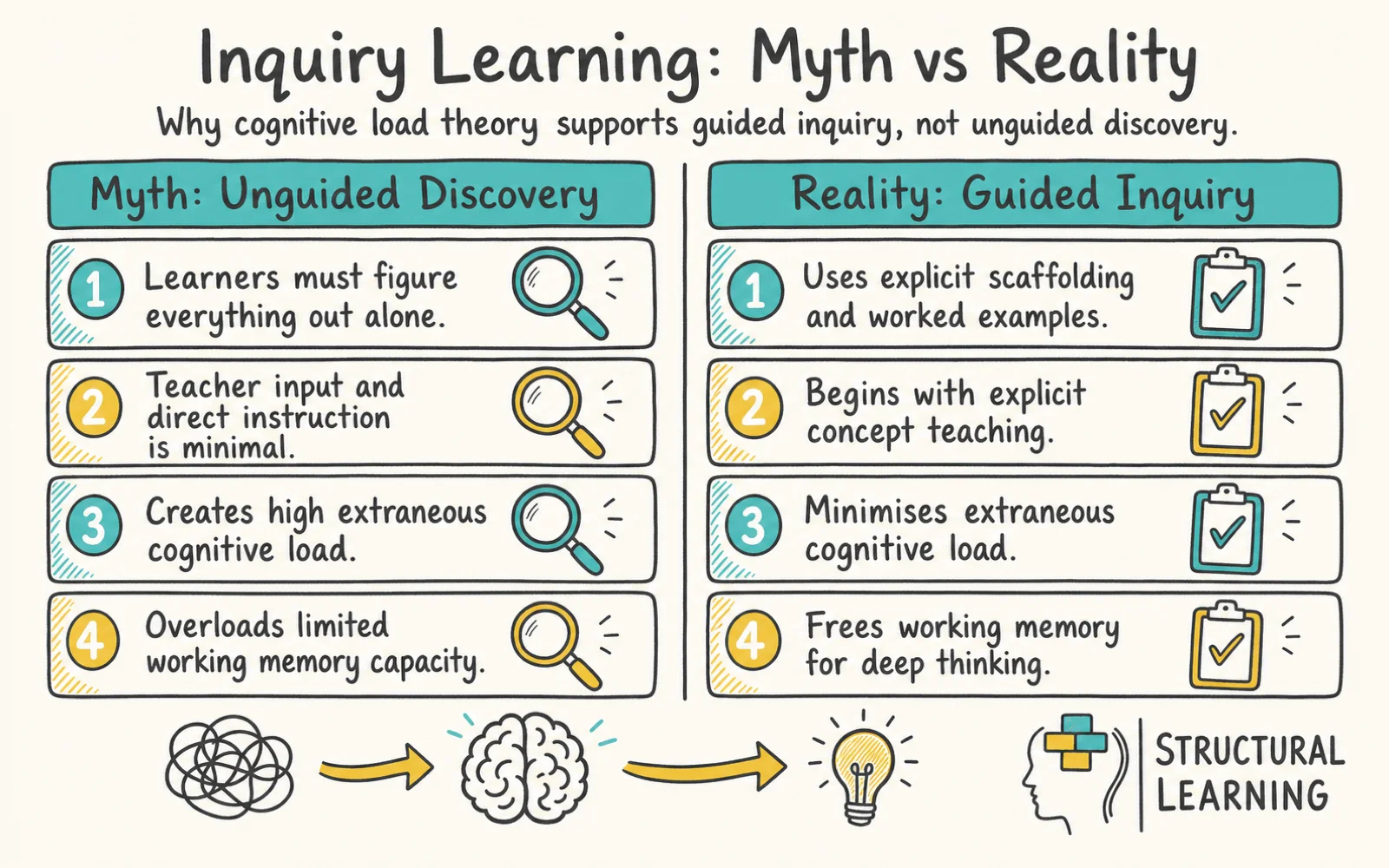

These concerns share a common thread: the assumption that IB is unguided discovery. The problem is, this assumption is wrong. It's also persistent enough to have real consequences for schools making curriculum choices. When a leadership team believes IB means "minimal teacher input," they either reject it outright or adopt it poorly (which then confirms their suspicions when results disappoint).

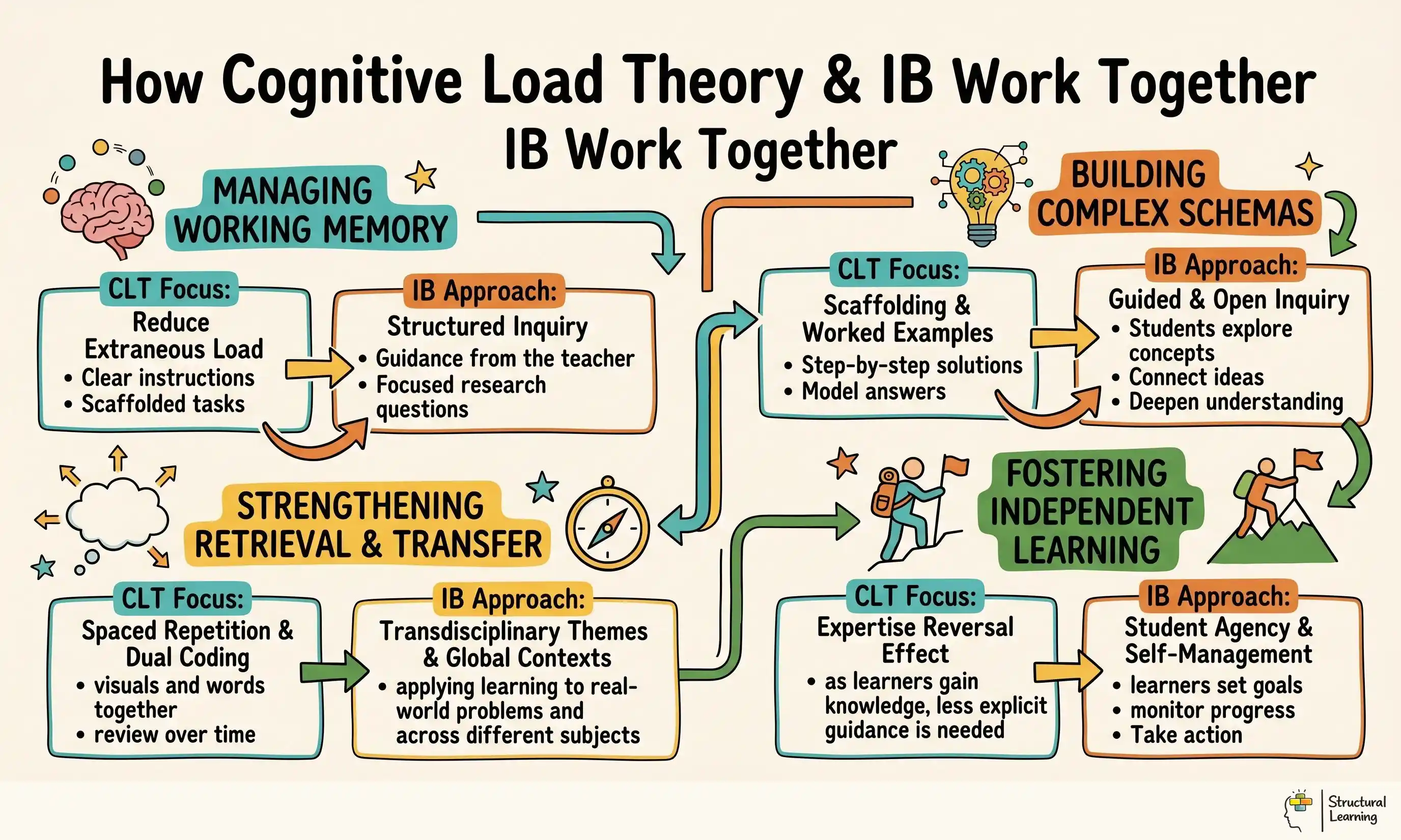

The tension here is real, but it rests on a misunderstanding. Constructivism, the idea that learners build meaning for themselves, is a theory of how people learn, not a description of how IB teachers teach. Every serious learning scientist accepts constructivism: knowledge isn't poured into passive heads. But accepting that truth doesn't mean teachers should disappear. In fact, cognitive load theory, the research base on worked examples, and the evidence from high-performing IB schools all point in the same direction: the most effective IB classrooms are saturated with explicit teaching. The difference is what is explicit and when. This article reconciles that tension by looking at what cognitive load theory actually requires, what the IB actually does, where the two align, and where IB schools get it dangerously wrong.

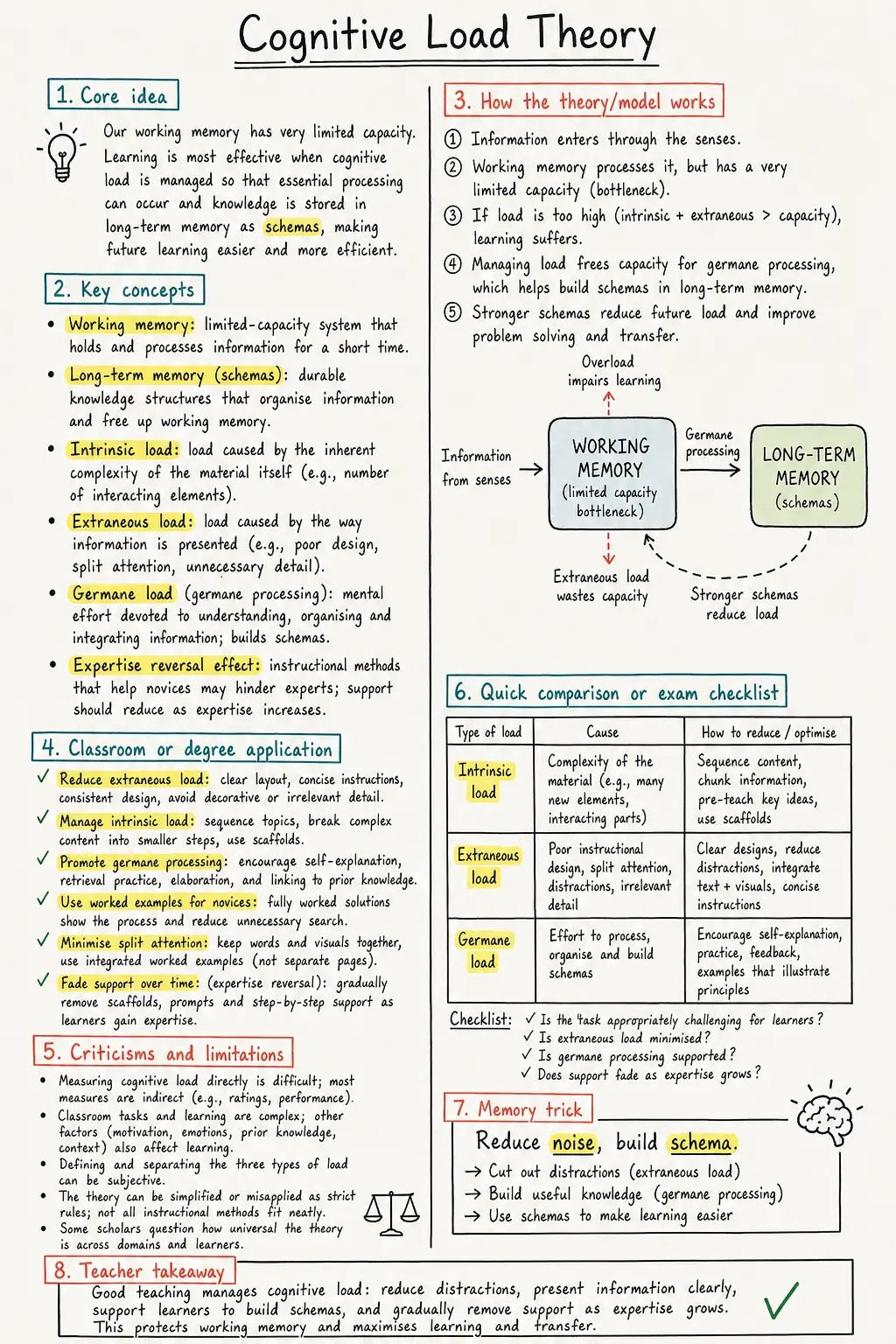

Cognitive load theory (CLT) begins with a simple fact: your working memory is tiny. When you're solving a problem or learning something new, you can hold only a handful of pieces of information in active memory at once (Sweller, 1988). The moment you exceed that limit, learning stalls. You become confused. You give up. Understanding this constraint is the key to understanding both why unguided discovery fails and why scaffolded inquiry can succeed.

Sweller identified three types of cognitive load (Sweller, 1994). Intrinsic load is the inherent difficulty of the material itself. Learning supply-and-demand curves is harder than learning grocery items because it requires holding more relationships in mind at once. Extraneous load is the wasted mental effort spent on things that don't help learning: figuring out how to use a tool, deciphering an unclear instruction, or guessing what "good" looks like. Germane load is the productive cognitive effort you direct toward building mental schemas (organised knowledge structures). The goal is simple: minimise extraneous load and direct working memory toward germane load so that you can learn despite intrinsic difficulty.

Here's where unguided discovery fails. When you tell a learner, "Figure out how the water cycle works," you've just added an enormous layer of extraneous load. The learner must now figure out both the content (the water cycle) and the process (how to investigate, what questions to ask, which sources to trust, how to organise findings). Two problems at once. Working memory overloads. They flounder, not because they're incapable, but because the cognitive demand is unreasonable (Kirschner, Sweller, & Clark, 2006).

Sweller's solution is equally straightforward: teach through worked examples, clear success criteria, and explicit scaffolding. Show a learner how to approach the problem step by step. Gradually remove the scaffolds. By the time they work independently, the intrinsic load is manageable because you've removed the extraneous load. The learner's working memory is free to do real learning.

The critical insight, one that's often missed in the CLT versus constructivism debate, is that Sweller has never opposed application, problem-solving, or meaningful learning. He opposes unscaffolded problem-solving. Sweller (2019) explicitly acknowledged that "discovery learning can work if heavily scaffolded." The question is not whether students should inquire or apply knowledge. The question is whether they have the scaffolds they need to do so without cognitive overload.

This is where the caricature breaks down. IB is not unguided discovery. It's guided inquiry within a structured framework, supported by explicit skill teaching. Here's what each phase of the IB actually looks like.

In the Primary Years Programme (PYP), units are built around what's called a structured inquiry cycle. It begins with the teacher explicitly teaching a central idea, not a vague theme, but a specific conceptual framework. An example: "Living things depend on their environments. We call this interdependence." That is not discovery. That is direct instruction. Next, students explore within defined boundaries. They don't investigate "nature"; they investigate "the interdependence of animals and their habitats." The teacher has bounded the scope, provided research protocols, modelled how to ask questions, and given graphic organisers to structure their thinking. Investigations are scaffolded with sentence starters, worked examples of data collection, and explicit success criteria for the final product.

Consider a specific PYP water unit. Does it begin with "Go find out about water"? No. It begins: "Water moves between the atmosphere, the land, and living things through a continuous cycle. We call this the water cycle. Today, we're going to investigate one part of it, evaporation, using this research protocol." [The teacher then models the protocol with a worked example.] This is scaffolded inquiry, not discovery learning. The central idea is taught. The scope is bounded. The process is modelled. Only within these guardrails does exploration happen.

The Middle Years Programme (MYP) operates through criterion-referenced assessment. Students are told explicitly what they must be able to do. A history unit on empires doesn't start with "Discover what an empire is." It starts: "By the end of this unit, you will be able to: define empire, identify three characteristics of imperial systems, analyse primary sources for perspective, and evaluate the impact of empire on colonised societies. Here's what each criterion looks like at level 4, level 3, level 2." This is not discovery. This is clarity. Students know exactly what success means because the teacher has made it visible upfront.

The Diploma Programme is similar. Economics doesn't begin with students discovering supply and demand. It begins with direct instruction. The teacher or seminar leader teaches the theory: "When demand exceeds supply, prices rise. When supply exceeds demand, prices fall." This is explicit, audible teaching. Then comes application: "Now, using this model, explain why energy prices in the UK have surged." This is guided practice. Finally, independent application: "Write an essay explaining a real-world market using supply-and-demand analysis." By the time students work independently, they understand the framework because the teacher taught it first.

Where does constructivism enter? Not in the idea that learners figure things out without guidance, but in the principle that learners apply knowledge to authentic, real-world problems. They build meaning through use, not in abstract drills. In a well-taught IB classroom, a PYP student doesn't just memorise the water cycle; they investigate how water moves through their school garden, making the concept concrete. An MYP historian doesn't just memorise dates; they analyse primary sources, reconstructing how colonialism changed lived experience. A DP economist doesn't just solve practice problems; they explain real financial crises. That is constructivism done right: knowledge applied in context, which deepens understanding.

None of this is discovery learning. All of it respects cognitive load theory. IB explicitly combines direct instruction (concepts, frameworks, processes taught outright) with guided application (students practise within structured, scaffolded contexts). The confusion arises because IB emphasises inquiry, and "inquiry" has become a code word for discovery learning in UK education discourse. But inquiry (asking questions, investigating, analysing) only works when it's guided. IB, at its best, provides that guidance at every step.

In 2006, Paul Kirschner, John Sweller, and Richard Clark published a landmark paper titled "Why Minimal Guidance During Instruction Does Not Work." It became the rallying cry for direct instruction advocates and a weapon wielded against any teaching that involved student inquiry (Kirschner, Sweller, & Clark, 2006). The paper's finding was clear: unscaffolded, minimally guided discovery learning produces weaker learning outcomes than explicit instruction.

The problem is what happened next. The paper got cited as evidence that all inquiry-based learning is ineffective. IB, being inquiry-based, got tarred with the same brush. Leadership teams read headlines like "Constructivist Learning Doesn't Work" and assumed that meant IB doesn't work.

But Kirschner, Sweller, and Clark said no such thing. They specifically criticised minimal guidance. They didn't evaluate IB, which is not minimally guided. They didn't evaluate scaffolded inquiry. They evaluated unguided discovery, the kind where a teacher says, "Investigate renewable energy" and disappears, leaving learners confused about where to start.

A year later, Hmelo-Silver, Duncan, and Chinn (2007) published a direct response to Kirschner et al. in the same journal. Their review examined guided inquiry and problem-based learning, the kind with explicit instruction, worked examples, and scaffolding. The reported effect sizes were high: 0.63 to 0.70, comparable to or exceeding direct instruction alone. The key variable wasn't whether inquiry happened; it was whether the inquiry was guided.

This distinction is everything. Unguided discovery: low effect sizes, cognitive overload. Guided inquiry: high effect sizes, productive cognitive load. IB is guided inquiry, which means the Kirschner critique doesn't apply. The misconception that it does is understandable (both are described as "inquiry-based"), but it's wrong. The evidence that matters is whether scaffolded inquiry works. It does. And that's what IB is built on.

One of cognitive load theory's most useful frameworks is the worked example effect: learners acquire complex skills more efficiently when they study worked examples of how to solve a problem than when they attempt problems without guidance. The effect is robust and well-documented (Sweller, 2011). But worked examples aren't a one-time tool. They're part of a continuum. You provide many worked examples early on. Gradually, you fade them, removing one step at a time, until learners can work independently. This is the scaffolding fading effect.

The IB structure, properly implemented, follows this logic across the 3-to-18 continuum. The younger the learner, the more scaffolding. The older they become, the more autonomy, but the autonomy itself is scaffolded, not abrupt.

In PYP (ages 3 to 12), scaffolding is intensive. Teachers model inquiry processes explicitly. When a unit explores "how living things interact with their environment," the teacher doesn't just assign the investigation; they model how they would break down the big question into smaller, answerable questions. They provide graphic organisers that structure thinking. They supply sentence starters for research notes. They show annotated examples of strong observations versus vague ones. The central idea is taught directly. The skills of investigation (how to observe, record, and compare) are demonstrated. Success criteria are visible from day one. By the time a PYP learner begins independent investigation, they understand the framework, have seen it modelled, and know what quality looks like.

In MYP (ages 11 to 16), scaffolding remains high but shifts slightly. Instead of modelling processes, teachers are explicit about criteria. Rubrics are given at the start of a project, not at the end. Teachers often show exemplars: "This analysis is level 4 because it uses specific evidence, considers multiple perspectives, and explains significance. This one is level 2 because it asserts ideas without supporting evidence." Students now understand not just the task but what quality means. Additionally, MYP makes skill teaching explicit. The Approaches to Learning (ATL) framework names five skill categories: communication, social, self-management, research, and thinking. These aren't implicitly acquired through projects; they're taught directly. A teacher might model critical thinking: "When I read this source, here's what I ask myself: Who wrote this? What's their bias? What's the time period? What would they not say?" This is scaffolded inquiry with visible thinking processes.

In DP (ages 16 to 19), the pattern is similar. Content is taught explicitly in seminars and lectures. Theory precedes application. Students learn economics, then apply it to analyse real markets. They study historical methodology, then conduct historical investigations. By this point, learners may have spent a decade with IB's scaffolding, so the level of explicit instruction might look lighter, but theory is still foregrounded. The difference is not that working memory itself has grown; it is that years of systematic development have built schemas in long-term memory, and those schemas lighten the load on working memory. What would be cognitive overload for a Year 3 PYP learner is manageable for a Year 12 DP learner because the foundational schemas have been built, step by step.

The visual logic is a scaffolding curve that descends gradually from top-left (high support in PYP) to bottom-right (greater autonomy in DP). But critically, the curve is smooth. There's no cliff where scaffolding vanishes. At every stage, explicit teaching and guided practice precede independent application. This is both constructivist (learners build meaning through application) and aligned with CLT (extraneous load is minimised through clear scaffolds and worked examples).

It would be naive to claim that every IB school executes this model well. Some do get it badly wrong, violating CLT principles in the process. These are the moments when Sweller's warnings apply, not to IB's framework, but to IB's implementation.

Under-teaching inquiry procedures in PYP is the first risk. Some teachers treat the inquiry cycle as student-led question formulation without modelling how to formulate questions. The result is that learners waste enormous cognitive effort just trying to figure out how to start. Extraneous load spikes. A teacher corrects this by doing what Sweller prescribes: modelling. "Here's how I'd break down this big question. Notice I'm asking smaller, answerable questions, not vague ones. Here are three strong questions; here's why this one is too broad." After two or three modelled examples, students can usually formulate their own. The procedure is explicit; the inquiry is still guided.

Concept-based teaching without procedural content is a second trap. Some IB schools emphasise conceptual understanding (knowing that water moves through systems) while neglecting procedures (how to calculate evaporation rates or track water through a specific ecosystem). This creates an incomplete schema. Students understand the "what" but not the "how." Cognitive load theory insists both are needed. Procedures support concept learning; concepts make procedures meaningful. A well-scaffolded unit teaches both. "The concept is water interdependence. Here's the procedure for measuring it. Now apply both to your investigation."

Open-ended tasks without explicit criteria are a third area where IB schools stumble. Some PYP exhibitions or MYP projects are assigned with vague success criteria: "Demonstrate your thinking" or "Show what you've learned." The resulting cognitive load is immense because learners are simultaneously learning content and guessing what quality means. Sweller would say the extraneous load is avoidable. Give the rubric first. Make exemplars visible. Break "demonstrate thinking" down into specific, observable actions. Then the task is challenging (high intrinsic load) but not confusing (low extraneous load).

Reducing explicit teaching in DP out of a misguided commitment to student autonomy is the fourth mistake. Some schools reduce seminar time, thinking that more "self-directed learning" is more constructivist. It's not. DP learners still need explicit instruction in theory. What they don't need is recitation drills. But they need lectures, seminars, and direct explanation of complex frameworks. A well-taught DP respects both: teachers teach theory explicitly; students then apply it deeply.

The key difference between a well-taught IB school and a poorly-taught one is scaffolding. High-performing IB schools provide more explicit modelling, clearer criteria, more worked examples, and more frequent checks for understanding, not less. They respect cognitive load theory by removing extraneous confusion so that learners can focus their working memory on genuine learning.

Worked examples are perhaps the best-documented lever in learning science. When a learner studies a step-by-step solution to a problem, then compares it to their own attempts, learning accelerates. The effect size is robust: 0.52 across studies (Rosenshine, 2012). Yet many IB schools, particularly in PYP, significantly under-use worked examples, sometimes in a misguided attempt to prioritise "student discovery."

A worked example in IB doesn't mean the teacher solves the problem for the learner. It means the teacher models the process of approaching the problem. In PYP inquiry, it means the teacher thinks aloud: "I want to investigate how water moves through soil. First, I'd ask myself: What's my testable question? Here's one: 'How fast does water drain through different types of soil?' That's testable because I can actually measure it. Here's another: 'What is soil?' That's not testable because it doesn't lead to investigation. Notice the difference?" After two or three modelled examples, the pattern becomes clear. The process is visible. When learners formulate their own questions, they're not starting from zero; they're imitating a demonstrated pattern.

In MYP, worked examples might look like analysis models. A history teacher shows a strong historical analysis of a primary source: "Notice how this student cites specific evidence. They explain the context: who wrote this, when, and why. They identify bias or perspective. They consider significance: why does this matter? These are the criteria you'll be graded on. Here's a weaker analysis: it mentions the source but doesn't cite specific words. It assumes context rather than explaining it. It doesn't acknowledge perspective. As a result, it's unclear why the source matters." The exemplar makes the criteria concrete. Students see what level 4 looks like before they write.

In DP, worked examples appear in seminar discussions and problem sets. An economics teacher doesn't just tell students, "Supply and demand interact." They show: "Here's a supply curve; here's a demand curve. When they intersect, that's the market price. Now I'm going to walk you through a real case, the UK energy crisis. I'll show you how to use these curves to explain what happened." They model the analytical thinking. Then students apply the same analytical process to a new case they choose.

Rosenshine's principles of instruction (Rosenshine, 2012) emphasise that worked examples should be gradually faded. Show all steps; then omit one step and ask students to fill in the gap; then remove two steps. This is the scaffolding fade. It's explicit, measurable, and it works. Yet many IB schools either skip worked examples entirely (assuming students will figure it out) or fail to fade them (creating dependence rather than independence). A CLT-respecting IB school does both: many worked examples early, deliberately faded over time, allowing learners to gradually assume more independence as their schemas solidify.
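The fading sequence described above can be sketched in a few lines of Python. This is only an illustration, not a prescribed IB or Rosenshine procedure: the function names, the blank marker, and the choice to blank out steps from the end of the example first are all assumptions made for the sketch. The step labels are the water-cycle stages this article uses in its own classroom examples.

```python
# Illustrative sketch only: names and the "blank from the end" order are assumptions.
BLANK = "____ (your turn)"

# Water-cycle stages as named in this article's classroom examples.
WATER_CYCLE_STEPS = ["evaporation", "condensation", "precipitation", "collection"]


def fade_worked_example(steps, faded_count):
    """Return a copy of the example with the last `faded_count` steps left blank."""
    if not 0 <= faded_count <= len(steps):
        raise ValueError("faded_count must be between 0 and the number of steps")
    shown = len(steps) - faded_count
    return [step if i < shown else BLANK for i, step in enumerate(steps)]


def fading_sequence(steps):
    """Build every stage, from the fully worked example to fully independent work."""
    return [fade_worked_example(steps, k) for k in range(len(steps) + 1)]


# Print each stage so a teacher can see how support is withdrawn one step at a time.
for stage, version in enumerate(fading_sequence(WATER_CYCLE_STEPS)):
    print(f"Stage {stage}: " + " -> ".join(version))
```

Stage 0 is the complete worked example; each later stage hands one more step to the learner. In practice a teacher would move to the next stage only once checks for understanding suggest the current one is secure.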

What does CLT-respecting IB look like on a typical Tuesday morning? Here are five concrete moves that teachers can make in PYP, MYP, or DP classrooms to simultaneously honour both IB's constructivist principles and cognitive load theory's scaffolding requirements.

Move 1: Teach the inquiry process explicitly before expecting students to inquire. Don't assume learners know how to ask a good question, locate sources, or synthesise findings. Model it aloud. In a PYP unit on water, the teacher might say: "I need to research how water moves through plants. Here's my thinking: First, I'll break this into smaller questions. 'How does water travel inside a plant?' That's a good question: I can investigate it. 'Are plants important?' That's too vague. 'How does water enter through the roots and exit through leaves?' That's two related questions I can explore together. Notice that I'm specific, not vague. I'm asking about processes I can actually see or measure." After modelling twice more with different topics, the pattern becomes reproducible. Now when students formulate their own questions, they're following a demonstrated model. Extraneous load drops; productive learning increases.

Move 2: Give rubrics and success criteria before the task, not after. In MYP and DP, hand out the grading rubric at the start of the unit, not on the day students submit work. Better yet, show exemplars. "This essay is level 4 because it uses specific evidence, addresses all parts of the question, and explains the significance of each point. This one is level 2 because it makes general statements without backing them up. Here's why the difference matters." Now learners can self-assess drafts. They're not guessing what "good" means while writing; they're aiming at a target they can see. This directly reduces extraneous load and frees working memory for genuine learning.

Move 3: Use concept plus procedure plus practice, not concept alone. Some IB units teach the concept (living things depend on their environment) and assume procedures will follow naturally. They won't, especially for younger learners. Teach all three. Concept: "Water moves between Earth, the atmosphere, and living things." Procedure: "Here's how I'd trace water through one cycle: I'd start with evaporation, label it on a diagram, then track it to condensation, precipitation, and collection." [Model on a worked example.] Practice: "Now you trace water through a different cycle, the one in our school garden." Each layer of learning is scaffolded and then applied. The learner isn't guessing how to use the concept; the procedure makes it concrete.

Move 4: Model your thinking aloud using worked examples. This is Rosenshine's "modelling and guided practice" in action. When you're teaching analysis, whether in history, English, or science, think aloud so learners see the process. "I'm reading this source about the Industrial Revolution. I notice it's written in 1850 by a factory owner. That's important because he'd have a bias toward factories. He calls factory work 'noble labour.' But he wouldn't mention worker injuries, exploitation, or poverty because that would contradict his bias. So I need to read this source critically, recognising its perspective." Then interpret another source the same way, letting students notice the pattern. Now they can apply the process: they analyse their own sources using the same thinking moves they just saw modelled. The analytical schema is now visible. Cognitive load drops; competence grows.

Move 5: Check for understanding frequently using low-stakes feedback. This is explicit in Rosenshine but sometimes underemphasised in IB. Regularly ask: Do you understand? Use quick checks: thumbs up/sideways/down; exit tickets; quick quizzes; think-pair-share. When you see confusion, re-teach that step, not the whole unit. If learners are confused about how to formulate a research question, spend 10 minutes re-modelling. You'll catch extraneous load before it compounds. You'll know whether to fade scaffolds or maintain them. This isn't "teaching to the test"; it's formative assessment as a CLT tool. It keeps learners in the optimal zone: challenged but not overwhelmed.

These five moves don't require choosing between IB and CLT. They integrate both by ensuring that all inquiry is guided, all concepts are procedurally grounded, and all learning happens with enough explicit scaffolding that working memory stays productive rather than overloaded.

John Hattie's synthesis of more than 800 meta-analyses is often cited in UK education policy discussions. His effect size rankings have become influential, sometimes more so than the research itself. When educators reference Hattie, they often cite one fact: direct instruction has an effect size of 0.59, while inquiry-based learning has an effect size of 0.30 to 0.50. The conclusion many draw: direct instruction is better; inquiry is weak.

But Hattie's data is more nuanced, and that nuance matters for IB. Hattie distinguishes between inquiry-based learning (loosely structured student exploration) and guided problem-based learning (structured inquiry with explicit scaffolding). The effect size for guided problem-based learning is 0.63 to 0.70, higher than direct instruction alone and substantially higher than unguided inquiry. The variable that predicts success isn't whether inquiry happens. It's whether the inquiry is guided (Hattie, 2009).

This is the reconciliation that IB advocates often miss when they're defending the model. And it's the reconciliation that CLT advocates often miss when they assume inquiry-based learning is inherently weak. The evidence shows that the type of inquiry matters enormously. Unguided discovery is weak. Guided inquiry, where scaffolding is high, worked examples are provided, and criteria are explicit, is strong. In fact, it's comparable to or better than direct instruction alone because it combines the schema-building benefits of explicit teaching with the application and transfer benefits of meaningful problem-solving.

IB, when taught well, sits in the 0.63 to 0.70 range. A PYP unit where the teacher explicitly teaches the central idea, models the inquiry process, provides graphic organisers, and uses worked examples isn't unguided discovery. It's guided inquiry. It has high effect sizes because the scaffolding is doing the cognitive work that Sweller and Rosenshine identified as essential. The gap between "inquiry-based" as a label and IB-as-actually-practised is where the confusion lies. Well-taught IB isn't unguided discovery. It's the high-effect-size version.
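For readers less familiar with the figures quoted in this section, an effect size of this kind is typically a standardised mean difference: the gap between two groups' average scores divided by their pooled standard deviation (Cohen's d). The sketch below shows the arithmetic. The scores are invented purely for illustration and do not come from Hattie or any study cited here, and the function name is an assumption of this example.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment, control):
    """Standardised mean difference between two groups, using the pooled sample SD."""
    n1, n2 = len(treatment), len(control)
    s1, s2 = stdev(treatment), stdev(control)
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(treatment) - mean(control)) / pooled_sd


# Invented test scores, for illustration only.
guided_inquiry_class = [12, 14, 16]
comparison_class = [10, 12, 14]

print(round(cohens_d(guided_inquiry_class, comparison_class), 2))
```

On this reading, an effect size of 0.6 means the average learner in the intervention group scored about 0.6 standard deviations above the average learner in the comparison group. How far such figures can be compared across very different studies is a separate question.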

For a MAT leader or head considering IB adoption, here's the straight answer: yes, IB is compatible with cognitive load theory, if taught well. And the conditions for teaching it well are surprisingly specific. They're not mysterious. They're not a matter of faith. They're testable, observable, and they align with 40 years of cognitive science research.

Red flags of poorly-taught IB include: minimal direct instruction in PYP (teachers think the inquiry is the teaching); rubrics given at the end, not the start (learners have no scaffolding for success); open-ended projects without worked examples (learners are left guessing how to approach the task); and emphasis on "student choice" at the expense of structure (bounded choice becomes unbounded, and cognitive load soars). If your school is doing these things, you're not honouring either IB or CLT. You're creating confusion.

Green flags of well-taught IB include: explicit teaching of central ideas and concepts before inquiry; clear, pre-task rubrics with exemplars; frequent modelling and worked examples; gradual scaffolding fade as competence grows; and frequent checking for understanding to catch cognitive overload before it compounds. If your school is doing these things, IB and CLT are working together. Learners acquire both knowledge and the ability to apply it meaningfully because explicit instruction has removed the extraneous load that would otherwise prevent learning.

The honest tension between IB and CLT isn't a philosophical conflict. It's a practitioner problem. IB's constructivist roots can, if poorly understood, lead to under-teaching and cognitive overload. But IB's actual framework (its explicit criterion-referenced assessment, its structured inquiry cycles, its emphasis on teaching ATL skills directly) is entirely compatible with cognitive load theory. The best IB schools and the most CLT-respecting schools often look very similar: teachers are visible and active, modelling, scaffolding, making thinking processes explicit. The difference is vocabulary and framing, not pedagogy.

For leadership teams evaluating IB, the question isn't "Should we choose IB or cognitive science?" It's "Do we have the capacity to teach IB well, with high scaffolding, clear criteria, frequent modelling, and genuine checking for understanding?" If yes, IB is a defensible choice from a cognitive science perspective. If no, IB (like any other curriculum) will disappoint. The pedagogy matters far more than the name.
