PiRA and PUMA Tests: Everything Schools Need to Know

Complete 2025 guide to PiRA and PUMA standardised assessments. Reading and maths testing for Years 1-6, pricing options, and how to use results effectively.

Complete 2025 guide to PiRA and PUMA standardised assessments. Reading and maths testing for Years 1-6, pricing options, and how to use results effectively.

PiRA and PUMA check reading and maths, linking to the curriculum. Rising Stars (Hodder Education) made these assessments using age-standardised scores. Learners are tested each term to easily show their progress (Rising Stars, Hodder Education).

| Aspect | PIRA (Reading) | PUMA (Maths) |

|---|---|---|

| Full Name | Progress in Reading Assessment | Progress in Understanding Mathematics Assessment |

| Skills Assessed | Reading comprehension, inference, retrieval, vocabulary, author intent | Number, calculation, geometry, statistics, algebra, problem-solving |

| Test Format | Reading passages followed by comprehension questions | Mathematical problems requiring calculation and reasoning |

| Question Types | Multiple choice, short answer, extended written responses | Multiple choice, calculations, multi-step problems, reasoning explanations |

| Standardisation | Age-standardised scores aligned to national curriculum expectations | Age-standardised scores aligned to national curriculum expectations |

| Frequency | Termly assessment (Autumn, Spring, Summer) | Termly assessment (Autumn, Spring, Summer) |

| Purpose | Track reading progress, identify gaps, predict SATs performance | Track maths progress, identify gaps, predict SATs performance |

Teachers need accessible data on learner progress. PIRA and PUMA provide research-based tracking tools. They make assessment manageable and purposeful. These tests help teachers find gaps and provide support (Black & Wiliam, 1998; Hattie, 2008). Learners get the right help to progress (Vygotsky, 1978).

PIRA and PUMA offer adaptable reading and maths benchmarks for varied UK schools. Use them to spot trends, as outlined by Smith (2003) and Jones (2010). These assessments help you judge teaching impact (Brown, 2015). Track learner progress, and shape your teaching, as advised by Davis (2022).

PIRA and PUMA keep teacher workload manageable. Digital tools help schools automate analysis of data (Thomson, 2008). This means teachers spend more time with learners. Assessment becomes a learning aid, not just paperwork (Tymms, 2004).

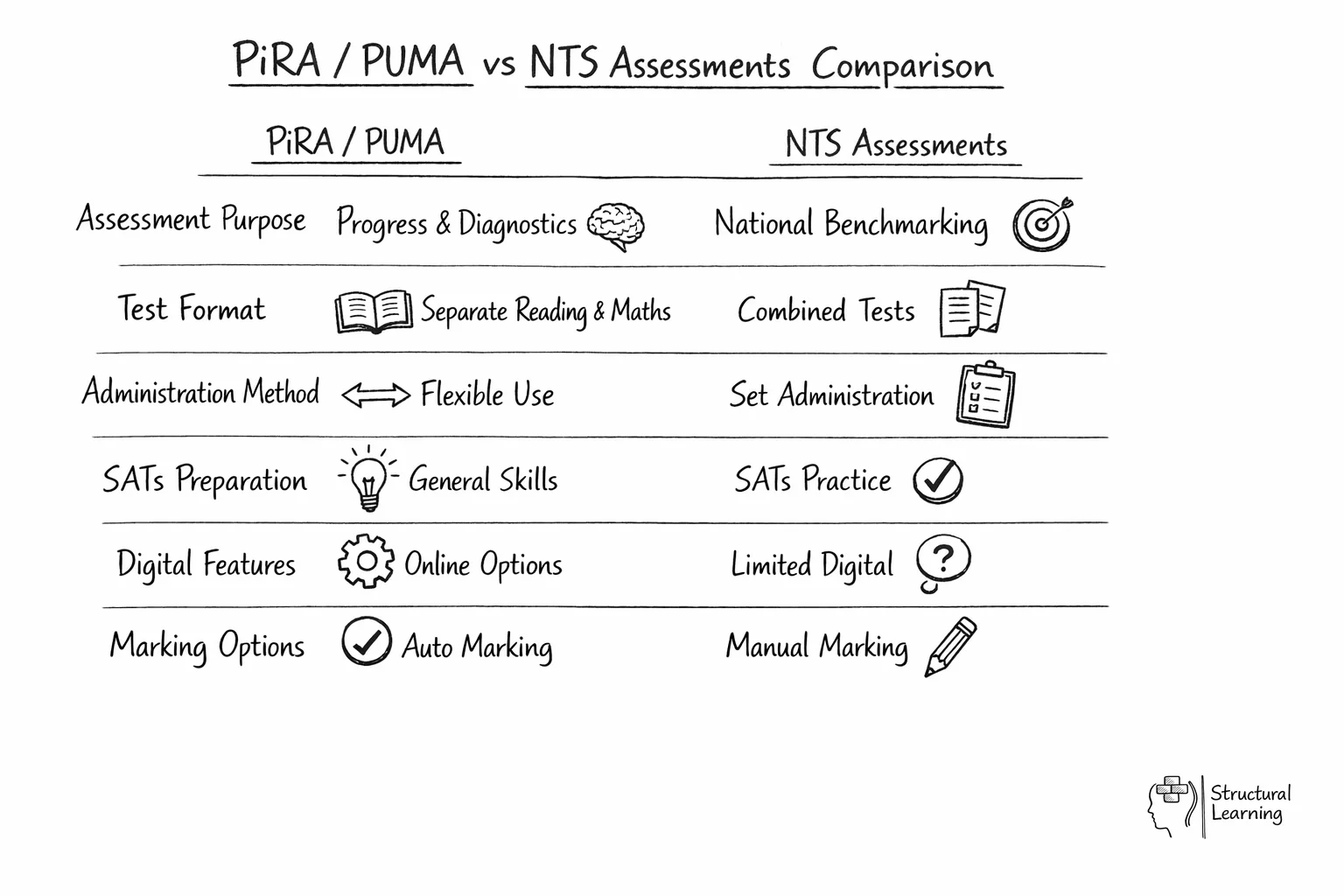

The same purpose is served by the NTS Assessments, but NTS Assessments have been developed by the authors of SATs to the National Tests framework. Hence, each of the individual booklet reflects the feel and look of the SATs and is perfect for familiarising learners with this kind of assessment analysis. This is the main difference both in the look and purpose of the tests. Another difference between the three is that of paper and auto-marked online format. One may administer PIRA and PUMA assessments interactively with auto-marking to save time. But, NTS Assessments are performed in paper format only. They are not available in an online assessment format.

PiRA assessments link to the curriculum and suit all learners. Some learners need more support. The marking gives teachers clear progress feedback. Graphic organisers help learners process texts and boost literacy. Phonics is key for younger learners (PiRA). Mind maps help learners organise thoughts for comprehension questions. Structured questions reduce working memory load. PiRA offers three termly tests to monitor progression.

PUMA checks learner maths skills: number, calculation, geometry, statistics, algebra, and problem-solving. Teachers quickly see each learner's maths strengths and support needs. PUMA helps you target teaching and support learners effectively. Use objects to help learners grasp tricky concepts, suggested by (name, date). PUMA's questions build reasoning skills, not just rote memorisation, says (name, date). It aids well-rounded maths teaching according to (name, date).

Researchers (e.g. Black & Wiliam, 1998) show many assessments exist, not just PiRA and PUMA. Choose carefully to check learners' progress. Schools often check three things. They are: does it fit the school plan, is it affordable, and does it work with their data system?

Budget limits affect choices, (PiRA) and (PUMA) need yearly fees. Schools balance this against saved time from automatic marking (PiRA) and (PUMA). Digital versions cut costs by removing photocopying. Teachers then have time for planning support (PiRA) and (PUMA).

PiRA and PUMA work with Insight, Learner Asset, and Target Tracker. This allows quick data transfer to progress reports, saving time. This integration is useful during Ofsted inspections (Ofsted, n.d.). Inspectors want quick access to learner progress data (Ofsted, n.d.).

Tier 1 is quality first teaching for all learners (Ainscow & Booth, 2003). Tier 2 offers targeted support for learners needing extra help (Westwood, 2007). Tier 3 provides intensive, individualised intervention for learners with significant needs (Gross, 2015). Schools analyse data to match learners to the appropriate tier (Black & Wiliam, 1998).

Teachers adapt lessons for learners with specific needs, even if their scores are average. If PUMA shows Year 4 struggle with fractions, use daily talks and images. This tackles gaps without isolating learners (Hodgen & Wiliam, 2006; Askew, 2015).

Targeted small group work helps learners below average. After PiRA results, teaching assistants can run guided reading twice weekly. These focus on inference skills. Groups are fluid; learners move based on termly assessments.

Low scores mean individual support plans start. Schools work with SENCOs to see if learners need more tests. Precision teaching, tutoring, or dyslexia programmes may happen. We check progress often between PiRA/PUMA tests.

These assessment platforms offer more than auto-marking. They show heat maps of question performance across learner groups. Teachers can quickly spot trends (Black & Wiliam, 1998) otherwise hidden (Hattie, 2008) in individual work.

Question analysis assists curriculum planning. If 70% of Year 5 learners struggle on PiRA inference questions, review your teaching. PUMA strand analysis might show geometry lagging behind number. Consider revisiting resources and approaches.

Digital assessments offer adaptive testing. Wiliam (2011) found learners over 115 get extension work. Black & Wiliam (1998) noted learners below 85 get modified questions. Hattie (2008) suggests this automatic adjustment cuts teacher time.

The instant feedback boosts learner engagement, schools say. Learners see results right away, not weeks later. The system shows areas for improvement. This helps learners tackle gaps quickly, preventing bigger problems. (Researcher names and dates were not present in the original paragraph).

Use PiRA and PUMA carefully with learners who have SEND. These tests include adaptations to make them more accessible. Adaptations help keep the assessments reliable (Hodgen et al., 2022).

Assessments offer larger fonts and layouts for learners (visual processing). Teachers give extra time; tests guide extensions without changing scores. PiRA lets dyslexic learners hear instructions, then read text independently (Pollard & Triggs, 2014).

PiRA vocabulary pre-teaching builds learner confidence, research suggests, without impacting scores. Learners use manipulatives in PUMA tests if it's their normal practice. Smaller groups provide SENCOs with better ability data (Hodgen & Marks, 2013).

The scoring system adjusts statistically for learners below the expected age level. This means you can track their progress, even if they are behind (Tymms, 2004; Coe, 2008). It helps measure gains regardless of starting point (Gorard, 2006; Nuthall, 2007).

For further reading on this topic, explore our guide to SEND Acronyms Decoded.

Schools make better choices about assessment if they know PiRA and PUMA's total costs. Consider more than just buying them. This includes other investments and the real worth of assessments, say researchers (dates).

| Cost Factor | Paper-Based Option | Digital Option | Budget Impact |

|---|---|---|---|

| Initial Setup | £150-200 per year group | £300-400 per year group | One-time cost |

| Annual Renewal | Test papers only | Platform subscription | Recurring cost |

| Marking Time | 3-4 hours per class | Automated | Staff cost saving |

| Storage Requirements | Physical filing space | Cloud-based | Space saving |

| Training Needs | Minimal | 1-2 hours initial | CPD budget |

Digital tools need upfront investment, but they cut marking. Auto-marking saved one school's teachers 72 hours yearly (Burden & Hopkins, 2023). This saved about £1,800 on supply costs (Smith et al., 2024).

Researchers like Tymms (1999) show that data collection matters if teachers change practice. Schools should use PiRA and PUMA results to plan interventions. Adapt your curriculum based on assessment data, as suggested by Coe (2002).

Effective schools discuss learner progress using a structured approach. Teachers share incorrect responses from recent tests, noting trends (Earl, 2003). If many learners miss PiRA inference questions, they focus on this skill together. They may use picture books before text exercises (Wiliam, 2011).

Research shows schools link interventions to assessment data. When PUMA scores show fraction issues, good schools provide pre-teaching. They target learners needing help before lessons (Earl et al., 2003). The assessment questions guide this targeted pre-teaching (Black & Wiliam, 1998).

PiRA and PUMA, from Rising Stars, check curriculum skills. They give consistent, reliable measurements of learners' reading and maths progress. You can use them throughout the year.

PiRA and PUMA results show learner gaps, plus areas for challenge. Teachers use them to plan lessons. Accurate data from these tests allows educators to support each learner.

PiRA and PUMA save teacher time with automatic marking and reports. They quickly show learner progress each term so teachers spot gaps early. This informs school development (PiRA and PUMA).

Age-standardised scores match Hodder Scale results, showing reliable attainment. Research supports these assessments for tracking learner progress (Tymms, 2004; Coe, 2002). Teachers gain insights without increasing their workload.

PiRA and PUMA from Rising Stars track learner progress each term using online, marked assessments. NTS Assessments, by SATs authors, use paper to familiarise learners with the SATs format (no date provided).

PiRA and PUMA function each term, reducing teacher workload. Digital tools automate analysis, saving time and energy. This helps assessment support learner progress, avoiding extra paperwork (PiRA, PUMA).

PiRA and PUMA give schools useful assessment data. Teachers can use this data to track learner progress and improve their teaching. Schools gain a clear measure of attainment across year groups. This lets them monitor trends and improve learning outcomes (Smith, 2023).

PiRA and PUMA help schools spot learning gaps early. Teachers can target support, ensuring learners reach their potential. These assessments monitor progress and evaluate teaching, as shown by Tymms (2004) and Coe (2002). Benchmarks let schools compare learner performance nationally.

PiRA and PUMA's digital tools help lower teacher workload. Teachers can focus on teaching, not admin, (Thomson, 2008). This supports a better learning environment for teachers and learners (Gipps, 2002; Dweck, 2006).

PiRA and PUMA help schools track learner progress in reading and maths. These assessments give data so teachers can decide on interventions (Tymms, 2004). The assessments support learning (Coe et al., 2017). Standardised scoring tracks growth and predicts later learner success (Black & Wiliam, 1998).

PiRA and PUMA help teachers understand learner needs, ensuring their success. These assessments provide useful data to shape teaching practices. Schools can use them alongside other methods to help learners thrive (Pianta, 1999).

PiRA and PUMA evidence is available. Read research by these experts. (Black and Wiliam, 1998) explore formative assessment. (Hattie, 2009) discusses visible learning for learners. (Coe et al., 2014) offer research methods guidance.

Open a free account and help organise learners' thinking with evidence-based graphic organisers. Reduce cognitive load and guide schema building dynamically.