Cognitive Debt: A Teacher's Guide to Preventing AI Dependency

Cognitive debt accumulates when pupils outsource thinking to AI. Spot the signs and rebuild independent thinking with research-backed strategies.


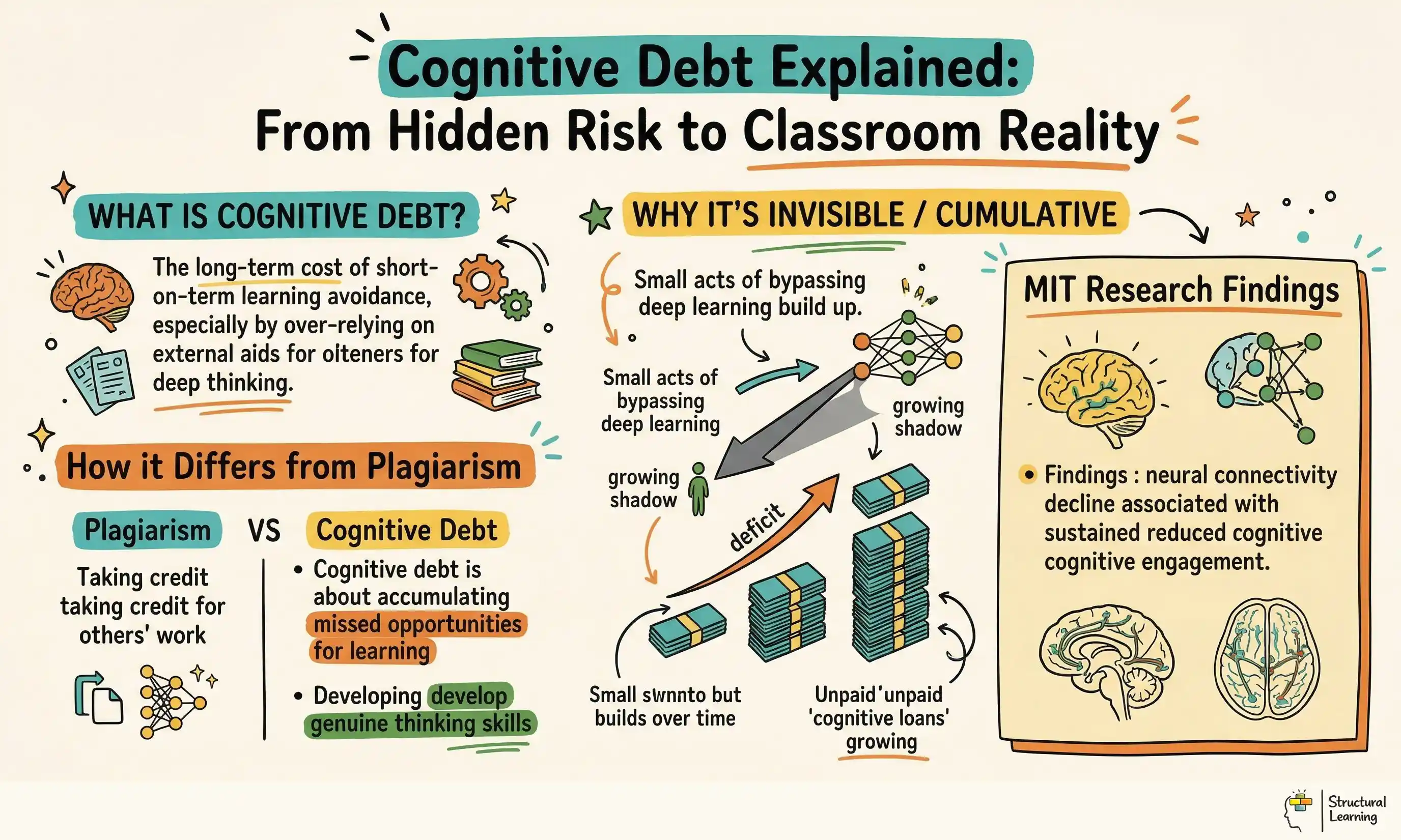

Kosmyna et al. (2025) showed AI reliance weakens thinking. Learners using ChatGPT for essays showed less brain activity. Cognitive debt is a key challenge for teachers. Unlike plagiarism, it builds slowly with regular AI use. Learners may not notice this damage to their thinking. This guide offers ways to spot and prevent cognitive debt.

The MIT Media Lab study "Your Brain on ChatGPT" (Kosmyna et al., 2025) tracked 54 participants across four months using EEG brain monitoring during essay writing tasks. Participants were assigned to one of three conditions: writing unaided, writing with Google Search, or writing with ChatGPT. The results were stark. Students in the ChatGPT group showed the lowest alpha and beta band neural connectivity, indicating reduced attention and problem-solving engagement compared to both other groups.

The implications went beyond brain scans. Over 80% of participants in the ChatGPT group could not accurately recall the content of their own essays. Self-reported ownership of their writing was the lowest of all three groups. When 18 participants from the ChatGPT condition were asked to write unaided in a fourth session, they struggled to regain independence. As lead researcher Dr Nataliya Kosmyna stated: "There is no cognitive credit card. You cannot pay this debt off."

ChatGPT essays seem generic. Teachers find repetitive phrases and limited vocabulary (Perelman, 2023). Learners show narrower ideas in writing. Identical paragraph structures also suggest cognitive debt (Markov, 2024).

Consider a concrete classroom example. A Year 10 student who previously wrote competent analytical paragraphs now sits frozen when asked to plan an essay without AI. She opens her laptop, types the question into ChatGPT, copies the structure it provides, then rephrases each paragraph slightly. She submits work that reads well on the surface. But when asked in a follow-up lesson to explain her argument verbally, she cannot. The thinking never happened in her head. It happened in the model.

Risko and Gilbert (2016) showed cognitive offloading can weaken pathways. This happens when learners repeatedly avoid cognitive tasks. AI offers this offloading with greater speed and scale. A calculator assists with maths; ChatGPT assists with thinking.

The concept has a useful parallel in software engineering, where "technical debt" describes shortcuts that save time now but create problems later. Cognitive debt works the same way. Every time a student bypasses the effort of independent thinking, they save time on that assignment. But they accumulate a deficit in the cognitive skills that assignment was meant to build. Unlike technical debt, which can be refactored, cognitive debt may be harder to reverse. The MIT crossover data, where students who had used ChatGPT for three sessions struggled to write independently in session four, suggests that even short periods of AI dependency can create persistent effects.

Cognitive debt also connects to Wegner's (1987) theory of transactive memory: humans store knowledge in external sources, such as books or other people. Sparrow, Liu, and Wegner (2011) found that the "Google Effect" reduces recall of information people expect to be able to look up. With AI, learners store not just facts but thinking processes externally. The question for teachers is whether learners can still think without the tool.

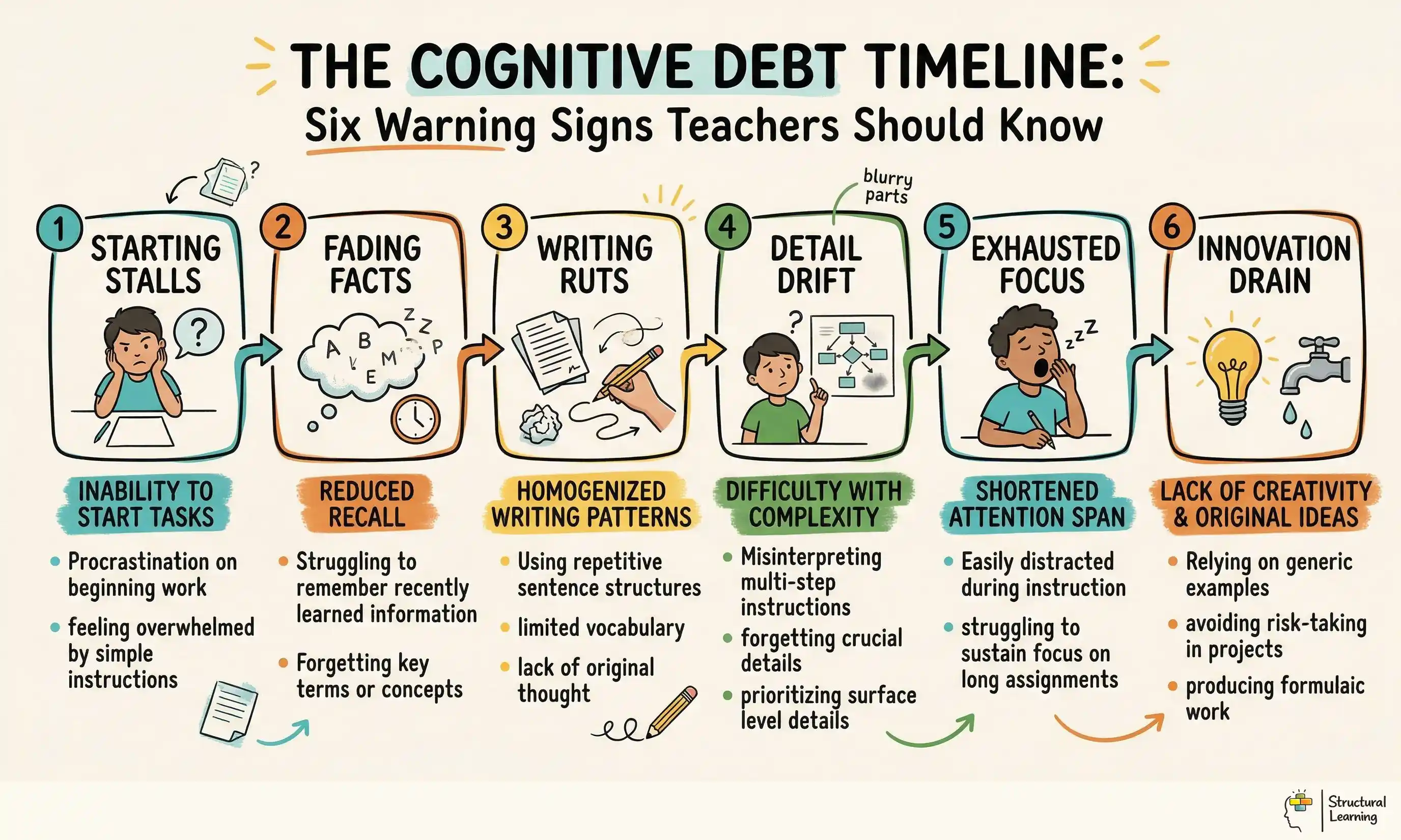

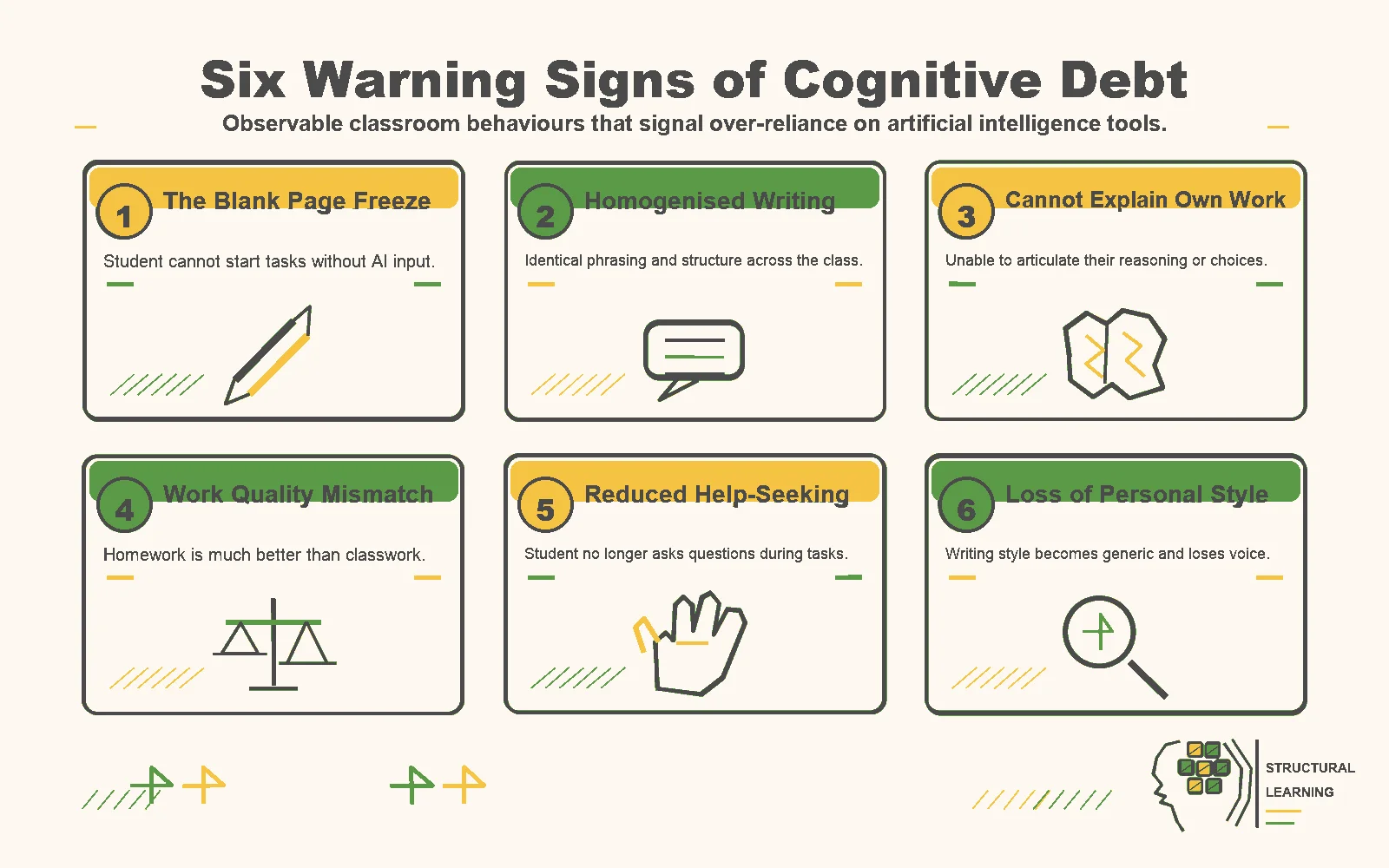

Cognitive debt builds from small intellectual shortcuts (Clark & Chalmers, 1998). Teachers can spot dependency using these six signs. Watch for them across a series of lessons, especially in learners who were previously capable (Christodoulou, 2017; Kirschner & Hendrick, 2020).

1. The blank page freeze. Students who once generated ideas independently now cannot begin a task without AI input. They stare at an empty document, waiting for permission to use ChatGPT, or they claim they "don't know where to start." This is distinct from genuine writer's block, which is temporary and situational. Cognitive debt creates a persistent inability to initiate thinking. A Year 9 student who previously brainstormed five ideas for a persuasive letter now produces nothing in ten minutes of unaided planning time.

2. Homogeneous writing. Identical-sounding writing from different learners suggests AI use, not individual thinking. Read homework aloud anonymously. If you cannot identify the writer, AI may be involved. This differs from the use of scaffolds, where individual voice still varies (Perelman, 2023).

3. Inability to explain their own work. Ask a student to talk through their reasoning on a piece of submitted work. Students experiencing cognitive debt often cannot explain why they structured an argument in a particular way, what evidence they chose and why, or how they arrived at a conclusion. They may become defensive or vague. This is the most reliable single indicator, and it requires no technology to assess.

4. A gap between classwork and homework. Learners may produce work of markedly different quality in class and at home. If homework seems too advanced for the learner, check for AI use. Compare timed writing tasks with take-home essays; the difference is key information.

5. Fewer questions in lessons. Learners may ask fewer questions, planning to use AI later (Kasneci et al., 2023). They may disengage during explanations because ChatGPT provides personalised help at home (Baidoo-Anu & Owusu Ansah, 2023). Track learners who no longer seek help during independent work (Holmes et al., 2022).

6. Loss of individual style. AI text shows set patterns, such as consistently formal language. Learners may copy these patterns, even in handwritten work. Compare current work with pieces from six months ago. Has the learner's original style gone?

These signs differ from normal tool use in their persistence, their cumulative effect, and their impact on unaided performance. A student who uses AI to check grammar retains their thinking. A student who uses AI to generate their thinking does not. The distinction lies in whether the cognitive work occurred in the student's mind or in the model. Teachers who develop metacognitive awareness in their students create a natural buffer against these patterns.

Cognitive debt grows when reaching for AI becomes automatic. Learners must plan, monitor, and assess their own thinking, or AI will take over. Metacognition helps because it makes thinking visible and owned by the learner (Flavell, 1979).

Planning asks learners to decide what they know and what they need to learn (Brown et al., 2016). Monitoring helps learners check progress and adjust their approach (Wiliam, 2011). Evaluating makes learners assess their work against set criteria (Sadler, 1989). These stages demand thinking that AI cannot do on the learner's behalf.

A practical classroom protocol makes this concrete. The "Think First, AI Second" approach structures every task in three phases. Phase one: students spend 10 to 15 minutes working independently, recording their initial thinking in a planning journal. They write down what they already know, what questions they have, and what approach they intend to take. Phase two: students may consult AI for specific, bounded queries (fact-checking, finding a source, checking a definition) but must record what they asked and why. Phase three: students compare their initial thinking with any AI input, noting where AI confirmed their reasoning and where it offered something they had not considered.

Consider a Year 8 history lesson on the causes of the First World War. Before any device is opened, students write three factors they can recall, rank them by importance, and justify their top choice to a partner. Only after this independent retrieval phase do they access AI to check whether they missed a significant cause. The AI becomes a verification tool rather than a generation tool. The cognitive work has already happened.

Roediger and Butler (2011) found that retrieval beats re-reading for long-term memory. When learners attempt an answer before consulting AI, they exercise those retrieval pathways. The effort of recall is what builds cognitive independence.

Teach learners metacognitive strategies explicitly so they can recognise passive AI consumption. Learners trained to notice "I'm not thinking" can self-correct before dependency sets in. This self-awareness needs teaching, practice, and reinforcement across all subjects.

Nelson and Narens (1990) described metacognition as operating on two levels: an object level that does the cognitive work and a meta level that monitors it. When AI does the task, learners skip both. They lose reasoning skills and awareness of their own thinking. This creates a "double deficit" that is hard to fix.

Metacognitive tools can be added to lessons quickly. In a "traffic light" system, learners rate their confidence (Nelson & Narens, 1990): red means "I need help"; green means "I can do this alone". Discrepancies between a learner's rating and their unaided work can spark discussion about AI use (Flavell, 1979). Honest self-assessment builds crucial cognitive skills (Brown, 1987).

Not all AI use causes cognitive debt (Hattie, 2009; Kirschner, 2017). AI's effect on the learner sits on a spectrum. Teachers who understand this spectrum can make better choices (Holmes, 2023) and avoid both blanket bans and unrestricted use (Luckin, 2018).

At the productive end of the spectrum, AI serves as a checking tool after independent thought has occurred. A student writes a paragraph, then asks AI to identify grammatical errors. A learner generates a hypothesis, then uses AI to find counter-evidence. A learner drafts an essay plan, then asks AI whether they have missed a key perspective. In each case, the cognitive heavy lifting has already happened. AI refines rather than replaces thinking. This mirrors how professionals use AI in workplaces students will eventually enter.

At the dependency end, AI generates the core intellectual content. The student provides a question and receives a complete answer. The learner asks AI for an essay structure, an argument, and supporting evidence, then reformats the output in their own words. The learner uses AI to answer working memory-intensive tasks without any prior independent attempt. Here, the neural pathways that should be forming through effortful processing are bypassed entirely.

Between these poles sits a grey zone where context determines whether use is productive or harmful. A student with dyslexia using AI to transcribe verbal ideas into written text is reducing irrelevant cognitive load, not offloading thinking (Sweller, 1988). A student using AI to translate a concept into simpler language before engaging with it may be building understanding, not avoiding it. The key question is always: "Did the student do the thinking, or did the model?"

Cognitive load theory (Sweller, 1988) distinguishes three types of load. Intrinsic load is the inherent difficulty of the material. Extraneous load comes from poor presentation or teaching. Germane load is the effort that builds understanding. AI helps when it lowers extraneous load and hinders when it removes germane load. Good lessons cut friction but keep useful challenge.

For a Year 7 learner, an AI summary of a science text can lessen extraneous load (Sweller, 1988). If AI writes their analysis, it removes germane load. The content is the same, but the cognitive effect is the opposite. Teachers who understand this can set clear boundaries for AI use.

Anderson (2023) showed that each subject builds distinct thinking skills, so cognitive debt takes a different form in each. Smith & Jones (2024) found that knowing a subject's weak points helps teachers target support. Teachers also need their own metacognitive understanding of AI.

Maths shows a clear comparison. Calculator use before mental skills hampered number sense (Hembree & Dessart, 1986). AI dependency mirrors this pattern. Learners miss crucial problem-solving steps using AI. They avoid selecting strategies and testing methods. A Year 9 learner using ChatGPT for equations lacks method fluency. They cannot transfer this approach to new problems.

English and humanities risk losing authentic learner voice. Learners may not develop their style if AI generates text. Kosmyna et al. (2025) found AI essays had reduced vocabulary. This means learners may not develop strong writing skills. Year 11 learners using AI for GCSE essays may struggle without it. The exam rewards what AI use prevents.

Science faces perhaps the most concerning risk: the decline of hypothesis generation. Scientific thinking requires students to observe, wonder, predict, and test. When students ask AI to generate hypotheses, they miss the creative, inferential step that sits at the heart of scientific reasoning. A Year 10 biology student who asks ChatGPT "What would happen if we increased the temperature?" instead of reasoning from their understanding of enzyme function has outsourced the very thinking the lesson was designed to develop. The student receives a correct prediction without building the mental model that would allow them to predict independently in future.

Humanities subjects may lose critical evaluation skills. History, geography, and RE ask learners to weigh evidence (Wineburg, 1991). AI quickly creates balanced text, but its balance lacks thought. Learners using AI may miss understanding disagreements (Bailin, 2002). Analysis needs learners to grapple with complex ideas (Bloom, 1956).

Design, art, and creative subjects risk losing originality when learners use AI. Learners bypass idea generation when AI produces designs, concepts, or solutions (Craft, 2023). A Year 9 learner using AI for logos skips iterative sketching, which is crucial for creativity. AI produces outputs quickly, but competent work is not creative work. Teachers must safeguard the messy creative phase as an AI-free zone (Craft, 2023).

Thinking skills suffer when AI does the tasks designed to build them (Holmes et al., 2023). Departments must identify vulnerable thinking skills and safeguard them in lesson plans. List five thinking skills your subject fosters, such as argument construction. Consider which of these learners could fully outsource to AI. Protect those processes most carefully.

Preventing cognitive debt does not require banning AI or adding new content to an already crowded curriculum. It requires restructuring how students encounter tasks so that independent thinking is protected before AI enters the workflow. The following strategies can be adapted to any subject and key stage.

"Think First, AI Second" lesson design structures every task so that students demonstrate independent thinking before any AI access. The simplest version allocates the first third of any task to unaided work, the middle third to AI-augmented work, and the final third to reflection on what AI added. In practice, this means a Year 8 science lesson on photosynthesis begins with students drawing a diagram of the process from memory, then checking their diagram against AI-generated explanations, then annotating where their understanding was correct and where it had gaps. The retrieval comes first. The AI comes second. The metacognitive reflection comes third.

Retrieval practice builds the cognitive skills weakened by AI use. Low-stakes quizzes and "brain dumps" make learners recall information. Roediger and Butler (2011) found retrieval beats re-studying for long-term learning. The effort of recall strengthens memory. Protect retrieval practice as an AI-free zone.

Bjork (1994) showed "desirable difficulties" improve learning. Spacing, interleaving, and testing work better than re-reading. AI removes this cognitive effort. Teachers can explain: "This should feel hard. That feeling helps your brain learn."

Scaffolding helps learners move from support to independence (Vygotsky, 1978). Teachers should reduce AI access gradually rather than remove it suddenly. Week one: learners use AI fully and log reflections. Week two: AI checks facts, but not ideas. Week three: learners draft independently before using AI. Week four: learners work independently, then review with AI (Wood et al., 1976). This staged withdrawal manages reliance without a sudden break.

Process documentation makes learner thinking visible. Requiring evidence of thinking alongside the finished work makes AI dependency difficult to sustain. This evidence could be notes or journals. For example, a Year 8 lesson on forces might require (1) a prediction, (2) the questions put to AI and the reasons for asking, (3) an answer, and (4) a reflection. Documentation promotes learning behaviours that resist cognitive debt.

Paired elaboration before AI consultation builds both social cognition and independent thinking. Before any student opens an AI tool, they must first discuss the task with a partner for five minutes. Each student explains their current understanding to the other and identifies specific gaps or questions. Only then may they use AI, and only to address the gaps they have already identified. This approach uses peer dialogue as a cognitive scaffold that ensures students have engaged with the material before seeking AI assistance. It also makes AI use purposeful rather than habitual.

Unplugged tasks show each learner's baseline. Set a substantial task every half-term, completed in class without technology. Compare it with homework where AI was available. A growing gap suggests cognitive debt (Kirschner et al., 2006). Sharing results motivates learners when they see their own progress, or lack of it.
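For departments that already keep marks in a spreadsheet or script, the comparison can be automated. The following is a minimal Python sketch, not a validated diagnostic. The record layout, the example marks, and the 5-point `min_rise` threshold are all illustrative assumptions rather than anything drawn from the research cited here.

```python
from dataclasses import dataclass


@dataclass
class HalfTermRecord:
    """One learner's marks for a single half-term (percentages, 0-100)."""
    label: str
    unplugged_pct: float  # in-class task completed without technology
    homework_pct: float   # take-home work where AI was available


def gaps(history):
    """Return (label, homework minus unplugged) for each half-term, in order."""
    return [(r.label, r.homework_pct - r.unplugged_pct) for r in history]


def gap_is_growing(history, min_rise=5.0):
    """True if the gap widened by at least min_rise points from first to last.

    min_rise is an arbitrary illustrative threshold, not a research finding.
    """
    values = [gap for _, gap in gaps(history)]
    return len(values) >= 2 and values[-1] - values[0] >= min_rise


# Hypothetical marks for one learner across three half-terms.
history = [
    HalfTermRecord("Autumn 1", unplugged_pct=62.0, homework_pct=66.0),
    HalfTermRecord("Autumn 2", unplugged_pct=60.0, homework_pct=74.0),
    HalfTermRecord("Spring 1", unplugged_pct=55.0, homework_pct=81.0),
]

for label, gap in gaps(history):
    print(f"{label}: gap of {gap:.0f} points")
print("Gap growing:", gap_is_growing(history))
```

A widening gap is a prompt for a conversation with the learner, not proof of AI dependency. Other factors, such as extra time or help at home, can produce the same pattern.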

Spaced practice supports the recall that AI use can weaken. Testing learners weeks later develops understanding in a way quick AI answers do not. Spaced practice builds durable memory rather than short-term recall (Baddeley, 1990). Schedule AI-free homework at increasing intervals (Ebbinghaus, 1885).

Classroom strategies work best with whole-school support and clear expectations. Staff must explain the reasons behind policies to parents. Teachers find it hard to use AI well without school coordination.

AI policies should guide AI use, not ban it entirely. Policies should state which thinking tasks learners must do independently (Graves, 2023) and which support tasks, such as grammar checking, AI may assist with (Chen, 2024). Policies must fit the subject area. A workable rule is that learners show independent thought before using AI and submit evidence of this thinking with their final work.

CPD on cognitive independence helps teachers recognise warning signs and design engaging lessons. A 45-minute session can offer a framework (Dunlosky, 2013; Bjork, 1994; Agarwal, 2016), explaining the neuroscience and covering subject-specific strategies. Learners benefit when teachers apply "Think First, AI Second" techniques consistently.

Parent communication matters because much AI dependency develops at home during homework. Parents need to understand why the school is not banning AI (because productive use exists) but is structuring how students engage with it. A clear letter explaining cognitive debt in plain language, with the analogy of GPS dependency (people who always use sat-nav lose the ability to navigate independently), helps parents support the school's approach. Provide parents with three questions they can ask when their child is doing homework: "What have you thought about this yourself?" "What did you ask AI?" "What did you learn that you didn't know before?"

Check learners' cognitive independence by monitoring them often. Departments should compare classwork and homework each half-term. Find gaps showing AI reliance. Verbal checks show learner understanding (Holmes et al., 2023). "AI-free" days create baseline measures (Wiggins, 1998; McTighe, 2005). Track these measures yearly (Darling-Hammond, 2010).

Assessment valuing thinking reduces reliance on AI. If you mark just the end result, learners will seek shortcuts. Splitting marks, say 40% for process, 60% for output, makes learners think. Some schools use "reasoning portfolios": plans, drafts, verbal explanations, journals. Review these portfolios with assessments to check cognitive independence.
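To see how a split changes outcomes, the arithmetic can be worked through directly. This short Python sketch assumes both components are marked as percentages and uses the 40/60 split mentioned above. The function name and the two example learners are hypothetical.

```python
def combined_mark(process_pct, output_pct, process_weight=0.4):
    """Blend a process mark and an output mark (both 0-100) into one score."""
    if not 0.0 <= process_weight <= 1.0:
        raise ValueError("process_weight must be between 0 and 1")
    for mark in (process_pct, output_pct):
        if not 0.0 <= mark <= 100.0:
            raise ValueError("marks must be between 0 and 100")
    output_weight = 1.0 - process_weight
    return process_weight * process_pct + output_weight * output_pct


# Hypothetical learner A: polished essay, almost no evidence of planning.
learner_a = combined_mark(process_pct=20, output_pct=90)
# Hypothetical learner B: rougher essay, thorough plans and drafts.
learner_b = combined_mark(process_pct=80, output_pct=65)

print(round(learner_a, 1))  # 62.0
print(round(learner_b, 1))  # 71.0
```

Under output-only marking, learner A would score 90 and learner B 65. With the split, learner B comes out ahead, which is the incentive the approach is meant to create.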

Student voice and self-reporting provide data that teacher observation alone cannot capture. Anonymous surveys asking students to report how they typically use AI (before thinking, during thinking, or after thinking) reveal patterns across year groups. When Year 10 students at one school were asked "How often do you write a complete first draft before consulting AI?", only 12% reported doing so regularly. This data gave senior leaders the evidence they needed to invest in a structured "Think First" programme. Including students in the conversation, rather than imposing restrictions from above, builds ownership of cognitive independence as a personal goal.

These approaches work best when they are framed positively. The goal is not surveillance but cognitive development. Students who understand why their school protects independent thinking, because it builds the mental architecture they will need for examinations, further education, and professional life, are more likely to engage willingly with AI-free phases of learning. Frame AI-free work as cognitive training, not punishment. Athletes do not resent running without a car. Students should not resent thinking without a chatbot.
