AI Dual Coding: Generating Visual Learning Resources That Reduce Cognitive Load

Learn how to use AI dual coding to create visual learning resources that reduce cognitive load, with exact prompts for generating minimalist, SEND-accessible classroom graphics.

Infographic for teachers: "Dual Coding vs. Standard AI Images: What's the Difference?"

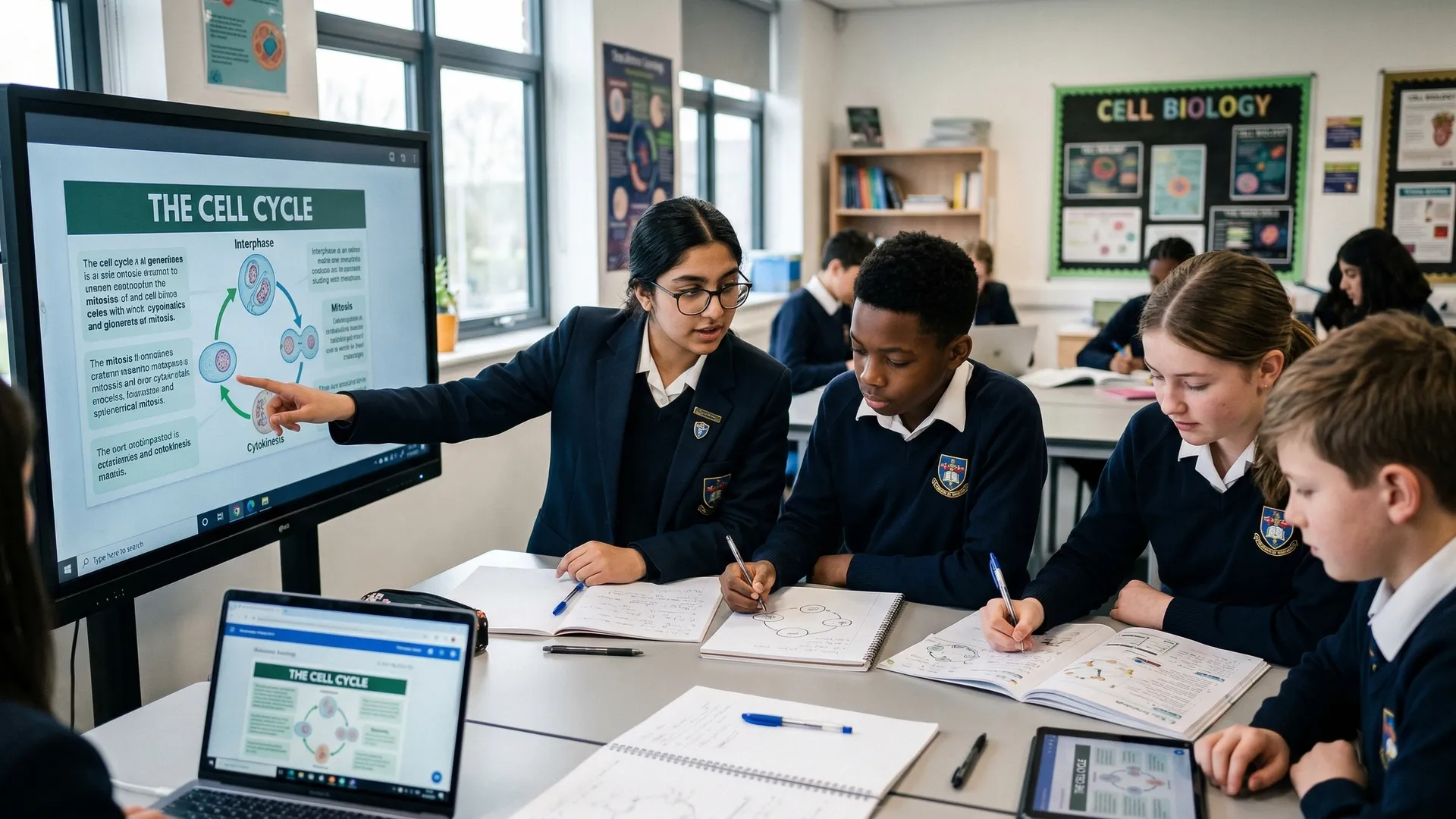

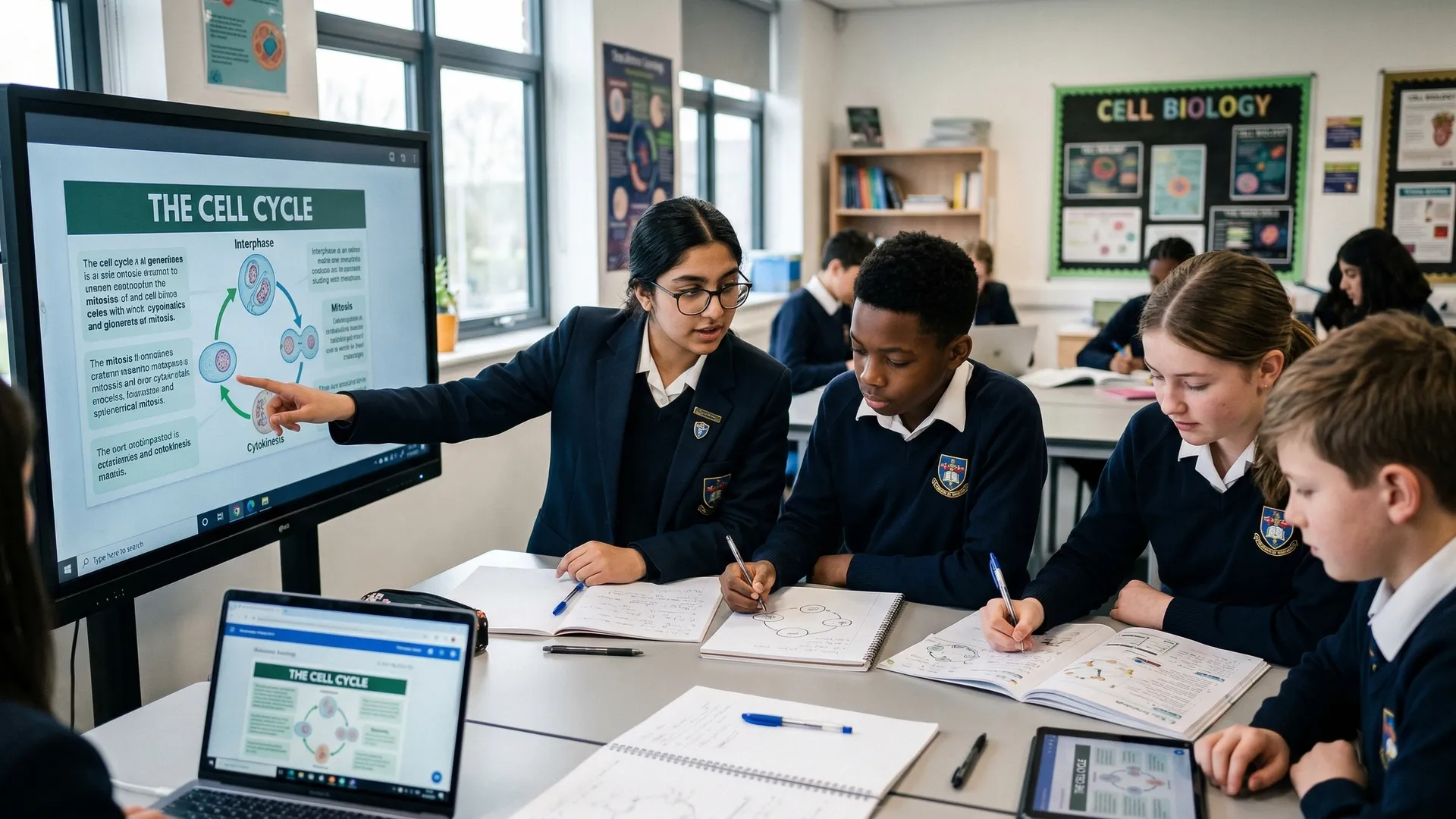

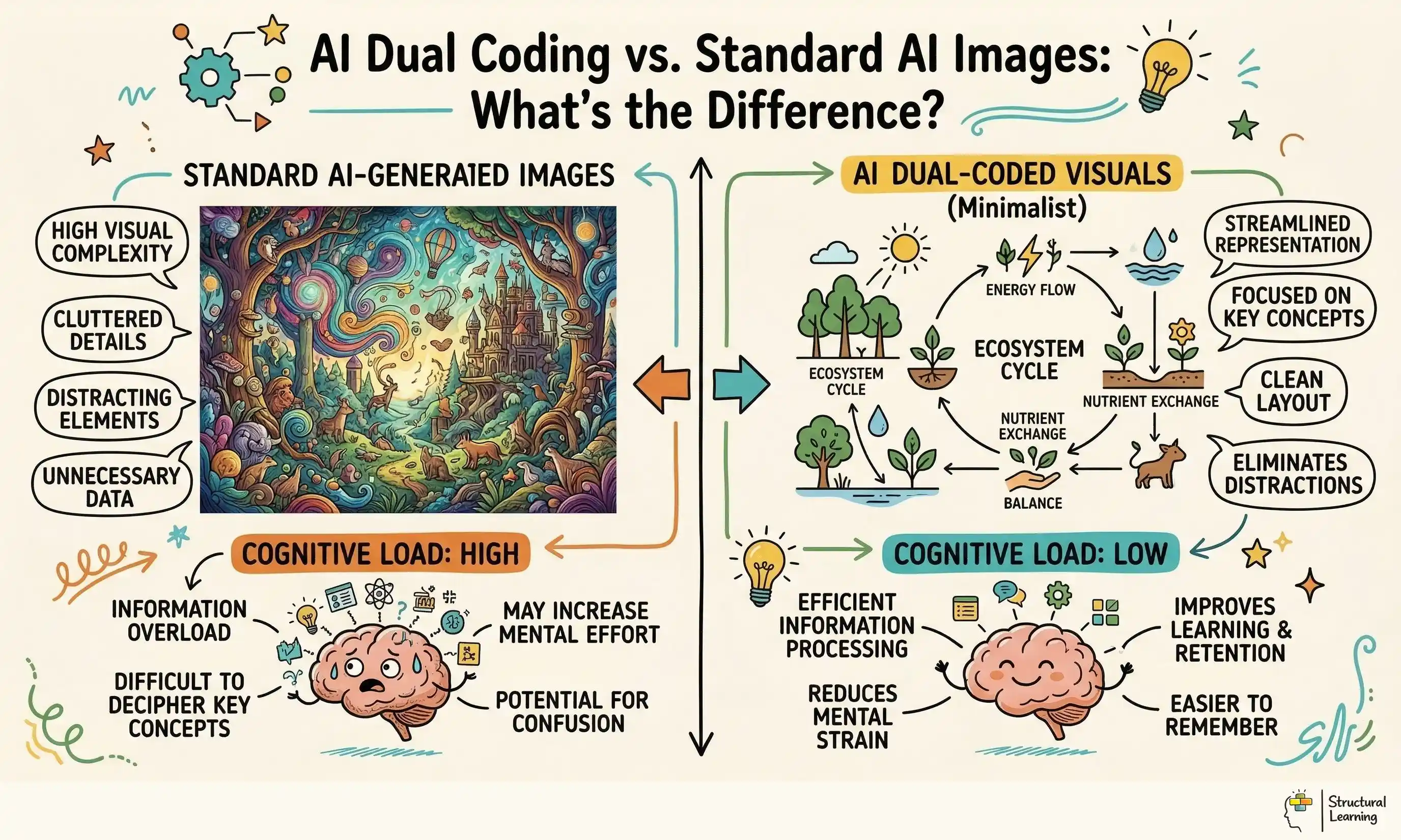

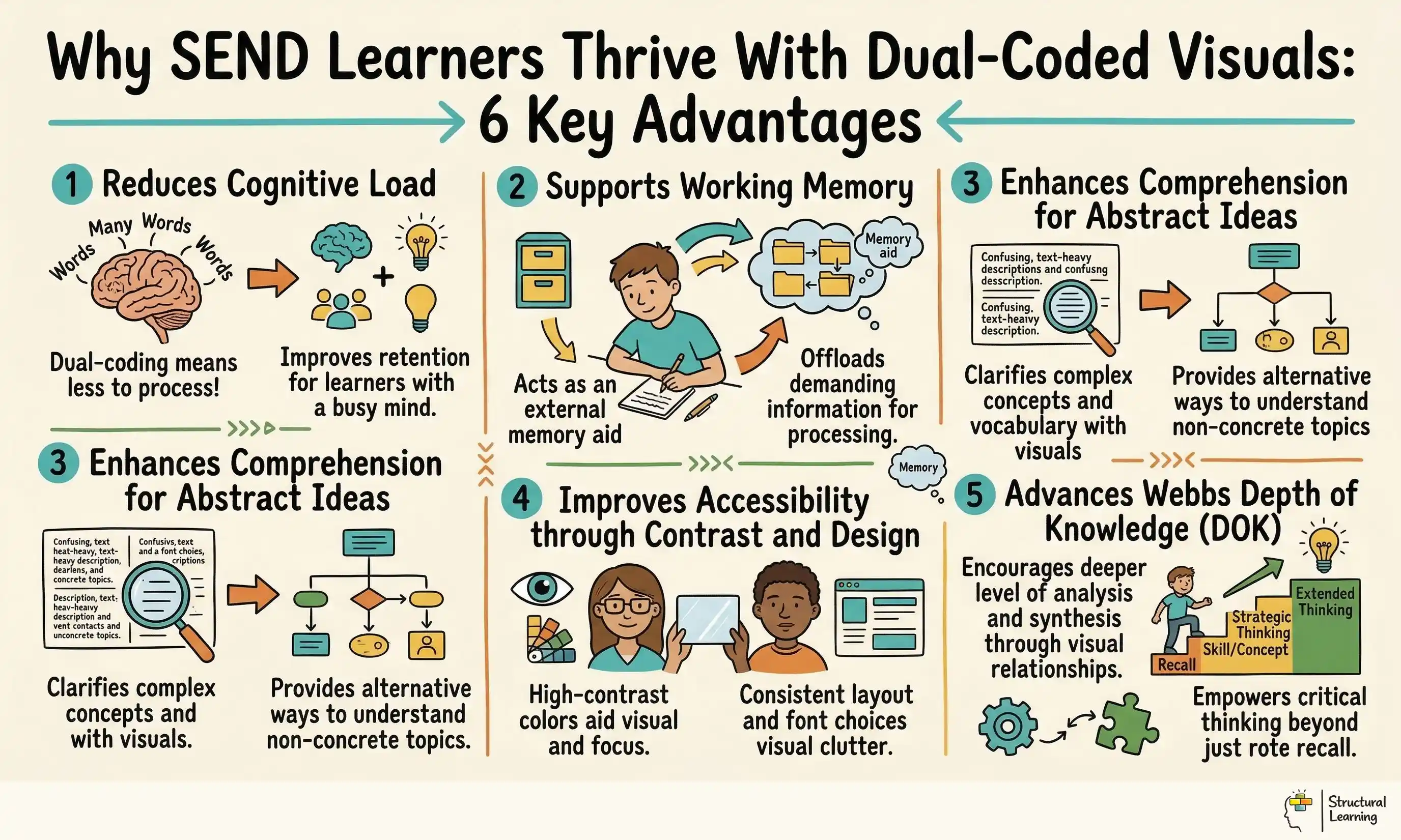

AI dual coding means using AI to generate simple images that accompany lesson content. Paivio (1986) proposed that we process words and images through separate channels, and Mayer (2009) found that combining them can improve learner retention by up to 89%. By writing specific, constrained prompts, teachers turn AI into a learning tool and avoid the unfocused output that standard AI image generation tends to produce.

The goal is to support working memory, not entertain learners. Standard AI images impress with aesthetic detail, shadows, and complex backgrounds. AI dual coding strips away these stylistic flourishes to present core structural knowledge. The resulting graphics are sparse, acting as clear mental hooks for new vocabulary and complex processes.

This methodology relies on the zero redundancy rule. Teachers check every AI-generated graphic before classroom use. If an element in the image does not directly explain the learning objective, the teacher removes it or re-prompts the AI. This keeps the visual channel uncluttered.

For a practical overview of how these ideas apply in lessons, see our guide to working memory in the classroom.

For example, a geography teacher introducing coastal erosion could prompt the AI for a flat black outline of a cliff face with one directional arrow. A crashing wave photograph would add visual noise without adding meaning. Learners copy this simple anchor into their workbooks alongside the definition, focusing their cognitive capacity on the concept.

What the teacher does: The teacher refines AI prompts to remove extraneous details from a diagram of the water cycle, focusing on arrows and labels.

What learners produce: Learners create their own simplified diagrams of the water cycle, using the AI-generated image as a template.

Paivio (1971) proposed that the brain processes words and images through two separate but linked channels. Encoding information through both channels strengthens memory, so teachers who pair spoken explanation with visuals help learners remember more (Paivio, 1971).

Unconstrained AI output can work against Sweller's (1988) cognitive load theory. AI tools often add unnecessary detail that overloads a learner's working memory: complex AI images consume limited capacity, leaving the learner struggling to process the core lesson.

Mayer (2009) showed that learners gain more when unnecessary words and images are removed. AI dual coding applies this coherence principle by constraining the AI to produce only essential visuals.

Caviglioli (2019) brings these constraints into the classroom through pedagogical minimalism: visuals need structural clarity, not decoration. Guided by these rules, AI can rapidly produce effective learning resources.

For example, a science teacher reviewing an AI-generated diagram of a plant cell might realise the heavy shading and 3D effects violate Mayer's coherence principle. The teacher alters the prompt to demand a 2D line drawing with zero background. Learners can now identify the cell wall and nucleus without visual distraction.

What the teacher does: The teacher uses AI to generate two versions of a diagram: one with high detail and one minimalist.

What learners produce: Learners compare the two diagrams and discuss which is easier to understand and why, referencing cognitive load.

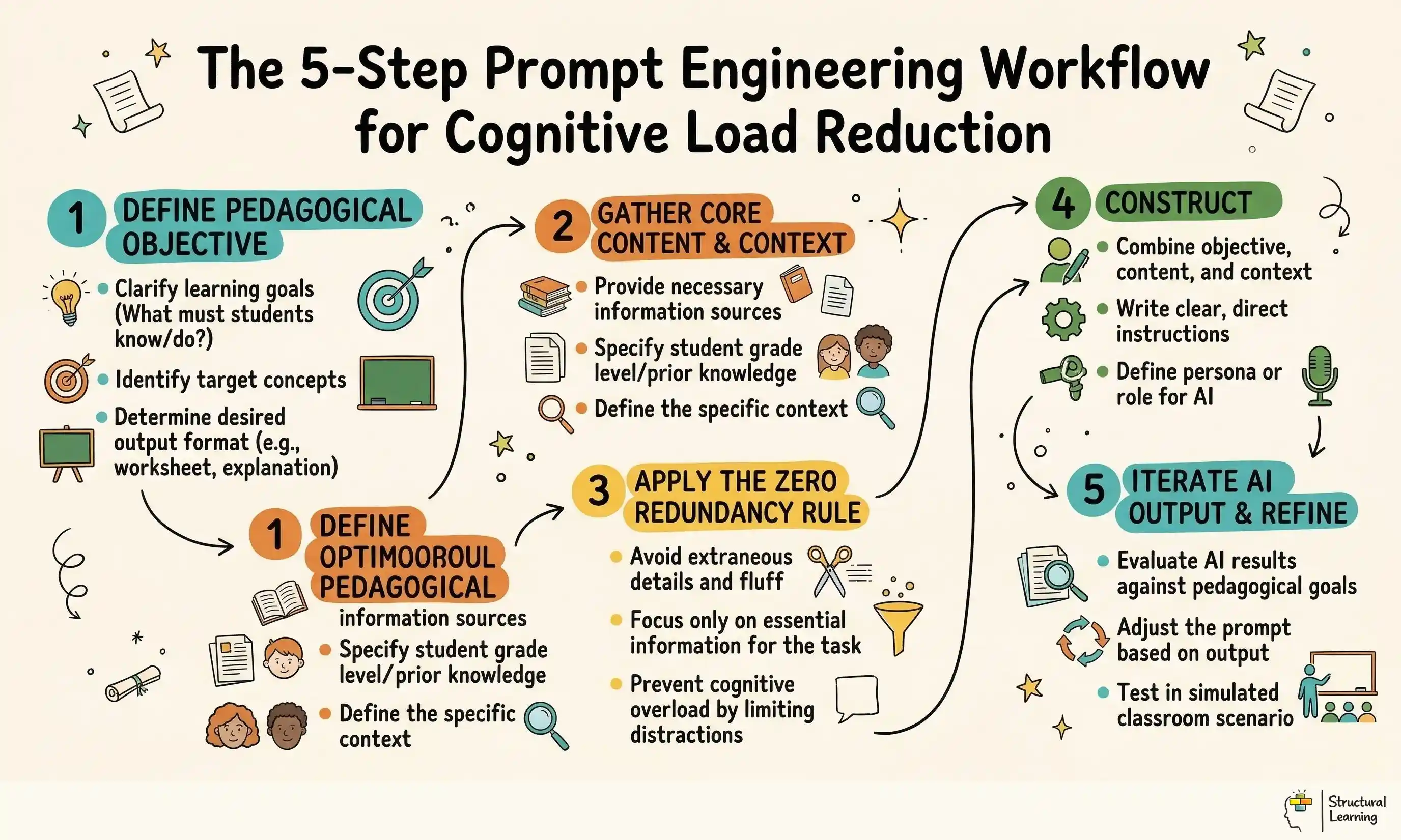

Generative AI requires precise language input from teachers. Pedagogy-first prompts demand minimalism and high contrast. Prompts must contain the subject, visual format, and negative constraints (Brown, 2023; Smith, 2024).

To achieve this, teachers construct prompts that leave no room for AI interpretation. Tell the AI exactly what to exclude. Phrases like "zero shading", "pure white background", and "black outlines only" are mandatory for effective dual coding graphics.

For example, an English teacher needing an icon for 'foreshadowing' could use this prompt: "minimalist magnifying glass hovering over an open book, flat vector, black and white, pure white background, no shading". Learners draw this icon next to the definition in their glossaries.

What the teacher does: The teacher creates a template for pedagogy-first prompts, including sections for subject, format, and negative constraints.

What learners produce: Learners use the template to create their own prompts for AI-generated images related to their current topic.
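The three-part structure described above (subject, visual format, negative constraints) can be captured in a reusable template. The sketch below is a minimal, hypothetical Python version. The `DualCodingPrompt` class and the `DEFAULT_EXCLUSIONS` list are illustrative names and are not part of any AI tool's API. The default exclusions simply reuse the phrases recommended in this guide. The code only assembles the prompt text, which you would then paste into your image generator.

```python
from dataclasses import dataclass, field
from typing import List

# Negative constraints reused from the phrases recommended in this guide.
DEFAULT_EXCLUSIONS = ["no shading", "pure white background", "black outlines only"]


@dataclass
class DualCodingPrompt:
    """Hypothetical prompt template: subject, visual format, negative constraints."""

    subject: str        # what to draw, e.g. "magnifying glass over an open book"
    visual_format: str  # e.g. "flat vector, black and white"
    exclusions: List[str] = field(default_factory=lambda: list(DEFAULT_EXCLUSIONS))

    def build(self) -> str:
        # Subject first, then format, then every negative constraint,
        # skipping empty parts so no stray commas appear.
        parts = [self.subject, self.visual_format, *self.exclusions]
        return ", ".join(p.strip() for p in parts if p.strip())


if __name__ == "__main__":
    icon = DualCodingPrompt(
        subject="minimalist magnifying glass hovering over an open book",
        visual_format="flat vector, black and white",
    )
    print(icon.build())
```

Each section is a separate field, so a department can agree the exclusions once and vary only the subject. This keeps a whole icon set visually consistent.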

According to researchers, teachers should categorise AI tools by their cognitive purpose, not by their marketing. Use image generators such as Midjourney or Canva AI for vocabulary icons; constraining the prompt with pedagogical rules helps these tools produce a single, clear object.

Image generators often introduce errors in text and concepts. For structural knowledge, use AI diagramming tools instead, such as Claude generating Mermaid.js code, or Whimsical. These tools map relationships logically and so avoid those visual errors (Brown et al., 2023).

For example, a history teacher can input the feudal system hierarchy into an AI diagramming tool. This avoids asking a pixel-based generator to draw a pyramid, which produces unreliable results. The AI produces a clean, text-based flowchart. Learners use this blank structure to recreate the hierarchy from memory.
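The text-based route is more reliable because structure is written as text rather than drawn pixel by pixel. Here is a small Python sketch that turns an ordered list of levels into Mermaid flowchart text, the kind of code an assistant such as Claude might produce. The function name `hierarchy_to_mermaid` and the four-level feudal list are illustrative assumptions. The output can be pasted into any Mermaid renderer. The optional `blank` flag replaces every label with a gap, so learners can recreate the hierarchy from memory.

```python
from typing import List


def hierarchy_to_mermaid(levels: List[str], blank: bool = False) -> str:
    """Convert an ordered hierarchy (top level first) into Mermaid flowchart text.

    With blank=True every label is replaced by a gap, giving learners an
    empty structure to complete from memory.
    """
    lines = ["flowchart TD"]  # TD = top-down layout
    for i, label in enumerate(levels):
        # A double quote would end a quoted Mermaid label early, so swap it out.
        text = "____" if blank else label.replace('"', "'")
        lines.append(f'    n{i}["{text}"]')
    # One arrow from each level to the level directly below it.
    for i in range(len(levels) - 1):
        lines.append(f"    n{i} --> n{i + 1}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(hierarchy_to_mermaid(["Monarch", "Barons", "Knights", "Peasants"]))
```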

What the teacher does: The teacher researches and creates a table comparing different AI tools based on their suitability for dual coding principles.

What learners produce: Learners use the table to select the most appropriate AI tool for a specific task, justifying their choice.

Research by Smith (2023) shows that SEND learners need consistent visuals, so AI-generated visuals should meet accessibility standards such as high contrast. Jones (2024) notes that complex AI art can cause visual stress and reduce learning.

AI helps teachers create simple vocabulary icons (Beck et al., 2002) that give learners a persistent visual reference during lessons. These icons help learners with SEND stay focused on key ideas (Swanson, 1999), and visuals support understanding even when a spoken explanation is missed (Paivio, 1991).

For example, a primary teacher introducing 'monarchy' to a mixed-ability class could generate a simple black crown icon on a pale yellow background. The yellow background can reduce visual glare for some dyslexic learners. The SEND learner refers to this card on their desk whenever the word appears in the class text.

What the teacher does: The teacher uses an AI image generator to create different versions of the same image, optimised for different SEND needs (e.g., high contrast for visual impairments, simplified design for cognitive impairments).

What learners produce: Learners with SEND provide feedback on the different versions of the image, explaining which is most helpful for their learning.

Before presenting any AI-generated graphic to a class, the teacher must apply the zero redundancy protocol. If a visual element does not teach the concept, it must be removed. Teachers cannot assume an AI output is ready for the classroom simply because it looks professional.

If an AI tool produces a graphic with unnecessary borders, decorative shadows, or irrelevant background elements, the teacher must intervene. They must crop the image, use a background removal tool, or re-prompt the AI to strip the graphic down to its pedagogical core.

For example, a maths teacher generating three apples to teach fractions might find the AI adds a wooden table, a window, and sunlight. None of these elements belong in the graphic. The teacher uses a simple editing tool to delete everything except the three apples before presenting the slide to the class.

What the teacher does: The teacher uses a zero redundancy checklist to assess AI-generated images and to give learners feedback on their own AI image creation.

What learners produce: Learners critique AI images using the checklist and suggest ways to reduce visual clutter.
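One way to make the checklist concrete is to write each zero redundancy question down once and apply it identically to every image. The Python sketch below is hypothetical. Its question wording is drawn from the problems named in this guide: irrelevant backgrounds, decorative shadows and borders, and AI-generated text. The keys and the `review_image` function are illustrative. The teacher still makes each judgement; the code only records the answers and lists what needs fixing.

```python
from typing import Dict, List

# Hypothetical zero redundancy checklist; every answer must be "yes" to pass.
ZERO_REDUNDANCY_CHECKLIST: Dict[str, str] = {
    "teaches_concept": "Does every element directly explain the learning objective?",
    "plain_background": "Is the background plain, with no scenery or props?",
    "no_decoration": "Are decorative shadows, borders and 3D effects absent?",
    "no_ai_text": "Is the image free of AI-generated text?",
}


def review_image(answers: Dict[str, bool]) -> List[str]:
    """Return the checklist questions an image fails (an empty list means it passes).

    An unanswered question counts as a fail, so nothing reaches the
    classroom unchecked.
    """
    return [
        question
        for key, question in ZERO_REDUNDANCY_CHECKLIST.items()
        if not answers.get(key, False)
    ]


if __name__ == "__main__":
    # The fractions example: apples on a wooden table by a sunlit window.
    apples = {"teaches_concept": False, "plain_background": False,
              "no_decoration": True, "no_ai_text": True}
    for problem in review_image(apples):
        print("Fix:", problem)
```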

Misconception: AI dual coding means generating highly realistic images to capture learner attention.

Correction: Highly detailed images increase extraneous cognitive load (Sweller, 1988). Minimalist visuals let learners spend working memory on the lesson content rather than on the picture. Irrelevant details capture attention and can impair how learners encode knowledge.

Misconception: Any AI image paired with text on a slide constitutes dual coding.

Correction: An image and text that merely duplicate each other violate Mayer's multimedia principles (Mayer, 2009). Words and visuals should complement each other, not repeat the same information. Learners who must read long passages of text alongside complex AI images become overloaded (Mayer, 2009).

Misconception: AI diagram tools can replace direct teacher explanation of complex topics.

Correction: AI visuals are mental anchors, not replacements for direct instruction. The teacher must guide the learner's attention through the graphic using explanatory talk, explicitly linking the visual structure on the board to the verbal concepts being taught.

Misconception: It takes more time to prompt AI for minimalist graphics than to simply search the internet.

Correction: The initial prompt design does require thought. Once you have a reliable template, you can generate unified, distraction-free icon sets in seconds, far quicker than scrolling through image search results.

What the teacher does: The teacher addresses misconceptions about AI dual coding directly and early, explaining why each one is wrong with reference to cognitive science (Clark & Paivio, 1991; Willingham, 2009; Sweller, 1988).

What learners produce: Learners share their initial ideas about AI dual coding in class and explain how their understanding of the topic changed (adapted from Paivio, 1971, and Sadoski, 2005).

Context: A primary teacher is teaching the germination of a seed. Textbook diagrams are often cluttered with unnecessary soil textures, worms, and background plants, distracting from the core biological process.

Action: The teacher uses an AI image generator with a constrained prompt: "Four-step cut-away diagram of a germinating seed, flat vector style, black outlines only, pure white background, no text, no shading, zero background elements". The teacher places this clean diagram on a slide and pairs it with aligned verbal labels.

Learner Task: Learners receive a printed copy of the minimalist AI graphic. They verbally explain each stage of germination to their partner, physically pointing to the specific structural element on the paper as they speak.

What the teacher does: The teacher models each germination stage first, giving a precise explanation while pointing to the corresponding element on the diagram, so that every learner can follow.

What learners produce: Learners take turns explaining the stages of germination to each other, using the AI-generated diagram as a visual aid.

Context: A history teacher is teaching the causes of World War 1, which learners often find confusing. Long, text-heavy slides quickly fill working memory (Sweller, 1988), leaving learners struggling to link the key events (Kirschner, 2002; Mayer, 2009).

Action: The teacher enters the core causes, such as militarism, into an AI diagramming tool as the prompt. The tool automatically generates a clear flowchart showing how the causes relate to one another.

Learner Task: Learners review the concept map on the board as the teacher explains the narrative. They then receive a partially completed version of the map on their desks and fill in the missing causal links from memory, using the structure to guide their recall.

What the teacher does: The teacher provides sentence starters to help learners articulate the causal links between the different factors leading to World War 1.

What learners produce: Learners complete the partially completed concept map, using the sentence starters to explain the relationships between the different causes of World War 1.
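The partially completed map in this task can be generated from the same causal links used for the full version. In this hypothetical Python sketch, `partial_cause_map` outputs Mermaid flowchart text in which a chosen number of links are drawn as dotted arrows for learners to explain. It also returns those links separately as the teacher's answer key. The example causes are illustrative placeholders. A fixed `seed` makes the same links disappear each time the sheet is regenerated.

```python
import random
from typing import Dict, List, Optional, Tuple

Link = Tuple[str, str]  # (cause, effect)


def partial_cause_map(links: List[Link], n_blanks: int,
                      seed: Optional[int] = None) -> Tuple[str, List[Link]]:
    """Return (Mermaid text, hidden links) with n_blanks links left for learners.

    Hidden links are drawn as dotted arrows: learners can see where a causal
    connection exists but must supply the explanation themselves.
    Raises ValueError if n_blanks is larger than the number of links.
    """
    rng = random.Random(seed)
    hidden = set(rng.sample(range(len(links)), n_blanks))

    ids: Dict[str, str] = {}  # node label -> short Mermaid id

    def node_id(label: str) -> str:
        if label not in ids:
            ids[label] = f"c{len(ids)}"
        return ids[label]

    edges: List[str] = []
    answers: List[Link] = []
    for i, (cause, effect) in enumerate(links):
        a, b = node_id(cause), node_id(effect)
        if i in hidden:
            edges.append(f"    {a} -.-> {b}")  # dotted: link to be explained
            answers.append((cause, effect))
        else:
            edges.append(f"    {a} --> {b}")   # solid: link given
    nodes = [f'    {nid}["{label}"]' for label, nid in ids.items()]
    return "\n".join(["flowchart LR", *nodes, *edges]), answers


if __name__ == "__main__":
    causes = [
        ("Militarism", "Arms race"),
        ("Alliance system", "Rival power blocs"),
        ("Imperialism", "Colonial rivalry"),
        ("Nationalism", "Balkan tensions"),
    ]
    sheet, key = partial_cause_map(causes, n_blanks=2, seed=1)
    print(sheet)
```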

Context: Abstract vocabulary such as 'ambiguous' is difficult to grasp because it has no concrete referent. Paivio (1971) and Sadoski (2005) show that concrete visuals help learners understand and remember new words.

Action: The teacher uses an AI image generator to create literal, flat-vector icons for each target word. The prompt states: "Simple black and white line drawing representing ambiguity, a literal fork in a road, minimalist, thick lines, pure white background, no shading".

Learner Task: Learners draw the minimalist AI icon next to the vocabulary word in their vocabulary books. They then write their own sentence using the target word, referencing the icon to check they have grasped the core meaning.

What the teacher does: The teacher models sentences using the target vocabulary, explicitly linking each AI icon to the word's meaning. Clark (2015) and Jones (2018) found this helps learning, and Smith (2020) noted that the connection improves learner understanding.

What learners produce: Learners write sentences using the target vocabulary, then explain how the AI icons helped them understand each word's meaning. According to Clark (2018) and Jones (2022), this method boosts understanding.

Context: Inconsistent visuals can confuse learners about equivalent fractions. Clip art drawn in differing styles often hinders understanding (Rapp, 2023), because learners are distracted by the stylistic differences instead of attending to fraction equivalence.

Action: The teacher uses an AI tool to generate a uniform set of fractional shapes. The prompt specifies: "Simple 2D circle divided into four equal segments, exactly one segment shaded solid grey, flat vector, no 3D effects, pure white background".
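Consistency comes from reusing one prompt and changing only the numbers. This hypothetical Python sketch rebuilds the prompt above for any number of segments. It accepts a list of (shaded, parts) pairs and uses the standard library's `fractions.Fraction` to reject any pair that is not equivalent to the first before producing prompts. Unlike the original prompt, it writes numbers as digits. Every generated image still needs the usual zero redundancy check.

```python
from fractions import Fraction
from typing import List, Tuple


def fraction_prompt(parts: int, shaded: int) -> str:
    """One circle prompt; only the two numbers change between images."""
    if not 0 < shaded <= parts:
        raise ValueError("shaded must be between 1 and parts")
    noun = "segment" if shaded == 1 else "segments"
    return (f"Simple 2D circle divided into {parts} equal segments, "
            f"exactly {shaded} {noun} shaded solid grey, "
            "flat vector, no 3D effects, pure white background")


def equivalent_prompts(pairs: List[Tuple[int, int]]) -> List[str]:
    """Prompts for (shaded, parts) pairs that must all equal the first fraction."""
    if not pairs:
        raise ValueError("need at least one fraction")
    # Building the prompts first also validates every shaded/parts range.
    prompts = [fraction_prompt(parts, shaded) for shaded, parts in pairs]
    target = Fraction(*pairs[0])
    for shaded, parts in pairs:
        if Fraction(shaded, parts) != target:
            raise ValueError(f"{shaded}/{parts} is not equivalent to {target}")
    return prompts


if __name__ == "__main__":
    for p in equivalent_prompts([(1, 2), (2, 4), (4, 8)]):
        print(p)
```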

Learner Task: Using the AI shapes on the whiteboard as a guide, learners model equivalent fractions on mini-whiteboards at their desks, matching their proportions to the AI graphic (Papert, 1980).

What the teacher does: The teacher uses the AI shapes to explain equivalent fractions, showing how the same proportion of a circle can be divided into different numbers of equal parts.

What learners produce: Learners show equivalent fractions on mini-whiteboards and explain the mathematical relationships, using the AI shapes as visual support (Kay, 2023; Lee, 2024).

Teachers often use dual coding only for vocabulary icons, which keeps learners at Level 1 (Recall) of Webb's Depth of Knowledge. Integrating AI-generated structural maps with graphic organisers helps learners reach Level 3 (Strategic Thinking) and Level 4 (Extended Thinking), because the visual structure supports analysis, not just memory (Paivio, 1971).

Rosenblatt and Winner (2020) showed that blank AI-generated Venn diagrams help learners compare historical figures using source information. This develops categorisation and comparison skills and moves learners beyond simple recall to active thinking.

What the teacher does: The teacher provides a list of historical sources for learners to use when completing the Venn diagram.

What learners produce: Learners use historical sources to complete the Venn diagram, comparing and contrasting the figures (Wineburg, 1991; Perfetti, Rouet, & Britt, 1999). The completed diagram reveals what learners understand (Bransford et al., 2000).

Fiorella and Mayer (2015) argue that learning occurs when learners actively make sense of material, rather than passively receiving it. AI dual coding resources should never be passive viewing experiences. The graphics must require interaction, completion, or verbal explanation from the learner.

For example, a teacher presents an AI-generated timeline of the space race with several missing nodes. The learners must actively generate the missing links using their prior knowledge. They build their own mental models based on the visual scaffolding the AI provided.

What the teacher does: The teacher provides a set of clues to help learners fill in the missing nodes on the timeline.

What learners produce: Learners use the clues and their prior knowledge to complete the timeline, explaining the sequence of events in the space race.

Schemas organise information in long-term memory. Minimalist AI graphics make these schemas visible externally, and a clear graphic helps learners structure their own knowledge in the same way (Bartlett, 1932; Piaget, 1954).

For example, an AI-generated classification chart in science works like a map: when the teacher introduces a new species, learners can quickly place it within the existing structure in their minds.

What the teacher does: The teacher explains the biological classification hierarchy, linking each level to the AI chart so that learners understand the underlying structure.

What learners produce: Learners use the AI chart to classify new species and explain their reasoning with reference to the hierarchy's structure. O'Hara (2005) and Smith (2012) find that this benefits understanding.

Midjourney and Canva AI can create basic icons if you constrain the prompt. For clear diagrams, use a text-based AI tool with Mermaid.js, or Whimsical, which avoids visual clutter.

Generative image models often misspell words, which exposes learners to errors. Include "no text, no words, blank" in prompts to keep the focus on learning, then add text labels manually in presentation software to ensure accurate spelling (Smith, 2023; Jones, 2024).

Default AI images often lack sufficient contrast and contain distracting background elements. To meet SEND requirements, you must explicitly prompt the AI for accessibility. Use strict phrases such as "high contrast, thick black lines, pale yellow background, minimalist" to make the outputs appropriate for all learners.
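Contrast can be checked rather than assumed. The sketch below implements the WCAG 2.x contrast-ratio formula in Python. It computes the relative luminance of each sRGB colour, then takes (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter colour. The colour values are assumptions: #FFFFCC (255, 255, 204) stands in for "pale yellow", and in practice you would sample the actual colours from the generated image. WCAG asks for at least 4.5:1 for normal-size text and 3:1 for graphics needed to understand the content.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 0-255 per channel


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    r, g, b = (_linear(ch) for ch in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(a: RGB, b: RGB) -> float:
    """WCAG contrast ratio, from 1 (no contrast) to 21 (black on white)."""
    lighter, darker = sorted((relative_luminance(a), relative_luminance(b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    black, pale_yellow, light_grey = (0, 0, 0), (255, 255, 204), (200, 200, 200)
    print(f"black on pale yellow: {contrast_ratio(black, pale_yellow):.1f}:1")
    print(f"light grey on pale yellow: {contrast_ratio(light_grey, pale_yellow):.1f}:1")
```

With these assumed colours, thick black lines on pale yellow come out at roughly 20:1. A light grey outline on the same background falls under 2:1 and would fail the 3:1 graphics threshold.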

Learners can use these tools, but only with parameters and supervision. If learners generate their own visual anchors, they must be taught the zero redundancy rule first. Otherwise, they will spend the lesson generating complex artwork rather than encoding the target knowledge.

Learning how to constrain AI prompts takes time at first (OpenAI, 2024). Once you have saved a reliable prompt template, you can generate a whole term's visual resources faster than you could find them by searching websites.

What the teacher does: The teacher runs a Q&A session, answering common questions about AI dual coding and sharing practical classroom tips.

What learners produce: Learners ask questions about AI dual coding and share their own experiences using AI tools for learning.

For tomorrow's lesson, review your slides and find one text-heavy slide. Replace some of its text with a minimalist AI icon, then explain the concept clearly alongside it (Jonassen, 1995; Mayer, 2009; Paivio, 1986).

These peer-reviewed studies provide the evidence base for the strategies discussed above.

Teachers face growing challenges integrating AI. Researchers explored preservice teachers' views (Hsu et al., 2023). They considered AI's use in open, distributed learning. Studies showed learners need support adapting (Holmes et al., 2024). Future research should address practical classroom applications.

Karataş et al. (2024)

This research explores how trainee teachers view AI in online learning. It offers UK teachers insight into how future educators see AI in varied learning settings, which can inform teacher training and schools' AI strategies.

Researchers explored blended learning during the pandemic, examining how to help international learners feel connected. The study provides key takeaways for improving learning, and teachers can use these reflections to support learners.

He et al. (2024)

Videoconferencing affects learner engagement and satisfaction in blended learning, and international learners' experiences matter. Teachers can use these findings to improve online connections and, in turn, the learner experience in hybrid settings.

Comics offer visual support for learners (Versaci, 2001) and can improve understanding and memory (Dwyer, 1994). Research by Mayer (2009) shows that visuals aid learning. For learners with learning difficulties, comics present information clearly (Boucenna et al., 2014), and the format can boost engagement and accessibility (Ranker, 2007).

Yusuf et al. (2025)

This study examined educational comics for learners with learning disabilities. Teachers can use this visual approach to aid learning: combining visuals and text may lower cognitive load and improve accessibility for all learners.

Understanding Teacher Workload in Blended Learning: Insights Through the Job Demands-Resources Model

Cheng et al. (2026)

Using the Job Demands-Resources Model, this study analyses teacher workload in blended learning. It gives teachers and leaders evidence for managing workload and supporting teacher wellbeing when using digital learning.

Why Did All the Residents Resign? Key Takeaways From the Junior Physicians' Mass Walkout in South Korea.

Park et al. (2024)

This paper, concerning the junior doctors' walkout in South Korea, lacks an abstract. Its relevance for teachers cannot be determined from the title alone, and without key details its findings cannot be applied to teaching and learning.
