EEF Teaching Toolkit: Cost, Evidence & Impact Ranked

The EEF Teaching and Learning Toolkit summarised with cost ratings, evidence strength and months of impact for every strategy. Plan your spending with confidence.


The Education Endowment Foundation publishes the most thorough and accessible evidence base available to classroom teachers in England. The Teaching and Learning Toolkit translates decades of educational research into clear guidance. It shows which classroom strategies produce the greatest gains for the least cost. For many teachers, it is the first and best place to start when evaluating whether a new approach is worth trying.

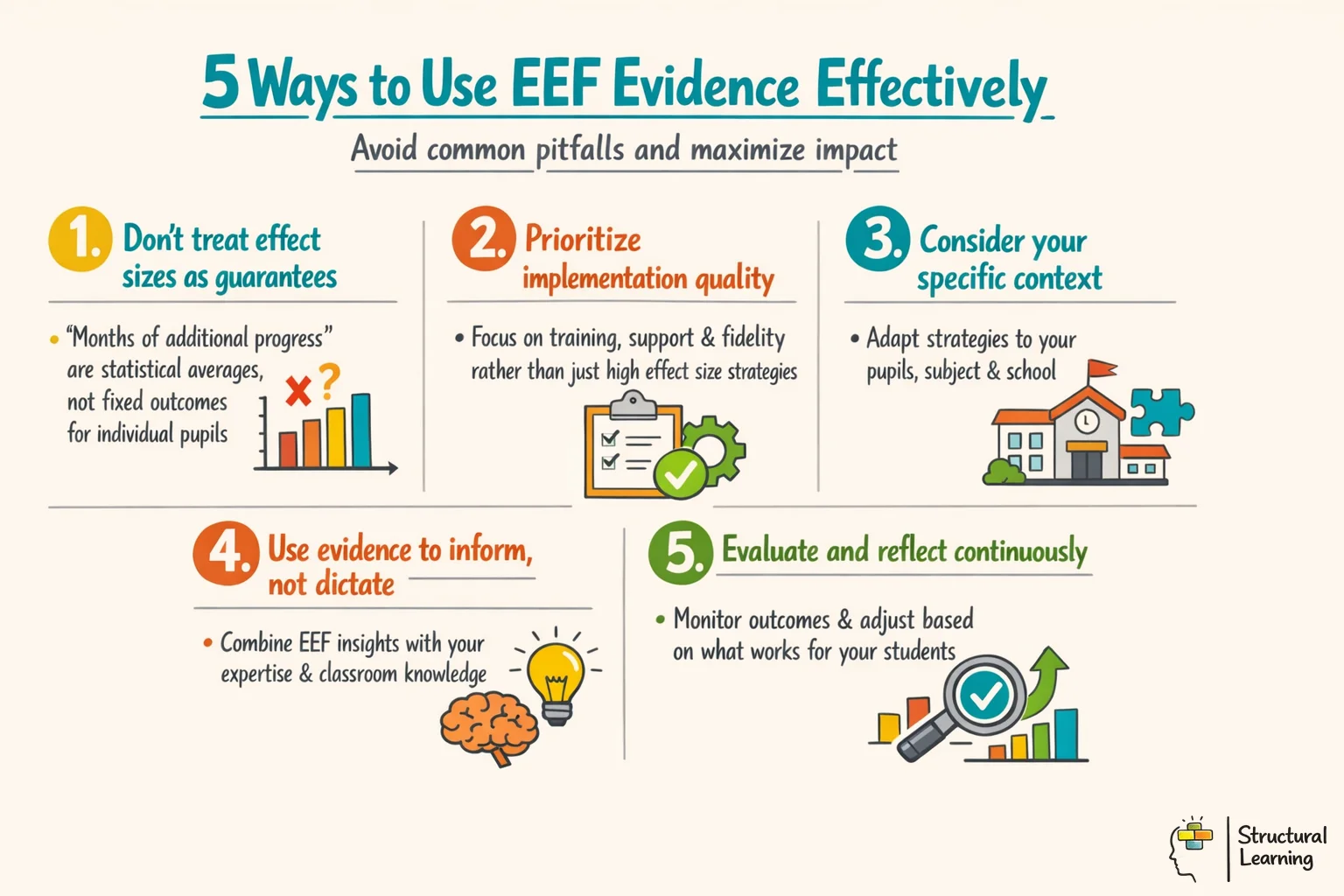

Yet the Toolkit is often misread. Schools treat effect sizes as guarantees, misunderstand what "months of additional progress" actually means, and import strategies without considering implementation quality. This guide explains what the EEF is and how to read its evidence correctly. It also shows how to use it wisely in planning and school improvement work.


The Education Endowment Foundation, formed in 2011, aims to improve outcomes for all learners. It was set up with funding from the Department for Education, and its goal is to reduce the impact of family income on achievement. The Foundation funds trials, reviews research, and provides free guidance (EEF, 2011).

Unlike much educational writing, the EEF's guidance rests on strong research methods. It funds trials and synthesises existing studies so that findings can be compared easily (EEF, various dates). Teachers can therefore question and use this evidence, not just accept it.

For classroom teachers, the practical entry point is the Teaching and Learning Toolkit. The Toolkit was first published in 2011 in partnership with the Sutton Trust. It currently covers more than 35 instructional strategies (Higgins, Katsipataki, Kokotsaki, Coleman, Major and Coe, 2014). Each entry summarises the average effect on learner attainment, the cost to use, and the strength of the underlying evidence base.

Why does it matter? Because many of the most popular classroom approaches, from learning styles to Brain Gym, have little or no strong evidence behind them. The EEF Toolkit helps you distinguish between strategies that have been tested at scale and those that are little more than well-marketed habit. If your school is deciding where to invest pupil premium funding, the Toolkit is the most defensible starting point available.

Each entry in the Teaching and Learning Toolkit presents three data points: months of additional progress, cost, and evidence strength. Reading these correctly takes a little practice. Most teachers focus on the months figure and overlook the other two, which is where the most important caveats sit.

Months of additional progress represents an average effect across all the studies included in that strand. A figure of '+6 months' means learners using this approach made six additional months of progress. This is compared to learners who did not use the approach. It does not mean every classroom using the strategy will see this gain. The figure is a population average, and it says nothing about whether the approach will work in your specific school with your specific learners.

Cost is rated on a five-pound-sign scale. A single pound sign indicates very low cost (typically under £80 per learner per year); five indicates over £1,200 per learner per year. This matters because a strategy with a smaller effect size but near-zero cost may represent better value than an expensive intervention with a modestly higher effect.
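The value-for-money point can be made concrete with a little arithmetic. The sketch below divides cost per learner by months of progress. The function name `cost_per_month` and both sets of figures are hypothetical, chosen only to illustrate the comparison. They are not taken from the Toolkit.

```python
def cost_per_month(cost_per_learner: float, months_gain: float) -> float:
    """Pounds spent per learner for each additional month of progress."""
    if months_gain <= 0:
        raise ValueError("months_gain must be positive to compute cost per month")
    return cost_per_learner / months_gain


# Hypothetical figures for illustration only (not Toolkit data).
low_cost = cost_per_month(cost_per_learner=40, months_gain=4)
high_cost = cost_per_month(cost_per_learner=900, months_gain=5)

print(f"Low-cost strategy:  £{low_cost:.2f} per month of progress")
print(f"High-cost strategy: £{high_cost:.2f} per month of progress")
```

Under these assumed numbers, the cheaper strategy delivers each month of progress for far less, even though its headline gain is one month lower.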

Evidence strength is perhaps the most underused dimension. It is rated from one to five padlocks. Five padlocks indicates a large body of high-quality evidence, typically including multiple well-conducted randomised controlled trials. One padlock indicates limited or inconsistent evidence. When you see a high effect size paired with a low padlock rating, treat the figure with considerable scepticism. There is a real risk the estimate will not hold up as more research accumulates.

A practical classroom example: imagine you are comparing two strategies for a Year 9 class. Metacognition and self-regulation shows +7 months and four padlocks. A particular commercial reading programme shows +8 months and one padlock. The metacognition figure has much stronger evidence behind it. It should usually be preferred, even though the headline number is slightly lower.

The most striking finding in the Toolkit is that several of the highest-impact strategies cost almost nothing to use. This is not accidental. Strategies based on how memory and cognition work tend to produce large gains. They address the underlying mechanisms of learning, rather than relying on expensive materials or software.

Metacognition and self-regulation sits at the top of the Toolkit with an average impact of +7 months and four padlocks. Teaching learners to plan, monitor, and evaluate their own learning has consistent effects across ages and subjects. In practice, this means giving learners structured time to assess whether their strategy is working and to switch approaches when it is not. A Year 6 teacher might use a short pre-task planning prompt: "What do you already know? What will you do if you get stuck?" followed by a post-task reflection: "Did your strategy work? What would you change?" This costs nothing beyond lesson time.

Feedback shows +6 months and five padlocks, making it the most evidence-secure entry in the entire Toolkit. But the EEF is clear that not all feedback is equal. Hattie and Timperley (2007) found that feedback about the task and learning strategies works much better than praise, and better than feedback about the learner as a person. A comment such as "Your paragraph structure is clear; the argument would be stronger if you addressed the counterargument" gives the learner something to act on. Telling a learner they are "a natural writer" does not. Formative assessment is the delivery mechanism for effective feedback, and the two entries reinforce each other.

Reading comprehension strategies add +6 months (Hattie, 2017). Teachers can model summarising, questioning, clarifying and predicting. Learners then internalise these skills and use them independently. A teacher might think aloud with a shared text. Learners practise in pairs after seeing the process.

Collaborative learning shows +5 months, but with a caveat the Toolkit makes explicit: unstructured group work does not produce these gains. The effect applies when collaboration is genuinely necessary for tasks. Roles must be clear and the group accountable for both the process and the product.

Peer tutoring also reaches +5 months. Structured peer tutoring, where roles are assigned and both tutor and tutee have clear tasks, benefits both parties. The act of explaining material forces the tutor to organise their own understanding. This is why learning gains are not limited to the learner being taught. Here is a low-cost example that works well in many schools. In Key Stage 2, older learners read with younger ones using a structured approach. This paired reading programme has been tested successfully.

The "months of additional progress" metric makes the EEF Toolkit readable, but it simplifies a more complex statistical picture. Understanding what lies beneath the figure helps you use it more accurately.

The months figure is derived from an effect size, typically Cohen's d or a similar standardised measure. An effect size of 0.2 is considered small, 0.5 moderate, and 0.8 large. The EEF converts these into months using a standard conversion based on typical academic progress: roughly one standard deviation of improvement per year in primary school, or a little under 0.1 standard deviations per month. Typical progress varies by age and subject, which means the months figure is an approximation, not a precise measurement.
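A rough sketch shows why the months figure is only an approximation. It assumes a simple linear conversion, dividing the effect size by an assumed rate of typical progress. The EEF's published conversion uses bands, which this sketch does not reproduce. The function name `effect_size_to_months` and the two rates tried below are illustrative assumptions, not EEF figures.

```python
def effect_size_to_months(effect_size: float, sd_per_month: float) -> int:
    """Convert a standardised effect size into whole months of progress.

    sd_per_month is the ASSUMED rate of typical progress, in standard
    deviations per month. It is an input, not a constant, because the
    rate differs by age and subject.
    """
    if sd_per_month <= 0:
        raise ValueError("sd_per_month must be positive")
    return round(effect_size / sd_per_month)


# The same effect size under two assumed rates of typical progress.
for rate in (1 / 12, 0.10):
    months = effect_size_to_months(0.5, sd_per_month=rate)
    print(f"d = 0.5 at {rate:.3f} SD per month -> about {months} months")
```

The same effect size lands on a different months figure depending on the rate assumed, so a one-month difference between two strands should not carry much weight on its own.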

The most important thing to understand is that effect sizes from research studies measure average effects under research conditions. Research conditions include careful implementation, researcher-designed materials, and heightened attention from teachers who know they are being studied. None of these are guaranteed in a normal classroom. Slavin (2020) looked at data from hundreds of trials. Programmes that were not put in place properly showed almost no effect. This happened even when research suggested they should work well.

A concrete example from retrieval practice: the Toolkit shows a strong effect for this strategy, but retrieval practice only works well under certain conditions. The retrieval attempt must be genuinely effortful, feedback must be provided, and practice must be spaced over time. A low-stakes quiz given once at the end of a unit, with no spacing or feedback, will produce much smaller gains than the headline figure suggests. The mechanism matters as much as the label.

Coe et al. (2014) identified six components of great teaching. Learner enthusiasm and teacher workload are poor predictors of learning, while pedagogical knowledge and assessment quality are the strongest (Coe et al., 2014). Use the EEF Toolkit alongside research like this.

Several persistent misreadings of the EEF Toolkit circulate in schools. Naming them directly is more useful than leaving them implicit.

Misconception 1: Higher months means better. A strategy showing +8 months with one padlock is not necessarily better than one showing +5 months with five padlocks. Evidence strength tells you how reliable the estimate is. An eight-month figure derived from a handful of small, poorly controlled studies is far less trustworthy than a five-month figure supported by dozens of large trials. When evidence strength is low, the real effect could be anywhere from negative to double the stated figure.

Misconception 2: The Toolkit tells you what to do. The EEF is explicit that the Toolkit is not a prescription. It tells you what has worked on average, in other schools, under research conditions. It does not tell you what will work in your school, with your staffing, your learner demographic, and your existing practice. Goldacre (2013) argued in his influential DfE paper that evidence should inform professional judgement, not replace it. The Toolkit is a starting point for a professional conversation, not a shopping list.

Misconception 3: Zero or negative months means the strategy is harmful. Several entries show modest or near-zero effects. This does not mean the strategy is damaging. It may mean the evidence is mixed, or that context determines whether the strategy works. Inquiry-based learning, for example, shows modest effects on average but produces stronger gains when used with learners who already have strong foundational knowledge. Average effects obscure important variation.

Misconception 4: If a strategy is not in the Toolkit, it does not work. The Toolkit covers strategies that have been researched at scale. Many classroom techniques have not been studied in this way and so are absent. Absence from the Toolkit signals insufficient research, not ineffectiveness (EEF, 2018).

Misconception 5: The EEF says learning styles are completely useless. Learning styles do not appear in the EEF Toolkit. This is because evidence does not support teaching to a learner's preferred style. However, the EEF does support the use of varied representations, including visual and verbal formats. Dual coding, which uses paired visual and verbal information, appears with a positive effect estimate. The distinction is between matching instruction to a supposed fixed learner type (no evidence) and using multiple representations to aid understanding (good evidence).

The gap between knowing the evidence and using it in planning is where most schools lose ground. Too often the EEF Toolkit is consulted during pupil premium reviews or CPD sessions, then filed away. The strategies that consistently produce gains (feedback, metacognition, retrieval practice and spaced practice) require deliberate structural choices in lesson planning, not one-off efforts.

Consider how a Year 10 history teacher might integrate three high-impact strategies into a single lesson on the causes of the First World War. At the start of the lesson, a short retrieval quiz covering prior knowledge of European alliances serves the retrieval practice strand. This takes five minutes and requires no marking, as learners self-check against a visualised answer key, which also serves the dual coding strand. The main teaching phase shows worked examples on the board. This reduces the thinking load on learners. Without this, learners would have to work out the analysis and written structure at the same time. At the end, learners complete a three-sentence metacognitive reflection. They write what they understood, what they found difficult, and what they will revisit before the next lesson.

The EEF Toolkit identifies learning strategies, but lesson design is what makes them work. Spacing and feedback boost learning (Bjork, 1992; Hattie & Timperley, 2007), and metacognitive monitoring helps learners (Nelson & Narens, 1990). Plan lessons so that these mechanisms are built in, not left to chance.

Rosenshine's principles of instruction (Rosenshine, 2012) support many EEF-aligned routines. He synthesised research on effective teaching behaviours, including reviewing previous learning, presenting new content in small steps and checking learner understanding frequently.

Scaffolding shows promise in the Toolkit. Teachers give support, removing it as learners gain independence. Permanent scaffolding hinders long-term learning by reducing effort. Design support with a plan for removal, not lasting reliance.

Questioning appears in the Toolkit via feedback and formative assessment. "Cold calling" and "think-pair-share" help learners retrieve information. These also offer formative data, making feedback timely (Black & Wiliam, 1998). Teachers asking only volunteers get a skewed view and help those who need it least.

The Teaching and Learning Toolkit is the EEF's best-known product, but it sits within a much larger evidence base. The EEF also publishes themed guidance reports, trial results, and implementation frameworks that are worth knowing about.

Guidance Reports cover classroom teaching practices. Current reports include Metacognition and Self-Regulated Learning, Improving Literacy in Secondary Schools, early language and literacy, maths in Key Stage 2 and effective professional development. Each report has prioritised, numbered recommendations and classroom examples from research. The metacognition guidance report sets out a seven-step model for teaching learners to consider their own thinking, with examples from primary and secondary classrooms across subjects.

EEF Project Reports are the published results of individual randomised controlled trials and other studies the EEF has funded. These are more technical than the Toolkit summaries but are freely available and searchable on the EEF website. If you want to know whether a specific commercial programme was trialled and what happened, the project reports are the right source. Some results are surprising: several programmes widely used in schools have shown no significant effect in EEF trials, or have shown effects limited to specific learner groups.

The Early Years Toolkit supports provision for three- to five-year-olds, covering areas such as self-regulation (Kraft et al., 2016). Maintained nurseries and funded settings use it to guide spending decisions (Siraj-Blatchford et al., 2002).

The International Toolkit draws on research from high-income nations. It helps teachers assess whether findings from the US or Australia apply in UK classrooms. Sometimes they do and sometimes they do not, and the toolkit supports that judgement.

EEF guidance highlights direct instruction, especially for literacy and numeracy with disadvantaged learners. Discover evidence on explicit teaching, worked examples and guided practice. Check if programmes use solid teaching principles before committing to them (EEF, various dates).


The EEF was founded, in part, to give school leaders a more rigorous basis for spending pupil premium funding. Since 2011, schools in England have been required to publish how they spend their pupil premium allocation and to demonstrate its impact. The EEF Toolkit became the dominant reference point for justifying these decisions. When Ofsted inspectors ask about the rationale for a school's pupil premium strategy, most senior leaders now point to the Toolkit.

This has had real benefits. Schools today spend less pupil premium money on laptops for disadvantaged pupils than in 2011. The Toolkit shows this strategy has very little impact on attainment. Schools also spend less on one-to-one teaching assistant support that focuses on being nearby rather than targeted teaching.

However, the instrumental use of the Toolkit in school improvement carries risks. If leaders use it to justify decisions already made, the evidence base becomes a compliance exercise. It stops being a genuine tool for improvement. The EEF is clear about this. Effective school improvement follows a cycle. Choose strategies based on evidence, put them in place properly, and check if they work in your school. Then make changes as needed.

The EEF's implementation guidance describes four stages. Exploration identifies needs and selects an approach. Preparation designs and plans the intervention. Delivery puts the plan in place and monitors progress. Sustaining embeds the improvement over time. Schools that skip preparation see weaker results than those that invest in planning (EEF).

School improvement plans should connect evidence to local need and implementation capacity. The EEF implementation guidance stresses careful selection, contextual fit and planned delivery rather than adding generic policy demands. Instruction informed by working memory, good questions and formative assessment is more useful than producing multiple tasks for their own sake.

When using EEF evidence in school improvement planning, three questions focus the work: What problem are we trying to solve? What does the evidence say about strategies that address this problem? And how will we know whether our implementation is working? The Toolkit answers the second question. Your school's data and professional judgement must answer the first and third.

| Strategy | Months Gain | Cost | Evidence Strength | Classroom Starting Point |
|---|---|---|---|---|
| Metacognition and self-regulation | +7 | ££ (low) | 🔒🔒🔒🔒 (high) | Pre-task planning prompts and post-task reflection for all learners |
| Feedback | +6 | £ (very low) | 🔒🔒🔒🔒🔒 (very high) | Actionable written comments linked to success criteria, not effort |
| Reading comprehension strategies | +6 | ££ (low) | 🔒🔒🔒🔒 (high) | Teacher think-aloud modelling summarise, question, clarify, predict |
| Collaborative learning | +5 | £ (very low) | 🔒🔒🔒🔒 (high) | Structured tasks requiring genuine interdependence, not parallel work |
| Peer tutoring | +5 | ££ (low) | 🔒🔒🔒🔒 (high) | Structured same-age or cross-age paired reading with clear role cards |
| Mastery learning | +5 | ££ (low) | 🔒🔒🔒 (moderate) | Clear unit outcomes with targeted re-teaching before moving on |
| Behaviour interventions | +4 | ££ (low) | 🔒🔒🔒 (moderate) | Consistent routines, calm correction, and relationship-building protocols |
| Extending school time | +2 | £££££ (high) | 🔒🔒 (low) | Poor value for money; evidence does not support this over better teaching |
| Digital technology | +4 | £££ (moderate) | 🔒🔒🔒 (moderate) | Only effective when it makes a specific learning mechanism more accessible |
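For leaders who keep planning data in a spreadsheet or script, the table above can be treated as a small dataset. The sketch below copies the figures from the table and shortlists strategies that are both well evidenced and affordable. The `Strategy` class, the `shortlist` function and the default thresholds (at least four padlocks, at most two pound signs) are hypothetical choices for illustration, not EEF recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strategy:
    name: str
    months: int    # months of additional progress
    cost: int      # number of pound signs (1-5)
    padlocks: int  # evidence strength (1-5)


# Figures copied from the summary table above.
TOOLKIT = [
    Strategy("Metacognition and self-regulation", 7, 2, 4),
    Strategy("Feedback", 6, 1, 5),
    Strategy("Reading comprehension strategies", 6, 2, 4),
    Strategy("Collaborative learning", 5, 1, 4),
    Strategy("Peer tutoring", 5, 2, 4),
    Strategy("Mastery learning", 5, 2, 3),
    Strategy("Behaviour interventions", 4, 2, 3),
    Strategy("Extending school time", 2, 5, 2),
    Strategy("Digital technology", 4, 3, 3),
]


def shortlist(strategies, min_padlocks=4, max_cost=2):
    """Keep well-evidenced, affordable strategies, highest months first."""
    kept = [
        s for s in strategies
        if s.padlocks >= min_padlocks and s.cost <= max_cost
    ]
    # Sort by months (descending), breaking ties with the cheaper option.
    return sorted(kept, key=lambda s: (-s.months, s.cost))


for s in shortlist(TOOLKIT):
    print(f"+{s.months} months  {'£' * s.cost:<5}  {s.padlocks} padlocks  {s.name}")
```

Filtering on evidence strength before sorting by months mirrors the advice earlier in this guide: the padlock rating decides how far the months figure can be trusted. A shortlist like this is a starting point for discussion, and the school's own data still has to decide which problem is being solved.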


These papers provide the research foundation behind the EEF's approach to evidence-informed practice. Together they offer a comprehensive and nuanced understanding of effective teaching. Research by Hattie (2008, 2012) and Coe (2020) highlights what works well, and evidence reviews (Slavin, 2008; Higgins et al., 2019) offer further insights. EEF guidance supports evidence use, and Visible Learning (Hattie, 2008) helps teachers improve learner outcomes.

The Sutton Trust-EEF Teaching and Learning Toolkit (2018) gives accessible summaries that help teachers make evidence-based choices. Hattie (2008) showed how effect sizes indicate impact, and Visible Learning synthesises many meta-analyses. Slavin (2008) champions evidence-based education programmes for learners.

Higgins, S., Katsipataki, M., Kokotsaki, D., Coleman, R., Major, L.E. and Coe, R. (2014)

The Toolkit's primary reference explains effect sizes and months of progress (Higgins et al., 2014). It details how evidence is graded and summarises more than 35 strategies. Schools planning with the Toolkit will find it helpful.

Hattie's (2008) Visible Learning synthesises over 800 meta-analyses on achievement. Educational research heavily cites this study. Find Hattie's key findings on what helps every learner.

Hattie, J. (2009)

Hattie (2008) analysed many studies to find factors impacting learner success. "Visible learning" helps teachers see learning from the learner view. Learners become aware of their learning (Hattie, 2012). The EEF uses this in top strategies like feedback (Sutton, 2011) and metacognition (EEF, 2018).

What Makes Great Teaching? Review of the Underpinning Research

Commissioned by the Sutton Trust; widely cited in ITT and CPD design

Coe, R., Aloisi, C., Higgins, S. and Major, L.E. (2014)

This review identifies six key components of effective teaching. It finds that enthusiasm alone does not improve learner outcomes. Strong content knowledge and good formative assessment help learners most (Coe et al., 2014).

Education research and practice can change when schools use evidence carefully. Slavin (2020), Gorard (2017) and Whitehurst (2002) all argue for stronger evidence use, but schools still need to judge whether the evidence matches their context, learners and implementation capacity.

Slavin, R. (2020)

Slavin argues that evidence is central to education reform. Use programme evidence carefully: check the quality of the evaluation, the similarity of the setting and whether your school can implement the programme well.

Building Evidence into Education

Published by the Department for Education, 2013

Goldacre, B. (2013)

Goldacre (2013) argued for stronger use of trials and evidence in education. His Building Evidence into Education paper helped shape the debate about evidence-informed teaching and the role of rigorous evaluation.

The EEF website offers free reports and evidence summaries. See guidance from the EEF, including trial reports, on their searchable database. Interleaving (Rohrer, 2012) and Bloom's taxonomy (1956) align with findings on spacing and retrieval.
