Cognitive Biases: 12 Thinking Errors That Affect

Confirmation bias, anchoring, the Dunning-Kruger effect and 9 more cognitive biases affect how pupils learn and how teachers assess.

Teachers make hundreds of judgement calls each day, and some biases respond to training while others require structural safeguards.

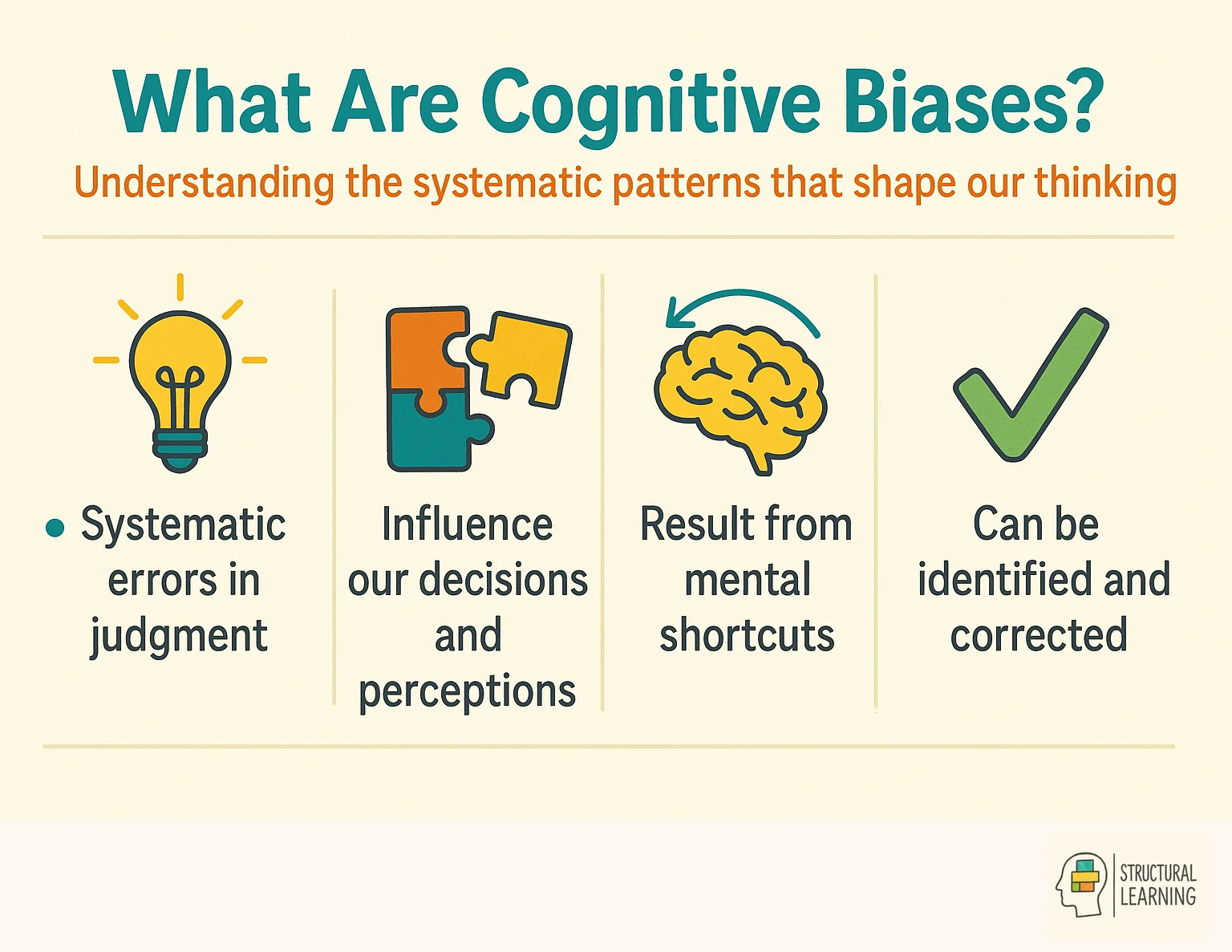

Cognitive biases are systematic thinking errors that lead to illogical judgements (Tversky & Kahneman, 1974). Learners build their own version of reality from what they perceive. A 2025 meta-analysis (Nature Human Behaviour) found that debiasing interventions had a small positive effect (g = 0.26). However, training struggled to reduce representativeness bias.

Kahneman (2011) described how fast, intuitive System 1 thinking produces many biases, which slower, deliberate System 2 thinking does not always catch. Kruger and Dunning (1999) found that low-performing learners overestimate their skills. Nickerson (1998) described confirmation bias as a common thinking error, and it is widespread in education. Rosenthal and Jacobson's (1968) study showed that teacher expectations affect learner success.

Cognitive biases can cause learners to misinterpret information, says research (Ross & Nisbett, 1991). This affects their behaviour more than objective facts, according to studies (Asch, 1951; Milgram, 1963). These biases create flawed judgements and illogical thinking (Kahneman, 2011).

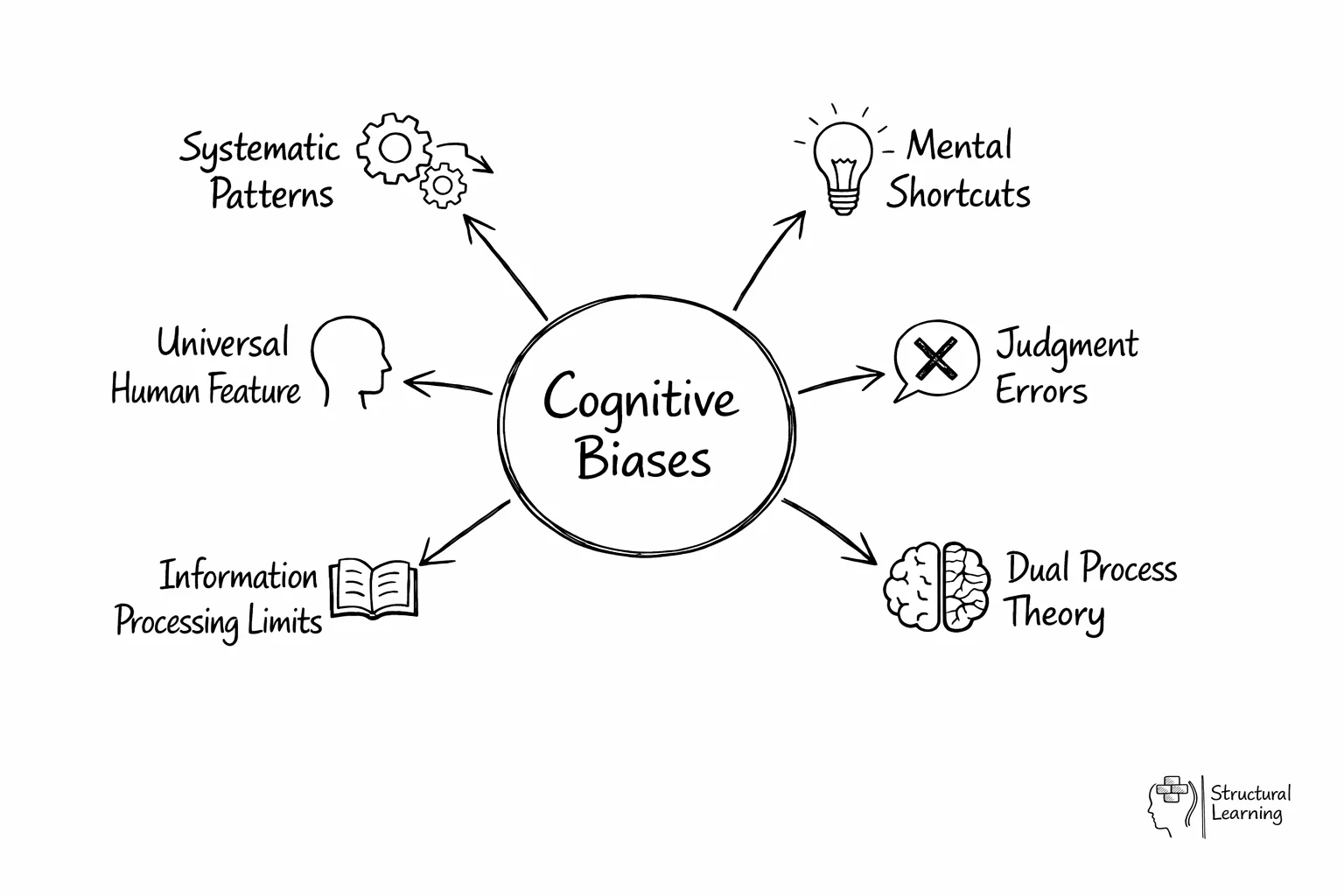

Although cognitive biases are a pervasive aspect of human cognition, they are not necessarily all maladaptive. They can be seen as a byproduct of the brain's attempt to simplify information processing.

They are often a result of the brain's limited information processing capacity and can be seen as mental shortcuts that usually get us where we need to go, but sometimes lead us astray. This phenomenon is well explained by dual process theory, which distinguishes between fast, automatic thinking and slower, more deliberate cognitive processes.

Here are three key points that summarise what cognitive biases are:

We will examine biases such as self-serving bias, where we attribute our successes to ourselves and our failures to the situation. The actor-observer bias, identified by Jones and Nisbett (1971), describes our tendency to attribute other people's actions to their character but our own actions to the situation.

Biases shape how rational we believe ourselves to be and create blind spots in self-awareness. The fundamental attribution error, studied in social psychology, and optimism bias, studied in positive psychology, both affect us. Our understanding of these ideas draws on sources such as the APA and research by Nisbett & Ross (1977) and Shepperd, Klein, Waters, & Weinstein (2013).

Cognitive biases affect education. The availability heuristic may make teachers focus on recent events (Tversky & Kahneman, 1974). This focus could distort learner understanding of history. Anchoring bias can affect marking; first impressions influence feedback (Strack & Mussweiler, 1997). These impressions impact learner progress.

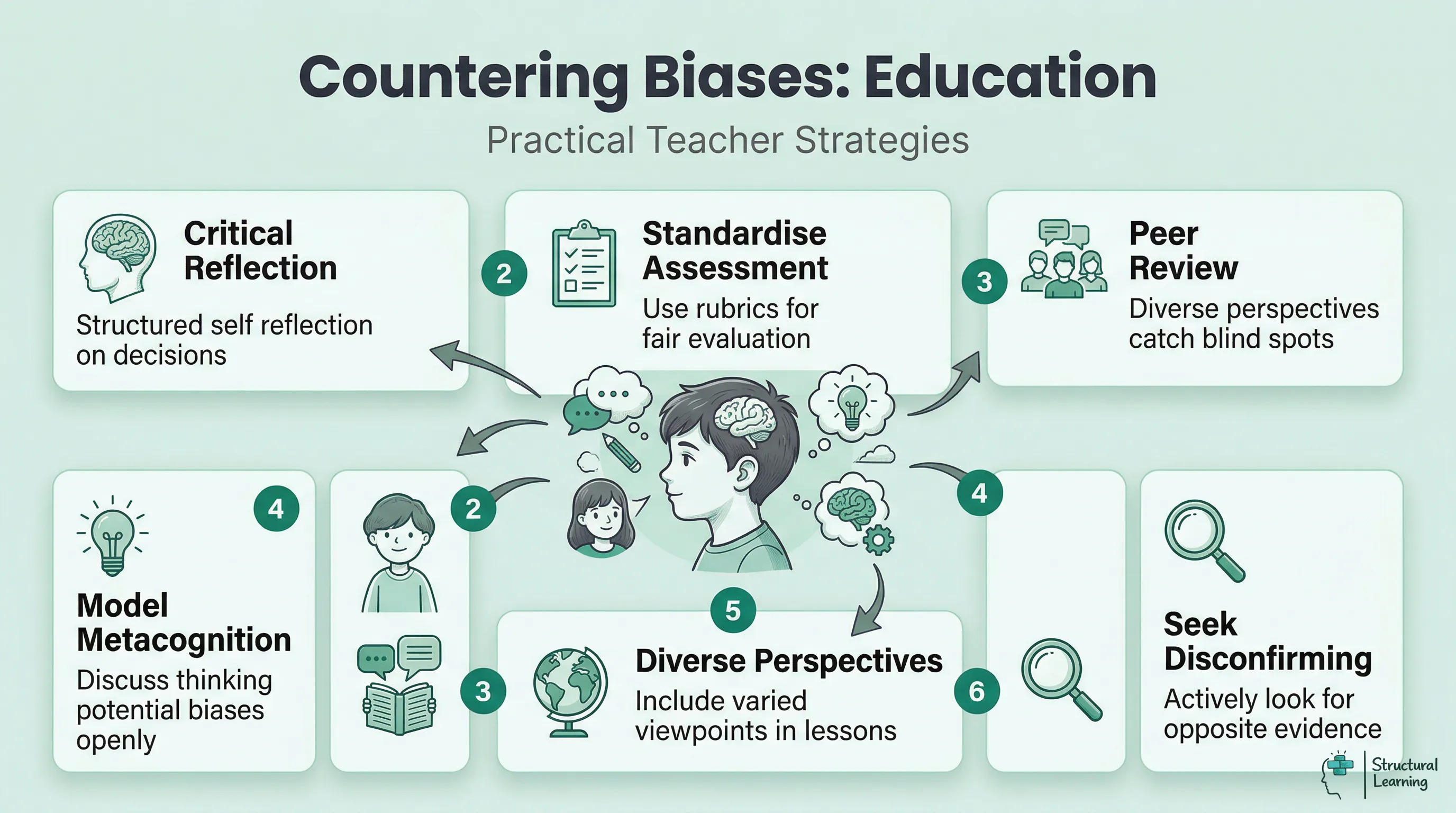

Implement structured reflection and use rubrics, say researchers (e.g. Kahneman, 2011). Encourage peer review. Teachers, model your thinking. Discuss biases. Use diverse views in lessons. Actively seek disconfirming evidence when marking work.

Kahneman and Tversky (1970s) showed thinking errors are common. Their work on judgements earned Kahneman a Nobel Prize in 2002. The research changed views on human rationality and decisions.

Cognitive biases were first identified and studied by a number of influential psychologists, each of whom made significant contributions to the field. This list showcases key researchers from the field of cognitive biases, each contributing a building block to our current understanding of the topic.

This article examines each bias, looking at origins and impacts. We show real-world examples (Kahneman, 2011; Tversky & Kahneman, 1974). This helps the learner understand a complex topic (Ariely, 2008; Thaler & Sunstein, 2008).

1. Peter Wason (1924-2003): Wason studied errors in logical reasoning. He devised the Wason selection task, which is widely used to demonstrate confirmation bias. His research in the 1960s showed that people tend to seek information that confirms their beliefs.

2. Robert H. Thouless (1894-1984): Thouless contributed to the early exploration of cognitive biases with his work on wishful thinking and the distortion of evidence. He was a psychologist at Cambridge University, and his research in the 1950s examined the psychology of judgment and decision-making.

3. Amos Tversky (1937-1996) and Daniel Kahneman (1934-2024): In the 1970s, Tversky and Kahneman changed how we understand judgement. Their work on prospect theory and on biases appeared in key journals and focused on how people think under uncertainty.

4. Gerd Gigerenzer (b. 1947): Gigerenzer's research focuses on the role of heuristics in decision-making. He has contributed to the understanding of how people make decisions under uncertainty and is known for his critique of the work of Kahneman and Tversky, emphasising the adaptive nature of heuristics.

5. Daniel L. Schacter (b. 1952): Schacter's work at Harvard University has been pivotal in exploring memory biases, especially false memories. His research in cognitive psychology and cognitive neuroscience has shed light on the mechanisms of memory distortion and their implications for cognitive biases.

6. Keith E. Stanovich (b. 1950): Stanovich showed that critical thinking relates to cognitive biases. His research links cognitive skills with rational choices and suggests that thinking skills help learners overcome biases. Teachers can use questioning to help learners recognise the limits of their own reasoning. This connects to social cognitive theories about how we process information.

7. Richard E. Nisbett: Nisbett showed that cultural background affects thinking. Some biases are universal, but culture and learning shape others. Teachers should note that learners from diverse backgrounds may solve problems differently. Understanding this also helps teachers give effective feedback across cultures.

Dweck's (2006) mindset research links to cognitive biases affecting how learners view success. This impacts social-emotional learning, helping learners manage emotions. Awareness of biases is key for learners with special needs. It affects perceptions of learning challenges (Dweck, 2006).

Researchers study cognitive bias and its impact on learners in classrooms. Bias is linked to cognitive dissonance when learners face new ideas (Festinger, 1957). Mindfulness-Based Cognitive Therapy addresses some biases (Segal et al., 2018). Work on cognitive distortions (Burns, 1980) helps learners change thinking patterns. Researchers also study how bias develops in infancy (Baillargeon, 2004).

Cognitive biases affect teaching practices. Teachers may favour evidence that fits their existing beliefs about a learner's ability (confirmation bias). They may judge using recent, easily recalled examples (the availability heuristic). Initial impressions can also skew assessments (Tversky & Kahneman, 1974).

The halo effect affects how you judge a learner across subjects. Overconfidence can lead to rushed teaching, which risks overloading learners (Sweller, 1988). Attribution bias leads teachers to blame learners' struggles on laziness instead of on obstacles to learning.

Teachers can make fairer judgements by noting common mistakes. Keep good records to counter availability bias. Use rubrics to lessen halo effects. Regularly question your first thoughts about each learner's skills. This helps you build fairer classrooms and choose teaching methods based on evidence.

Cognitive biases affect learning and teaching without us realising. Learners often show confirmation bias, looking for evidence that supports what they already believe (Nickerson, 1998). This limits critical thinking if learners ignore conflicting facts. The Dunning-Kruger effect (Kruger & Dunning, 1999) leads some learners to think they are more skilled than they are. Some able learners underestimate their abilities.

Teachers also make thinking errors. The halo effect means one trait colours a learner's overall mark. Anchoring bias creates unfair grades based on first impressions. Confirmation bias in lesson planning can lead teachers to overlook learner needs.

Acknowledging bias lets teachers use strategies that make learning fairer. Asking learners to look for evidence against their own ideas can reduce bias (Lord et al., 1979). Anonymous marking and reflection also help (Sadler, 1981; Boud et al., 1985). Teachers should review their marking and seek feedback to keep their practice fair.

Cognitive biases appear in classrooms daily, affecting teaching and learning. Teachers can show confirmation bias, says research (Rosenthal & Jacobson, 1968). They may favour information confirming initial impressions. The halo effect (Thorndike, 1920) means past good work affects assessment, creating errors.

Learners use thinking shortcuts that affect learning. The availability heuristic means learners focus too much on recent topics (Tversky & Kahneman, 1974). Learners also become overconfident, believing they understand material when it is merely familiar (Dunlosky et al., 2013).

Good teaching spots patterns. Teachers should question learner assessments to fight bias. Anonymous marking lowers halo effects. Teach learners metacognitive strategies. This helps them notice bad shortcuts (Kahneman, 2011). Learners improve self-assessment by separating recognition from recall (Bjork et al., 2013).

Cognitive bias means judgement deviates from logic (Tversky & Kahneman, 1974). Individuals may process information illogically. This can result in inaccurate conclusions based on perceptions (Gilovich, 1991; Ariely, 2008).

Provide learners with examples of confirmation or anchoring bias. Use activities to help learners recognise these biases in their own thinking. This improves their decision making skills (Kahneman, 2011; Tversky & Kahneman, 1974).

Teaching cognitive biases matters, say researchers. This work sharpens a learner's critical thinking skills. Learners then process information with more objectivity. As a result, they avoid decision-making errors (Kahneman, 2011; Tversky & Kahneman, 1974).

These errors can hinder effective learning. Teachers may oversimplify concepts, miss real-life examples, or neglect practical application (Bransford et al., 2000). Learners then struggle to fully understand the material (Willingham, 2009; Ambrose et al., 2010). Addressing these issues helps learners succeed (Brown et al., 2014).

Focus on learners showing bias awareness (Kahneman, 2011). Look for fewer poor choices (Ariely, 2008). Check if their critical thinking improves (Willingham, 2007; Halpern, 2014).

We can reduce bias with clear strategies. Kahneman (2011) showed that slowing down thinking helps. Teachers can pause to reflect before making assessment decisions about learners. This encourages fairer grouping and interventions.

Frameworks help guard against biases such as confirmation bias and the halo effect. Use rubrics that focus on what learners do, not on your feelings about them (Guilbert, 2005). Review work anonymously, or mark one question for all learners before moving on to the next. Work with colleagues; diverse views challenge bias (Hattie, 2012; Petty, 2014).

Self-monitoring boosts bias awareness (Kahneman, 2011). Note first impressions of new learners. Review these notes later to spot thinking patterns (Goleman, 1995). Teams can check for bias in meetings. Educators should question any quick judgements (Allport, 1954; Steele, 2010). This process makes bias recognition useful.

Grading can be affected by cognitive biases, impacting learners. The halo effect means one good thing colours later marking. Contrast effect changes grades based on prior work. Rosenthal and Jacobson (1968) showed expectations become self-fulfilling, altering grades and achievements.

Anchoring bias affects grading when teachers use irrelevant information as a reference point. Previous grades or appearance can skew assessment (Tversky & Kahneman, 1974). Confirmation bias makes teachers see what they expect (Nickerson, 1998). As a result, they may overlook learner progress or misinterpret answers (Darley & Gross, 1983).

Blind marking, where possible, reduces bias (Aronson, 2000). Use clear marking rubrics before you start. Mark all answers to one question at a time. Discuss marking with colleagues for consistency (Sadler, 2009; Bloxham, 2012).
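For teachers who manage marking digitally, the routine above can be sketched in a few lines of Python. This is only an illustrative sketch, not a tool described in the research cited: the function name `blind_marking_order`, the `S001`-style codes and the example names are all hypothetical. It gives each learner an anonymous code and then produces a freshly shuffled marking order for every question, so that scripts are marked one question at a time and no script is always seen first.

```python
import random


def blind_marking_order(learners, questions, seed=None):
    """Return (codes, order) for blind, question-by-question marking.

    codes maps each learner name to an anonymous code (hypothetical format).
    order maps each question to a shuffled list of those codes.
    """
    rng = random.Random(seed)

    # Shuffle before assigning codes so a code does not reveal register order.
    shuffled = list(learners)
    rng.shuffle(shuffled)
    codes = {name: f"S{i:03d}" for i, name in enumerate(shuffled, start=1)}

    # Reshuffle the scripts for every question so the same script is not
    # always marked first or straight after the same neighbour.
    order = {}
    for question in questions:
        batch = list(codes.values())
        rng.shuffle(batch)
        order[question] = batch
    return codes, order


codes, order = blind_marking_order(["Asha", "Ben", "Chloe"], ["Q1", "Q2"], seed=1)
for question, batch in order.items():
    print(question, batch)
```

The name-to-code mapping would be kept aside until all marks are entered. Passing a `seed` simply makes the order repeatable, which may help when two colleagues want to moderate the same sequence.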

Researchers (e.g. Kahneman, 2011; Tversky & Kahneman, 1974) explored cognitive biases. Bias affects learner judgement and decision making. Teachers can address bias to improve learning outcomes (e.g. Croskerry, 2003; Lilienfeld et al., 2009).

Researchers suggest AI dialogue systems affect learner cognition. A systematic review (629 citations) explores this area, examining how excessive use affects learners' cognitive abilities. More research is needed to fully understand the effects.

Zhai et al. (2024)

Over-reliance on AI systems could harm learners' thinking skills (Zawacki-Richter et al., 2019). Teachers using AI should know this, especially regarding automation bias (Cummings, 2004). Learners might accept AI answers without thinking critically (Parasuraman & Manzey, 2010).

Human-AI Collaborative Essay Scoring: A Dual-Process Framework with LLMs (63 citations)

Xiao et al. (2024)

AI can score learner essays, assisting teachers. Awareness of confirmation bias (Kahneman, 2011) and anchoring bias (Tversky & Kahneman, 1974) is vital. These biases impact AI and teacher assessment. Combined approaches may improve reliability.

Heuristics and Biases: The Psychology of Intuitive Judgment (3874 citations)

Gilovich et al. (2002)

Kahneman (2011) explores errors in thinking. People use shortcuts, leading to biases. These biases, like availability or representativeness (Tversky & Kahneman, 1974), impact learners and teacher judgements. Teachers need this knowledge.

Incentivizing Dual Process Thinking for Efficient Large Language Model Reasoning (17 citations)

Cheng et al. (2025)

AI systems can mimic dual-process thinking (Kahneman, 2011). System 1 gives quick answers; System 2 needs more thought. Teachers should understand cognitive biases: System 1 may cause errors, but System 2 helps learners analyse (Evans, 2003).

Planning Like Human: A Dual-process Framework for Dialogue Planning (45 citations)

He et al. (2024)

Researchers propose a new AI dialogue system framework. It uses thinking processes like human conversation planning. Teachers should grasp cognitive biases. The planning fallacy (Kahneman, 2011) impacts learner and AI decisions. Understanding helps teachers lead classroom discussions well.