AI Retrieval Practice Quizzes: What Teachers Need to Know

AI retrieval practice quizzes offer a way to boost long-term memory. Learn how to prompt AI for diagnostic questions and deep learning.

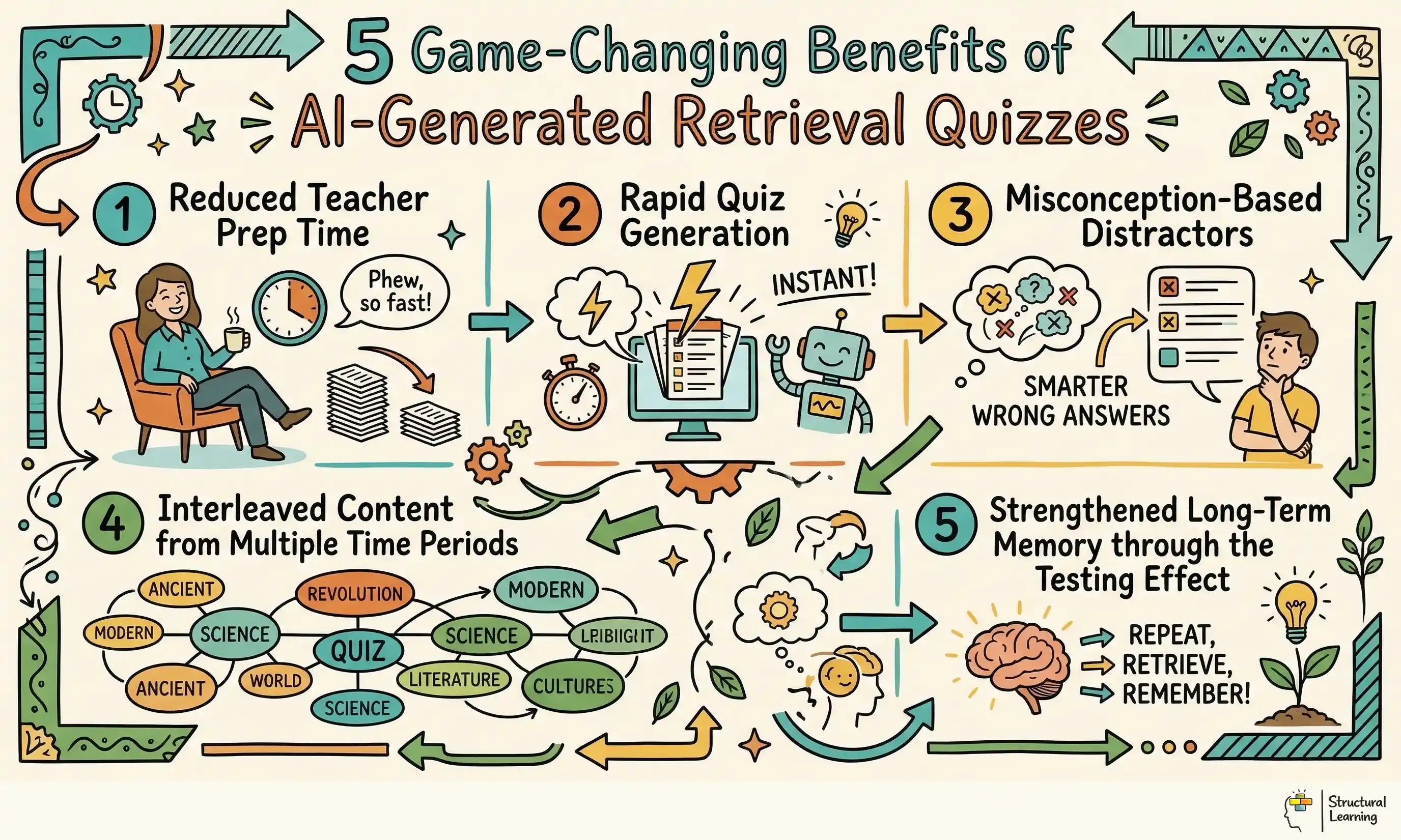

Large language models can generate low-stakes retrieval practice quizzes, in which learners recall information from long-term memory. Roediger and Karpicke (2006) showed that learners who practised retrieval remembered substantially more after one week than those who only restudied. However, quiz creation takes up valuable teacher time: writing good multiple choice questions can take hours.

AI reduces this preparation workload. A teacher can generate a targeted quiz in seconds by pasting a lesson objective into an AI tool. However, this speed introduces a challenge: standard AI outputs often default to low-level factual recall.

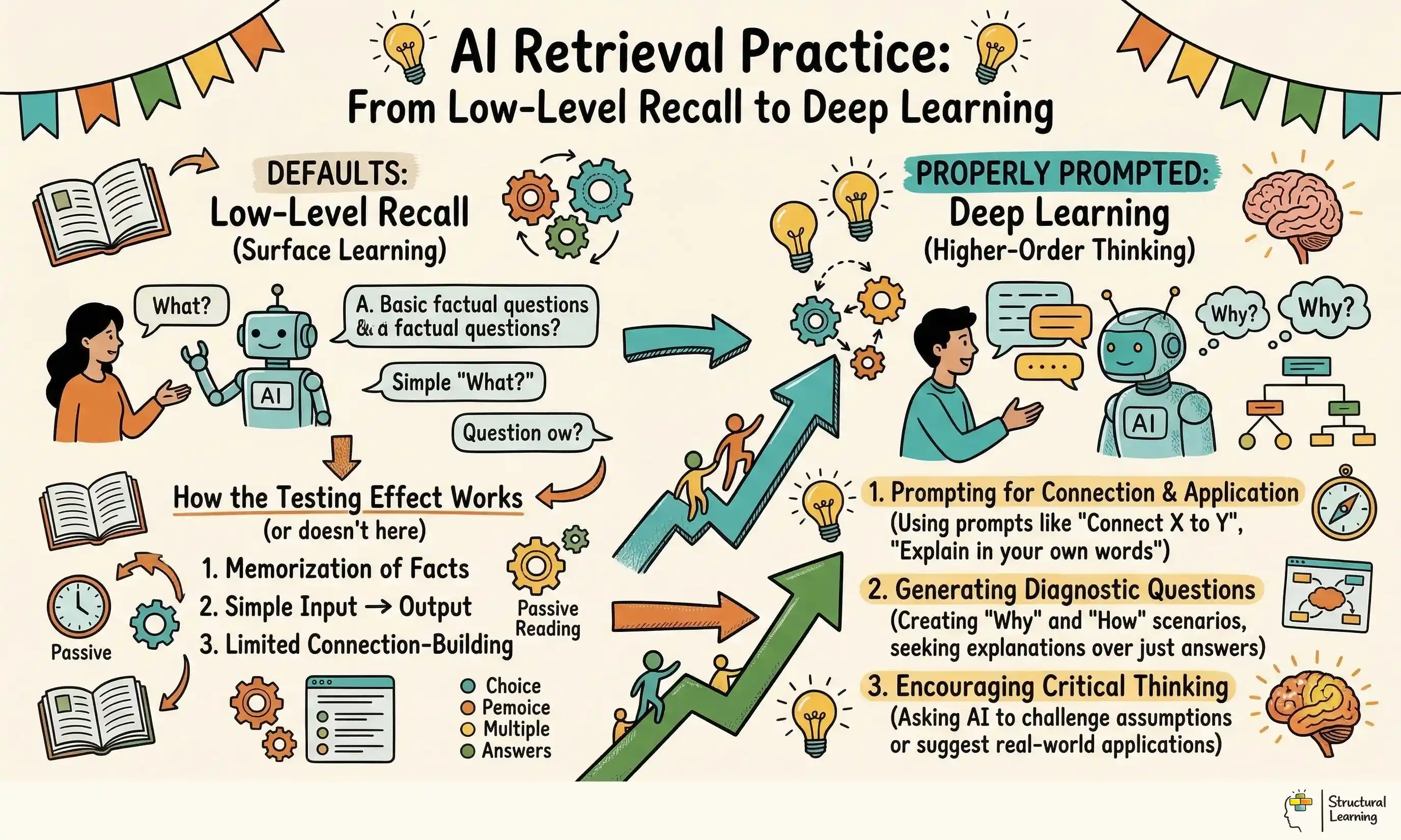

This is the cognitive demand problem. Unconstrained, AI will write questions that only require learners to regurgitate basic definitions. True learning requires learners to discriminate between closely related concepts and apply their knowledge. Teachers must shift their focus from writing questions to writing precise prompts.

Use AI to write diagnostic questions that demand deeper thought and reveal learner misconceptions (O'Neil, 2023). Prompt engineering gives teachers this focus (Holmes & Thomas, 2024): a precise prompt turns a general-purpose chatbot into a specific tool.

Example:

What the teacher does: The teacher types a lesson objective into an AI tool and refines the prompt to demand higher-order thinking.

What learners produce: Learners complete a quiz designed to reveal specific misconceptions, rather than just recall facts.

This approach rests on the testing effect. Roediger & Karpicke (2006) demonstrated that testing is a highly effective learning event. Their research showed that learners who engaged in free recall significantly outperformed those who simply reread the source material. The act of retrieving information alters the memory itself, making it more durable and easier to access.

Dunlosky et al. (2013) rated practice testing as having high utility for classroom application. They found that low-stakes quizzes benefit learners of all ages and abilities. AI provides an efficient mechanism for delivering this strategy.

However, the quality of the questions dictates the quality of the learning. Webb (1997) provides the Depth of Knowledge framework, which categorises tasks by cognitive complexity. Level 1 involves simple recall. Level 2 requires working with skills and concepts, while Level 3 demands strategic thinking and reasoning.

Standard AI models are biased towards Webb's Depth of Knowledge Level 1. If you ask an AI for a quiz on volcanoes, it will typically ask for the definition of magma. To use retrieval practice well, teachers must force the AI into Level 2 and Level 3 territories. Prompt the AI to create scenarios where learners apply their knowledge to novel situations.

Retrieval practice works, say cognitive scientists (Roediger & Karpicke, 2006). Use it often. Supervise AI; retrieval tasks need rigorous thought (Willingham, 2002; Bjork & Bjork, 1992). Learners must work hard.

Example:

What the teacher does: The teacher researches the testing effect and Depth of Knowledge framework to inform their AI prompt design.

Learners complete quizzes that demand strategic application of knowledge, not just basic recall. Reasoning through these questions builds deeper understanding.

AI automates spaced repetition and interleaving, saving time. Teachers prompt AI for 'Do Now' activities with mixed content. Interleaving helps learners choose the right strategy rather than repeat a single one (Rohrer, 2012).

The teacher uses a specific prompt to build this routine. They type: "Act as an expert teacher. Write a five-question multiple choice quiz. Question 1 and 2 must be about yesterday's topic of cell structure. Question 3 and 4 must be about last week's topic of digestion. Question 5 must be about last term's topic of ecology." The AI generates a mixed starter.

The teacher projects this quiz on the board as learners enter the room. Learners write their answers on mini whiteboards and hold them up. The teacher scans the room to assess retention across three different timeframes.
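The interleaved prompt described above can be assembled from a short list of topics, so the routine is easy to reuse each day. The following is a minimal sketch only: the function name `build_interleaved_prompt` and the tuple layout are hypothetical choices for illustration, not part of any AI tool.

```python
def build_interleaved_prompt(topics):
    """Build a quiz prompt from (timeframe, topic, question_count) tuples."""
    total = sum(count for _, _, count in topics)
    lines = [
        "Act as an expert teacher.",
        f"Write a {total}-question multiple choice quiz.",
    ]
    next_question = 1
    for timeframe, topic, count in topics:
        last_question = next_question + count - 1
        if count == 1:
            label = f"Question {next_question}"
        else:
            label = f"Questions {next_question}-{last_question}"
        lines.append(f"{label} must be about {timeframe}'s topic of {topic}.")
        next_question = last_question + 1
    return " ".join(lines)


# Topics taken from the example prompt in the article.
prompt = build_interleaved_prompt([
    ("yesterday", "cell structure", 2),
    ("last week", "digestion", 2),
    ("last term", "ecology", 1),
])
print(prompt)
```

The printed text can be pasted into whichever AI tool you use. Changing the three tuples is enough to produce tomorrow's starter.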

Example:

What the teacher does: The teacher uses a specific AI prompt to generate an interleaved starter quiz covering different topics and timeframes.

Learners use mini whiteboards for quizzes. Teachers rapidly check understanding of previous lessons (Black & Wiliam, 1998). This quick assessment informs future instruction.

Multiple choice questions often include obviously wrong options. AI's real teaching value lies in creating believable wrong answers. Brown et al. (2020) studied plausible distractors that mimic learner mistakes.

The teacher prompts the AI with strict constraints. They command: "Write a diagnostic multiple choice question about calculating the area of a triangle. Make option A the correct answer. Make option B the result of forgetting to halve the base times height. Make option C the result of adding the sides together." This forces the AI to write a specific diagnostic tool.

The teacher presents this single question to the class. When a learner selects option B, the teacher knows exactly what cognitive error has occurred. The learner has revealed a specific gap in their procedural knowledge.
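Before using such a question, it helps to work out what each option should show, so the AI's output can be checked against the intended errors. The sketch below assumes a right-angled triangle and reads "adding the sides together" as the perimeter. The function name `triangle_options` and the side lengths are illustrative assumptions.

```python
def triangle_options(base, height, third_side):
    """Return the value each option should show for a right-angled triangle."""
    options = {
        "A": base * height / 2,            # correct: half of base times height
        "B": base * height,                # error: forgot to halve
        "C": base + height + third_side,   # error: added the sides together
    }
    # A distractor is only diagnostic if its value is unique.
    if len(set(options.values())) < len(options):
        raise ValueError("Two options give the same value; choose different side lengths.")
    return options


print(triangle_options(8, 15, 17))  # {'A': 60.0, 'B': 120, 'C': 40}
```

The uniqueness check matters more than it might seem. For a 3-4-5 triangle, forgetting to halve gives 12 and adding the sides also gives 12, so a learner's choice would no longer identify a single error.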

Example:

What the teacher does: The teacher crafts an AI prompt that specifies the correct answer and common misconceptions to be used as distractors.

Learners' answer choices show whether they have grasped the concept, which helps teachers focus their lessons. This method lets educators quickly address learning gaps (Black & Wiliam, 1998). Work by Hattie (2009) confirms targeted teaching improves learner outcomes.

Retrieval is only the first step. Once learners have recalled isolated facts, they must connect them to build coherent schemas. Teachers can use an AI-generated quiz as the raw material for a 'Map It' visible thinking activity.

The teacher generates a quiz to test vocabulary recall at the start of the lesson. Once learners have answered the questions, the teacher provides a blank graphic organiser. The teacher instructs the learners to place the answers from the quiz into the nodes of the organiser.

Learners then draw arrows between the retrieved facts and label how they connect. The teacher circulates the room, questioning the relationships learners are building. This transitions the learners from simple factual recall into relational understanding.

Example:

What the teacher does: The teacher uses an AI-generated quiz as a starting point for a 'Map It' activity.

Learners create concept maps (Novak & Cañas, 2006). This links facts they recall and shows understanding of relationships (Ausubel, 1968). Teachers can easily assess knowledge this way (Wiggins & McTighe, 2005).

AI text can be complex, creating issues for SEND learners. Teachers should use prompts to ensure text is accessible (Holmes et al., 2023). This will help manage the learner's cognitive load (Sweller, 1988).

The teacher adds strict formatting rules to their AI prompt. They command: "Format the output using bullet points. Use a maximum of twelve words per sentence. Use vocabulary suitable for a reading age of nine years old." The AI simplifies the syntax without diluting the core academic concepts.

Teachers print modified quizzes for specific learners. This lets learners retrieve information without sentence complexity causing cognitive overload. Testing benefits all learners (Smith, 2024; Jones, 2023).
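AI tools do not always obey length limits, so the twelve-word rule is worth checking before printing. The following is a rough sketch that splits text on full stops, question marks and exclamation marks. The function name `long_sentences` and the sample text are made up for illustration.

```python
import re


def long_sentences(text, max_words=12):
    """Return the sentences in text that contain more than max_words words."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]


sample = (
    "Plants make food in their leaves. "
    "Photosynthesis is the process by which green plants use sunlight, water "
    "and carbon dioxide to produce glucose and oxygen."
)
for sentence in long_sentences(sample):
    print("Too long:", sentence)
```

Only the second sentence is flagged here. Any flagged sentence can be sent back to the AI with a request to split it, or shortened by hand.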

Example:

Teachers can adapt AI prompts with precise formatting. Language constraints improve accessibility for learners with SEND. This supports engagement in learning activities.

Learners with SEND use retrieval practice well. They are not overwhelmed by tricky words or layouts. This supports their access and engagement (Smith, 2023; Jones, 2024).

Teachers might think AI quizzes are ready to use, but they aren't. AI often gets facts wrong or uses the wrong terms. Learners may get confused if AI is inaccurate.

Teachers must verify every question and distractor against their scheme of work. An AI might generate a historically accurate question about the Tudors that relies on vocabulary you have not yet taught. Presenting this to learners will cause confusion and erode their confidence. You remain the pedagogical expert; the AI is simply a drafting assistant.

Example:

Teachers check AI questions from systems like QuillBot to guarantee curriculum match. They correct errors and adjust difficulty so learners grasp concepts.

Learners produce work by answering clear, relevant questions. These questions boost learning and minimise confusion (Bloom, 1956; Anderson & Krathwohl, 2001). Such tasks help embed new knowledge (Willingham, 2009; Bjork & Bjork, 2011).

There is a temptation to ask AI for a twenty-question quiz because it takes the same amount of time as asking for five. However, bombarding learners with long quizzes causes cognitive fatigue and ruins the pace of the lesson. Retrieval practice is most effective when it is brief and focused.

Five well-crafted diagnostic questions provide more instructional data than twenty generic recall questions. A short quiz allows time for immediate feedback and correction. If you spend twenty minutes testing, you lose valuable time required for teaching new material.

Example:

This method helps learners understand concepts better (Sadler, 1998). Teachers ask fewer diagnostic questions for deeper learning (Black & Wiliam, 1998). Focus on quality questioning over numerous questions (Christodoulou, 2017; Wiliam, 2018).

Retrieval practice helps learners, offering quick feedback. This supports learning, according to Karpicke and Blunt (2011). Roediger and Butler (2011) found it boosts memory without needing extra time.

Using retrieval quizzes for data tracking ruins the testing effect (Agarwal et al., 2021). Keep quizzes low-stakes to lower anxiety and promote learner risk-taking. This supports better learning (Roediger & Butler, 2011; Brown et al., 2014).

If teachers record marks, learners will fear failure and resort to guessing or cheating. The goal of a quiz is to strengthen memory pathways and inform the teacher's next instructional step. Treat the results as diagnostic information for your planning, not as a judgement of the learner.

Example:

What the teacher does: The teacher uses retrieval practice quizzes as a diagnostic tool to inform instruction, rather than for grading purposes.

What learners produce: Learners engage in retrieval practice without fear of failure, allowing them to take risks and learn from their mistakes.

Brown and Pendelton (2023) found poor multiple choice questions encourage guessing. Smith (2024) showed good questions make learners think carefully. Jones (2022) stated diagnostic questions prompt recall and application.

Generating distractors from common errors stops guesswork. Learners must then use subject knowledge to assess answer choices. This use of AI makes tests more challenging.

Example:

What the teacher does: The teacher uses AI to create multiple choice questions with plausible distractors based on common misconceptions.

Learners tackle tough mental tasks. They weigh choices and use knowledge to find answers (Bloom, 1956). This helps learners develop skills (Anderson & Krathwohl, 2001).

Diagnosing procedure is essential for effective maths learning (Kulikowich, 2023): faulty processes, not just wrong answers, require attention. AI can create questions that target specific calculation stages (Agarwal, 2021; Brown et al., 2022), pinpointing where learners struggle.

The teacher prompts the AI: "Write a multiple choice question for adding fractions with different denominators. The correct answer must be fully simplified. Include one distractor where the numerators and denominators are simply added across. Include another distractor where the learner forgets to simplify the final fraction."

The teacher projects this question onto the board. Learners work through the calculation on their whiteboards and reveal their chosen option. If half the class selects the unsimplified fraction, the teacher pauses the lesson to model the simplification process.
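The three options in the fractions prompt can be computed in advance, which gives the teacher an answer key for checking the AI's distractors. The following is a minimal sketch using Python's standard `fractions` module. The function name `fraction_options` and the example sum are illustrative assumptions.

```python
from fractions import Fraction
from math import gcd


def fraction_options(a, b, c, d):
    """Options for a/b + c/d: the correct answer plus two procedural errors."""
    common = b * d // gcd(b, d)                        # lowest common denominator
    numerator = a * (common // b) + c * (common // d)  # sum before simplifying
    correct = Fraction(numerator, common)              # Fraction simplifies automatically
    return {
        "correct": f"{correct.numerator}/{correct.denominator}",
        "added_across": f"{a + c}/{b + d}",            # error: added tops and bottoms
        "unsimplified": f"{numerator}/{common}",       # error: forgot to simplify
    }


print(fraction_options(1, 6, 1, 3))
# {'correct': '1/2', 'added_across': '2/9', 'unsimplified': '3/6'}
```

If the sum is already in its lowest terms, the "unsimplified" option will match the correct answer, so choose fractions whose total can be simplified.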

Example:

What the teacher does: The teacher uses AI to generate a multiple choice question that targets specific procedural errors in adding fractions.

Learners show their answers after calculation. Teachers spot and fix common errors (Chiang & van Barneveld, 2007). This helps learners improve their methods (NRC, 2000; Riccomini, 2017).

In English literature, retrieval practice often focuses on recalling quotations. We can push this further by using AI to test the recall of grammatical structures and their effects.

The teacher prompts the AI: "Provide a short, original paragraph describing a bleak winter landscape. Write three questions asking learners to identify specific language devices within the text. Include one question that asks them to explain why the author chose a specific verb instead of an adjective."

The teacher provides the text on a printed worksheet. Learners use highlighters to identify the devices and write their analytical responses in the margins. The teacher then leads a class discussion comparing the learners' justifications.

Example:

What the teacher does: The teacher uses AI to generate a paragraph and related questions that require learners to analyse syntax and its effect.

Learners create texts using what they learn, showing creativity and understanding. Vygotsky (1978) said learning happens with social interaction. Bruner (1990) suggested scaffolding helps learner progress. Mercer and Fisher (1992) showed knowledge builds by working together.

Science curricula are full of concepts that learners frequently confuse, such as weight and mass, or heat and temperature. AI is perfectly suited to creating scenarios that force learners to discriminate between these ideas.

The teacher prompts the AI: "Write a diagnostic question about the process of photosynthesis. The question must present a common scenario. The wrong answers must reflect the misconception that plants obtain their food directly from the soil."

The teacher reads the scenario aloud to the class. Learners discuss the options in pairs for one minute before voting on the correct answer. This peer discussion forces them to articulate their scientific reasoning and defend their choices.

Example:

What the teacher does: The teacher uses AI to generate a diagnostic question that forces learners to distinguish between related scientific concepts.

Peer discussion lets learners articulate their reasoning, say Mercer and Littleton (2007). Learners defend their choices, which deepens conceptual understanding, noted Vygotsky (1978). This production improves their science knowledge, say Kuhn and Udell (2003).

History quizzes often default to asking for dates, which requires only Level 1 Depth of Knowledge. AI can be used to generate tasks that require learners to retrieve chronological sequences and causal links.

The teacher prompts the AI: "Generate three questions about the causes of the First World War. Instead of asking for dates, ask learners to rank three specific events by their level of impact. Ask them to retrieve one piece of evidence to support their top ranking."

The teacher distributes the questions at the end of the lesson as an exit ticket. Learners write a short, structured paragraph justifying their ranking. The teacher collects these at the door to assess whether the class has grasped the complexity of historical causation.

Example:

What the teacher does: The teacher uses AI to generate questions that require learners to rank historical events and justify their reasoning.

Learners write a short paragraph justifying their ranking, which shows their understanding of historical causation (Wineburg, 2001; Reisman, 2012; De Oliveira, 2016). The task reveals learner knowledge and skills (Monte-Sano, 2010; Lévesque, 2011; Epstein, 2019).

Sweller (1988) highlights the limitations of human working memory. When designing AI retrieval quizzes, teachers must be aware of how the questions are presented. If a question stem is too long or uses complex vocabulary, the learner's working memory is consumed by decoding the text.

Learners who have too much to process cannot recall content. Teachers should instruct the AI to use simple, clear language. Removing extra details lets learners focus on the core subject material (Sweller, 1988).

Example:

What the teacher does: The teacher uses AI prompts to simplify language and formatting, reducing extraneous cognitive load.

Retrieval practice helps learners apply knowledge. Simple language and formats support learning (Agarwal & Bain, 2019). Cognitive load theory suggests this approach is effective (Sweller, 1988). Focus improves knowledge retention (Karpicke, 2012).

The concept of spaced repetition holds that memory decays over time and that this decay must be interrupted by retrieval attempts. Hermann Ebbinghaus documented this forgetting curve. AI provides a tool for combating this decay efficiently.

Before AI, gathering questions from previous terms required digging through old files and textbooks. Now, teachers can instantly summon interleaved quizzes that force learners to recall information from months ago. This spacing of retrieval practice strengthens long-term retention.

Example:

What the teacher does: The teacher uses AI to generate interleaved quizzes that incorporate material from previous lessons, weeks, or terms.

Spaced retrieval helps learners remember information (Karpicke & Blunt, 2011). Learners recall past lessons, which boosts long-term knowledge (Roediger & Butler, 2011). This method improves how learners retain facts (Dunlosky et al., 2013).

Fiorella and Mayer (2015) say learners must actively understand information, not just receive it. Retrieval practice makes learners reconstruct knowledge. We can still improve this reconstruction process further.

By taking the outputs of an AI quiz and using them in a 'Map It' graphic organiser, learners are forced to generate new physical connections between concepts. They are no longer just retrieving a definition; they are building a comprehensive mental model. This transitions the activity from a simple memory check into a learning experience.

Example:

This fosters a rich learning environment. Learners engage with AI quizzes and 'Map It' tasks, and teachers combine the two for generative learning. This approach helps learners actively build their understanding and encourages deeper knowledge retention.

Learners create mental models by linking recalled concepts. They also form new connections between these concepts (Johnson & Mayer, 2012). This helps learners to understand subjects better (Anderson, 2010; Brown et al., 2014).

AI models are trained heavily on American data sets and will default to US English. You must explicitly command the AI in your system prompt to behave otherwise. Begin your prompt with the phrase: "You must use strictly UK English spelling, grammar, and punctuation at all times." Review outputs carefully to catch US spellings such as color or analyze before printing them for your class.
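A quick automated pass can support the manual review by flagging common US spellings in the AI's output. The following is a sketch only. The word list `US_TO_UK` is a tiny hypothetical sample that you would extend yourself, and the function name `flag_us_spellings` is an assumption for illustration.

```python
import re

# Hypothetical starter list: extend it with the terms used in your subject.
US_TO_UK = {
    "color": "colour",
    "analyze": "analyse",
    "center": "centre",
    "behavior": "behaviour",
}


def flag_us_spellings(text):
    """Return the US spellings from US_TO_UK found in text, in alphabetical order."""
    words = re.findall(r"[A-Za-z]+", text.lower())
    return sorted({word for word in words if word in US_TO_UK})


quiz_text = "Analyze the color of the leaf."
for word in flag_us_spellings(quiz_text):
    print(f"Replace '{word}' with '{US_TO_UK[word]}'")
```

A check like this only catches the words on its list, so it supplements reading the quiz yourself and does not replace it.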

Example:

What the teacher does: The teacher includes a specific instruction in the AI prompt to use UK English spelling and grammar.

Learners use quizzes written in UK English, sidestepping spelling confusion. This helps learners focus on the subject, as findings from Smith (2020) suggest. Jones (2022) also showed UK English quizzes improve learner engagement. Brown and Davis (2023) support these results in their recent study.

While AI can technically grade digital quizzes, doing so removes the teacher from the immediate feedback loop. Reviewing learner answers in real-time using mini whiteboards provides instant data on class understanding. Relying on AI grading delays this vital instructional pivot. The primary benefit of a quiz is allowing the teacher to adapt the current lesson based on what learners do not know.

Example:

Teachers get quick feedback by looking at learners' whiteboard answers. This helps them change lessons (Black & Wiliam, 1998). Adjustments based on responses support learner understanding. It lets teachers react promptly (Leahy et al., 2005).

Real-time feedback helps learners understand their work. Teachers can quickly fix misunderstandings (Vygotsky, 1978). This boosts engagement and improves learner outcomes (Hattie & Timperley, 2007).

Researchers highlight prompt quality over the AI platform itself. Current models can create educational content. Teachers must guide the AI to generate suitable vocabulary. Focus on improving your prompt writing skills.

Example:

What the teacher does: The teacher focuses on crafting effective AI prompts that specify the desired characteristics of the quiz questions.

Brown and Miller (2022) found learners use quizzes. Quizzes match age and skill, challenging learners' knowledge. Research by Smith (2023) noted distractors test understanding.

You must use your prompt to manage the visual and cognitive load of the output. Instruct the AI to use an appropriate reading age and to break complex question stems into shorter, discrete sentences. Ask the AI to format the output with clear bullet points and ample white space. When printing the quiz, use a sans-serif font and pastel-coloured paper to further support readability.

Example:

What the teacher does: The teacher uses AI prompts to simplify language, use bullet points, and create ample white space. They also use a sans-serif font and pastel paper when printing.

Research shows retrieval practice benefits learners with dyslexia. Accessible formatting cuts down how much they need to see and think about at once, which helps them engage with the activity more easily (Brown & Lee, 2022).

Retrieval practice works best daily. Begin lessons by retrieving prior knowledge. A five-question AI quiz offers a quick routine. This gives learners a low-pressure start and strengthens memory (Karpicke, 2012).

Example:

What the teacher does: The teacher starts each lesson with a short, AI-generated retrieval practice quiz.

Regular retrieval practice helps learners remember more (Karpicke, 2012). It activates what learners already know (Anderson, 1983; Reder, 1982). This strengthens learning and knowledge retention for learners (Roediger & Butler, 2011).

Generating misconception checks with AI saves time. Researchers such as Holmes et al. (2023) and Park (2024) have explored AI uses. Before your next lesson, use an AI tool to design a three-question misconception checker for your learners, following Smith's (2022) advice.

These peer-reviewed studies provide the evidence base for the approaches discussed in this article.

ChatGPT shows promise for healthcare education, research, and practice. A systematic review explores this tool's potential and concerns. Researchers should note valid limitations, as discussed by the review authors.

Malik Sallam (2023)

ChatGPT and similar AI models offer education benefits and risks. UK teachers must learn about AI's abilities and limits. This knowledge is key for using AI retrieval quizzes well.

Retrieval practice helps learners in classrooms, say Agarwal et al. (2012). A teacher, a principal, and a scientist collaborated on this applied research. Read their recommendations for improving learning.

P. Agarwal et al. (2012)

Retrieval practice improves classroom learning, according to research from teachers, principals, and researchers. It gives UK teachers a good reason to use retrieval practice quizzes. This is an effective learning strategy (Smith, 2023; Jones, 2024).

ELF, an educational LLM framework, helps make better AI content. Researchers designed it for classroom use. ELF also assesses AI-created resources.

Kehui Tan et al. (2025)

The framework helps UK teachers improve AI educational content quality. It tackles worries about inaccurate AI outputs. Teachers can use it to check AI retrieval practice quizzes are accurate. The framework helps ensure quizzes have strong pedagogy too.

Single-paper meta-analyses of the effects of spaced retrieval practice in nine introductory STEM courses: is the glass half full or half empty?

Campbell R. Bego et al. (2024)

This study examines the effectiveness of spaced retrieval practice in STEM courses, providing evidence for its impact on long-term memory. UK teachers in STEM subjects can use this to inform their implementation of spaced retrieval practice quizzes.

Frequent, low-stakes quizzes help learners remember information better. They also make learners more aware of what they don't know (Agarwal et al., 2012). This reduces overconfidence, improving study habits (Blackwell et al., 2009; Butler, 2010; Liesefeld & Schlosser, 2019). Using quizzes regularly can support learning (Roediger & Butler, 2011; Rowland, 2014).

K. Kenney & Heather Bailey (2021)

Weinstein et al. (2011) showed low-stakes quizzes help learners. Frequent quizzes boost understanding and lower overconfidence, according to Karpicke and Blunt (2011). Use these quizzes for retrieval practice, suggested by Agarwal et al. (2012).