AI Literacy for Teachers: Understanding, Evaluating and

AI literacy means understanding how AI works, recognising its limitations and using it responsibly. Learn prompt engineering, how to spot hallucinations.

Teachers need AI literacy to assess and use artificial intelligence tools well. AI can change resource creation and learning, but teachers must know its limits. Use AI's power while protecting learners and keeping standards high (O'Neil, 2016).

AI literacy for teachers means knowing how AI systems create their answers. It also means checking those answers for accuracy and bias, and using AI in lawful and ethical ways. Teachers also need to know when not to use AI, especially when it could weaken teacher judgement, learner privacy or curriculum coherence (Long and Magerko, 2020; Miao and Cukurova, 2024).

What does the research say? Long and Magerko (2020) define AI literacy across five competencies: understanding AI concepts, recognising AI applications, evaluating AI outputs, using AI well and understanding AI ethics. The European Commission's DigComp 2.2 framework (2022) now includes AI literacy as a core digital competence for educators. A 2021 review found that teachers with higher AI literacy integrate technology more effectively (d = 0.43) and are better at checking AI-generated content for classroom use.

The data handling policies of major AI tools have evolved significantly:

Consumer AI Tools:

Education-Specific Products:

Key recommendations: Never put personal learner data into consumer AI tools. Schools should name the approved tools in their AI policies.

This is key for teachers. It is more than simple tech skills. Use it as a starting point for professional discussion: identify the learner's current need, record evidence from more than one lesson, and agree the next classroom adjustment with the SENCO or family.

Generative AI tools became common for teachers around 2023. Authors writing in 2023 name four key parts: know AI, judge AI output, use AI ethically, and teach learners to do so. This matters for UK teachers, who study how tech aids thinking.

O'Neil (2016) argues AI literacy needs new skills. Learners must verify plausible but inaccurate AI outputs. This differs from typical digital content use.

AI language models work by predicting the most likely next word based on patterns learned from massive text datasets during training. They do not truly understand meaning. Instead, they create answers by using statistical probabilities between words and concepts. This helps explain why AI can write convincing text that may include factual errors or weak logic.

Understanding the mechanics helps you predict what AI can and cannot do reliably. Large language models like Claude or ChatGPT function by predicting the most statistically likely next word in a sequence. They don't "know" facts; they recognise patterns from training data.

This prediction mechanism explains both their power and their problems. When you ask for a lesson plan on photosynthesis, the model draws from thousands of educational resources it encountered during training. It assembles something that looks like a lesson plan because it has seen many similar structures. The content feels authoritative because the model has learned what authoritative educational writing sounds like.

But here's the critical limitation: the model has no way to verify if the Calvin cycle steps it just described are correct. It cannot check a biology textbook. It simply generates text that fits the pattern. This is why hallucinations occur, where AI confidently presents false information as fact.
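The prediction mechanism described above can be illustrated with a toy model. The sketch below is not how a large language model is built; it is a minimal word-pair counter, trained on three made-up sentences (an assumption for illustration), that always picks the most frequent next word. It shows how pattern-following alone can produce a fluent sentence that is factually wrong.

```python
from collections import Counter, defaultdict

# Tiny, made-up "training data" (hypothetical; real models train on vastly more text).
corpus = (
    "glucose is made in the calvin cycle . "
    "oxygen is made in the light reactions . "
    "glucose is made in the calvin cycle ."
).split()

# Count which word follows which: a crude stand-in for learned patterns.
follow_counts = defaultdict(Counter)
for current_word, next_word in zip(corpus, corpus[1:]):
    follow_counts[current_word][next_word] += 1


def most_likely_next(word: str) -> str:
    """Return the word seen most often after `word` in the corpus."""
    return follow_counts[word].most_common(1)[0][0]


def generate(start: str, length: int) -> list[str]:
    """Build a sequence by repeatedly choosing the most frequent next word."""
    words = [start]
    for _ in range(length - 1):
        words.append(most_likely_next(words[-1]))
    return words


sentence = generate("oxygen", 7)
print(" ".join(sentence))  # prints "oxygen is made in the calvin cycle"
```

The output reads smoothly, yet it is false: "calvin" follows "the" more often than "light" in the training text, so the model chooses it without any check against biology.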

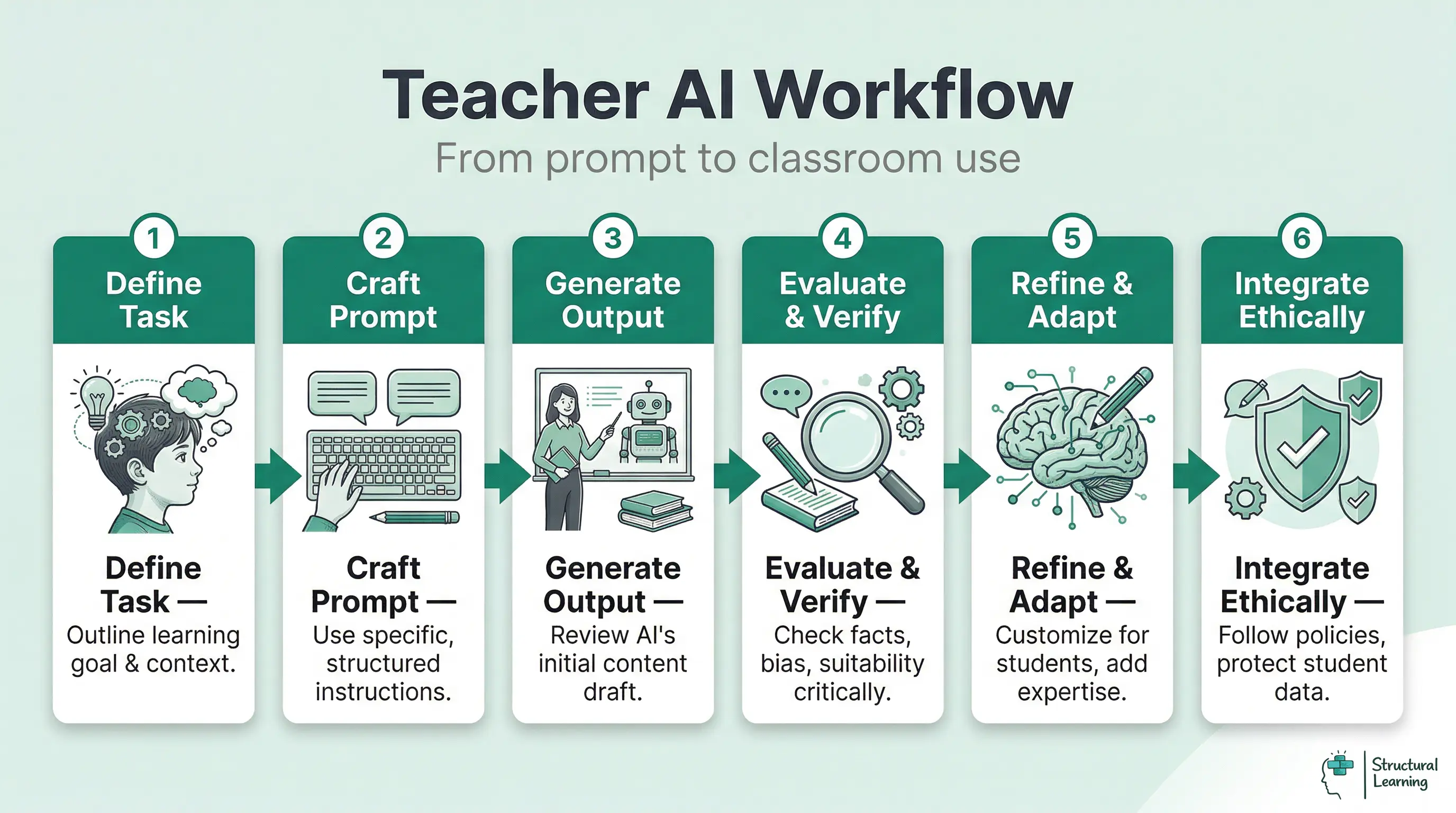

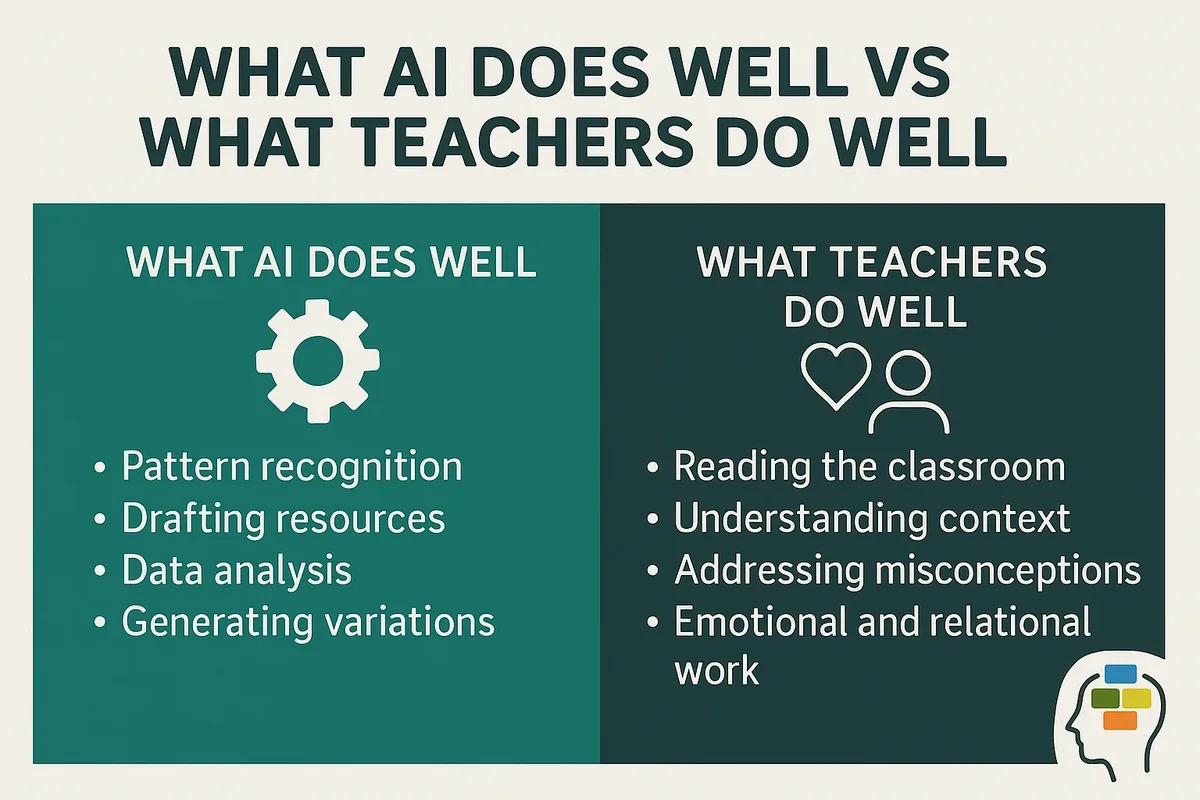

Your role shifts from consumer to critical editor. The AI provides a first draft; you provide the expertise. This relationship works well for time-consuming tasks such as generating discussion questions, where you can quickly spot errors. It works poorly for unfamiliar content where you cannot verify accuracy.

Prompt engineering helps teachers use AI tools to create good resources.

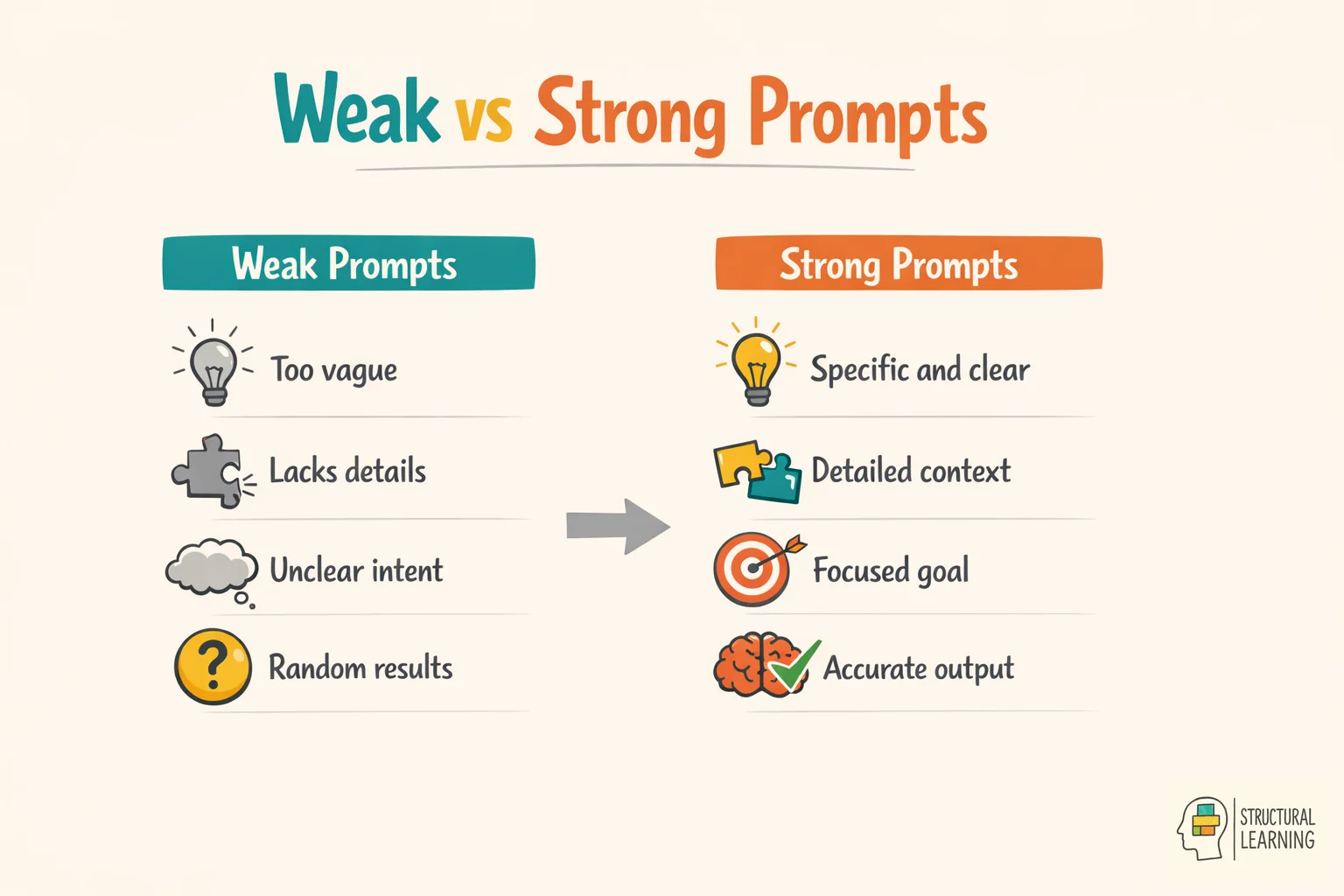

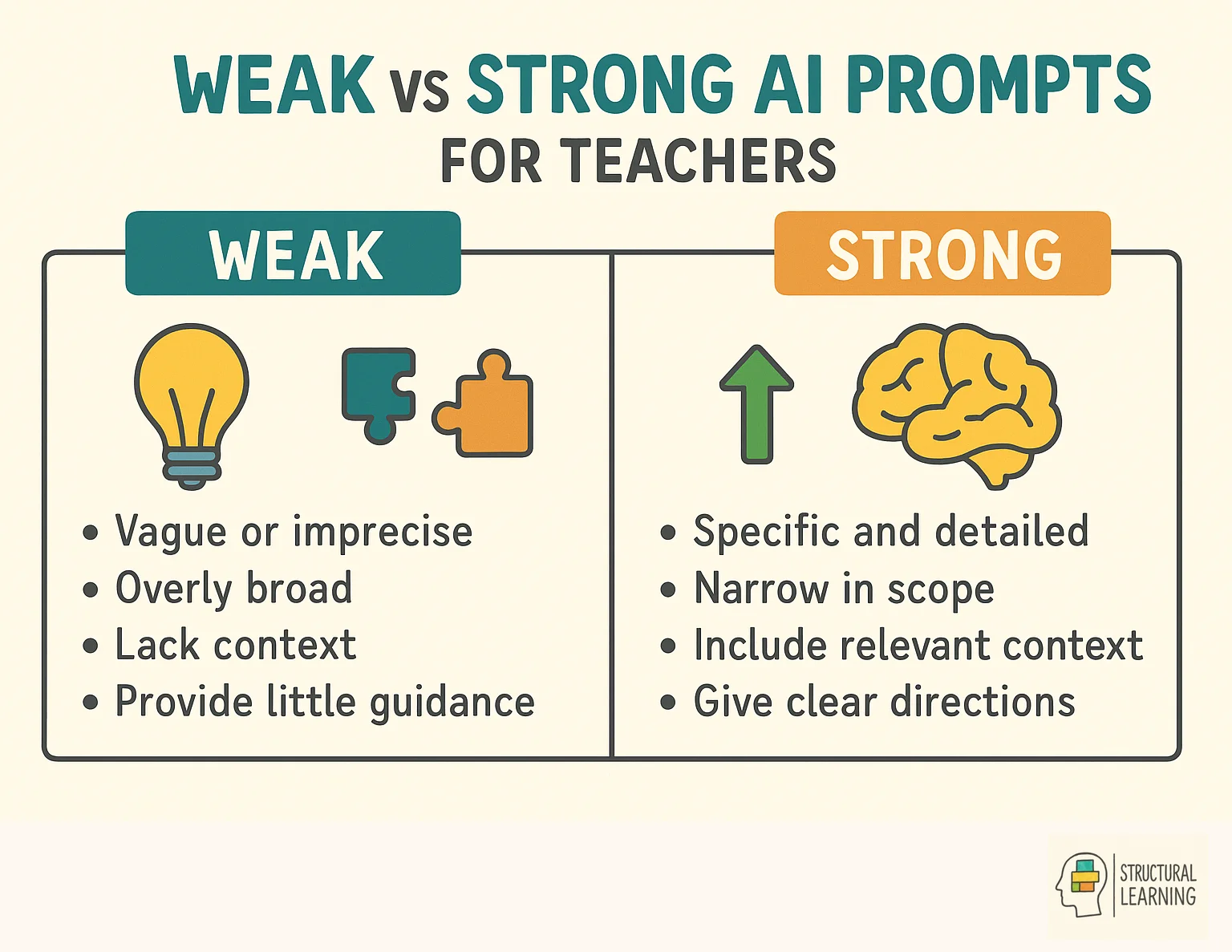

Effective prompts transform AI from mediocre assistant to powerful tool. The difference between a vague request and a well-structured one determines whether you save time or waste it correcting mistakes.

Specific prompts improve quality. Vague prompts often produce general outputs. Compare these two examples:

Weak prompt: "Make a worksheet about fractions."

Strong prompt: "Create a Year 4 worksheet with 8 questions on adding fractions with the same denominator. Include visual models for the first 3 questions. Use denominators of 4, 5, and 8 only. Provide an answer key with working shown."

The second prompt states the age group, topic scope, number of questions, visual needs, difficulty limits, and required parts. This gives the AI clear parameters.

Structure your requests in layers. For complex tasks, break your prompt into role, context, task, and format. This approach makes your thinking explicit.

This structure helps the AI understand what you want and why. The output becomes more pedagogically sound.
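As a rough illustration, the four layers (role, context, task, format) could be kept as separate fields and joined into one prompt. The class name `LayeredPrompt`, the labels, and the example wording are all hypothetical; the worksheet details are borrowed from the fractions example earlier.

```python
from dataclasses import dataclass


@dataclass
class LayeredPrompt:
    """Four layers of a prompt: role, context, task, and format."""
    role: str
    context: str
    task: str
    output_format: str

    def render(self) -> str:
        """Join the layers into one labelled block of text."""
        return "\n".join([
            f"Role: {self.role}",
            f"Context: {self.context}",
            f"Task: {self.task}",
            f"Format: {self.output_format}",
        ])


# Example values are illustrative, not a recommended wording.
prompt = LayeredPrompt(
    role="You are a primary mathematics teacher.",
    context="A Year 4 class is learning to add fractions with the same denominator.",
    task="Create a worksheet with 8 questions using denominators of 4, 5, and 8 only.",
    output_format="Include visual models for the first 3 questions and an answer key.",
)
prompt_text = prompt.render()
print(prompt_text)
```

Keeping the layers separate makes it easy to change one part, such as the year group, without rewriting the whole request.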

Use clear limits to maintain standards. Specify the reading level, vocabulary limits, or curriculum alignment you need. When creating resources, state the requirements clearly: "Use short sentences (maximum 12 words). Avoid complex clause structures. Include bullet points rather than dense paragraphs."
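A limit such as "maximum 12 words" is easy to check after the AI responds. The sketch below assumes a simple rule for splitting sentences (a break after a full stop, question mark, or exclamation mark), which will miscount abbreviations and decimals; the function name and draft text are made up for illustration.

```python
import re

MAX_WORDS = 12  # limit taken from the example requirement above


def long_sentences(text: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return sentences longer than max_words, splitting after . ! or ?"""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]


# Hypothetical AI draft: the second sentence breaks the 12-word limit.
draft = (
    "Fractions show parts of a whole. "
    "When two fractions share the same denominator you can add the numerators "
    "together and keep the denominator the same."
)
flagged = long_sentences(draft)
for sentence in flagged:
    print("Too long:", sentence)
```

A check like this only confirms the limit was followed. It does not judge whether the shorter sentences are accurate or clear.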

Templates save time. Create a collection of prompt structures for frequent tasks. Store them in a document you can quickly modify. This transforms prompt engineering from a creative challenge into routine workflow.
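One way to store reusable prompt structures is with Python's standard `string.Template`. The template names and placeholder fields below are hypothetical, chosen only to show the idea; a shared document of prompts works just as well.

```python
from string import Template

# Hypothetical template collection; the field names are illustrative.
PROMPT_TEMPLATES = {
    "worksheet": Template(
        "Create a $year_group worksheet with $question_count questions on $topic. "
        "Provide an answer key with working shown."
    ),
    "discussion": Template(
        "Write $question_count discussion questions on $topic for $year_group learners."
    ),
}


def fill_template(name: str, **fields: str) -> str:
    """Fill a stored template; substitute() raises KeyError if a field is missing."""
    return PROMPT_TEMPLATES[name].substitute(**fields)


worksheet_prompt = fill_template(
    "worksheet",
    year_group="Year 4",
    question_count="8",
    topic="adding fractions with the same denominator",
)
print(worksheet_prompt)
```

Using `substitute()` rather than `safe_substitute()` is a deliberate choice here: a forgotten field causes an error instead of sending a prompt with a blank in it.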

Check AI facts against trusted sources to spot errors such as incorrect dates or statistics. Before using AI in class, verify all facts with reliable sources.

AI models can give false information in the same confident tone they use for accurate content. These errors are called hallucinations, and they can be risky in education. Your learners trust the resources you provide.

Common hallucination patterns help you spot problems quickly:

Fabricated research citations appear often. The AI might reference "a 2024 study by Thompson et al. showing spaced retrieval improves long-term retention by 34%." The structure looks right, and the claim sounds plausible.

But the study doesn't exist. Always verify citations independently before including them in teaching materials.

Historical dates and events get scrambled. AI might correctly state that Charles I was executed in 1649, but then add that this led directly to the Restoration (which actually happened in 1660). The connections between facts become unreliable even when individual facts are accurate.

Check AI claims with care. If AI gives numbers, check the original research.

Educational research is complex, so simple stats can misrepresent it.

Verification strategies become part of your workflow:

Check facts with reliable sources. Compare curriculum content with exam board specifications or textbooks. Search Google Scholar with the study details to trace research claims. For historical information, consult academic encyclopaedias.

Test generated examples yourself. If the AI creates a worked mathematics example, solve it independently. If it provides a science explanation, check it against your own understanding or a reliable reference. This catches errors before learners see them.
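For arithmetic, independent checking can be partly automated. The sketch below recomputes a hypothetical AI-generated answer key for same-denominator fraction sums using Python's standard `fractions` module. The answer key, including its deliberate error, is invented for illustration.

```python
from fractions import Fraction

# Hypothetical AI-generated answer key: (first fraction, second fraction, AI's answer).
ai_answer_key = [
    ("1/4", "2/4", "3/4"),
    ("2/5", "1/5", "3/5"),
    ("3/8", "2/8", "5/16"),  # deliberate error: the denominators were added too
]


def find_wrong_answers(answer_key):
    """Recompute each sum exactly and return the rows where the AI's answer differs."""
    wrong = []
    for first, second, claimed in answer_key:
        correct = Fraction(first) + Fraction(second)
        if Fraction(claimed) != correct:
            wrong.append((first, second, claimed, str(correct)))
    return wrong


errors = find_wrong_answers(ai_answer_key)
for first, second, claimed, correct in errors:
    print(f"{first} + {second}: AI said {claimed}, correct answer is {correct}")
```

`Fraction` compares by value, so an unsimplified answer such as 2/4 is accepted as equal to 1/2. Whether learners should simplify is a teaching decision the code does not make.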

Use AI output as a starting point, not a final product. The model might produce an excellent paragraph structure with three problematic sentences. Edit ruthlessly. Your expertise determines what stays and what goes.

Teach learners about hallucinations. When learners use AI for research, they need the same verification habits. Model the process: show them how you check a claim, where you look for confirmation, what makes a source trustworthy.

Ethical AI needs clear policies on learner data, integrity, and disclosure.

This helps protect learners' wellbeing and support good education.

Data privacy controls what information goes into AI systems. Many AI platforms use your inputs to train future models unless you explicitly opt out. This means learner writing samples, assessment data, or personal information could become part of training datasets.

The UK GDPR applies fully to AI tool use. Do not enter identifiable learner information into public AI systems without consent and a valid educational purpose. Before using AI to create feedback on learner work, anonymise all writing samples. Remove names, school details, and any personal details.
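A first pass at removing names and school details can be scripted, although it never replaces a human read-through. The sketch below assumes you supply the names and school to redact; the function name, placeholders, and sample text are hypothetical. It will miss nicknames, misspellings, and indirect identifiers, so treat it as an aid and not as evidence of compliance.

```python
import re

# Simple email pattern for illustration; it does not cover every valid address.
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def anonymise(text: str, known_names: list[str], school_name: str) -> str:
    """Replace emails, the school name, and listed learner names with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = re.sub(re.escape(school_name), "[SCHOOL]", text, flags=re.IGNORECASE)
    # Longest names first so "Amira Khan" is replaced before "Amira".
    for name in sorted(known_names, key=len, reverse=True):
        text = re.sub(rf"\b{re.escape(name)}\b", "[LEARNER]", text, flags=re.IGNORECASE)
    return text


# Made-up sample; no real learner or school is referenced.
sample = (
    "Amira Khan of Oakfield Primary wrote this. "
    "Amira's email is amira.khan@example.org."
)
cleaned = anonymise(sample, ["Amira", "Amira Khan"], "Oakfield Primary")
print(cleaned)
```

Emails are replaced first so that a name inside an address is not partly redacted and left readable.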

Free AI tools and paid school versions differ in their data policies. ChatGPT's free tier uses chats for training unless this setting is turned off. Enterprise accounts provide better privacy (OpenAI, 2023). School leaders must check these differences when choosing platforms.

Academic integrity needs new approaches to assessment design. Traditional essay assignments are hard to police when AI can produce competent responses in seconds. Instead of fighting AI use, redesign tasks so AI becomes a tool rather than a shortcut.

Process-focussed assessment works well. Learners submit research notes, outline drafts, and reflection logs alongside final essays. AI can't fake the learning process. Metacognitive strategies become visible through this documentation.

Oral assessment provides AI-proof evaluation. Learners present their understanding, respond to questions, and defend their reasoning in real time. This reveals depth of knowledge that written work might mask.

Clear roles help prevent AI misuse in group work. Each learner should then show what they contributed.

Learners must understand the AI policy and be able to separate acceptable from unacceptable uses. Use AI to brainstorm ideas, check grammar, or make practice quizzes. Do not submit AI work as your own.

Communicate these boundaries clearly. Do not assume learners understand the ethical line. Model appropriate AI use.

Show learners how you use AI for planning but not for assessment design. Then discuss why some tasks benefit from AI assistance, while others require independent thinking.

Teachers should start with trusted AI tools, such as ChatGPT for lesson planning and Claude for creating content. They can also use subject-specific platforms that match curriculum standards. A strong toolkit has 3-4 reliable tools, rather than many platforms to learn at once. Choose tools with education-specific features, clear privacy policies, and links to existing teaching workflows.

Holmes et al. (2023) recommend starting with education-specific AI tools before moving to general platforms. Education tools typically include safety features, curriculum links, and privacy protections.

Google Classroom AI features integrate with existing workflows. The practice sets tool generates questions based on your curriculum content. The summarisation feature helps learners process long texts. These tools sit within your familiar Google environment with school-approved data handling.

Microsoft Copilot works with Office 365 for schools. Reading Coach gives learners fluency feedback. PowerPoint Designer suggests layouts from your content. These AI features feel like a smaller step than adopting a new platform.

Subject platforms give focused support. Educake (AI-powered) changes maths questions based on learner answers. Duolingo (AI) customises the order of language practice. PhET Interactive Simulations now use AI to suggest science questions.

Once you feel confident with these focussed tools, try general-purpose AI for lesson planning and resource creation. Claude, ChatGPT, and similar models are good at making first drafts. You then improve these drafts with your pedagogical expertise.

Create a personal AI workflow. Document which tools you use for which tasks. Record your best prompts.

Note what works and what doesn't. This personal knowledge base prevents you from solving the same problem repeatedly.

Teachers share strategies in AI communities. The AI in Education Network offers UK resources. Find practical examples in subject-specific social media groups. Discuss acceptable practice in school PD sessions.

AI should ease admin work, but keep learners thinking hard. Differentiation is time-consuming; AI-generated alternatives can free time to improve lessons.

Introduce AI literacy by showing learners AI tools, their limits, and correct uses (Holmes et al., 2023). Begin with simple activities that teach effective prompts and fact-checking AI outputs (Kasneci et al., 2023). Focus on critical skills and ethics while letting learners use AI as a learning aid (Pedro et al., 2019).

Your learners need explicit instruction in working with AI, not just warnings against misuse. Treat AI literacy as a fundamental skill. It sits alongside judging whether a website is credible or doing library research.

Start with a clear demonstration. Use AI live during lessons so learners can see the process.

For example, when you need a grammar text, project your screen and narrate your thinking: "I'm asking the AI for three sentences in passive voice about climate change. Let's see what it produces. Now we'll check each sentence together to make sure the passive construction is actually correct."

This process reveals several lessons simultaneously. Learners see how you structure prompts. They observe that AI makes mistakes. They learn verification habits. They understand that AI is a tool requiring human judgment.

Design AI-inclusive assignments that ask learners to evaluate ideas carefully. Give them an AI-generated paragraph with three planned errors, or use a real AI paragraph with natural errors. Ask learners to find the problems, explain why each one is wrong, and correct it. This builds the analytical skills they need for all AI interactions.

Create comparison tasks. Learners generate an essay outline independently, then use AI to generate an alternative outline for the same prompt. They evaluate both, identifying strengths and weaknesses in each approach. This builds awareness of what AI does well and poorly.

Set classroom AI protocols through shared discussion. Instead of setting rules alone, ask learners to help create the guidelines. Ask: "When might AI help us learn better? When might it prevent us from learning?" Learner-generated rules are often stricter than teacher-imposed ones, because learners understand the temptations.

Document AI use as part of learning. When learners employ AI for research, they note which questions they asked, what responses they received, and how they verified information. This creates accountability and develops metacognitive awareness of AI's role in their thinking process.
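The three things learners record (the question asked, the response received, and how it was verified) map naturally onto a simple log. The sketch below is one possible structure; the class name, field names, and sample entries are assumptions for illustration, and a paper or spreadsheet log serves the same purpose.

```python
from dataclasses import dataclass, field


@dataclass
class AIUseEntry:
    """One record of a learner's AI use during research."""
    question_asked: str
    response_summary: str
    verified_with: list[str] = field(default_factory=list)

    @property
    def is_verified(self) -> bool:
        """True when at least one checking source has been recorded."""
        return len(self.verified_with) > 0


def unverified(entries: list[AIUseEntry]) -> list[AIUseEntry]:
    """Return entries that still need checking against another source."""
    return [entry for entry in entries if not entry.is_verified]


# Hypothetical log entries.
log = [
    AIUseEntry(
        question_asked="When did the Restoration happen?",
        response_summary="The AI said 1660.",
        verified_with=["class textbook"],
    ),
    AIUseEntry(
        question_asked="What caused the English Civil War?",
        response_summary="The AI listed three causes.",
    ),
]
to_check = unverified(log)
for entry in to_check:
    print("Still to verify:", entry.question_asked)
```

Listing unverified entries gives learners and teachers a quick view of which claims still need a second source.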

Teach prompt engineering as a practical skill. Learners who learn to write effective prompts gain a valuable capability. They also develop clearer thinking about their own questions. The process of crafting a specific, well-structured prompt requires understanding what you actually want to know.

Address the ethical issues directly. Talk about why submitting AI work as your own is dishonest.

Then explore how AI might reinforce biases. Ask who benefits from widespread AI adoption, and who might be harmed. These discussions link well to wider critical thinking goals.

AI literacy can cut workload by automating tasks. This lets learners focus on complex ideas and creativity. Poor AI use may add workload if learners struggle with tools (Holmes et al., 2023). Good teaching shows learners when to use AI (Zawacki-Richter et al., 2019) and when to think alone (Hwang et al., 2021).

Cognitive load theory, developed by Sweller (1988) and Chandler and Sweller (1991), helps explain AI's impact on learning. Researchers such as Mayer and Moreno (2003) advise reducing unnecessary burdens while maintaining helpful challenges for the learner.

AI can help learners reduce workload. For example, it can suggest research categories, which supports organisation.

This frees up learners' memory for analysis. If vocabulary is tricky, AI can simplify the text. This helps learners understand key concepts more easily.

But AI can also remove germane load, which is the useful mental effort that builds expertise. When learners ask AI to solve mathematics problems, they avoid the productive struggle that develops problem-solving schemas. When AI writes essay topic sentences, learners miss the chance to practise organising arguments. The challenge is knowing the difference between obstacles to learning and the learning itself.

Use AI strategically to support, not replace, thinking. For a research project, AI might help generate search terms or suggest organisational frameworks. Learners still conduct research, evaluate sources, and develop arguments. The AI reduces the cognitive load of starting, not the intellectual work of completing.

For SEND learners, this difference matters. AI that turns text into simpler language can reduce accessibility barriers. But AI that completes assignments for the learner removes learning opportunities. The key question is whether the task asks learners to show the exact skill you want them to develop.

Create scaffolding that gradually reduces AI support. Early in a unit, learners might use AI to check grammar and suggest improvements.

Mid-unit, they use AI only to identify errors, without getting suggestions. Late in the unit, they work independently. This approach aligns with research on scaffolding in education, where support fades as competence grows.

Consider one time-consuming task, such as making resources. Test AI options to support this, as suggested by Holmes et al. (2023). Check AI work carefully; start small and build skills.

Start small and specific, rather than trying to change everything at once. Choose one routine task that takes too much time.

You might spend hours creating differentiated reading passages, writing individualised report comments, or generating practice questions. Use AI for that single task for one term. Then judge honestly whether it saves time without reducing quality.

Document what you learn. Keep notes on which prompts produce useful outputs, which tasks AI handles poorly, where verification takes longer than original creation. This evidence base informs your next steps and helps colleagues who follow your path.

Work with colleagues to build shared AI understanding. When three teachers explore AI, you find more issues and solutions together. Divide tasks: one focuses on resources, another on feedback, a third on planning.

Research on AI in education is growing (Zawacki-Richter et al., 2024). Studies find AI's impact relies on good implementation. Poor AI design can lower learner outcomes. Used well, AI may cut workload and keep standards.

Accept that this is evolving practice. What works in 2025 might not work well by 2027, as AI capabilities grow and learners become more familiar with them. Your AI literacy is not a fixed achievement. It is ongoing professional learning.

The fundamental question remains constant: does this tool serve learners' educational needs better than alternatives? When the answer is yes, proceed. When the answer is uncertain, experiment cautiously. When the answer is no, regardless of time savings, maintain your current practice.

AI literacy programmes can improve teacher efficiency and learner results (Holmes et al., 2023). Research suggests that gradual AI integration works best. Teacher training is vital for success.

AI tools are helping teachers with planning and resources. It is now important to understand AI literacy. Studies by researchers show how AI literacy is taught (Holmes et al., 2023).

AI literacy means understanding, judging, and using AI tools well in education. It involves knowing how AI works, its limits, and using it to ease workload. Teachers need AI literacy, as Ofsted checks tech supports learning (Holmes et al., 2023).

Effective prompts should be specific and structured, including clear context, desired format, learner level, and learning objectives. For example, instead of 'Make a worksheet about fractions,' try 'Create a Year 4 worksheet with 8 questions on adding fractions with the same denominator, including visual models and using denominators of 4, 5, and 8 only.' Structure your requests in layers with role, context, task, and format to make your thinking explicit.

AI can confidently present false information as fact, a problem called "hallucinations." Watch for fabricated citations, scrambled dates, and statistics without sources.

AI language models predict the next word that is most likely to fit. They do this from patterns in training data, not by truly understanding meaning or checking facts. They can write text that looks authoritative because they have learned what educational writing sounds like. But they cannot verify whether the information is correct, so they may give confident but incorrect responses.

Check facts in specifications or textbooks when planning lessons. Use Google Scholar to trace the studies behind research claims. Solve maths examples yourself before teaching them. Treat AI output as a draft; regular checks help learners.

AI literacy means learners must work with systems that give plausible, but sometimes wrong, information. This is different from normal digital skills, so new checking habits are needed. Learners must critically assess AI content.

The AI provides first drafts.

Clear school policies on data privacy, integrity, and AI use protect learners. Ethical frameworks are vital when schools use AI tools (Holmes et al., 2023). Teachers must model good AI use and teach ethical, critical AI skills.

AI literacy is still an unsettled construct. Long and Magerko define broad competencies for evaluating, using and working with AI, but the evidence base for teacher development has not yet settled which classroom behaviours show secure literacy (Long and Magerko, 2020). UNESCO's framework gives a useful progression, yet a framework is not the same as proof that a training course changes assessment quality, learner outcomes or safeguarding practice (Miao and Cukurova, 2024).

A second criticism is methodological. Much school AI evidence measures speed, workload or teacher attitudes, not curriculum coherence, subject reasoning or the quality of feedback over time. The Department for Education notes limited evidence on AI's impact on learner development and educational outcomes (Department for Education, 2025). Selwyn and colleagues also warn that teacher work often expands around the frailties of generative tools rather than simply shrinking (Selwyn et al., 2025).

There are cultural limits too. Large language models are trained on uneven data, so their default examples can favour dominant English-language, North American and commercially shaped norms. Holmes and Zhgenti argue that bias, opacity and threats to teacher agency are democratic concerns, not minor technical faults (Holmes and Zhgenti, 2024). For UK classrooms, this matters when AI rewrites local dialect, EAL experience or regional knowledge into a bland standard voice. These limits do not make AI literacy dispensable. They show why its enduring value lies in judgement: knowing when to use AI, when to question it and when to keep the thinking with teachers and learners.

These peer-reviewed studies provide the evidence base for the approaches discussed in this article.

Brown et al. (2023).

Chen (2024).

Chen et al. (2020).

Holmes (2024).

Holmes (2023).

Holmes and Zhgenti (2024).

Holmes et al. (2023).

Holmes et al. (2024).

(2023).

Hong et al. (2023).

Hsu et al. (2023).

Hwang et al. (2021).

Kasneci et al. (2023).

Kim (2024).

Li et al. (2023).

Long and Magerko (2020).

Luckin et al. (2020).

Miao and Cukurova (2024).

O'Brien (2023).

O'Neil (2016).

O'Neill (2023).

OpenAI (2023).

Oppenheimer (2024).

Pedro et al. (2019).

Selwyn (2019).

Selwyn et al. (2025).

Singh (2022).

UNESCO (2023).

Wong (2024).

Zawacki-Richter et al. (2019).

Zawacki-Richter et al. (2024).