Creating an AI Policy for Schools [2026]

Every school needs an AI policy. This practical guide covers governance structures, assessment integrity and data protection.

![Creating an AI Policy for Schools [2026]](https://cdn.prod.website-files.com/5b69a01ba2e409501de055d1/694e6106ab5232ef431fd9f4_creating-ai-policy-schools-2025-classroom-teaching.webp)


AI policies are vital, but they are easier to use when they follow a clear structure. Learners already use AI tools such as ChatGPT, so schools need clear rules. For more on this topic, see AI CPD for schools. Schools should balance new opportunities with academic integrity, as Holmes and Watson (2023) note.

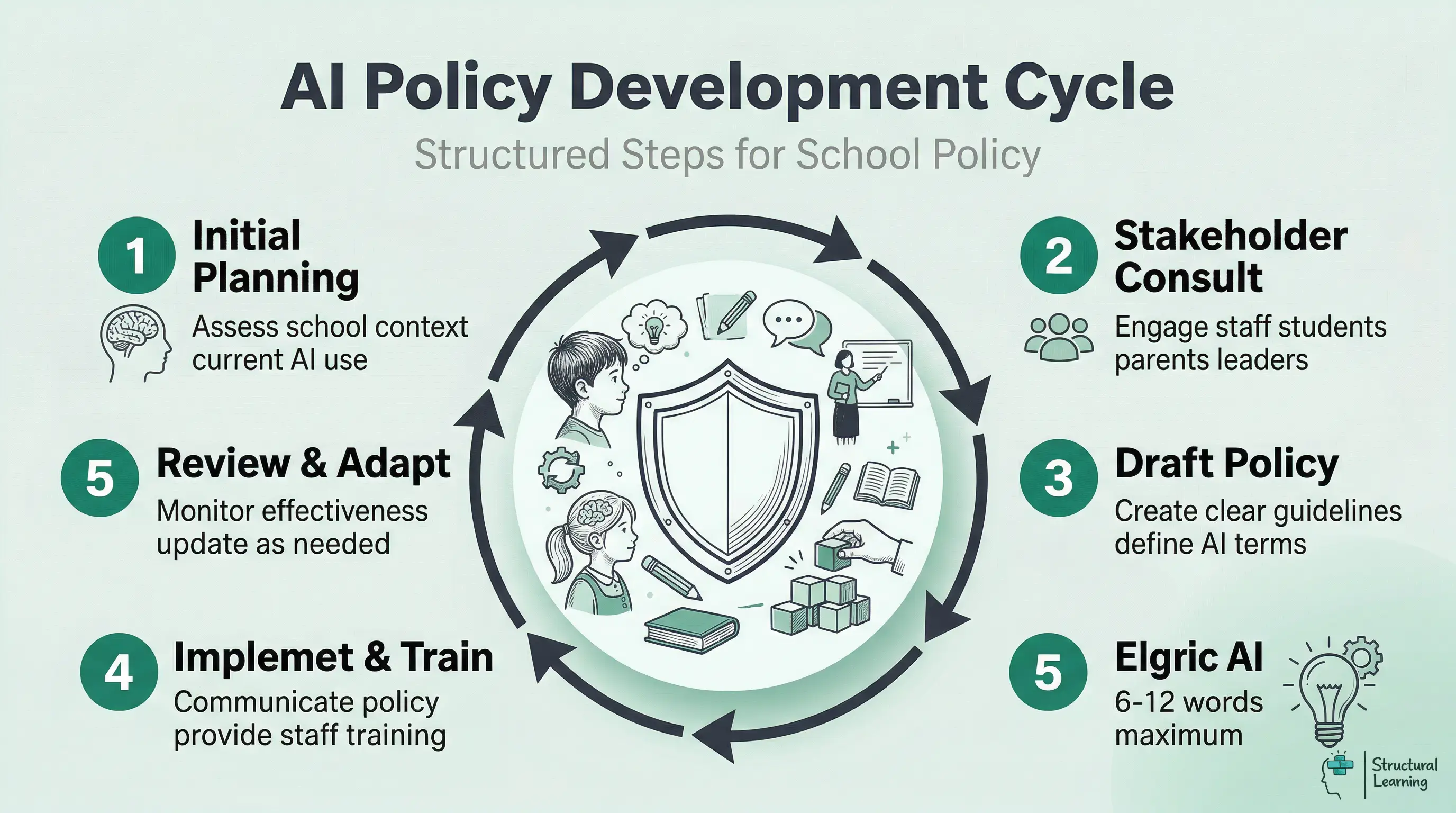

This guide covers each step: planning, consulting, implementing and reviewing. Here, an AI policy means a structured process for turning evidence into classroom decisions, not a label on its own.

The DfE first published guidance on generative AI in 2023 and updated it in 2024. It allows schools to make their own choices, but it sets firm expectations on data protection, safeguarding, age-appropriate use and human oversight. UNESCO (2023) reported that fewer than 10% of schools and universities had formal guidance on generative AI.

The ICO (2024) expects schools to assess data protection risks before staff use AI tools with learner data. For more on this topic, see AI tools for teachers.

The templates offer a starting point, not a final version. Consider them blueprints for your school's AI policy. The policy must reflect your setting and your community's needs. Regardless of school type, such as primary or sixth form, the core ideas from Holmes et al. (2021) stay the same.

Learners use AI, so schools require a policy. Staff explore AI, and parents seek reassurance. DfE (2024) allows schools to create their own AI frameworks. Without policies, schools risk unplanned AI adoption.

The DfE's guidance gives schools room to design local policy, but it is not a blank cheque. Leaders must protect personal data, keep adults in charge of decisions, follow safeguarding duties and explain standards to families. Learners are already using AI, often without help, while staff need agreed boundaries before they test new tools.

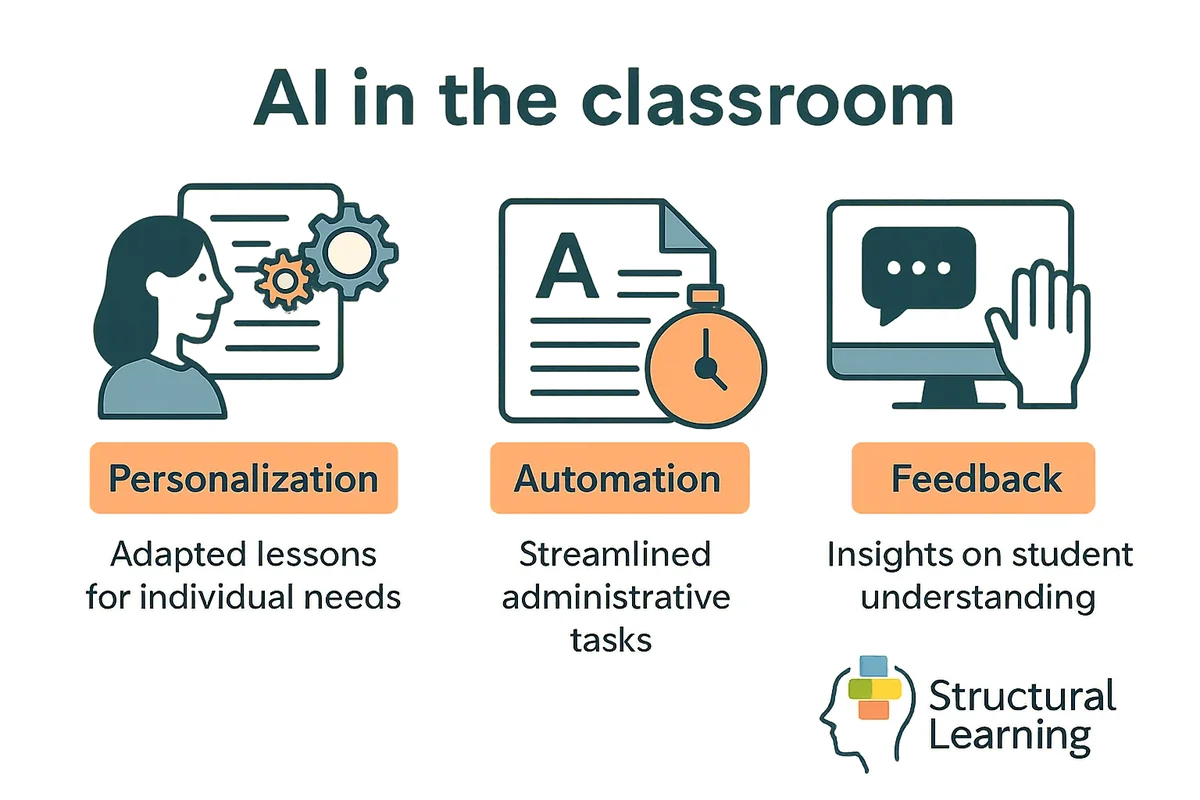

AI policies guide teachers on classroom use, assessment, safeguarding and staff workload. They help keep assessment fair. They also reassure parents that staff use AI with professional judgement (Holmes, Bialik and Fadel, 2023; Holmes and Tuomi, 2024). A 2026 policy should cover text generators, image tools, live voice translation, deepfakes and autonomous tutoring agents.

Jisc (2024) found that clear institutional policy can reduce staff anxiety and support more consistent AI use. Teachers gain confidence with lesson planning when they know the limits. Learners then hear the same message in English, science, art and homework tasks.

Chan (2023) defines AI policy as a school-wide framework for teaching, governance and daily operations. In schools, leaders should not see AI only as a marking issue. A Year 10 homework policy should say when learners can use AI for planning, how they declare that support and how teachers check understanding without relying on detection software.

Exam boards updated AI policies (2024). Clear AI policies help teachers feel confident about teaching and assessment. Consistent standards matter (Regulatory Board, 2024).

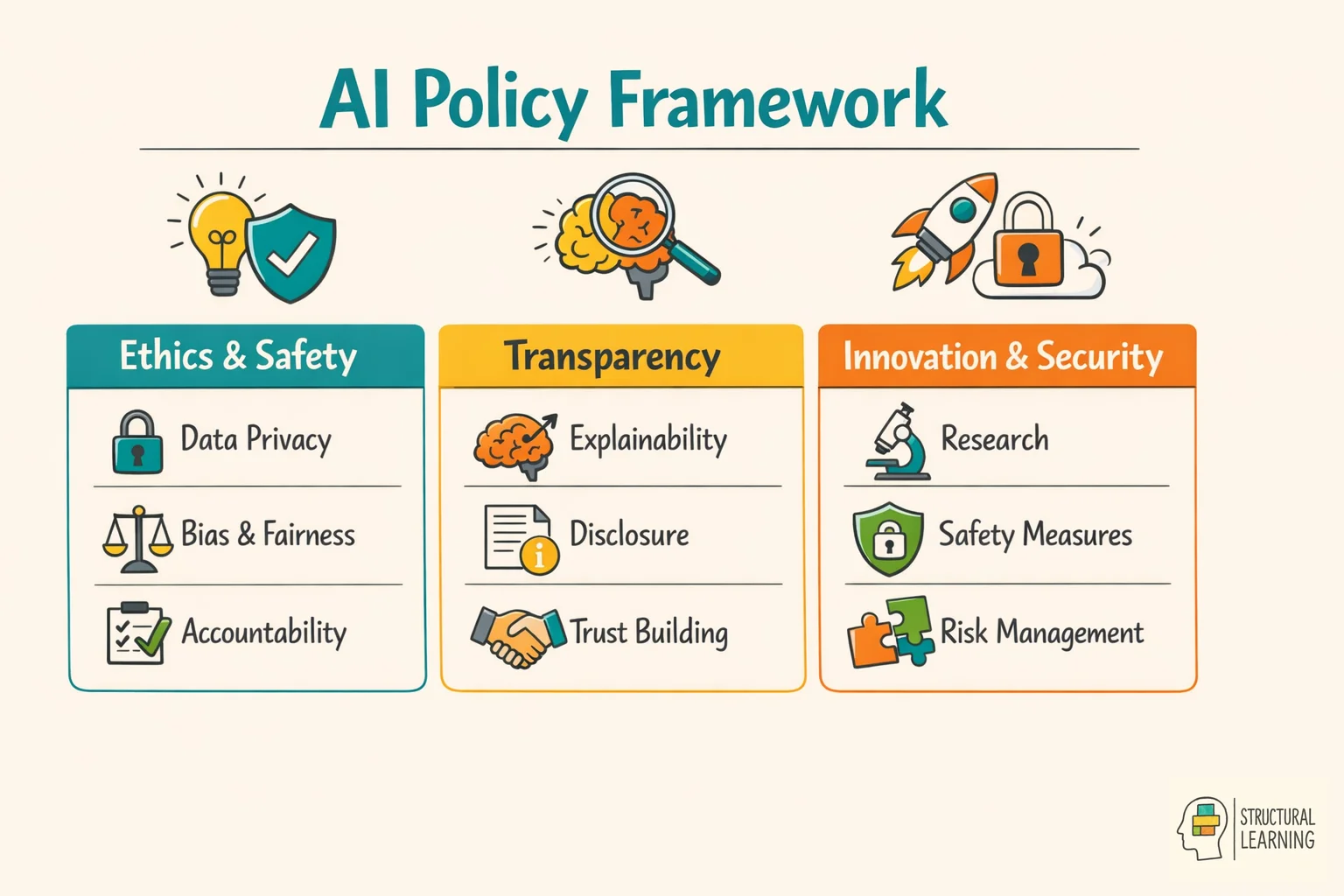

Define AI as software that uses data and prompts to make predictions, create content or take actions. Use examples parents recognise. These might include ChatGPT drafting feedback, an image generator making revision posters, a voice tool translating a parent meeting, or an agent completing a sequence of online tasks. Holmes and Tuomi (2024) warn that policy now needs to cover multimodal and more autonomous systems, not only text generation.

Schools can group AI tools by purpose and risk. Some tools support practice in maths or reading (Holmes et al., 2023). Other tools help teachers draft resources, feedback or communications. Higher-risk tools make claims about assessment, behaviour, wellbeing or learner need, so they need closer review.

Record evaluated tools in the template document. Add tools as your list grows and include cost, accessibility, data use, evidence claims and energy demand. This helps leaders consider the digital divide, because learners with paid AI accounts may have more support than classmates using free tools.
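One way to keep that register consistent is to hold it as structured data rather than free text. The sketch below is a minimal illustration in Python. The `ToolRecord` class, its field names, the three purpose groups and both example entries are hypothetical and chosen only to mirror the fields and risk grouping described above. They are not taken from any DfE or ICO template.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    # Hypothetical grouping that follows the purpose-and-risk split in the text
    LEARNER_PRACTICE = "learner practice"
    TEACHER_DRAFTING = "teacher drafting"
    LEARNER_JUDGEMENT = "claims about assessment, behaviour, wellbeing or need"


@dataclass
class ToolRecord:
    name: str
    purpose: Purpose
    cost: str
    accessibility: str
    data_use: str
    evidence_claims: str
    energy_demand: str

    def needs_closer_review(self) -> bool:
        # Tools that make claims about learners are treated as higher risk
        return self.purpose is Purpose.LEARNER_JUDGEMENT


# Both entries are invented examples, not real products
register = [
    ToolRecord(
        name="Example maths practice app",
        purpose=Purpose.LEARNER_PRACTICE,
        cost="free tier only",
        accessibility="works with screen readers",
        data_use="no learner accounts",
        evidence_claims="vendor case study only",
        energy_demand="not published",
    ),
    ToolRecord(
        name="Example behaviour predictor",
        purpose=Purpose.LEARNER_JUDGEMENT,
        cost="paid licence",
        accessibility="not yet checked",
        data_use="processes behaviour logs",
        evidence_claims="no independent evaluation",
        energy_demand="not published",
    ),
]

for_review = [tool.name for tool in register if tool.needs_closer_review()]
print(for_review)
```

Keeping cost as a recorded field also makes it easier to see which tools depend on paid accounts when leaders discuss the digital divide.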

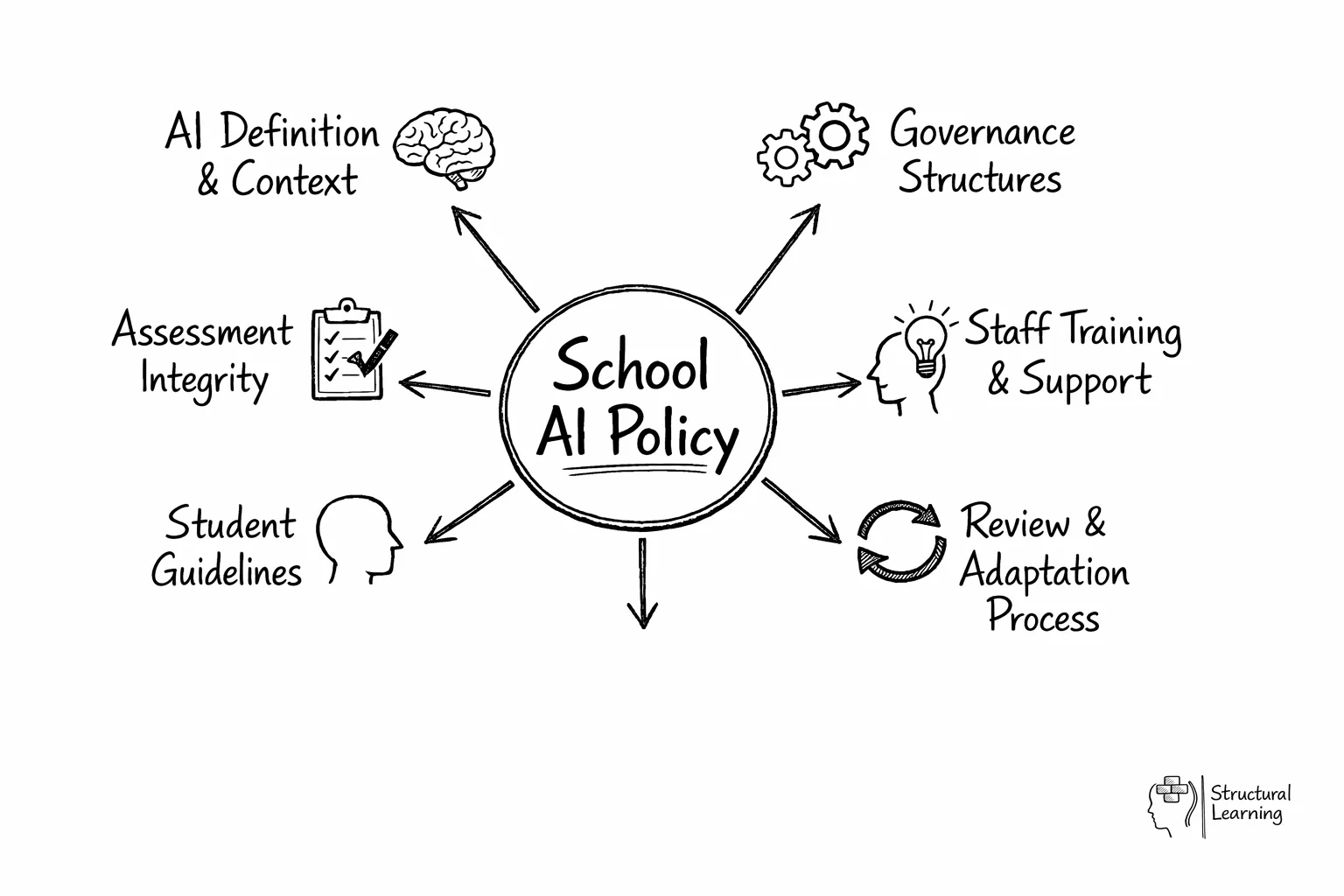

Who decides if a new AI tool gets adopted? Who handles concerns when a parent questions whether their child's homework used ChatGPT? Your policy needs named roles and clear processes.

AI policies need clear roles. A governor or senior leader should give strategic oversight, which means keeping the policy linked to school priorities. The digital learning lead manages day-to-day operations, while heads of department adapt the policy for subject assessment. This helps teachers build learners' thinking using tech (Holmes et al., 2023).

AI governance should fit your school's existing setup. Don't build entirely new systems for AI use. If your safeguarding process works well, adapt its core ideas for AI (Holmes, 2023; Irving & Kumar, 2024).


Staff anxiety about AI in assessment is understandable, but a policy built only on cheating prevention will fail. Teach AI-assisted cognitive offloading as a curriculum skill: learners can ask AI for options, then compare, reject, verify and improve them. Questioning checks whether learners understand the work (Bloom, 1956), while formative feedback helps teachers judge when AI support has replaced thinking rather than extended it (Wiggins, 1998).

When defining acceptable use, be specific. Avoid blanket bans because they push AI use underground and make assessment disputes harder. Use a traffic light model: green for declared planning, vocabulary support or accessibility aids; amber for teacher-approved translation or feedback on a draft; red for submitting AI-written work as independent work. Bearman and Luckin (2024) argue that schools should shift from unreliable detection to evaluative judgement, so learners must explain why an AI suggestion is sound, biased or wrong.

Data privacy considerations extend beyond learner names and email addresses to include SEND plans, safeguarding notes, Pupil Premium context, assessment scripts and staff performance data. Shadow AI is the immediate risk: a member of staff pastes sensitive information into a public tool to draft a report, without a Data Protection Impact Assessment or approved processor agreement. Schools should list approved tools, define prohibited data, record lawful basis and require human review before any AI output affects a learner (ICO, 2024).

Governance structures and reviews are key when schools put an AI policy into practice. School leaders should assign AI oversight (Holmes et al., 2021). Staff training, learner education and parent updates are also vital (Kasneci et al., 2023).
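The traffic light model described above can be written down as a simple lookup so that every department works from the same list. The following is a minimal sketch in Python, not a finished tool. The use labels, the `rate_use` function and the wording of the returned messages are all assumptions made for illustration, and the sketch assumes that green uses only need declaring while amber uses need a teacher's approval.

```python
from enum import Enum


class Light(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


# Hypothetical labels based on the examples in the policy text
USE_RATINGS = {
    "planning": Light.GREEN,
    "vocabulary support": Light.GREEN,
    "accessibility aid": Light.GREEN,
    "translation": Light.AMBER,
    "draft feedback": Light.AMBER,
    "submission as independent work": Light.RED,
}


def rate_use(use: str, declared: bool, teacher_approved: bool = False) -> str:
    """Return a plain-language ruling for one kind of AI use."""
    light = USE_RATINGS.get(use.strip().lower())
    if light is None:
        # Anything not on the list goes back to a teacher, not to a default
        return "unlisted: ask the teacher first"
    if light is Light.RED:
        return "not allowed"
    if light is Light.AMBER:
        return "allowed" if teacher_approved else "needs teacher approval"
    # Green uses are allowed once the learner has declared them
    return "allowed" if declared else "allowed once declared"


print(rate_use("planning", declared=True))
print(rate_use("translation", declared=True))
print(rate_use("submission as independent work", declared=True))
```

Returning "ask the teacher first" for unlisted uses keeps the decision with an adult, which matches the policy's emphasis on human judgement over automated rulings.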

Form an AI group with staff, leaders and tech support. This group monitors issues and updates guidance (Zawacki-Richter et al., 2019), keeping your policy current.

Implement AI policy carefully, starting with senior leaders. A six-month plan that reaches all learners is best. Rogers (1969) supports a pilot phase with keen teachers. Use it as a starting point for professional discussion: identify the learner's current need, record evidence from more than one lesson, and agree the next classroom adjustment with the SENCO or family.

Refine the policy using classroom feedback before wider use. This builds support and tackles real challenges.

Train department heads and key staff in months one and two. Training should cover AI tools and their impact on teaching, because teacher confidence affects successful technology use (Ertmer & Ottenbreit-Leftwich). This initial training builds a vital base.

In months three and four, give learners clear AI guidelines. Use workshops and subject examples so they can see what the rules mean in practice.

Before month six, ask for feedback and use it to improve the policy. This keeps the AI policy practical, useful, and flexible as new technology changes.

Good AI policy needs continuing professional development, not a single launch meeting. Teachers must understand how AI tools work, where they fail and how the school records approved use. Give departments time to test a tool, compare outputs and write subject examples before asking them to guide learners. Mishra and Koehler (2006) show that teachers need support to connect technology with subject knowledge and pedagogy.

Training must cover what AI can do and where its limits are. It should also address worries about academic integrity. For more on this topic, see AI academic integrity. Teachers need practical strategies for using AI in lessons.

Hands-on work with AI platforms helps teachers see how learners might use them (Holmes et al., 2023; Zawacki-Richter et al., 2019). This also helps teachers spot AI work and discuss correct use (Lancaster & Cull, 2023).

Start with optional sessions for keen teachers before school-wide training. Mentoring, with tech-savvy staff supporting others, works well. Follow-up sessions keep policy consistent, helping staff as AI tools evolve (Holmes, 2023).

AI education needs clear rules about the difference between help and cheating. Leaders must ensure learners use AI to improve, not replace, thinking. Kirschner, Sweller and Clark (2006) argued that novices need explicit guidance when tackling demanding tasks. Learners should use AI as support, not as a way to get tasks done for them.

Learners need AI literacy across information, ethics and subject knowledge. Teach them to test AI claims against trusted sources, look for hallucinations, notice algorithmic bias and ask whose knowledge is missing. This matters for EAL and neurodivergent learners, who can be misread by detection systems or standardised writing norms (Noble, 2018; Benjamin, 2019).

Teach AI literacy across all subjects, not only in technology lessons. This means helping learners understand how AI works and how to use it well.

Teachers can model good AI use by researching carefully and checking AI facts. Discuss AI bias to show possible risks, build skills for an AI world (Holmes et al., 2023), and help learners maintain academic honesty (Wong, 2024).

AI monitoring should use professional judgement, good assessment design and clear records. It should not depend on detection scores alone. Weber-Wulff et al. (2023) found major accuracy problems in AI text detectors, and Bearman and Luckin (2024) argue that detection cannot carry academic integrity policy. Use detectors only as a prompt for a conversation, then ask learners to explain their sources, drafts and decisions.

Fair enforcement helps learners improve. For a first offence, teachers talk with the learner and ask for the work again. If problems happen again, the school takes firmer action.

Lewis (2001) and Sugai & Horner (2006) show that this keeps policy focused on teaching, not only punishment.

Review policies with staff and learners monthly or termly. Leaders should check how AI detection is being used, how consistently the policy is applied and how well learners understand it (Holmes et al., 2024). This input makes policy and teaching stronger.

Clear communication on AI policy is key. Schools should share AI policies with parents in plain language.

This helps parents understand AI's benefits and the safety measures in place. Epstein's research shows that openness builds trust, while shared values at home support the school.

Parents need clear explanations about AI tools in schools. Tell them how you protect learner data and support responsible use.

At information sessions, show AI tools firsthand and address worries about cheating. Give clear examples of acceptable and unacceptable AI use, so parents can guide their child's home learning, as O'Neill (2023) suggested.

Newsletters and meetings keep families informed about AI and policy changes. Visual guides help parents support learners with homework. Open communication gives everyone the same AI safety messages (Holmes et al., 2024). This strengthens your school's digital learning programme.

AI policies must meet UK GDPR for learner and staff data. Before staff use an AI tool with personal data, leaders should check the lawful basis, processor terms, retention period and security controls. They should also decide whether a Data Protection Impact Assessment is needed. ICO (2024) guidance on generative AI expects organisations to assess risks before deployment, not after a complaint.
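The pre-use checks listed above lend themselves to a short checklist that a data lead can complete for each tool. The sketch below is one hypothetical way to record it in Python. The `PreUseChecks` class and its field names are assumptions for illustration only. They are not an ICO form, and completing them does not replace a Data Protection Impact Assessment where one is required.

```python
from dataclasses import dataclass, fields


@dataclass
class PreUseChecks:
    # Each flag is True once the leader has completed and recorded that check
    lawful_basis_recorded: bool
    processor_terms_reviewed: bool
    retention_period_known: bool
    security_controls_checked: bool
    # Records that a decision was made about a DPIA, not that one was completed
    dpia_decision_recorded: bool


def outstanding_checks(checks: PreUseChecks) -> list[str]:
    """List the checks still to do, in the order they are defined."""
    return [f.name for f in fields(checks) if not getattr(checks, f.name)]


# Invented example: two checks are still missing for this tool
example = PreUseChecks(
    lawful_basis_recorded=True,
    processor_terms_reviewed=True,
    retention_period_known=False,
    security_controls_checked=True,
    dpia_decision_recorded=False,
)

missing = outstanding_checks(example)
print(missing)
print("ready for use with personal data" if not missing else "not ready yet")
```

A tool with any outstanding check would stay off the approved list until the gaps are closed, which keeps the assessment ahead of deployment.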

Data protection law, safeguarding duties, assessment rules and school governance should guide AI policy. Ofsted does not score a separate digital strategy measure. Instead, inspectors may look at technology through curriculum, safeguarding, behaviour and leadership evidence. Exam boards require learners' own work, so policy should match AI use to assessment rules and the DfE's guidance on human oversight and data protection.

AI audit trails are vital, especially when AI affects decisions about learners, as in behaviour tracking (Holmes, 2023). School leaders must appoint a data protection officer for AI oversight.


Schools need AI policies with acceptable use definitions. Policies should cover data protection, academic honesty, and staff training. A clear framework will help teachers and learners choose safe classroom tools (Holmes et al., 2024).

Form a group of leaders, teachers, tech staff, and parents. Audit current AI use and privacy risks. Leaders should then create guidelines, aligning them with behaviour and academic integrity policies (Holmes et al., 2023).

The DfE suggests schools manage AI risks. Its guidance avoids rigid rules, so leaders should build local systems. Protect learner data and ensure content is age appropriate. Maintain test integrity, as Holmes et al. (2023) advise.

Teachers clearly state when learners can use AI for assignments. They show how to check AI outputs for errors and bias, modelling responsible use. If they see misuse, teachers report safeguarding or data privacy issues through agreed school channels.

AI tools change how we check learner progress and protect honesty. Researchers warn that AI can easily complete take-home essays (Holmes et al., 2023), so schools should use varied assessments (Wiggins, 1998). Acknowledge that AI is now part of every learner's education.

Wiliam (2011) suggests more class assessments, oral exams, and group work for discussion. Learning logs show real learner understanding, say Black & Wiliam (1998). Darling-Hammond (2010) recommends using assessments to critique AI or apply learning locally. Bloom's (1956) taxonomy can help teachers design questions that distinguish AI use from genuine knowledge.

Schools need AI use rules (Holmes et al., 2023). Create AI rubrics and train staff. Learners need contracts outlining AI boundaries. Tell learners and parents about changes to assessments.

A school AI policy can look more settled than the evidence allows. Bearman and Luckin (2024) argue that AI detection is too unreliable to carry assessment decisions, so policies based on surveillance may create false accusations, especially for EAL and neurodivergent learners. The stronger response is to redesign tasks around drafts, oral explanation and evaluative judgement, but this takes staff time and shared moderation.

Rogers (1969) is useful for staff adoption because he stresses ownership and relevance, yet his learner-centred assumptions can underplay novice need for explicit guidance. Kirschner, Sweller and Clark (2006) argue that minimal guidance often fails when learners lack secure schemas, so AI literacy cannot be left to exploration alone. Teachers need worked examples, clear boundaries and direct modelling.

The evidence base is also unstable. Williamson, Macgilchrist and Potter (2023) warn that education technology is shaped by market power, platform design and proprietary data systems. AI tools change silently through model updates, so a study of one tutoring tool may not apply six months later. Cultural limits matter too: many policy templates are written from English-language, Global North assumptions and may miss local norms about language, privacy, disability and authority.

There are practical limits around cost, access and sustainability. Learners with paid tools may gain advantages, while high-compute systems raise environmental questions (Bender et al., 2021). Despite these limits, the underlying policy approach retains enduring value when it treats AI as a governed teaching tool, keeps human judgement central and is reviewed with staff, learners and families.

Kirschner, P. A., Sweller, J., & Clark, R. E. (2006). Why minimal guidance during instruction does not work.

Rogers, C. (1969). Freedom to learn.

