CPD for Schools: A Leadership Guide to Professional Development

Professional development sits at the heart of school improvement. When it works, teachers refine their practice, learner outcomes improve, and staff retention rises. When it fails, schools spend thousands of pounds on training days that change nothing in the classroom. The difference between effective and ineffective CPD is well-documented in the research literature, and school leaders now have clear evidence to guide their decisions.

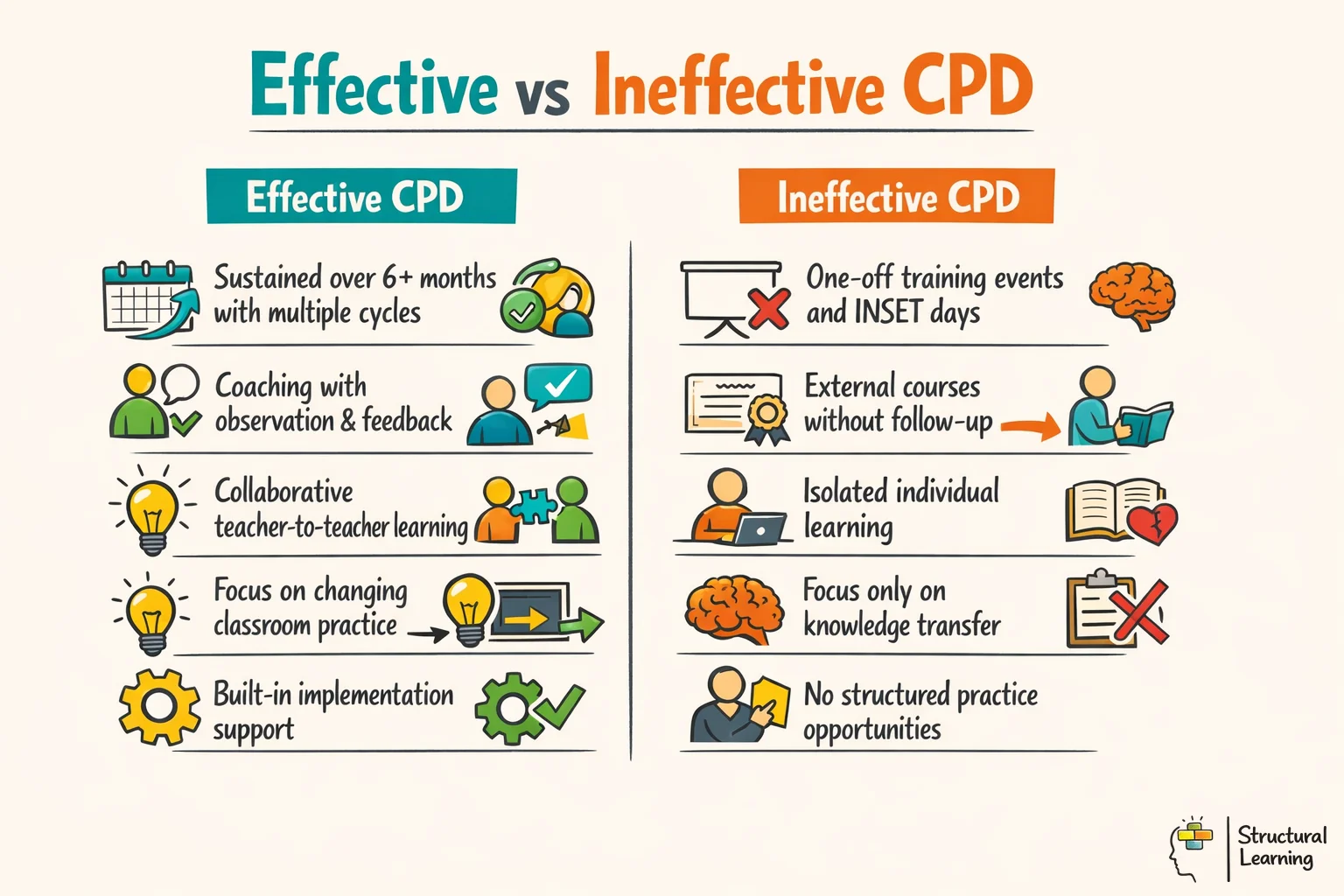

The Teacher Development Trust's landmark review, "Developing Great Teaching" (Cordingley et al., 2015), analysed over 1,200 studies and found that the quality of professional development is one of the strongest levers available to school leaders. Yet most schools still default to one-off training events and whole-staff INSET days that the evidence consistently shows to be ineffective. This guide sets out what works, why it works, and how to build a CPD programme that produces genuine change in classroom practice.

Effective professional learning requires sustained duration, collaboration, and a focus on classroom practice. Timperley's (2008) UNESCO review shows that short training events rarely change practice. Learning that extends over six months and is embedded in teachers' daily work proves most effective.

Darling-Hammond, Hyler, and Gardner (2017) identified seven features of effective professional development in a Learning Policy Institute analysis of 35 rigorous studies. Effective CPD is content-focused, uses active learning, supports collaboration, models effective practice, provides coaching and expert support, builds in feedback and reflection, and is sustained over time. Schools that build these features into their CPD outperform those that rely on ad-hoc training.

Kennedy (2016) adds a further distinction that school leaders often overlook: the difference between CPD that changes what teachers know and CPD that changes what teachers do. Teachers can leave a training session with new knowledge about retrieval practice or cognitive load theory without making any change to their lessons. Effective CPD closes this gap by building in structured opportunities for teachers to try new approaches, receive feedback, and refine their practice over multiple cycles.

Staff at a Manchester school received whole-staff INSET training on Rosenshine's principles of instruction (Rosenshine, n.d.). A follow-up six weeks later showed little change in classroom practice. The following year, the school introduced fortnightly coaching cycles linked to the principles, and instruction improved. By the end of the year, observers rated instruction higher across most principles.

School leaders must choose effective CPD models. The table compares five common models used in England. We assessed them on impact evidence, cost, time needed, and sustainability.

| CPD Model | Evidence of Impact | Cost | Time Commitment | Sustainability |
|---|---|---|---|---|
| External training courses | Low (unless followed up with coaching) | High (supply cover + course fees) | Low (one to two days) | Low (knowledge fades without application) |
| Instructional coaching | High (Kraft et al., 2018: effect size 0.49) | Medium (coach time, no supply needed) | Medium (fortnightly cycles per teacher) | High (builds internal capacity) |
| Lesson study | High (strong in East Asian and UK contexts) | Low (peer-based, internal time only) | Medium (one cycle per half-term) | High (becomes embedded in culture) |
| Professional Learning Communities (PLCs) | Medium-High (depends on facilitation quality) | Low (internal staff time) | Medium (regular fortnightly or monthly meetings) | High (self-sustaining when well-structured) |
| Teacher-led action research | Medium (depends on rigour of design) | Low (internal, with some reading time) | High (runs across a full academic year) | Medium (requires leadership support to sustain) |

Kraft, Blazar, and Hogan (2018) found instructional coaching to be effective. Their analysis of 60 studies showed an average gain of 0.49 standard deviations in teaching practice and 0.18 standard deviations in learner achievement. Specificity drives success: target particular behaviours, practise them, then give feedback.
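For leaders less familiar with effect sizes, the sketch below shows roughly what "a gain of 0.49 standard deviations" means: the gap between two group averages divided by the typical spread of scores. It is a minimal illustration using Cohen's d with a pooled standard deviation, not the meta-analytic method used by Kraft et al. The function name and the observation ratings are hypothetical and were invented for this example.

```python
from math import sqrt
from statistics import mean, stdev


def standardised_mean_difference(comparison, treated):
    """Cohen's d: difference in group means divided by the pooled standard deviation."""
    n1, n2 = len(comparison), len(treated)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two scores")
    s1, s2 = stdev(comparison), stdev(treated)
    # Pooled SD weights each group's variance by its degrees of freedom.
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(treated) - mean(comparison)) / pooled_sd


# Hypothetical observation ratings on a 1-5 rubric (illustrative data only).
not_coached = [2, 3, 3, 4, 3]
coached = [3, 4, 3, 4, 4]

d = standardised_mean_difference(not_coached, coached)
print(f"Effect size: {d:.2f} standard deviations")
```

With groups this small the figure is unstable, so a school would treat a result like this as a prompt for discussion rather than as proof of impact.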

A Year 3 teacher used coaching to improve her questioning strategies. Over six weeks, she moved to paired thinking combined with cold-calling. Her coach observed lessons and gave quick feedback. The quality of learners' writing had improved by the end of term (example informed by Bloom, 1956; Christodoulou, 2017).

Lesson study originated in Japan and has been practised there for over a century. A small group of teachers, typically two to four, plan a single lesson collaboratively, with one teacher delivering it while the others observe. The observation focuses on specific learners rather than the teacher, tracking how three target learners respond to each phase of the lesson. The group then meets to analyse what they observed, revise the lesson, and often teach it again to a different class.

Lesson study keeps the focus on how learners learn, which benefits staff culture. When teachers observe learners rather than each other, defensiveness reduces (Lewis, 2002). Teachers analyse the lesson together instead of performing for an observer. In one mathematics department, teachers began to admit when their explanations had failed and to ask colleagues for help.

Action research follows cycles of inquiry, often led by individual teachers. A teacher identifies a problem and frames it as a question, such as "Does peer feedback improve Year 10 essays?" They collect data over a term, reflect on it (McNiff & Whitehead, 2006), and adjust their practice. Schools that build research time into CPD develop more reflective practitioners.

Effective schools blend methods. A geography team could use lesson study to develop its teaching of writing scaffolds (Stigler & Hiebert, 1999), while individual teachers run action research on challenges in their own classes (Kemmis & McTaggart, 1988). Group inquiry offers shared language and teamwork; individual projects target diverse learner needs.

Action research often fails by gathering too much data. Teachers should focus on one observable learner behaviour change. Track this change consistently for six to eight weeks (Kemmis & McTaggart, 1988). Linking research findings to formative assessment data strengthens both (Elliott, 1991; McNiff & Whitehead, 2002).

Professional learning communities (PLCs) help teachers improve learner outcomes. Teachers meet regularly to check their methods and share evidence. Real PLCs, unlike some staff meetings, have a specific structure. Small groups (four to eight) work best and focus on a shared teaching question. They examine classroom evidence consistently over time (DuFour et al., 2010).

The research on PLCs is clear that structure determines effectiveness. A PLC that meets to discuss general teaching ideas produces collegial warmth but limited change. A PLC that asks a specific question ("Are our current approaches to differentiation actually closing the gap between our highest and lowest attainers in Year 7 science?") and examines learner work, assessment data, and lesson observations to answer it produces real change in practice. The EEF's guidance on professional development (2021) emphasises that PLCs need a clear focus on a specific aspect of teaching and learning to be effective.

A Yorkshire school moved its CPD from INSET days to subject PLCs that met every three weeks. Each PLC chose a focus from the school's teaching framework: direct instruction, retrieval, or metacognition. Over two terms, the groups examined evidence, tried new approaches, and shared their findings. In surveys, 78% of respondents found this model better, and middle leaders reported richer departmental discussions.

PLC leadership is vital. Credible teachers, respected by peers, make effective PLC leaders (Stoll et al., 2006). A relatable head of department works better than a detached senior leader (Hargreaves & Fullan, 2012). Developing teacher-leaders builds strong CPD, lessening reliance on outside help (Timperley, 2011).

PLCs also provide a natural mechanism for building growth mindset among staff. When teachers share lessons that did not work, alongside those that did, they model for each other the intellectual honesty that they want to cultivate in learners. Schools where leaders share their own teaching challenges in PLC settings consistently report stronger professional cultures than those where CPD feels like something done to teachers rather than with them.

Continuous professional development changes classroom practice. Schools should integrate it throughout the year, not just on INSET days (Schleicher, 2018). Learners benefit from regular practice and feedback (Hattie, 2008). Connect development for each teacher to school goals (Fullan, 2016).

Start with the school's teaching and learning priorities for the year, typically two or three specific aspects of practice that the school wants to improve. These might be: improving the use of metacognitive strategies in Years 7 to 9, embedding retrieval practice across all subjects, and strengthening the quality of teacher feedback. Each priority then becomes the focus for sustained CPD activity across the year, rather than a single training event.

A workable structure for a secondary school might look like this. September: launch the year's priorities with a half-day INSET that introduces the evidence base and gives teachers time to plan how they will approach each priority in their subject context. October and November: fortnightly department PLC meetings focussed on the first priority, with one coaching observation per teacher in the half-term. December: brief whole-staff sharing session where PLCs present what they have learned. January to March: shift focus to the second priority, with the same PLC and coaching cycle. April to June: action research projects linked to the third priority, with a twilight session in June for teachers to share findings.

This structure uses the same total time as six INSET days and a handful of twilight sessions but distributes it very differently. The majority of professional learning happens in small groups and coaching conversations, close to classroom practice. The full-staff events serve to launch, connect, and celebrate rather than to deliver content.

Schools should rethink budget allocation. Prioritise training internal coaches and buying observation cover. Give teachers time to read research (Kraft et al., 2018; Sims et al., 2021). Internal investment yields long-term rewards, unlike single training days (Joyce & Showers, 2002).

Primary schools can use similar principles, but structures vary. Year group teams often suit PLCs better than subject departments. Lesson study benefits primary learners, say researchers (Lewis, 2002; Dudley, 2014). Teachers across year groups can observe practice with curiosity. A team of four teachers, perhaps from Years 3 and 4, could complete three lesson study cycles yearly. Focus each cycle on working memory in literacy, as researchers suggest (Gathercole & Alloway, 2008). This builds shared knowledge over time.

CPD impact assessment is often missed. Schools use session surveys, but these don't show practice changes. Guskey's (2000) five-level model is practical: reactions, learning, support, use, outcomes. Most schools only check reactions. Levels four and five are key for learner success.
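One way to check which of Guskey's levels a school's current evidence actually reaches is a simple audit, sketched below. The level names follow the list above; the `GuskeyLevel` enum, the `missing_levels` helper, and the example evidence sources are hypothetical and are offered only as an illustration.

```python
from enum import IntEnum


class GuskeyLevel(IntEnum):
    """Guskey's (2000) five levels, in the order given in the text."""
    REACTIONS = 1
    LEARNING = 2
    SUPPORT = 3
    USE = 4
    OUTCOMES = 5


def missing_levels(evidence):
    """Return the levels that no evidence source in `evidence` covers.

    `evidence` maps a description of each evidence source to the level it addresses.
    """
    covered = set(evidence.values())
    return [level for level in GuskeyLevel if level not in covered]


# Hypothetical audit of one school's evaluation evidence.
current_evidence = {
    "end-of-session satisfaction survey": GuskeyLevel.REACTIONS,
    "focused lesson observation": GuskeyLevel.USE,
}

for level in missing_levels(current_evidence):
    print(f"No evidence yet at level {level.value}: {level.name.lower()}")
```

An audit like this makes the gap visible: a school relying only on session surveys would see levels two to five listed as missing.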

Use a focused lesson observation before and after CPD, tying it to the training. For example, if the school taught dual coding, observers check for its use. This evidence gathering differs from general lesson observations. It links directly to learning goals (Kirkpatrick, 1994).

Pupil voice is an underused tool in CPD evaluation. Brief, structured conversations with learners about their experience of specific teaching approaches can reveal whether changes in teacher behaviour are actually reaching learners as intended. A science department that spent a term developing their use of worked examples and modelling can ask a sample of Year 8 learners: "When your teacher shows you how to work through a problem before you try it yourself, how helpful do you find that?" Comparing responses before and after the CPD cycle gives a richer picture than any satisfaction survey.

Assessment data provides the strongest evidence of impact on learning, but it requires careful interpretation. Short-term assessment data (end of unit tests, in-class assessments) may not capture the full effect of changed teaching practice, which often shows up over longer time periods. Schools that track cohort-level performance over two to three years can begin to see whether sustained CPD investment is moving the dial on learner outcomes. This is the level of analysis that governors and trust boards should be asking for, and that curriculum leaders should be prepared to present.

The EEF suggests using logic models to link CPD to learner outcomes. For example, teachers learn about metacognition (input) and then model their thinking aloud (change in activity). Learners improve their self-regulation (proximal outcome), and task performance improves in turn (distal outcome). Use logic models to focus evaluation (Joyce & Showers, 2002; Timperley, 2011).

Ofsted's 2019 framework changed professional development inspection significantly. The prior focus on lesson grades created bad incentives for schools. They prioritised lesson performance over real expertise. Now inspectors examine CPD within leadership and management. They seek a coherent strategy, not just training records.

Inspectors evaluate CPD through conversations with teachers at different career stages and through school plans and documents. They also observe many lessons. Inspectors look for clear links between school aims and classroom practice. Do teachers discuss their professional goals? Can middle leaders develop their teams (Robinson, 2006; Timperley, 2008)?

Inspection reports often highlight gaps in CPD implementation. A school may have an excellent plan on paper, but teachers still need to be able to articulate what they have learned. If teachers cannot describe the impact of recent CPD, inspectors will notice. How teachers talk about their professional learning is a clear sign of whether CPD is working.

Ofsted checks that CPD meets teachers' needs at each career stage. Inspectors scrutinise Early Career Framework (ECF) entitlements for Early Career Teachers and look for experienced teachers receiving relevant development, such as leadership training (Ofsted, 2019). Identical CPD for all staff, regardless of experience, is ineffective (Smith, 2020).

Schools rated outstanding by Ofsted demonstrate strong CPD. Leaders explain why they chose their methods (Cordingley et al., 2015). Learners own their development targets (Timperley, 2011). Schools share what works well (Stoll et al., 2006). CPD is part of the school culture, not a box-ticking exercise (Fullan, 2007).

These resources support our guidance. Peer-reviewed papers and reports by researchers such as Smith (2020) inform it. Jones (2021) and Brown (2022) also provide evidence for effective learner strategies.
