AI Differentiation in the Classroom: A Teacher's Guide [2026]

A practical guide to using AI for differentiation in UK classrooms. Covers resource tiering, scaffold generation, adaptive questioning.

![AI Differentiation in the Classroom: A Teacher's Guide [2026]](https://cdn.prod.website-files.com/5b69a01ba2e409501de055d1/699896b4fac358c4cb84f442_699896b30d2dc48eea3ac568_ai-differentiation-in-the-classroom-classroom-teaching.webp)

Mixed ability is normal in every class. Even with setting, reading ages and motivation vary. AI helps teachers adapt lessons faster. However, teachers still judge what each learner needs, whether that is adding sentence starters (Vygotsky, 1978) or removing constraints (Piaget, 1936; Bruner, 1966).

Tomlinson (2001) found that adapting teaching to what learners know boosts results. It is hard to find the time to create different resource versions. It's also difficult to change questions and track individual learner support needs. AI tools help produce resources but don't fix teaching issues.

AI assists differentiation, speeding up resource creation and changes. Teachers still decide; the machine produces. This frees time for targeted learner support and assessment (Wiliam, 2011; Black & Wiliam, 1998).

A Year 6 teacher can paste a text into an AI tool for a reading lesson. The AI creates three versions in minutes. One supports struggling learners with simpler words and sentence starters. One meets expected standards with open questions. Another challenges advanced learners with inferential questions. The teacher checks and adapts the resources for specific learners.

The critical distinction: the AI creates the resources. The teacher makes the assessment judgements about who needs what. These are different skills, and conflating them leads to poor differentiation regardless of the tool.

Three models of AI differentiation are worth considering for lessons. They differ in the teacher time they need and in the AI capability they require (Wiggins & McTighe, 2005). Targeted instruction benefits each learner.

| Model | How It Works | Teacher Time | Best For |
|---|---|---|---|
| Resource tiering | AI generates 3 versions of the same activity at different levels | 5 min to prompt + 10 min to review | Worksheets, reading tasks, homework |
| Scaffold generation | AI creates graduated support: sentence starters, worked examples, prompt cards | 5 min to prompt + 5 min to review | Writing tasks, problem-solving, extended responses |
| Adaptive questioning | AI generates question sets at varying Bloom's levels from the same content | 3 min to prompt + 5 min to review | Plenaries, retrieval practice, formative checks |

Resource tiering connects well to current teaching styles. AI can quickly produce "must, should, could" worksheets you already make. Scaffold and questioning models need more prompt expertise but improve learning more (Rosenshine, 2012).

Prompt design strongly influences resource quality (Kasneci et al., 2023). "Make easier" gives shorter, not better, resources. Good differentiation prompts use five key parts.

| Element | Why It Matters | Example |
|---|---|---|
| Year group and subject | Calibrates vocabulary and complexity | "Year 8 history" |
| Learning objective | Keeps all versions focussed on the same outcome | "Explain the causes of the English Civil War" |
| Number of tiers | Defines how many versions you need | "Three versions: support, core, extension" |
| What changes between tiers | Prevents AI from just making text shorter | "Support: sentence starters and word bank. Core: open questions. Extension: source evaluation." |
| Format requirements | Ensures output is classroom-ready | "Fit on one A4 page per tier. Use a table for the word bank." |

Here is a complete prompt for differentiating a Year 9 biology task:

"You are a KS3 science teacher in a UK state school. Create three versions of a worksheet on 'How the heart pumps blood around the body' for Year 9 learners.

Support version: Use a diagram with labels for learners to match. Include a word bank (atria, ventricles, valves, oxygenated, deoxygenated). Sentences use no more than 15 words. Four questions testing recall only.

Core version: Learners describe the process of blood through the heart in their own words. Include a blank diagram for labelling. Six questions mixing recall and explanation.

Extension version: Learners explain heart valve failure and predict its impacts. Provide resting vs. exercising heart rate data for analysis. Include four evaluation questions."

The extension tier targets the higher levels of Bloom's taxonomy (Bloom, 1956; Krathwohl, 2002).

This prompt produces three genuinely different resources in under two minutes. The key is specifying what changes between tiers, not just the difficulty label.
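For teachers who reuse the same prompt structure often, the five elements can be assembled programmatically. The following is a minimal sketch, not part of any AI tool: the names `Tier` and `build_prompt` are hypothetical, and the wording of the generated prompt is an assumption modelled on the examples in this guide.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str     # hypothetical field, e.g. "Support"
    changes: str  # what is different about this tier


def build_prompt(year_subject, objective, task_type, tiers, format_rules):
    """Assemble a differentiation prompt from the five elements."""
    if not tiers:
        raise ValueError("At least one tier is required")
    lines = [
        f"I am teaching {year_subject}. The learning objective is: {objective}.",
        f"Create {len(tiers)} versions of a {task_type}.",
    ]
    for tier in tiers:
        # Refuse a bare difficulty label: each tier must say what changes.
        if not tier.changes.strip():
            raise ValueError(f"Tier '{tier.name}' must say what changes")
        lines.append(f"{tier.name} version: {tier.changes}")
    lines.append("All versions must address the same learning objective.")
    lines.append(format_rules)
    return "\n".join(lines)


prompt = build_prompt(
    year_subject="Year 8 history",
    objective="Explain the causes of the English Civil War",
    task_type="worksheet",
    tiers=[
        Tier("Support", "sentence starters and word bank"),
        Tier("Core", "open questions"),
        Tier("Extension", "source evaluation"),
    ],
    format_rules="Fit on one A4 page per tier. Use a table for the word bank.",
)
print(prompt)
```

The check on `changes` reflects the point above: a tier described only by its label tends to produce shorter text rather than a different route to the same objective.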

AI differentiation varies across subjects as each needs specific changes. Year 5 maths and Year 10 English lessons need very different support strategies (Tomlinson, 2014).

AI builds helpful writing support for learners. For Year 7, ask AI for diverse tasks. Lower attaining learners gain story maps with starters. Middle attaining learners get paragraph prompts for structure. Higher attaining learners face constraints (Tomlinson, 2014). Same content, varied access points.

AI creates varied reading questions. For lower-attaining learners, use retrieval questions (e.g. "Find words that describe the forest"). Inference questions suit middle-attaining learners (e.g. "Why does the character hesitate?"). Evaluative questions work for higher-attaining learners (e.g. "How does the writer create unease? Use evidence from the text").

AI helps with maths differentiation at task level. Paste core problems, then ask AI for scaffolded versions (examples, solutions). It can also generate core and extension versions (new contexts, multi-step). Diffit and TeacherMatic automate this from a topic.

Simply setting easier problems holds maths learners back. Scaffolding should cut barriers, not thinking (Hattie, 2012). Use AI to make the maths accessible, and ensure learners still apply mathematical reasoning.

AI supports differentiated science lessons with varied resources. For Year 8 photosynthesis, learners get different tasks. Lower ability learners receive gap-fill diagrams. Middle ability learners complete explanations using key words. Higher ability learners design light intensity experiments. All teach the same concept, catering for differing learner needs.

AI rewrites sources for varied learner reading levels. It also creates support questions and advanced evaluation tools (Wiliam, 2011). For Year 9 geography on climate change, use shared data. Give task sheets for support, core, and extension activities (Hattie, 2008).

AI customises reading in primary schools, like for Year 3 learners studying the Great Fire of London. Learners access the same facts, but at different reading levels. AI changes sentences, words, and paragraph density (Goodwin, 2024). This helps mixed-ability groups read the same content easily.

Some worry that AI-generated maths resources bypass the concrete stage, where learners use physical objects to understand maths (Bruner, 1966). Others suggest that rapid movement between the concrete, pictorial and abstract stages supports deeper conceptual understanding (Skemp, 1976; Clements & Sarama, 2014). AI tools should be used cautiously to build firm maths foundations.

AI tools help learners with SEND, but match them to individual needs. Simply changing resources isn't enough for SEND support. Design resources for how learners process information (EEF, 2021).

| SEND Need | AI Adaptation | Prompt Instruction |
|---|---|---|
| Dyslexia | Simplified sentence structure, larger text prompts, sans-serif font specification | "Rewrite using sentences under 12 words. Avoid dense paragraphs. Use bullet points where possible." |
| ADHD | Chunked tasks with clear checkpoints, visual progress markers | "Break this into 5 short tasks of 3-4 minutes each. Add a checkbox before each task." |
| Autism (ASD) | Explicit instructions, reduced ambiguity, predictable structure | "Use numbered steps. Avoid figurative language. State exactly what a successful answer looks like." |
| Speech and language | Visual supports, keyword highlighting, colourful semantics integration | "Add a visual cue beside each key term. Use colour coding: who (orange), what doing (yellow), what (green)." |
| Dyscalculia | Concrete examples before abstract, number line scaffolds, reduced number of problems | "Start each problem with a real-world context. Include a number line. Reduce to 5 problems instead of 10." |
| EAL | Bilingual glossaries, visual vocabulary, simplified instruction language | "Create a glossary of 10 key terms with simple English definitions and space for home language translation." |

A growing number of SEND teachers are using AI to generate resources. This is AI's fastest growth area in UK schools. AI lets teaching assistants create SEND worksheets quickly. This frees up time for direct learner support.

For a deeper look at AI tools matched to specific SEND needs, see our guide to AI in special education.

Adaptive learning platforms change difficulty in real time as learners respond (Kasneci et al., 2023). Unlike teacher-led differentiation, the adaptation is controlled by the AI rather than the teacher.

| Platform | Subject Focus | How It Differentiates | Teacher Control |
|---|---|---|---|
| Century Tech | Maths, English, science | AI selects next question based on prior answers | Can set topic focus; AI controls difficulty |
| Seneca Learning | All GCSE/A-Level subjects | Spaced repetition with difficulty scaling | Assigns topics; AI schedules review timing |
| Sparx Maths | Mathematics | Personalised homework paths based on class teaching | Teacher sets topic; AI adjusts question difficulty |
| Tassomai | Science (primarily) | Daily quiz with algorithm-driven focus areas | Limited; AI determines revision priorities |
| Diffit | Cross-curricular reading | Adapts text reading level; generates tiered activities | Teacher chooses text and target levels |
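To make "changing difficulty after each response" concrete, here is a toy sketch of a one-up, one-down rule. It is an illustration only and does not describe how any of the platforms above work; the function names, the five-level scale and the starting level are all assumptions.

```python
def next_level(level, correct, min_level=1, max_level=5):
    """Step difficulty up after a correct answer and down after a wrong one."""
    step = 1 if correct else -1
    # Clamp so the level never leaves the assumed 1-5 scale.
    return max(min_level, min(max_level, level + step))


def run_session(answers, start_level=3):
    """Return the difficulty level used for each question in a session."""
    level = start_level
    history = []
    for correct in answers:
        history.append(level)
        level = next_level(level, correct)
    return history


# Two correct answers, one wrong, one correct.
print(run_session([True, True, False, True]))  # [3, 4, 5, 4]
```

Even in this simple form, the learner's answers drive the difficulty and the teacher only chooses the starting point, which is the shift in control described above.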

AI homework platforms support learners outside lesson time. During lessons, teachers use AI to differentiate more quickly, but the decisions stay with them: teachers see body language, hear learners' questions and observe peer work, all of which inform better decisions (Black & Wiliam, 1998).

AI-generated resources bring risks that manual differentiation did not. Because AI works so quickly, these five mistakes are easy to make.

1. Differentiating down, not across. The default AI behaviour when asked to "simplify" is to remove content. This produces a support version that covers less curriculum rather than providing a different route to the same learning. Always specify that all tiers must address the same learning objective. The support version should scaffold access to the learning, not reduce the learning itself.

2. Over-differentiating. Too much differentiation makes more work without improving learning. Three worksheet versions (support, core, extension) usually work well. More versions need more decisions and resources (Tomlinson, 2014; Wiliam, 2011; Hattie, 2012).

3. Static grouping. Using AI to generate differentiated resources makes it tempting to assign learners permanently to a tier. "Jamie always gets the support sheet." This contradicts what we know about the zone of proximal development (Vygotsky, 1978). Learners should move between tiers based on their current understanding of the specific topic, not a fixed label. Use AI-generated resources flexibly, not as a tracking system.

4. Weak extension. AI is better at simplifying tasks than extending them. "Extension" work often gives learners more of the same. True extension needs prompts that target analysis skills (Bloom, 1956). Design prompts carefully so that they encourage learners' higher-order thinking (Anderson & Krathwohl, 2001).

5. Skipping the review. AI-generated differentiated resources are drafts, not finished products. A support version may use vocabulary that is still too complex. An extension version may include content beyond the curriculum. Five minutes of teacher review catches errors that would take 20 minutes to unpick during the lesson.
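Part of the review step can be supported with a quick automated check. The sketch below flags sentences in a support version that exceed a word limit, such as the 15-word limit in the Year 9 biology prompt. The function name `long_sentences` is hypothetical, and the sentence splitting is deliberately naive (it will mis-split abbreviations such as "e.g."), so treat the result as a prompt for a closer read, not a verdict.

```python
import re


def long_sentences(text, max_words=15):
    """Return sentences in `text` that contain more than `max_words` words."""
    # Naive split: break after '.', '!' or '?' followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if len(s.split()) > max_words]


support_text = (
    "The heart pumps blood. "
    "The right ventricle sends deoxygenated blood through the pulmonary "
    "artery to the lungs where it collects oxygen again."
)

for sentence in long_sentences(support_text):
    print("Too long:", sentence)
```

A check like this only covers sentence length. Vocabulary level, accuracy and curriculum fit still need the teacher's own read-through.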

AI differentiation links to Rosenshine's principles of instruction (Rosenshine, 2012). Knowing the links helps you use AI tools as part of your teaching, not as a separate activity.

Principle 1 (Daily review): AI designs review questions at three levels. Lower attaining learners recall facts. Middle attaining learners explain links between ideas. Higher attaining learners apply knowledge (Anderson & Krathwohl, 2001). The difficulty increases, but all learners use what they already know.

Principle 6 (Check for understanding): AI creates tiered exit tickets that assess the same learning objective at different depths. This gives you diagnostic data on every learner, not just the learners who put their hands up.

Principle 10 (Weekly and monthly review): AI builds personalised revision based on each learner's difficult topics. This gives learners targeted questions instead of generic worksheets. Seneca and Carousel Learning automate this process (Willingham, 2009; Christodoulou, 2014; Didau & Rose, 2016).

AI creates differentiated materials quickly. Rosenshine (2012) guides when to use them effectively. Combining research-based timing with AI resources improves learner outcomes more than either alone.

AI differentiation can easily fit into your weekly lesson plans. Using AI will not greatly increase the time you spend preparing.

| When | AI Task | Teacher Task |
|---|---|---|
| Sunday evening (15 min) | Generate tiered resources for 3 key lessons | Review outputs, adjust tier assignments based on last week's data |
| Before each lesson (5 min) | Generate differentiated starter questions | Decide which learners need which tier today |
| During the lesson | N/A (resources already prepared) | Circulate, adjust scaffolding live, move learners between tiers |
| After the lesson (5 min) | Auto-mark exit tickets | Note which learners need tier adjustments for next lesson |
| Friday (10 min) | Generate personalised revision based on the week's data | Review revision materials, send home as weekend homework |

Total additional AI time: approximately 40 minutes per week. Total time saved on manual resource creation: approximately 2-3 hours per week. The net gain is not just time but quality. Resources that would have been a single worksheet for the whole class become three purposeful versions that genuinely meet different needs.

Choose one lesson with a class you know well. Select a task you would normally give to everyone as the same worksheet. Open ChatGPT, Claude, or Diffit and use this template:

"I am teaching [subject] to [year group]. The learning objective is [objective]. Create three versions of a [task type]:

Support: [specific scaffold type, e.g., sent

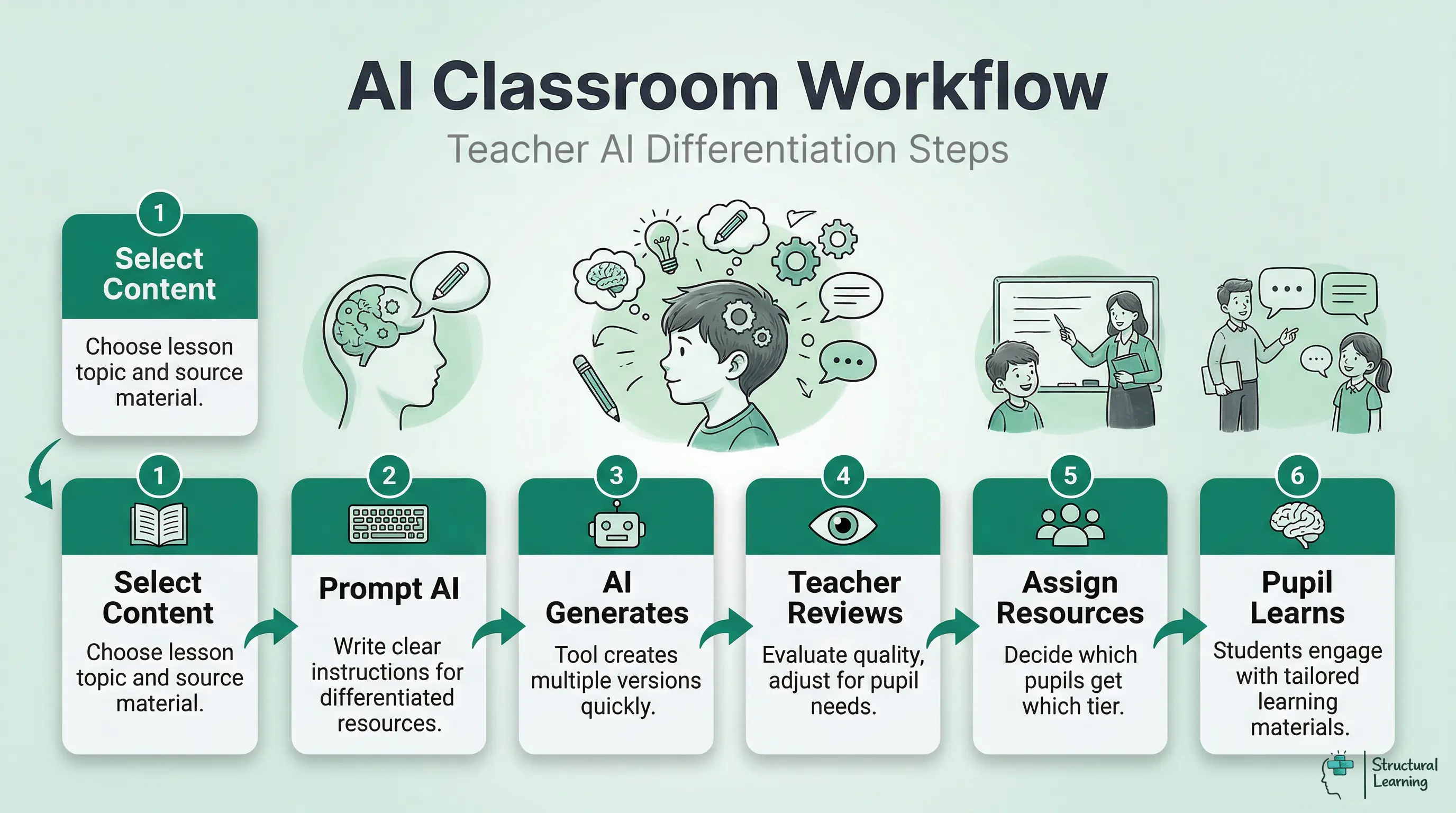

AI tools quickly adapt learning materials, saving time. Teachers decide which learners need extra help, as shown by researchers (Name, Date). This shifts resource creation away from teachers. AI does not replace teachers' expert judgement. Teachers typically start by prompting an AI tool to generate three versions of a single worksheet or reading task. The prompt must specify the exact changes needed between tiers, such as adding a word bank for support or requiring evaluative answers for greater depth. After generation, the teacher reviews and refines the materials before using them in a lesson. We know time is tight. Lesson prep is faster now. Creating tiered resources takes minutes, not an hour. This lets you assess each learner and give feedback in class. Teachers often use vague AI prompts like "make this easier." This produces shorter text, not good learning support. Always check AI output for accuracy and pedagogical fit (Kasneci et al., 2023). Teachers must ensure vocabulary and complexity suit the learner's year group. Adapting teaching to current learner needs improves results, research shows (Bloom, 1984). AI offers a faster way to produce diverse learning materials. This supports effective teaching approaches, as outlined by Vygotsky (1978) and Piaget (1936). AI tools make question sets fast, adjusting difficulty. Teachers can create recall and thinking questions. This allows targeted questioning, saving writing time.Frequently Asked Questions

What is AI differentiation in the classroom?

How do teachers use AI for differentiation?

What are the benefits of using AI for lesson differentiation?

What are common mistakes when using AI to differentiate learning?

What does research say about differentiated instruction and AI?

How can AI adapt questioning for mixed ability classes?

Core: [the standard task with open questions]

Extension: [higher-order challenge, e.g., evaluate, compare, apply to new context]

All three versions must address the same learning objective. Format each to fit on one A4 page."

Review the output. Print the three versions. Distribute based on your assessment of where each learner is with this specific topic. After the lesson, note what worked and what needed adjusting. That single lesson gives you enough information to decide whether AI differentiation is worth scaling across your teaching.

See our AI for teachers hub for AI tools in the classroom. Find AI marking and feedback for assessment differentiation. Use our guide for managing mixed ability groups with differentiation strategies. (Tomlinson, 2001; Vygotsky, 1978).

Differentiation is supported by research, and recent studies investigate AI help. Tomlinson (2014) and Hattie (2012) offer important frameworks for effective differentiated teaching, and Kasneci et al. (2023) review how AI can support learner progress.

How to Differentiate Instruction in Academically Diverse Classrooms

Tomlinson (2001)

Tomlinson's framework supports adapting content, process, and product in response to learners' readiness, interest and learning profile (Tomlinson, 2014). It remains the standard approach to differentiation and is a useful lens when considering AI tools for learners.

Mind in Society: The Development of Higher Psychological Processes

Vygotsky (1978)

Vygotsky believed learning comes before development. This idea supports scaffolding and learner support. AI tools now create these at scale. Differentiation theory uses this concept.

Principles of Instruction: Research-Based Strategies That All Teachers Should Know

Rosenshine (2012)

Rosenshine (2012) shows differentiation helps learners. Check understanding, use scaffolding, and give practice. AI tools create resources for each principle we discuss.

ChatGPT for Good? On Opportunities and Challenges of Large Language Models for Education

Kasneci et al. (2023)

LLMs offer personalised learning, and teachers need to understand their capabilities and limits when using them with each learner (Kasneci et al., 2023).

Generative Artificial Intelligence in Education

Department for Education (2023)

The UK government advises on AI in schools. This includes personalised learning and differentiation. Expect school AI policies and learner data protection (UK Gov, 2024). Balance AI tools with teacher resources and judgement (EEF, 2021).
