CAT4 Tests: A Teacher's Guide to Cognitive Abilities Testing

* CAT4 measures how students think and learn rather than what they have already memorised.

* The assessment identifies a student's potential across four distinct areas of reasoning.

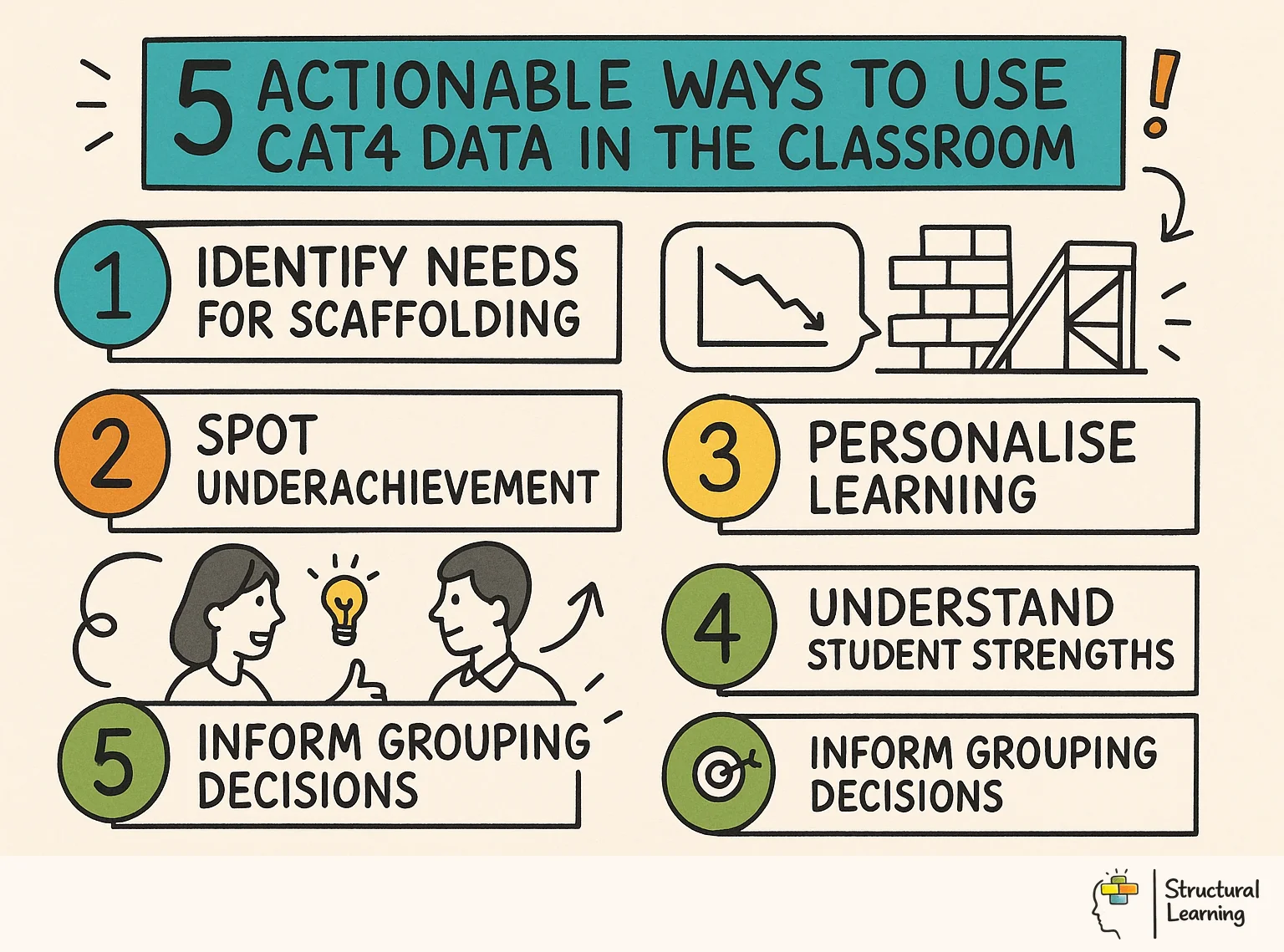

* Teachers can use CAT4 profiles to identify students who may require visual scaffolds or literacy support.

* Data from these tests helps schools spot underachievers whose low attainment masks high cognitive potential.

* The results provide a reliable baseline that is independent of a student's prior schooling or English language proficiency.

* CAT4 is not a measure of fixed intelligence and teachers should use it as a starting point for further investigation.

* Reliable data allows for more precise

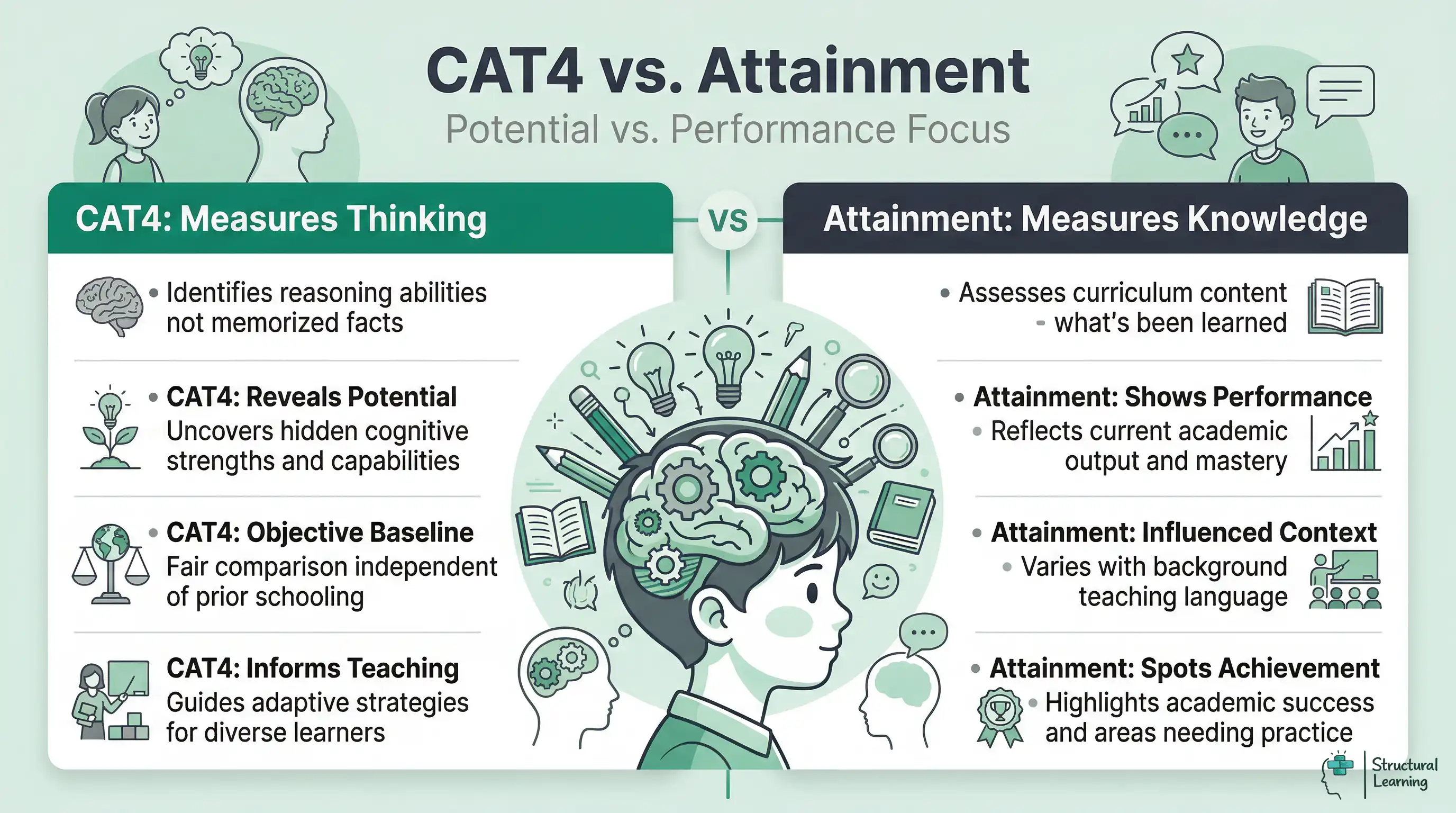

The Cognitive Abilities Test Fourth Edition (CAT4) is a diagnostic tool used by thousands of UK schools. It provides a strong measure of a student's reasoning abilities across four distinct batteries. Unlike SATs or GCSEs, it does not assess knowledge of the curriculum. Instead, it looks at how a student processes information and solves problems.

Professor David Lohman, a key figure in the development of cognitive testing, argues that these assessments measure "developed abilities." These are the skills that students have acquired over time through their environment and education. They are the tools a student uses to learn new content. By measuring these tools, we can predict how easily a student might grasp complex concepts in the future.

Schools usually administer the CAT4 at transition points such as Year 3, Year 7, and Year 9. The test is digital and adaptive, meaning it adjusts to the student's performance. This ensures that the assessment remains challenging but not demotivating. For the busy teacher, the primary value lies in the reports generated after the test. These reports provide a snapshot of a student's cognitive profile that attainment data often misses.

Teachers often feel sceptical about "more data." However, CAT4 is different because it offers a "standardised" view. It compares a student to a national sample of the same age. This helps teachers understand if a student is struggling because of a lack of knowledge or a genuine cognitive barrier. Ian Deary, a leading researcher in differential psychology, has shown that cognitive ability is one of the strongest predictors of educational success. Understanding this baseline allows us to set realistic but challenging expectations.

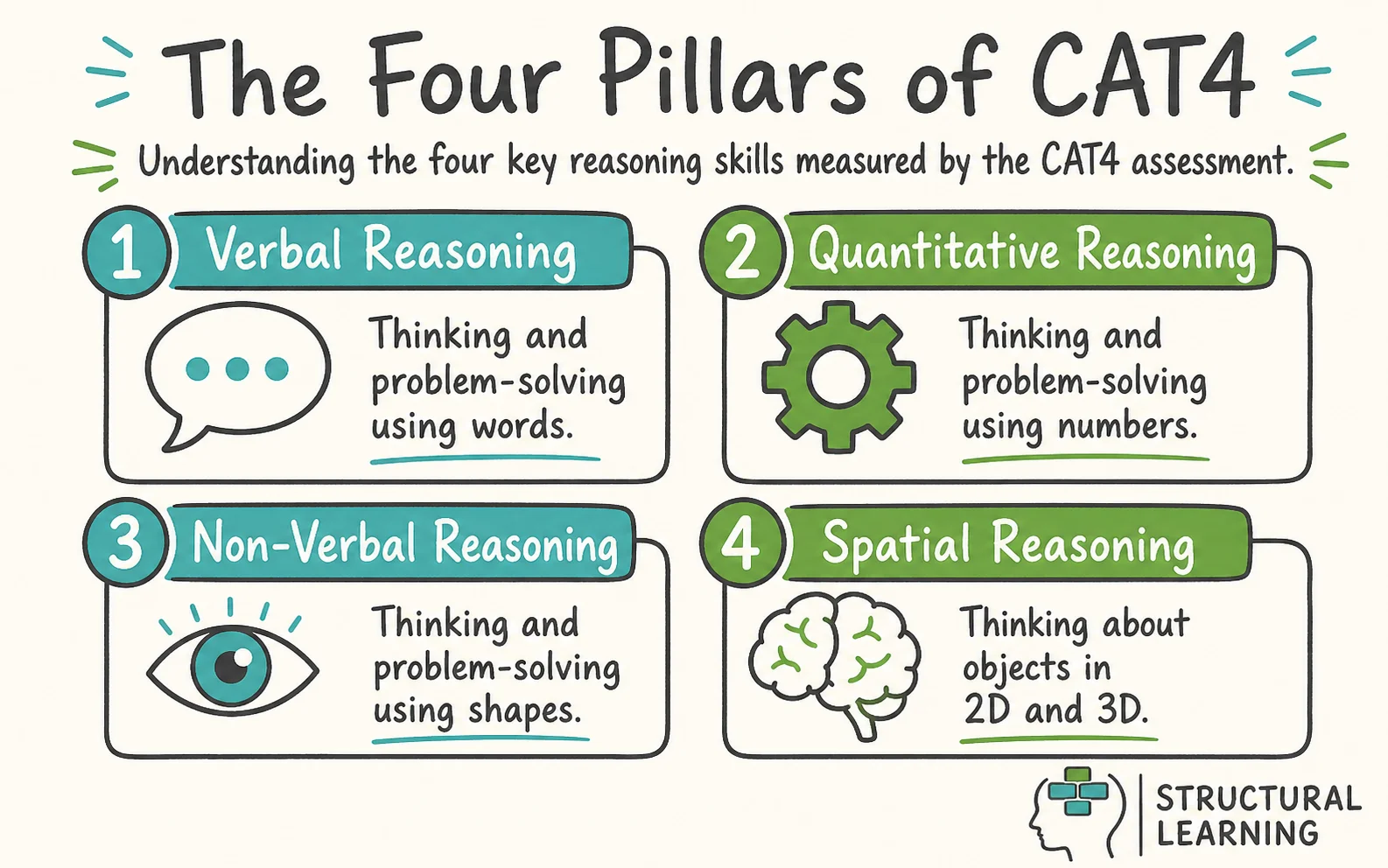

The CAT4 assessment splits into four batteries. Each battery focuses on a different way of thinking. Understanding these categories allows teachers to tailor their explanations and resources to the student's natural strengths.

This battery looks at a student's ability to work with numbers and numerical sequences. It is not a test of arithmetic. Instead, it measures how a student identifies patterns and relationships between quantities. It identifies the "mathematical mind" rather than the student who has just practised their They can manipulate numbers mentally and understand the logic behind formulas. In a Science lesson, these students will quickly spot trends in data tables. If a student has a low quantitative score but high verbal ability, they may need you to "talk through" the logic of a maths problem before they can solve it.

Spatial reasoning measures the ability to visualise and manipulate 2D and 3D images in the mind. It is often the most overlooked battery, yet it is important for STEM subjects. Students must imagine how a shape would look if it were rotated or folded. This skill is vital for understanding geometry, molecular structures in Chemistry, or technical drawing in Engineering.

A student with high spatial reasoning is often a "big picture" thinker. They may struggle with linear, step-by-step instructions but can grasp a complex system if they see a model of it. Research shows that spatial skills are a strong predictor of future success in technical fields. If you have a student who can build incredible structures in Lego or Minecraft but cannot write a paragraph, check their spatial score. It is likely very high.

The reports generated by GL Assessment can feel overwhelming. However, three key numbers matter most for the classroom teacher.

The Standard Age Score (SAS) is the most important score. It places the student on a scale where 100 is the national average for their age. Most students fall between 85 and 115. A score above 126 puts a student in roughly the top 4% nationally, while a score below 74 suggests significant learning needs. Using the SAS allows you to compare students across different year groups and subjects fairly.

The National Percentile Rank (NPR) tells you where a student stands compared to 100 other students of the same age. An NPR of 75 means the student performed better than 75% of their peers. It is a useful way to explain results to parents. It provides a clear picture of a student's relative standing without the technical jargon of the SAS.
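To see roughly how a standardised score relates to a percentile rank, the sketch below assumes scores follow a normal distribution with a mean of 100 and a standard deviation of 15. The function name `sas_to_npr` is hypothetical, and the published conversion tables should take precedence over this approximation.

```python
from statistics import NormalDist

# Assumption: standardised scores are normally distributed, mean 100, SD 15.
SAS_DISTRIBUTION = NormalDist(mu=100, sigma=15)


def sas_to_npr(sas: float) -> int:
    """Approximate the percentile rank for a standardised score."""
    percentile = SAS_DISTRIBUTION.cdf(sas) * 100
    # Percentile ranks are conventionally reported between 1 and 99.
    return min(99, max(1, round(percentile)))


for score in (85, 100, 115):
    print(score, sas_to_npr(score))
```

Under these assumptions, scores of 85 and 115 sit near the 16th and 84th percentiles, which is why most students fall between them.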

The Stanine scale divides the national results into nine groups. Stanines 1 to 3 are below average, 4 to 6 are average, and 7 to 9 are above average. This is a "broad brush" measure. It is useful for quickly grouping students for interventions. For example, you might decide to provide extra challenge for all students in Stanine 8 and 9.
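The grouping can be sketched in a few lines. This example uses the standard statistical definition of stanines, in which the nine groups hold 4, 7, 12, 17, 20, 17, 12, 7 and 4 percent of the population. The function names are hypothetical, and the exact boundaries in a published report may differ slightly.

```python
from bisect import bisect_left

# Cumulative upper boundaries (in percent) of stanines 1 to 8,
# derived from the standard 4-7-12-17-20-17-12-7-4 split.
STANINE_UPPER_BOUNDS = [4, 11, 23, 40, 60, 77, 89, 96]


def percentile_to_stanine(percentile: float) -> int:
    """Map a percentile rank (1-99) to a stanine from 1 to 9."""
    return bisect_left(STANINE_UPPER_BOUNDS, percentile) + 1


def stanine_band(stanine: int) -> str:
    """Return the broad band described in the text for a stanine."""
    if stanine <= 3:
        return "below average"
    if stanine <= 6:
        return "average"
    return "above average"


for rank in (10, 50, 92):
    stanine = percentile_to_stanine(rank)
    print(rank, stanine, stanine_band(stanine))
```

A filter such as `stanine >= 8` would then pick out the students flagged for extra challenge.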

John Hattie's research on

The most powerful part of the CAT4 is the "profile." This is the relationship between the four batteries. Most students have a "balanced profile," meaning their scores across all four areas are similar. However, many students have a "biased profile."

A student with a verbal bias has a verbal reasoning score that is significantly higher than their other scores. These students are "word-rich." They love reading and talking. However, they may struggle with visual tasks or complex data. In a Geography lesson, they might write a brilliant essay on coastal erosion but fail to draw an accurate map. They need help with "visualising" concepts.

A spatial bias is common in many students, especially those who find traditional school challenging. These students have spatial scores that are much higher than their verbal scores. They think in pictures. They often feel frustrated in a classroom that relies heavily on talking and writing.

If you have a student with a spatial bias, your teaching must change. Use physical models. Use mind maps. Allow them to use software like SketchUp or even draw their responses. These students are often the future architects and engineers of the world. They are not "bad at English"; they are simply "spatial thinkers" operating in a "verbal system."

Students with a non-verbal bias are excellent at identifying patterns but may struggle to express them. You will often see this in EAL (English as an Additional Language) students. Their high non-verbal score shows they have the cognitive engine to succeed. They simply lack the "verbal fuel" at the moment. This data is vital for ensuring we do not place highly capable EAL students in low-ability sets just because their English is developing.
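One way to flag a possible bias in a set of battery scores is sketched below. The 10-point threshold and the function name `describe_profile` are illustrative assumptions, not the rule used in the official reports. The sketch compares the strongest battery with the average of the other three.

```python
def describe_profile(scores: dict[str, float], threshold: float = 10.0) -> str:
    """Label a profile as balanced or biased towards its strongest battery.

    The threshold is a hypothetical cut-off chosen for illustration.
    """
    strongest = max(scores, key=scores.get)
    others = [value for name, value in scores.items() if name != strongest]
    gap = scores[strongest] - sum(others) / len(others)
    if gap >= threshold:
        return f"{strongest} bias"
    return "balanced"


# Hypothetical students, invented for this example.
word_rich = {"verbal": 120, "quantitative": 100, "non-verbal": 98, "spatial": 96}
even = {"verbal": 101, "quantitative": 99, "non-verbal": 100, "spatial": 102}

print(describe_profile(word_rich))
print(describe_profile(even))
```

A label produced this way is only a prompt to look more closely at the individual scores, not a diagnosis.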

Data is only useful if it changes what happens in the classroom. Once you have identified the profiles in your class, you can adapt your teaching. This is not about "learning styles," which research has largely debunked. Instead, it is about "cognitive

For students with low verbal reasoning scores, the barrier is language. You should reduce the "verbal load" of your lessons. This does not mean dumbing down the content. It means using

In a Science lesson, instead of just describing the process of osmosis, show a clear animation. Give the student a diagram with missing labels. Let them process the concept through the image before they have to write about it. This uses their (often stronger) non-verbal or spatial skills to support their weaker verbal skills.

Students with high spatial scores often finish tasks quickly but might miss the details. Give them tasks that require mental rotation or 3D thinking. In Maths, let them explore the properties of shapes through physical manipulation. In History, ask them to "map out" the causes of a war using a physical or digital timeline that shows the scale of events.

These students often excel when given "open-ended" problems. If you are teaching about the Industrial Revolution, ask them to design a more efficient factory layout. This allows them to use their spatial reasoning to solve a historical problem. It moves them from simple recall to higher-level synthesis.

* English: Use the verbal SAS to set reading targets. If a student has a high verbal score but low attainment, look for barriers like dyslexia or low confidence.

* Maths: Look for the gap between the quantitative and spatial scores. A student high in quantitative but low in spatial may struggle with geometry despite being great at algebra.

* PE and Drama: High spatial reasoning is often linked to "situational awareness." These students may naturally "see" the space on a pitch or a stage.

* Art and Design: High spatial and non-verbal scores are your indicators for talent here. These students might be your most creative thinkers.

CAT4 is an exceptional tool for identifying neurodivergent learners who might otherwise slip through the cracks. It often reveals a "spiky profile." This is where there is a massive difference between the highest and lowest scores.

A student with high non-verbal and quantitative scores but a very low verbal score should be screened for dyslexia. The CAT4 shows that the "intellectual engine" is working perfectly, but the "output mechanism" (language) is blocked. Identifying this early prevents the student from feeling "stupid" and allows for targeted literacy intervention.

Many students on the However, CAT4 helps us find the "quietly gifted." These are students with high SAS scores (above 126) who are not currently excelling. They might be bored, or they might be masking their ability to fit in. Using CAT4 data ensures that we provide the right level of challenge for every student, regardless of their current output.

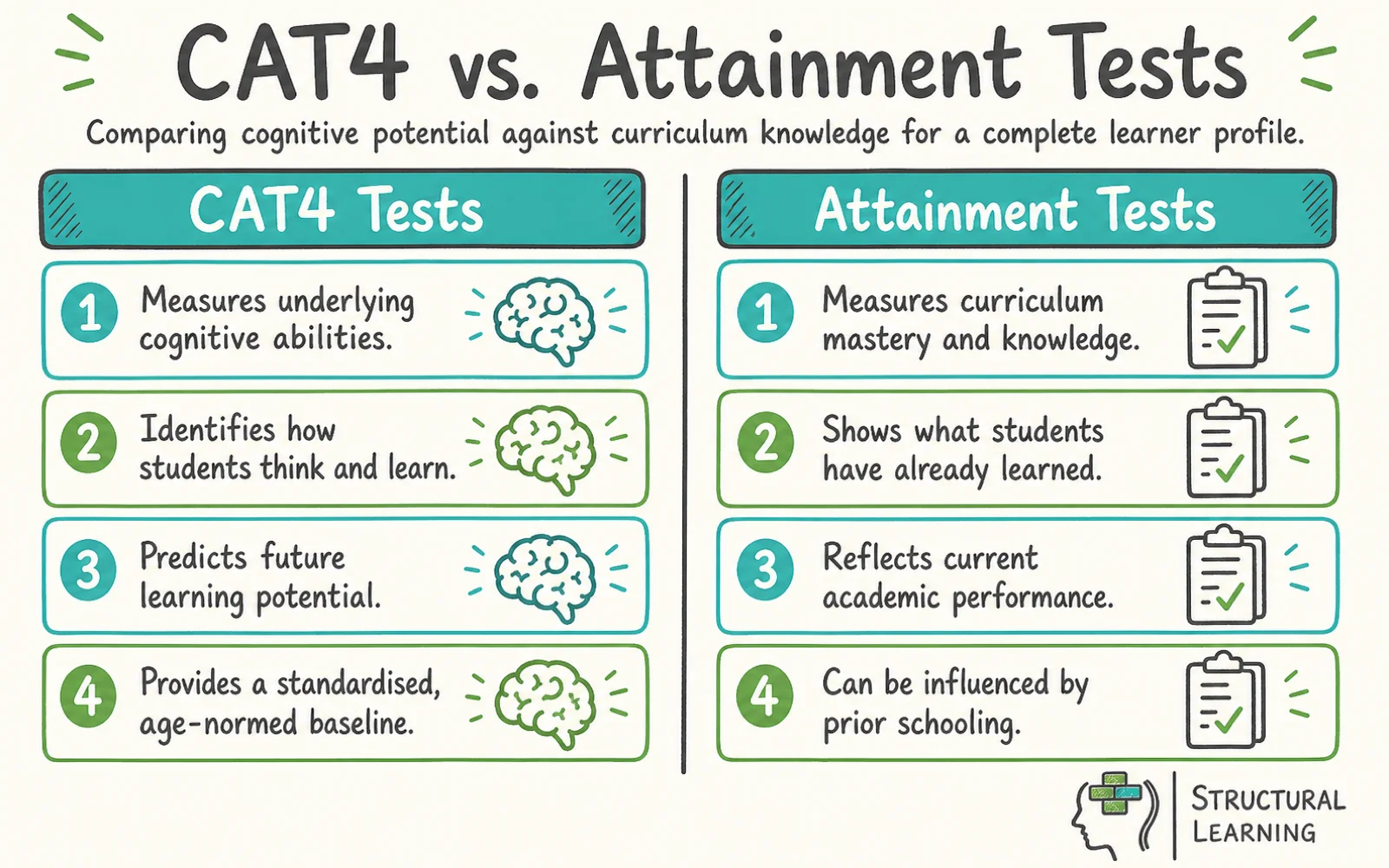

Teachers often hear about different tests and wonder how they compare. Below is a comparison of the most common assessments used in schools.

| Feature | CAT4 (GL Assessment) | WISC-V (Wechsler) | NNAT3 (Naglieri) | MidYIS (CEM) |
| :--- | :--- | :--- | :--- | :--- |
| Primary Use | Classroom baseline & profiling | Clinical diagnosis (e.g. SEN) | Non-verbal potential | Secondary school baseline |
| Delivery | Group (Digital) | One-to-one (Professional) | Group (Digital/Paper) | Group (Digital) |
| Time Taken | Approx. 2 hours | 60-90 minutes | 30 minutes | 45-60 minutes |
| Components | 4 Batteries (V, NV, Q, S) | 5 Indexes (Full IQ) | 1 Battery (Non-Verbal) | 4 Sections (V, NV, Q, Sk) |
| Language | Low reliance (except Verbal) | High reliance | No language reliance | Moderate reliance |
| Cost | Moderate (per student) | High (requires specialist) | Low | Moderate |

The CAT4 is the "middle ground." It is more detailed than a simple non-verbal test like the NNAT3, but it is much more practical for schools than the WISC-V, which requires an Educational Psychologist. MidYIS is similar but focuses more on predicting GCSE grades, whereas CAT4 focuses more on the "how" of learning.

Scepticism often comes from a misunderstanding of what the test is for. Let's address the most common myths.

CAT4 measures cognitive ability, which is related to IQ but is not a "score for life." Cognitive abilities can develop. If a student is exposed to a rich, stimulating environment, their ability to reason will improve. We should see CAT4 as a "current state" of their learning tools, not a permanent label.

While there is a strong correlation between CAT4 and GCSE grades, it is not a guarantee. A student with a high SAS might still fail if they do not work hard or if they have poor mental health. Conversely, a student with a lower SAS can "over-perform" through sheer grit and excellent teaching. We call this "value-added."

There is a debate about whether CAT4 results should be shared with students. However, many schools find that sharing the "profile" (not necessarily the number) helps students understand themselves. Telling a student "You are a visual thinker, so you should use diagrams to revise" is enabling. It moves the conversation away from "how clever am I" to "how do I learn best."

You do not need to spend hours staring at spreadsheets. Here is how to use the data efficiently.

Does CAT4 require any preparation?

No. Students should not "tutor" for CAT4. Doing so invalidates the results. The goal is to see their natural reasoning, not how well they can practise a specific test format. A short practice session to familiarise them with the digital interface is all that is needed.

How does CAT4 help with EAL students?

EAL students often have a "false low" in verbal reasoning. Their non-verbal and spatial scores show you their true potential. If a student has an SAS of 120 in Non-Verbal but 70 in Verbal, you know they are highly intelligent and simply need intensive language support.

Can CAT4 scores go down?

Yes. A score might drop if a student was unwell during the test or did not take it seriously. It can also happen if a student's

The "mean" score is the average of the four batteries. While useful as a quick summary, it can hide a lot of information. A student could have a "mean" of 100 but have a 130 in Spatial and a 70 in Verbal. Always look at the individual battery scores to get the full picture.
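The arithmetic behind this warning is easy to check. In the sketch below, the Verbal and Spatial scores come from the example above, while the Quantitative and Non-Verbal scores of 100 are assumed so that the four batteries average to 100.

```python
from statistics import mean

# Hypothetical student: Quantitative and Non-Verbal values are assumed.
scores = {"Verbal": 70, "Quantitative": 100, "Non-Verbal": 100, "Spatial": 130}

values = list(scores.values())
mean_sas = mean(values)
spread = max(values) - min(values)

print(f"Mean SAS: {mean_sas}")
print(f"Gap between strongest and weakest battery: {spread}")
```

The mean looks entirely average, yet the 60-point gap between the strongest and weakest battery is the detail that should shape teaching.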

How often should we test?

Most schools test at the start of

Log into your school's data portal today and identify the three students in your class with the largest gap between their Verbal and Spatial scores. Provide a visual scaffold for their next written task and observe the impact on their engagement.
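If your portal lets you export scores, a short script can do this ranking for you. The sketch below uses invented student records and a hypothetical function name, `largest_verbal_spatial_gaps`. The field names are assumptions and will need adapting to your own export.

```python
# Hypothetical class data, invented for this example.
students = [
    {"name": "Student A", "verbal": 92, "spatial": 118},
    {"name": "Student B", "verbal": 105, "spatial": 101},
    {"name": "Student C", "verbal": 121, "spatial": 96},
    {"name": "Student D", "verbal": 88, "spatial": 90},
    {"name": "Student E", "verbal": 99, "spatial": 127},
]


def largest_verbal_spatial_gaps(records, count=3):
    """Return the records with the biggest absolute Verbal/Spatial gap."""
    return sorted(
        records,
        key=lambda record: abs(record["verbal"] - record["spatial"]),
        reverse=True,
    )[:count]


for student in largest_verbal_spatial_gaps(students):
    gap = student["spatial"] - student["verbal"]
    print(student["name"], f"spatial minus verbal: {gap:+d}")
```

The sign of the gap shows the direction of the bias, so a positive value points to a student who may benefit most from a visual scaffold.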

These studies examine the evidence base for cognitive ability testing and its application in educational settings.

The Structure of Cognitive Abilities: A Meta-Analytic Review

1,400+ citations

McGrew, K.S. (2009)

This meta-analytic review of the Cattell-Horn-Carroll model underpins the theoretical framework behind CAT4. It demonstrates that cognitive abilities cluster into distinct factors (verbal, spatial, quantitative) which predict different academic outcomes, validating the four-battery approach used in classroom profiling.

Cognitive Abilities and Educational Outcomes: A Longitudinal Analysis

2,800+ citations

Deary, I.J. et al. (2007)

This landmark study followed over 70,000 English students and found that cognitive ability measured at age 11 predicted 58% of the variance in GCSE results at age 16. It provides the strongest UK evidence for why baseline cognitive assessment adds genuine value to school data systems.

Spatial Ability and STEM Education: A Review

950+ citations

Wai, J., Lubinski, D. & Benbow, C.P. (2009)

This study demonstrates that spatial reasoning is a powerful predictor of STEM achievement, often more so than verbal or quantitative scores. For teachers using CAT4 data, it explains why the Spatial battery deserves as much attention as the Verbal battery when identifying talent and planning differentiation.

Identifying Academically Talented Students: Some General Principles, Two Specific Procedures

Lohman, D. F. (2011)

Lohman, a key researcher behind cognitive abilities testing, argues that such tests measure "developed abilities" shaped by experience rather than fixed intelligence. This chapter supports the view that CAT4 scores should inform teaching rather than create permanent labels for students.

Identifying Twice-Exceptional Students Using Cognitive Profiling

180+ citations

Foley-Nicpon, M. et al. (2011)

This study examines how cognitive profile analysis can identify twice-exceptional learners who are both gifted and have a specific learning difficulty. It validates the use of "spiky profiles" in CAT4 data to flag students who need both challenge and support simultaneously.

CAT4 is a cognitive ability measure. Scores guide pedagogy but don't limit potential. All learners benefit from high-quality teaching, challenge, and growth-focussed feedback.

From Structural Learning | structural-learning.com
