How to Write an IEP When the Student Has Made No Progress

A practical guide for IEP teams when a student has made no progress: writing present levels honestly, analysing what went wrong, rewriting goals.

Zero progress is not the worst outcome you can face in special education. Writing about it dishonestly is. When a learner ends the year at the same performance level they started, the IEP team has two choices. They can hide this fact with vague language and hopeful goals, or treat the flat trendline as useful data and build a better plan from it.

The second approach is the only one that serves the learner. It is also the only one that protects you legally.

This guide is for the Sunday night before the Monday morning annual review. It covers the legal framework, wording for documentation, the parent conversation and changes to goal writing. These steps can help turn a difficult meeting into a productive one.

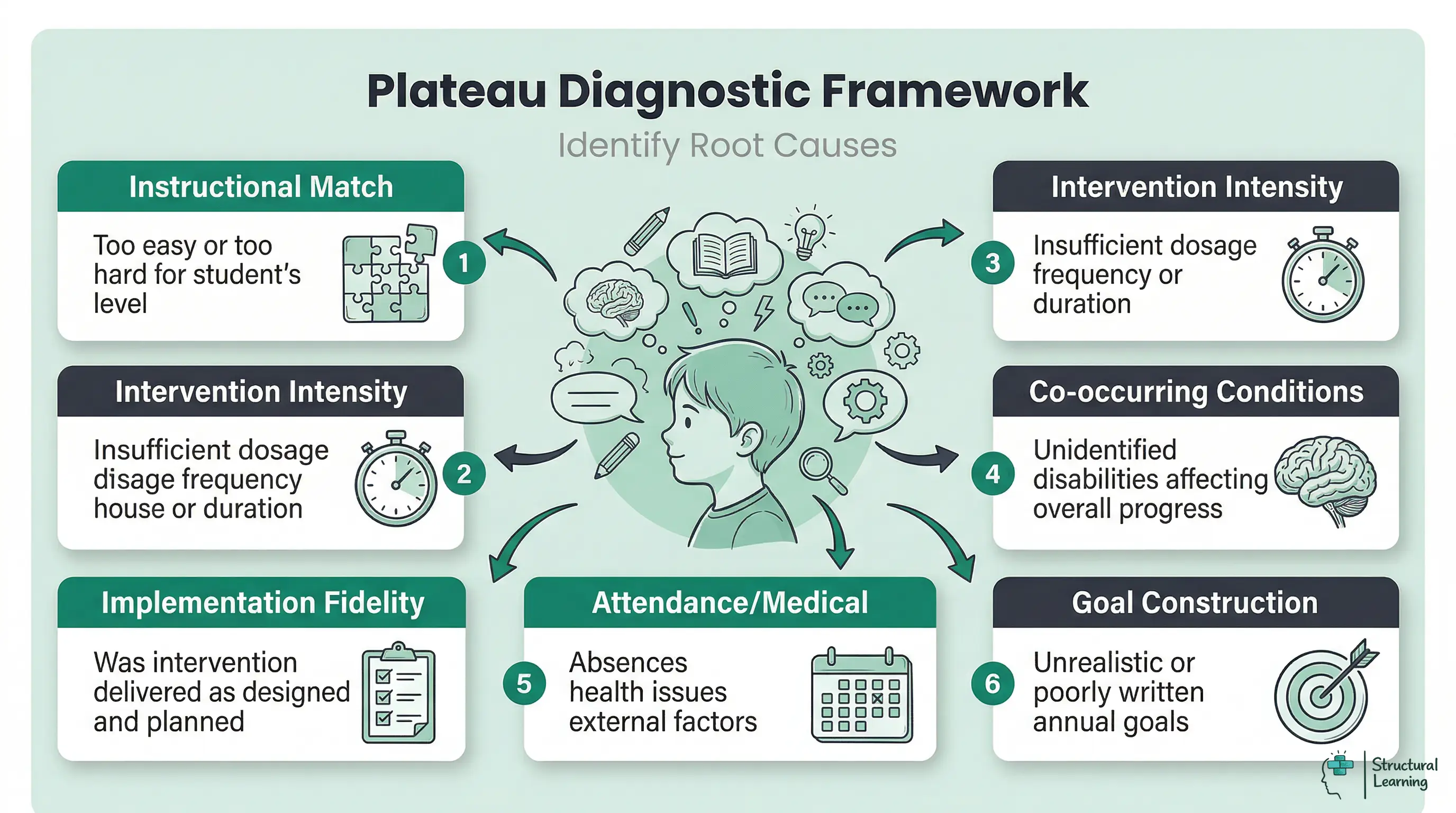

Before you write a single word of the revised IEP, identify why the trendline is flat. There is almost always a reason. Attributing zero progress to "the severity of the disability" without checking the evidence is professionally weak and legally risky.

Burns's (2004) drill-ratio work illustrates how the instructional level of a task can affect practice and performance. For an annual review, that means asking whether the task was pitched at a level where the learner could practise successfully and build fluency, rather than assuming that more time on an inaccessible task would produce progress.

Before the annual review, treat each point below as a hypothesis to test against the evidence:

Intervention fit. Was the task within the learner's current instructional reach? Use curriculum-based measurement and other progress-monitoring data to see whether the learner is improving on the target skill.

Deno's work on curriculum-based measurement supports frequent, standardised measures that show the trend over time. It still leaves the team to judge the task, goal and instructional route (Deno, 1985).

Intervention intensity. NCII describes Data-Based Individualisation as a way to tailor and strengthen proven interventions. Teams do this by checking progress, using diagnostic data and making changes. If the learner needed intensive support but got too little support, or support was not delivered consistently, the programme may not have matched the need (National Center on Intensive Intervention, 2013).

Implementation fidelity. Did the team deliver the intervention as planned? Session cancellations, staff changes, shorter lessons and sequence drift all matter.

Implementation fidelity means checking adherence, dosage, quality and participant responsiveness. Annual review notes should record what staff actually delivered, not only what the plan intended (Dane and Schneider, 1998).

Co-occurring or newly visible needs. If a learner is not responding to reading support, another barrier may be getting in the way. It may link to language, working memory, attention, anxiety, hearing, attendance or another access need.

Bring the evidence back to the IEP team. Then consider whether further evaluation or specialist input is needed, rather than assuming a diagnosis the evidence does not yet support.

Attendance and opportunity to learn. Record missed sessions, absences and timetable changes separately from how well the intervention worked. If a learner missed a large part of the intervention period, still report the trendline honestly. But do not treat limited chance to learn as proof that the teaching approach failed.

Goal construction. Some annual goals cannot be achieved from the moment they are written. This happens because the baseline was overestimated, the expected growth rate was too high for this disability, or the goal mixed several different subskills that should have been taught in sequence.

Take 20 minutes before the meeting to run through this list with your progress monitoring data in front of you. Your hypothesis about the primary cause will shape every other section of the revised IEP.

Many teachers approach an annual review that follows zero progress assuming they have done something wrong. In most cases, they have not. Treat the review as a starting point for professional discussion: identify the learner's current need, record evidence from more than one lesson, and agree the next classroom adjustment with the SENCO or family.

IDEA gives eligible children with disabilities a free appropriate public education through an IEP. The IEP includes present levels, measurable annual goals, progress measurement, and special education and related services. The legal standard does not guarantee that every target will be met. However, the IEP must be designed, reviewed and revised so the child has a meaningful opportunity to make appropriate progress.

The Supreme Court's ruling in Endrew F. v. Douglas County School District (2017) clarified the standard. The Court said that a child's IEP must be 'reasonably calculated to enable a child to make progress appropriate in light of the child's circumstances.' This standard is sometimes called 'appropriately ambitious.' It rejected the lower bar that some courts had applied, under which an IEP needed to offer only slightly more than minimal ('merely more than de minimis') progress.

Endrew F. is still a standard of reasonable calculation, however, not a guarantee of outcomes.

OSERS's Endrew F. Q&A says the IEP team makes a forward-looking judgement. The team uses the child's progress, potential for growth, parent views and other information. Yell and Bateman (2017) provide teacher-focused analysis of the same decision.

In practice, the safeguard is a clear evidence trail: accurate present levels, a reasonable plan, recorded delivery and prompt revision when data show that expected progress is not happening.

What creates legal vulnerability is not a flat trendline. It is:

- Writing goals that were never going to be achievable (setting the learner up to fail on paper)

- Failing to collect and document progress monitoring data throughout the year

- Ignoring a flat trendline mid-year without any documented response (no IEP amendment, no team meeting, no change in approach)

- Describing present levels in ways that do not show what the learner can actually do

Complete progress-monitoring and delivery records show what the team knew and how it responded. IDEA Sec. 300.324 requires periodic review, at least annually, and revision where there is lack of expected progress. A flat trendline should therefore lead to documented analysis and a revised plan, not a copied goal.

You can also strengthen your documentation by referencing multi-tiered system of supports (MTSS) and response to intervention (RTI) frameworks. For example, a learner may have moved through Tier 1 and Tier 2, and now need Tier 3 intensive intervention. If you have documented data at each stage, this gives a clear evidence trail that supports the current IEP decisions.

The Present Level of Academic Achievement and Functional Performance (PLAAFP) is the section where most educators make their first mistake when progress has been zero. The instinct is to soften the language, add qualifying phrases, and avoid stating the flat trendline directly.

This instinct is understandable. It is also counterproductive.

A PLAAFP that obscures the reality of zero progress creates three problems. It gives parents inaccurate information about their child's performance. It makes it harder to justify the instructional changes you are about to propose. And it reduces the document's credibility as a legal record.

Write the PLAAFP using the following template as a starting frame, then adapt to your specific data:

"At the beginning of the [academic year], [learner name] demonstrated [specific skill] at [precise baseline measure] as measured by [assessment tool or method]. The annual goal targeted [specific growth] by [target review point]. Progress monitoring data was collected [frequency, e.g., every two weeks] using [tool].

This showed that [learner name] performed at [current level] at the end of the year. This represents [amount of growth] over the intervention period. This rate of progress indicates that the current instructional approach requires modification. Contributing factors identified by the team include [list from your diagnostic analysis above]."

Notice what this template does. It is specific (it names the measure, the baseline, the target, and the outcome). It is honest (it names the gap between target and reality). It is analytical rather than defensive (it moves immediately to contributing factors, treating the data as information rather than accusation). And it is forward-pointing (the final phrase sets up the revised plan).

Here is a worked example:

"At the beginning of the 2024-25 academic year, Marcus demonstrated oral reading fluency at 42 words correct per minute (WCPM) on Grade 3 passages as measured by AIMSweb Plus. The annual goal targeted 70 WCPM by May. Progress monitoring data collected biweekly across 18 data points indicated that Marcus performed at 47 WCPM at the close of the year.

Growth of 5 WCPM over 36 weeks falls significantly below the 28 WCPM gain targeted. The team identified three contributing factors. These include 19 absences in the second semester and a personnel change in January that disrupted intervention consistency for six weeks. New assessment data also suggests working memory deficits that may require instructional accommodation."

This paragraph is uncomfortable to write. It will also protect you, inform the parents accurately, and build a coherent justification for the changes ahead.
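The arithmetic in the worked example is worth checking before it goes into a legal document. The short script below is a minimal sketch that uses only the figures quoted for Marcus (42, 47 and 70 WCPM over 36 weeks). The variable names are illustrative, and the per-week rates are a simple average, not a statement about how growth was distributed across the year.

```python
# Figures taken from the worked PLAAFP example for Marcus.
baseline_wcpm = 42
end_of_year_wcpm = 47
goal_wcpm = 70
weeks = 36

observed_gain = end_of_year_wcpm - baseline_wcpm  # growth actually recorded
targeted_gain = goal_wcpm - baseline_wcpm         # growth the goal required

# Simple average rates across the whole intervention period.
observed_rate = observed_gain / weeks
targeted_rate = targeted_gain / weeks
share_of_target = observed_gain / targeted_gain
remaining_gap = goal_wcpm - end_of_year_wcpm

print(f"Observed gain: {observed_gain} WCPM ({observed_rate:.2f} per week)")
print(f"Targeted gain: {targeted_gain} WCPM ({targeted_rate:.2f} per week)")
print(f"Share of targeted gain achieved: {share_of_target:.0%}")
print(f"Remaining gap to goal: {remaining_gap} WCPM")
```

Stating the gain as a weekly rate alongside the raw totals can make the size of the shortfall easier for the team and the family to see.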

Data-based individualisation (DBI) is the systematic process of using progress monitoring data to identify why an intervention is not working and to make targeted adjustments. It addresses a common problem in special education, also discussed by Fuchs and Fuchs (2007): teachers use an intervention without a clear plan for what to do if it does not work.

DBI asks four questions in sequence:

1. Is the learner responding to the intervention? Look at the slope of the progress monitoring data. A flat or declining slope across at least six to eight data points signals non-response. A slope that started upward and then plateaued signals a different problem (the learner may have reached a performance ceiling within that specific task type).

2. Was the intervention implemented as intended? Check your fidelity records, session logs, and any notes on staff changes or timetable disruptions. If you cannot answer this with documentation, that is itself a finding. Running an intervention without fidelity data is one of the most common gaps in special education practice.

3. Was the goal calibrated sensibly? Deno's (1985) work supports frequent curriculum-based measures that make the learner's growth visible. Use the progress-monitoring tool's norms, local data and team judgement to answer this question; do not cite CBM as if it supplies one universal growth rate for every learner.

4. What needs to change? Fuchs and Fuchs (2007) identify two options: instructional modifications and programme changes. Teams can adjust intensity, frequency or pacing. If needed, they can change the method completely. Use data to guide intervention decisions.
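The slope check in the first question can be sketched in a few lines. The example below fits an ordinary least-squares line through the progress-monitoring scores and reports the slope in score units per week. The function name `trend_slope` and the sample scores are hypothetical, invented for illustration. Whether a given slope counts as "flat" remains a team judgement informed by the tool's norms; the code does not decide that.

```python
def trend_slope(weeks, scores):
    """Ordinary least-squares slope of scores against weeks (score units per week)."""
    if len(weeks) != len(scores) or len(weeks) < 2:
        raise ValueError("need at least two paired observations")
    mean_w = sum(weeks) / len(weeks)
    mean_s = sum(scores) / len(scores)
    # Sum of cross-deviations and sum of squared deviations in weeks.
    sxy = sum((w - mean_w) * (s - mean_s) for w, s in zip(weeks, scores))
    sxx = sum((w - mean_w) ** 2 for w in weeks)
    if sxx == 0:
        raise ValueError("weeks must not all be identical")
    return sxy / sxx


# Hypothetical fortnightly WCPM scores, invented for illustration only.
weeks = [0, 2, 4, 6, 8, 10, 12, 14]
scores = [42, 43, 41, 44, 42, 43, 44, 43]

slope = trend_slope(weeks, scores)
print(f"Trend: {slope:.2f} WCPM per week across {len(scores)} data points")
```

A fitted slope is less sensitive to one unusually high or low score than a comparison of the first and last data points, which is one reason to look at the whole series.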

Run through this sequence with your data before the annual review meeting. Document your answers. This process is both good practice and a clear demonstration to parents that the team has analysed the plateau rigorously rather than simply writing new goals and hoping for different results.

Formative assessment tools can be embedded throughout the year to catch a plateau before it spans the full annual cycle. Your school needs a decision rule for responding to lack of progress. For example: "If six consecutive data points fall below the aimline, hold a team meeting." Having this rule prevents the Sunday-night situation you are now in.
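A decision rule of this kind is easy to check mechanically. The sketch below assumes the aimline is a straight line from the baseline to the goal and uses the six-point threshold from the example rule. The function names and the sample scores are hypothetical, and a school would substitute its own criterion.

```python
def longest_run_below(scores, aimline_values):
    """Length of the longest run of consecutive scores strictly below the aimline."""
    longest = current = 0
    for score, expected in zip(scores, aimline_values):
        # Extend the run if this point is below the aimline, otherwise reset it.
        current = current + 1 if score < expected else 0
        longest = max(longest, current)
    return longest


def meeting_triggered(scores, aimline_values, run_length=6):
    """True when the decision rule says the team should meet."""
    return longest_run_below(scores, aimline_values) >= run_length


# Assumed straight-line aimline from baseline (42) to goal (70) over 36 weeks.
baseline, goal, total_weeks = 42, 70, 36
weeks = [0, 2, 4, 6, 8, 10, 12, 14]
aimline_values = [baseline + (goal - baseline) * w / total_weeks for w in weeks]

# Hypothetical fortnightly scores, invented for illustration only.
scores = [42, 43, 41, 44, 42, 43, 44, 43]

print("Longest run below aimline:", longest_run_below(scores, aimline_values))
print("Hold a team meeting:", meeting_triggered(scores, aimline_values))
```

Running the check each time a new data point is entered means the rule fires mid-year rather than being discovered at the annual review.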

After running the DBI analysis, you are ready to write revised goals. The core principle is simple: next year's goals must be different from this year's goals in some substantive way. Writing identical goals for a learner who made no progress is not just pedagogically unsound. It is legally indefensible under the Endrew F. standard.

The differences can be in any of the following dimensions:

Baseline accuracy. Use this year's end-of-year data, not the original baseline, as next year's starting point. This sounds obvious, but it is frequently done incorrectly. If Marcus ended the year at 47 WCPM, his IEP should not start from a baseline of 42 WCPM simply because that was the original entry point.

Endrew F. requires IEP teams to design goals that are appropriately ambitious in light of the child's circumstances. OSERS guidance says teams should consider present levels, previous rate of progress, parent information and potential for growth. That is stronger than copying an unmet goal, but it is still an individualised judgement rather than a fixed growth formula.

Subskill sequencing. If a large goal (increase oral reading fluency to 70 WCPM) produced no progress, consider whether the goal needs to be broken into component subskills. Phonemic awareness, decoding accuracy, sight word recognition, and prosody are all separable skills that contribute to fluency.

A learner who did not improve fluency may have made growth in one of these subskills that is invisible in the fluency measure. Identify the foundational skill that needs to be secured before the composite skill can grow.

Instructional approach. If the analysis indicates that the current intervention is not the right match for the learner, the goal needs to reflect a change in methodology. Document the new approach clearly: not just "specialised reading instruction" but the specific programme name, the instructional principles it is based on, and why it is a better match for the learner's profile.

Frequency and duration. If the learner was receiving 30 minutes per day and made no progress, this is not automatically an argument for more of the same. It may be an argument for a different intensity pattern, a different method or clearer diagnostic assessment. NCII's DBI framework asks teams to adapt intensity and intervention features in response to progress-monitoring data rather than rely on a single dosage rule.

The literature on scaffolding is relevant here too. Vygotsky's zone of proximal development is a useful lens for goal-setting: a goal that needs major support must have clear scaffolding built into the plan, not just a target date.

When a learner struggles with the curriculum, not just one separate skill, review differentiation, scaffolding and accessibility. In simple terms, ask whether the route through the curriculum was accessible to the learner. Keep the attribution modest: these are planning principles. They do not prove that one named strategy will solve a zero-progress problem.

The language you use in the annual review meeting matters as much as the documents in front of you. The following table gives specific guidance on phrasing for the most common difficult moments.

| Situation | Do NOT say | DO say |
|---|---|---|
| Opening the meeting | "I know the data isn't great, but..." | "I want to start by sharing exactly what the progress data shows, and then we will look at what it tells us about next year's plan." |
| Presenting the flat trendline | "He didn't really make the progress we hoped." | "The data shows Marcus ended the year at 47 WCPM. The goal was 70. That is a 23-point gap we need to understand and plan for." |
| Explaining why progress stalled | "It was a hard year for everyone." | "We have identified three contributing factors: attendance in semester two, a personnel change in January, and new assessment information about working memory. Here is how the revised plan addresses each one." |
| Parent asks "Why didn't he learn to read?" | "We tried our best." | "That is exactly the right question. Let me show you what the data tells us about where the instruction needs to change." |
| Parent expresses frustration or anger | "I understand your concerns, but..." | "That frustration makes complete sense. [Pause.] Let me make sure you have all the information, and then let's talk about what changes specifically." |
| Parent asks about their legal rights | "You can request an IEE if you want, but..." | "Absolutely. You have the right to request an Independent Educational Evaluation at district expense. I can give you that information in writing today if you would like." |
| Explaining the new plan | "We're going to try some new things." | "The revised plan changes three things: the reading programme, the session frequency, and the way we will monitor and respond to data this year. Here is each one." |
| Closing the meeting | "Hopefully next year will be better." | "The goal for next year is [specific target]. We will review data every six weeks and meet if the growth rate falls below [decision rule]. You will receive a progress report in [month]." |

Specific data makes the conversation clearer. Name the learning difficulty directly, show the progress graph, acknowledge the gap and move to the revised plan. Avoid vague reassurance such as "they are doing fine" when the data show otherwise; it weakens trust and delays the next instructional decision.

Childre and Chambers (2005) reported that families can feel excluded from IEP meetings, that professionals often use language families find hard to follow, and that families may leave unsure of next steps. A zero-progress meeting is likely to sharpen each of these problems.

Structure your parent communication in this sequence:

Step one: share the data first, without framing. Place the progress monitoring graph in front of the parent and describe what it shows: "This line shows where [learner] started in September. This line shows where we aimed to be by May. This line shows where [learner] actually is." Let the parent process the visual before you add interpretation.

Step two: acknowledge the emotional reality before you explain. Parents who have a child with a learning disability have frequently spent years in meetings where professionals explain before they listen. If a parent looks distressed, stop and say: "Before we go any further, I want to hear what this is like for you." This is not a delay. It is what makes the rest of the meeting productive.

Step three: share your analysis, not your excuses. There is a clear difference between explaining contributing factors with analysis and making excuses defensively.

For example: "our data shows that the 19 absences in semester two account for approximately eight weeks of missed intervention" versus "well, he missed a lot of school". Use the DBI framework from the analysis section as your structure. Parents can hear difficult information when it is framed as investigation.

Step four: present the revised plan in specific terms. Not "we will try a new approach" but "we are recommending a switch from [Programme A] to [Programme B]. This is because [Programme B] addresses decoding at the phoneme level, which the new assessment data identifies as the primary gap." Specificity communicates competence.

Step five: establish the monitoring promise. Tell the parent exactly how often they will receive progress data, what the decision rule is for convening a mid-year meeting, and how they can contact you if they have concerns between reviews. This last element is the most underused trust-building tool available to IEP teams.

Research on self-regulation in the classroom also matters when you consider the learner's view. If the learner is old enough to take part meaningfully in the IEP meeting, ask what has and has not helped. Their voice is both legally appropriate and practically valuable. Learners who participate in their own IEP meetings demonstrate better self-advocacy and greater investment in their goals (Martin et al., 2006).

Sometimes zero progress is not a signal to adjust the programme. It is a signal to reexamine the underlying evaluation.

IDEA requires a reevaluation at least once every three years, unless the parent and the school agree that it is unnecessary. The law also allows reevaluation sooner if the learner's needs warrant it. A prolonged lack of progress is a strong reason to consider one.

Consider requesting a reevaluation when:

The disability category or profile may need review. If a learner is not responding to reading support, the team may need to look at other factors. These could include language, processing, intellectual, hearing, working-memory or attention needs. IDEA Sec. 300.303 permits reevaluation when educational or related-services needs warrant it, or when a parent or teacher requests reevaluation.

Learners change over time. A profile that was accurate at seven may not tell the full story at twelve. This is especially true when executive function, mental-health, communication or curriculum demands have changed. Use current school data and professional evaluation, not just the original eligibility paperwork.

Progress-monitoring scores and classroom observations may not always match. A learner might show a skill in a structured assessment but fail to generalise it, or use it, in ordinary classwork. When this happens, review the context, task demands, supports and assessment conditions. Also consider whether re-evaluation is needed.

The team may suspect a different primary disability category. For example, a learner may be identified under autism spectrum disorder when the main barrier to progress is a specific learning disability in maths. A reevaluation would refocus the IEP on the right primary need.

A reevaluation does not invalidate the existing IEP. It provides better data for designing the next one. Frame it to parents as exactly that: "We want to make sure we have the most accurate picture of [learner's] profile so that next year's plan is as precise as possible."

The 504 plan vs IEP distinction is also worth revisiting here. Sometimes, a 504 plan with specific accommodations may serve a learner better than an IEP with specially designed instruction. A reevaluation is the right way to determine this.

The best protection against the Sunday-night crisis of next year is building a structure into this year's IEP that makes a year-long plateau impossible to miss. The following practices are all evidence-based and implementable within most school contexts.

Set a clear decision rule for review. For example: "If [learner] fails to show adequate growth as defined by [specific criterion] across [number] consecutive data points, the IEP team will meet within [timeframe] to review and modify the plan." This fits the NCII DBI cycle and IDEA's requirement to revise the IEP where there is lack of expected progress.

Collect progress-monitoring data often enough to generate a usable trendline. Deno's CBM work supports brief, standard measures that can show growth over time, but the exact frequency should match the tool, skill, intervention intensity and review rule written into the plan. The key is that the team can see non-response early enough to act.

Use a visual graph, not a table of numbers. Progress monitoring data displayed as a graph with a goal line and an aimline is far more interpretable to teachers, parents, and administrators than a column of numbers. Most progress monitoring tools generate these automatically. If yours does not, a simple graph in Google Sheets takes less than five minutes to produce and is worth every one of those minutes.
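If your tool does not produce the graph, the underlying columns can be generated and pasted into a spreadsheet. The sketch below is one possible layout, assuming a straight-line aimline from baseline to goal. The function name `graph_rows`, the column names and the sample observations are hypothetical.

```python
import csv
import io


def graph_rows(baseline, goal, total_weeks, observations):
    """Build [week, aimline, goal line, score] rows for a progress graph.

    observations maps a week number to the observed score; weeks with no
    data point get an empty string so the spreadsheet leaves a gap.
    """
    rows = []
    for week in range(total_weeks + 1):
        # Assumed straight-line aimline from baseline to goal.
        aimline = baseline + (goal - baseline) * week / total_weeks
        rows.append([week, round(aimline, 1), goal, observations.get(week, "")])
    return rows


# Hypothetical observations, invented for illustration only.
observations = {0: 42, 2: 43, 4: 41, 6: 44}
rows = graph_rows(baseline=42, goal=70, total_weeks=36, observations=observations)

# Write CSV text that can be pasted or imported into a spreadsheet.
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(["week", "aimline", "goal", "score"])
writer.writerows(rows)
print(buffer.getvalue())
```

Charting the `aimline`, `goal` and `score` columns against `week` as a line chart gives the three lines described above on one set of axes.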

Review data as a team, not in isolation. At minimum, a brief team check-in every six weeks on the progress monitoring data for learners receiving intensive intervention is good practice. This does not need to be a formal IEP meeting.

A 15-minute data review with the special education teacher, the classroom teacher, and any relevant specialist is sufficient. The purpose is to catch a flat trendline before it has been flat for six months.

Build mid-year review into the calendar. IDEA Sec. 300.324 allows changes to the IEP after the annual meeting, including amendments where the parent and public agency agree. A documented review is far preferable to arriving at an annual review with a year of flat data and no recorded response.

Cognitive load theory has practical implications for goal design as well. Goals that require learners to manage too many demands at once may fail for a specific reason: not because the learner lacks the underlying skill, but because working memory resources are exhausted before the skill can be practised to fluency. Chunking goals into smaller subskills, one at a time, reflects what the cognitive load literature tells us about skill acquisition.

A growth mindset framework for teachers is also worth considering here. Teams that interpret a flat trendline as "this learner can't learn" will respond very differently from teams that interpret it as "this learner has not yet responded to this approach." How we frame the data shapes the quality of our decision-making.

Learners with ADHD pose a particular diagnostic challenge at annual reviews when progress is lacking. Difficulties with attention and self-regulation can mask skills the learner has actually acquired. A learner may be able to decode but struggle to show it under test conditions (Barkley, 1997; Brown, 2006; Diamond, 2013).

If a learner with ADHD is not progressing, check the accommodations before blaming instruction. Reduced distractions, extra time, preferential seating and task chunking may all help learners show what they can do (Barkley, 2014; Zentall, 1993).

If these accommodations were not in place, that is a contributing factor for the PLAAFP. If they were in place but not consistently implemented, that is a fidelity issue with the existing plan.

Organise learner progress data visually before annual reviews. Plot baseline, goal line, intervention dates and progress-monitoring points on one clear graph. This is not a separate research claim; it is a practical way to make the evidence visible so the team can discuss the plan rather than debate what happened.

Walk into the meeting with that graph. Refer to it. Let it be the centre of the conversation.

Data does not accuse anyone. It describes a situation and points toward what needs to change. A teacher who leads with data, names the contributing factors honestly, and presents a revised plan grounded in that analysis is doing their job with integrity. That is the full legal and professional standard, and it is achievable even when the news is difficult.

The learner in front of you did not fail. The current programme did not produce the expected results. Those are two very different statements, and the first step toward a better outcome is being clear about which one is true.

A flat trendline indicates that a learner has ended the academic year at the exact same performance level they started. It serves as essential diagnostic data to evaluate whether the current intervention is appropriate in intensity or difficulty. Teachers must document this clearly in the present levels section rather than hiding the lack of progress.

Before writing a completely new goal, teachers must first work out why the learner did not progress. They should use recent progress monitoring data to adjust the intervention intensity, frequency, or instructional match. The revised goal must reflect realistic expectations. It should be grounded in the learner's current baseline, rather than their age or year group level.

Diagnostic frameworks help teachers work out why support did not work. They reduce blame by focusing on intervention fit, intensity, fidelity, attendance, goal construction and newly visible needs. This helps the team record its response to slow progress. It also avoids pretending that the previous plan succeeded.

Research on instructional level, curriculum-based measurement and intensive intervention points to one clear review question: did the task, method and dosage, or amount of teaching, match the learner's current need? Under IDEA, when the learner has not made the expected progress, the team must review and revise the IEP. The answer should then lead to a documented plan change.

The most common mistake is using vague language to obscure the fact that the learner did not improve. Teachers also frequently attribute the lack of growth solely to the severity of the disability without examining the intervention itself. Copying and pasting the exact same goals for a second year without changing the support structure is legally risky and unhelpful for the learner.

Start with objective progress data, then acknowledge parent concerns before explaining the team's analysis. Keep the language plain, show the revised plan and agree how progress will be reported. Childre and Chambers (2005) show why accessible communication and parent voice matter in IEP meetings.

Two theories often used in IEP reviews need careful handling. Vygotsky (1978) used the zone of proximal development to explain learning through guided participation, but Chaiklin (2003) argued that the concept is often reduced to a vague slogan about scaffolding. In practice, teams can overclaim precision from a theory that was not designed as a progress monitoring tool.

Gardner (1983) can remind teams to look beyond one narrow test format, but multiple intelligences theory has been strongly criticised on psychometric grounds. Waterhouse (2006) argued that the theory lacks firm neuroscientific evidence, while Visser, Ashton and Vernon (2006) found that the proposed intelligences overlap more than the model suggests. Used crudely, it can slide into fixed learning-style labels.

There are cultural and methodological limits too. Standard IEP data can wrongly treat limited English proficiency, racialised behaviour expectations, poverty, interrupted schooling or neurodivergent masking as lack of ability. Vygotsky's work also came from a specific cultural and historical setting, so it should not be treated as culturally neutral. These theories are most useful when they help teams ask better questions about task match, mediation, language, assessment format and context, while the legal decision still rests on current evidence, family knowledge and observed learner response.

Use the IDEA and OSERS sources for legal requirements; use the research sources for progress monitoring, intervention adjustment, fidelity and participation claims.

Individuals with Disabilities Education Act. Sec. 300.320 Definition of individualized education program.

Use this for present levels, measurable annual goals, progress measurement, periodic progress reporting and the statement of services and supports.

Individuals with Disabilities Education Act. Sec. 300.324 Development, review, and revision of IEP.

Use this for reviewing the IEP at least annually, revising it when there is lack of expected progress and making documented amendments.

Individuals with Disabilities Education Act. Sec. 300.303 Reevaluations.

Use this for reevaluation when educational or related-services needs warrant it, or when a parent or teacher requests reevaluation.

U.S. Department of Education, OSERS (2017). Questions and Answers on Endrew F. v. Douglas County School District Re-1.

Use this for the standard that an IEP should be reasonably calculated to enable progress appropriate in light of the child's circumstances.

National Center on Intensive Intervention (2013). Data-Based Individualization: A Framework for Intensive Intervention.

Use this for the DBI cycle: validated intervention, progress monitoring, diagnostic data, adaptation and continued progress monitoring.

Fuchs, L. S. and Fuchs, D. (2007). A Model for Implementing Responsiveness to Intervention.

Use this for a practical response-to-intervention model and data-based decision-making when learners do not respond to initial support.

Deno, S. L. (1985). Curriculum-Based Measurement: The Emerging Alternative.

Use this for curriculum-based measurement as a frequent, standardised way to monitor learner progress over time.

Burns, M. K. (2004). Empirical Analysis of Drill Ratio Research: Refining the Instructional Level for Drill Tasks.

Use this for cautious claims about instructional level and task difficulty, not broad legal claims about IEP progress.

Dane, A. V. and Schneider, B. H. (1998). Program Integrity in Primary and Early Secondary Prevention: Are Implementation Effects Out of Control?

Use this for implementation fidelity, dosage and adherence when asking whether an intervention was delivered as planned.

Yell, M. L. and Bateman, D. F. (2017). Endrew F. v. Douglas County School District (2017): FAPE and the U.S. Supreme Court.

Use this for educator-facing analysis of Endrew F. and the changed FAPE standard.

Martin, J. E., Van Dycke, J. L., Christensen, W. R., Greene, B. A., Gardner, J. E. and Lovett, D. L. (2006). Increasing Student Participation in IEP Meetings.

Use this for learner participation and self-directed IEP meetings.

Childre, A. and Chambers, C. R. (2005). Family Perceptions of Student Centered Planning and IEP Meetings.

Use this for parent and family perceptions of IEP meetings and the need for clear, accessible communication.

Burns, M. K. (2004). Empirical analysis of drill ratio research: Refining the instructional level for drill tasks. Remedial and Special Education, 25(3), 167-173.

Childre, A. and Chambers, C. R. (2005). Family perceptions of student centered planning and IEP meetings. Education and Training in Developmental Disabilities, 40(3), 217-233.

Dane, A. V. and Schneider, B. H. (1998). Program integrity in primary and early secondary prevention: Are implementation effects out of control? Clinical Psychology Review, 18(1), 23-45.

Deno, S. L. (1985). Curriculum-based measurement: The emerging alternative. Exceptional Children, 52(3), 219-232.

Fuchs, L. S. and Fuchs, D. (2007). A model for implementing responsiveness to intervention. TEACHING Exceptional Children, 39(5), 14-20.

Individuals with Disabilities Education Act, 34 CFR Sec. 300.303, Sec. 300.320 and Sec. 300.324.

Martin, J. E., Van Dycke, J. L., Christensen, W. R., Greene, B. A., Gardner, J. E. and Lovett, D. L. (2006). Increasing student participation in IEP meetings: Establishing the self-directed IEP as an evidenced-based practice. Exceptional Children, 72(3), 299-316.

National Center on Intensive Intervention. (2013). Data-based individualization: A framework for intensive intervention. Washington, DC: Office of Special Education Programs, U.S. Department of Education.

U.S. Department of Education, Office of Special Education and Rehabilitative Services. (2017). Questions and answers on U.S. Supreme Court case decision Endrew F. v. Douglas County School District Re-1.

Yell, M. L. and Bateman, D. F. (2017). Endrew F. v. Douglas County School District (2017): FAPE and the U.S. Supreme Court. TEACHING Exceptional Children, 50(1), 7-15.
