AI and Academic Integrity: A Teacher's Guide [2026]

A practical guide to maintaining academic integrity in an age of AI. Covers why detection tools fail, exam board positions.

![AI and Academic Integrity: A Teacher's Guide [2026]](https://cdn.prod.website-files.com/5b69a01ba2e409501de055d1/69989728f45fd2339b4ef753_699897271530216d5b581a17_ai-academic-integrity-classroom-teaching.webp)


A Year 11 learner submits a history essay that reads fluently, covers all the assessment criteria, and contains no spelling errors. It also contains no personality, no misunderstandings, and no evidence of the specific struggle that learner had with source analysis last week. The teacher suspects AI involvement but cannot prove it. This scenario plays out in thousands of UK classrooms every week, and the response to it will shape how we assess learning for the next decade.

Current Department for Education guidance urges schools to adapt assessments so that learners demonstrate genuine understanding even when AI tools are available. This article offers practical steps covering detection and assessment redesign. See AI tools for more information.

AI detection tools such as GPTZero, Turnitin AI and Originality.ai struggle to identify text from language models accurately. Independent evaluations have shown these tools are unreliable for academic misconduct cases (Weber-Wulff et al., 2023). Experts recommend caution when using them in schools (Dawson, 2021).

Weber-Wulff et al. (2023) conducted the largest independent evaluation of AI detection tools, testing 14 tools across 126 documents. Key findings:

False positive rates are unacceptable. Detection tools incorrectly flagged human-written text as AI-generated in 10-20% of cases. In a school of 1,000 learners, if every learner had one genuinely self-written piece checked each year, 100-200 learners could be falsely accused. For EAL learners writing in a non-native language, false positive rates were even higher because their writing patterns more closely resemble AI output.
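The arithmetic behind the school-of-1,000 estimate can be sketched in a few lines of Python. This is an illustrative calculation only: it assumes one genuinely human-written submission per learner, each checked once by a detector, and the function name `expected_false_flags` is hypothetical.

```python
def expected_false_flags(learners: int, false_positive_rate: float) -> int:
    """Expected number of honest learners wrongly flagged as using AI.

    Assumption (for illustration): one human-written submission per
    learner, each run through the detector once.
    """
    return round(learners * false_positive_rate)


# False positive range quoted in this article: 10-20%
low = expected_false_flags(1000, 0.10)
high = expected_false_flags(1000, 0.20)
print(f"{low}-{high} learners falsely flagged")
```

The figure grows with every additional piece of work that is checked, so a school that screens several assignments per learner would expect more false flags, not fewer.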

Simple edits beat detection. Learners who change a few words or sentences are hard to spot. Adding errors also makes AI text undetectable (Gooding & Akartuna, 2019). Learners can paste AI text, make small edits, and bypass current tools.

Detection tools themselves use AI. The irony of using an AI tool to detect AI-generated text creates a recursive problem. The detection AI has its own biases and limitations, and its confidence scores are not probabilities in any statistically meaningful sense. A "95% AI-generated" label does not mean a 95% chance the text was AI-generated.
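One way to see why a detector's flag cannot be read as a probability is to work through Bayes' rule. The sketch below is illustrative only: the 5% share of AI-written submissions and the 80% detection rate are assumed figures, not findings from the studies cited, and `probability_ai_given_flag` is a hypothetical helper. Only the 10% false positive rate comes from the range quoted earlier.

```python
def probability_ai_given_flag(
    base_rate: float,
    true_positive_rate: float,
    false_positive_rate: float,
) -> float:
    """Chance that a flagged submission really is AI-written (Bayes' rule)."""
    flagged_ai = base_rate * true_positive_rate            # AI work, correctly flagged
    flagged_human = (1 - base_rate) * false_positive_rate  # human work, wrongly flagged
    return flagged_ai / (flagged_ai + flagged_human)


# Assumed for illustration: 5% of submissions are AI-written and the
# detector catches 80% of them. The 10% false positive rate is the lower
# end of the range quoted in this article.
p = probability_ai_given_flag(0.05, 0.80, 0.10)
print(f"Chance a flagged essay is AI-written: {p:.0%}")
```

Under these assumptions, fewer than one in three flagged essays would actually be AI-written, whatever confidence score the tool displays. Different assumed rates give different answers, which is exactly why the label on its own proves little.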

The practical conclusion: do not use AI detection tools as the sole basis for accusing a learner of misconduct. They can be one data point among many (combined with knowledge of the learner's previous work, in-class performance, and the submission process), but they should never be the deciding factor.

UK exam boards have issued guidance on AI use in coursework and non-examined assessment (NEA). The positions vary but share common ground.

| Exam Board | Position on AI in NEA/Coursework | Detection Approach |
|---|---|---|
| AQA | AI-generated content submitted as learner's own is malpractice. AI may be used for research if acknowledged. | Teacher authentication + process evidence |
| Edexcel (Pearson) | Submitting AI output as own work is malpractice. Schools must have policies on AI use. | Supervised elements + draft review |
| OCR | AI assistance must be declared. Unacknowledged use treated as malpractice. | Declaration forms + teacher verification |
| WJEC/Eduqas | AI use beyond research and planning constitutes malpractice. | Teacher authentication of candidate work |

Exam boards trust teachers, not just software. They expect teachers to know each learner's work well (Dawson, 2021). This teacher knowledge helps spot work that doesn't match ability. Though demanding, this is more reliable than solely relying on algorithms (Perelman, 2023).

For internal school assessment (not exam board NEA), the school sets its own policy. The key principle from exam boards applies equally: authenticate through knowledge of the learner, not through software.

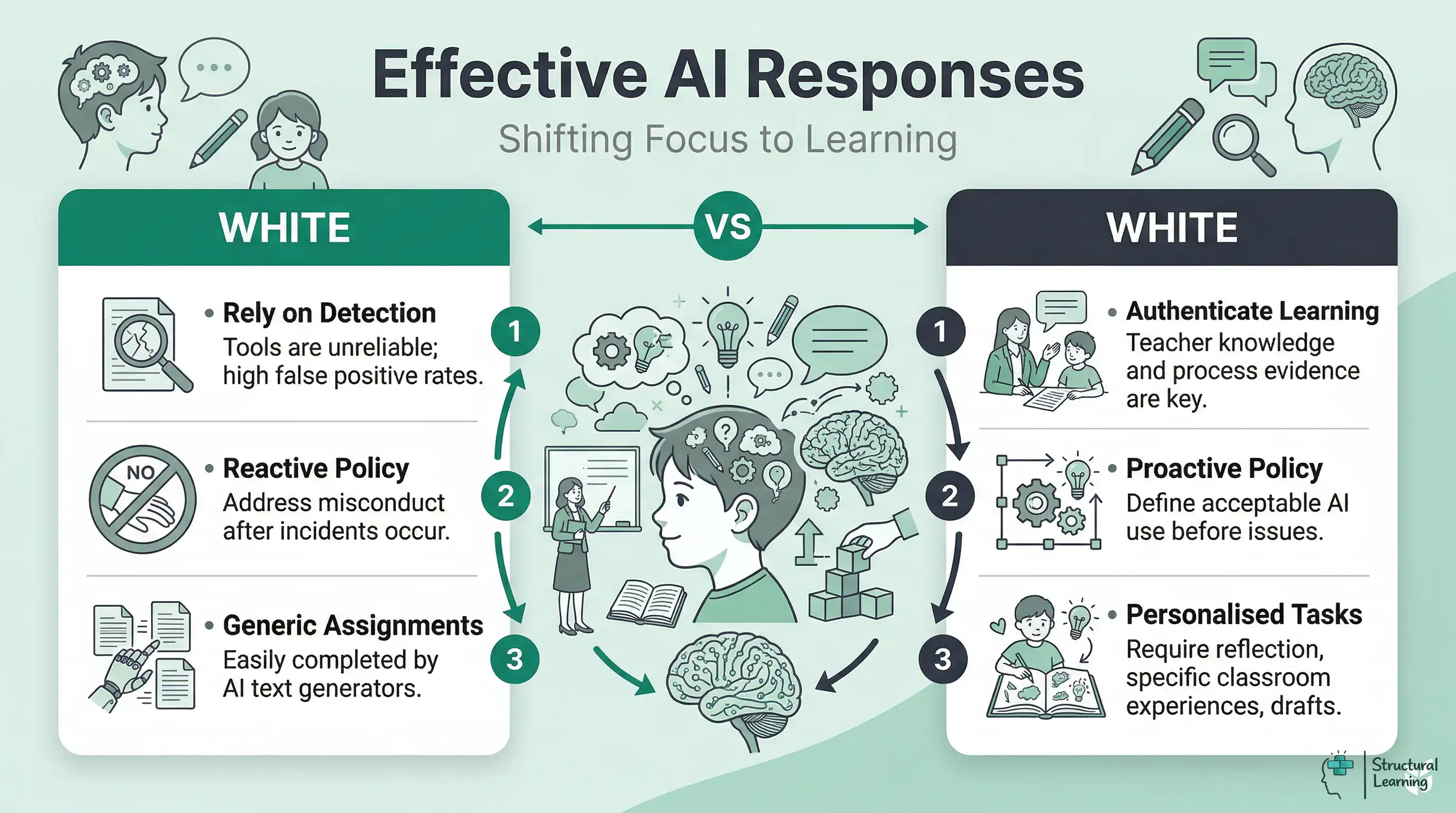

Preventing AI misuse is more effective than trying to detect it afterwards. Assessments that draw on personal evidence are hard for AI to complete (Bretag et al., 2023), which protects the authenticity of each learner's work.

| Strategy | How It Works | Example |
|---|---|---|
| Process portfolios | Learners submit drafts, notes and reflections alongside final work | Year 10 English: submit planning notes, first draft with teacher feedback, and final version |
| In-class components | Part of the assessment is completed under supervised conditions | Year 9 history: research at home, write under exam conditions |
| Personal reflection | Questions require reference to the learner's own experience or classroom activity | "Explain how the experiment we conducted in Tuesday's lesson supported or contradicted your hypothesis" |
| Oral defence | Learners verbally explain their work and answer follow-up questions | Year 11 science: 5-minute viva on their investigation, recorded for moderation |
| Iterative feedback | Teacher marks drafts and learner must respond to specific feedback points | "In your next draft, address the weakness I identified in paragraph 3" |
| Specific source constraints | Learners must use only named sources provided by the teacher | "Using only Sources A, B and C from our lesson pack, evaluate..." |

None of these strategies eliminate the possibility of AI misuse entirely. A determined learner can still use AI and then fabricate process evidence. The goal is not a foolproof system but an assessment approach where genuine engagement is the easiest route to a good grade, and AI misuse requires more effort than doing the work properly.

Learners need clear boundaries for AI use, running from acceptable to unacceptable. Without them, every incident turns into a debate about the rules. This framework, based on UK schools' AI policies, offers a practical starting point.

| Category | Examples | Policy Position |
|---|---|---|
| Acceptable | Spell-checking, grammar correction, thesaurus-style word suggestions | Permitted without declaration |
| Permitted with declaration | AI for initial research, brainstorming ideas, explaining a concept the learner does not understand | Must be acknowledged. Learner writes own content. |
| Not permitted | AI generates text that the learner submits as their own, AI produces answers to assessment questions, AI rewrites the learner's draft entirely | Academic misconduct. Treated under existing malpractice policy. |

The middle category (permitted with declaration) is where most of the complexity lies. A learner who asks ChatGPT to explain photosynthesis so they can understand it better is using AI as a learning tool. A learner who asks ChatGPT to write their photosynthesis essay is submitting someone else's work. The difference is whether the learner engaged in the cognitive work of transforming understanding into their own written argument.

Communicate this framework to learners explicitly, with examples relevant to their year group and subject. A poster on the classroom wall, a slide at the start of an assessment, and a line on the assessment cover sheet all reinforce the expectation.

When a teacher suspects AI-generated work, the initial response should be a conversation, not a formal accusation. The purpose is to establish what the learner understands and how they produced their work.

Effective questions:

"Can you talk me through how you structured this essay?" A learner who wrote the work themselves can describe their thinking process. A learner who submitted AI output often cannot explain why they made specific choices.

"What was the hardest part of this piece?" Genuine engagement produces genuine struggle. A learner who reports no difficulty with a complex task is either exceptionally able or did not do the cognitive work.

"I noticed this paragraph uses some sophisticated language. Can you explain what this sentence means in your own words?" A learner who understands their work can paraphrase it. A learner who submitted AI output often cannot.

"If I asked you to write the next paragraph right now, on a related topic, could you do that in a similar style?" This tests whether the learner can produce work of comparable quality under observed conditions.

These conversations check whether the work is the learner's own. They also provide formative feedback (Wiliam, 2011; Black & Wiliam, 1998). Even when AI was involved, learners see that understanding is what matters, which builds their awareness of their own learning (Flavell, 1979).

AI-related integrity risks look different in each subject. Tailoring your approach to each subject's risks and responses helps every learner (Bretag, 2023; Lancaster & Clarke, 2023; Yorke et al., 2024).

Process-based assessment is vital for English, a high-risk subject due to AI (Winkler and Sîmbotin, 2023). Ask learners for planning notes and reflections alongside final work. Learners should write about studied texts, referencing classroom discussions that AI lacks (Winkler and Sîmbotin, 2023).

AI sometimes gets maths homework wrong (Holmes & Tuomi, 2022). Statistics coursework carries more risk because AI produces believable analysis. Ask learners to explain their reasoning, and question them about alternative approaches.

AI produces good technical science explanations (O'Reilly, 2023), but it finds experimental design using school apparatus difficult (Holmes et al., 2022). Learners should write up "our method" (Wellington, 2024). Ask for setup photos or hand-drawn diagrams (Hodson, 2023).

AI gives basic humanities analysis (Siemens & Conole, 2011). Teachers can ask learners for specific source references and for a personal evaluation of each source ("Do you agree?"). Use writing tasks that are completed in class, where you can observe them (Laurillard, 2002).

Computing is not immune to AI risks, even though AI is part of what it teaches. AI now generates accurate, functional code. Ask learners to explain their code, then have them modify it while you question them. Documenting their debugging process works best (Papert, 1980; Resnick, 2017; Yadav et al., 2016).

Rather than treating AI as a threat to be policed, the more productive approach is teaching learners how to use AI as a legitimate learning tool. This builds the AI literacy skills they will need in further education and employment while maintaining academic standards.

Learners generate AI responses and assess them for accuracy, bias, and completeness. This encourages critical thinking about AI outputs. AI text is a starting point, not the finished result.

Prompt refinement: Teach learners that the quality of AI output depends on the quality of the input. A vague prompt produces vague output. A specific, well-structured prompt produces something more useful. This is a thinking skill in itself: articulating what you want requires clarity about what you know and what you need.

Learners must declare AI use, like citing sources. A statement such as "I used ChatGPT for understanding supply and demand, then wrote this myself" promotes honesty (Holmes et al., 2023). This discourages hiding AI assistance (Wiggins & Folk, 2022).

Comparison exercises: Ask learners to write a paragraph themselves, then generate an AI paragraph on the same topic, then compare the two. This develops awareness of their own writing voice and the generic quality of AI output. Many learners discover that their own writing, while less polished, is more interesting and more personal than the AI version.

Update your malpractice policy to cover AI, drawing on recent guidance (Holmes et al., 2023; Lancaster, 2024; Zawacki-Richter et al., 2019). Learners need clear rules for AI use in assessments. Adapt your existing plagiarism policies in line with that guidance.

AI misuse includes submitting AI-generated work as your own without declaring it. This is academic misconduct, like plagiarism (Eaton, 2021; Perkins, 2023), and teachers should stay alert to it.

Learners should understand the three-tier framework (acceptable, permitted with declaration, not permitted). Subject-specific examples help learners use technology appropriately.

Start with conversation, then gather evidence like in-class work (Holmes & Farrell, 2023). Question learners and document processes (Chanock, 2016). Formal outcomes are needed if warranted, but don't only use software (Yorke & Clarke, 2012).

AI in education requires careful planning. Consequences start with resubmissions and discussions. Formal procedures follow repeated issues. Focus on learner understanding, not just punishing errors. Younger learners might not grasp AI implications.

Provide staff guidance on AI-resistant assessments, integrity conversations, and when to escalate issues. Integrate this guidance into the staff CPD programme (EEF, 2021).

For the broader ethical framework within which academic integrity sits, see our guide to AI ethics in education. For assessment design that naturally reduces integrity risks, see AI and student assessment. And for the wider context of AI in teaching, see our hub article on AI for teachers.


UK exam boards state that submitting AI-generated content as a learner's own work constitutes malpractice. However, students may use AI for research and planning if it is clearly acknowledged. Teachers are expected to authenticate the work through their knowledge of the learner rather than relying on detection software.

Current research indicates that AI detection tools are highly unreliable when applied to school work. A major 2023 study found that they incorrectly flag human-written text as AI-generated in up to 20 percent of cases. This false positive rate is particularly high for learners who speak English as an additional language. For more on this topic, see AI marking and feedback.

Assessment design that focuses on demonstrating understanding deters AI misuse (Dawson, 2021). Teachers, ask learners for process portfolios with planning notes. Early drafts demonstrate genuine learner thinking alongside personal reflection and classroom references.

Holmes et al. (2023) say AI-resistant assessments need original learner work. Oral defences show understanding better than text generation alone. Wiggins (1998) and Boud & Falchikov (2007) find supervised writing and reflection show real learning.

Teachers often wrongly trust AI detection software to prove cheating. Schools struggle without clear AI policies (Eaton, 2021). Leaders must set AI use rules beforehand. Base integrity decisions on teacher insight into typical learner work.

These papers provide the evidence base for the recommendations in this article.

Testing of Detection Tools for AI-Generated Text

Weber-Wulff et al. (2023)

Weber-Wulff et al. (2023) tested 14 AI text detection tools across 126 documents. They found that the tools did not reliably distinguish AI-generated text from human writing, with false positives ranging from 10-20%. Schools considering detection methods should read this.

Generative Artificial Intelligence in Education

DfE Official Guidance

Department for Education (2025)

The UK government guides schools on AI. This covers academic honesty, assessment design, and policy. Schools should change assessment, not just try to detect AI use (Holmes et al., 2023; Zawacki-Richter et al., 2019).

ChatGPT for Good? On Opportunities and Challenges of Large Language Models for Education

Kasneci et al. (2023)

Kasneci et al. (2023) analysed the place of large language models in education. They identify risks to academic honesty, consider whether AI serves learners as a tool or a shortcut, and make recommendations for policy and practice.

Assessment Design in the Age of Artificial Intelligence

JISC Guidance

JISC (2024)

Jisc (2024) advises UK teachers on AI-ready assessment. They suggest authentic tasks and evaluating learning processes. These practical changes will support every learner.

The Power of Feedback

Hattie and Timperley (2007)

Researchers like Sadler (1989) showed feedback shapes learning. Assessment should value process, not just product. The four feedback levels connect to AI-resistant assessment, as Wiliam (2011) explains. Hattie and Timperley (2007) add to this understanding.
